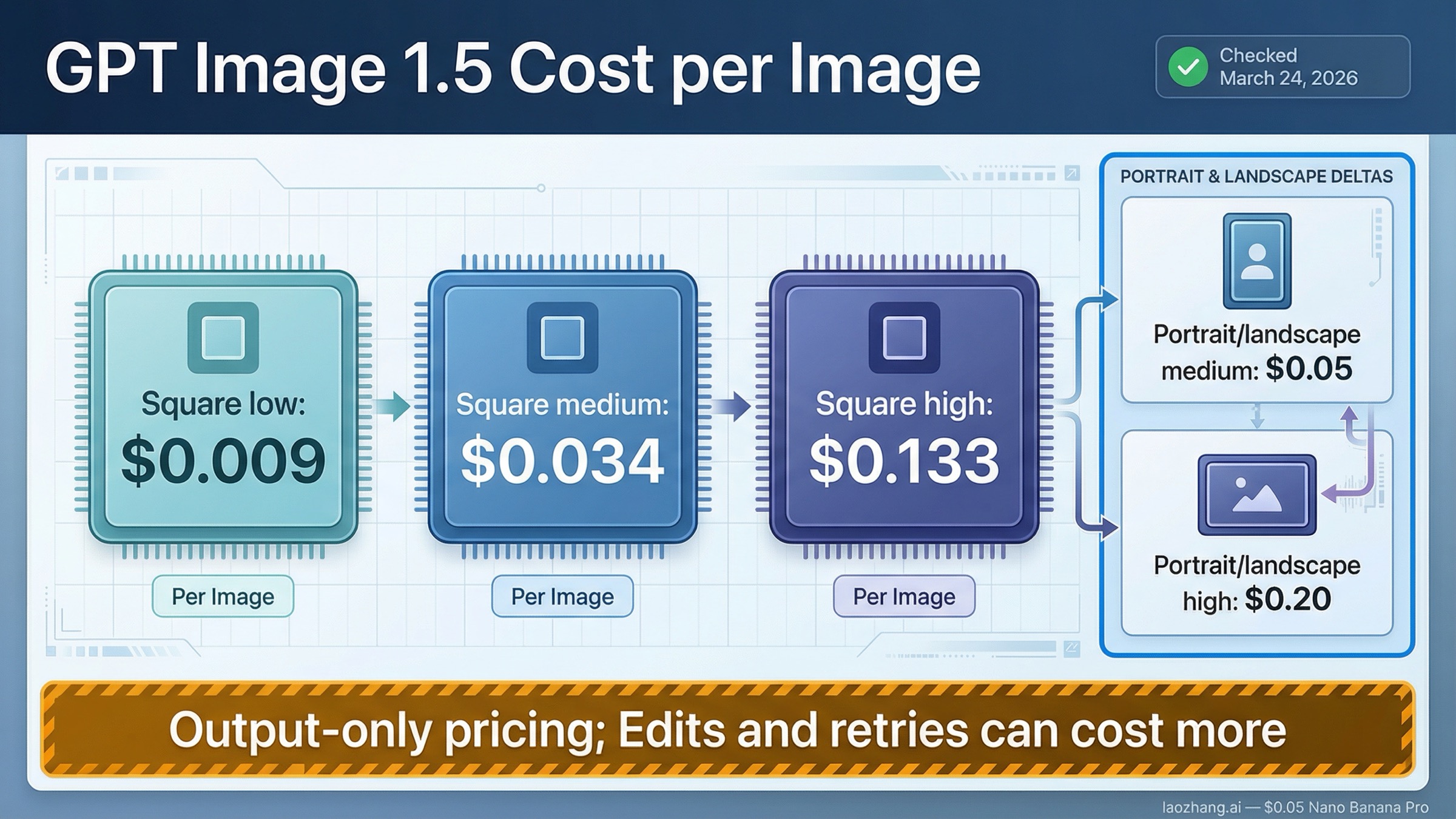

As checked on March 24, 2026, GPT Image 1.5 costs $0.009, $0.034, and $0.133 for one 1024x1024 image at low, medium, and high quality. If you only need the official per-image price, that is it.

The part most search results skip is that OpenAI's per-image rows are output-image prices, not a universal invoice for every workflow. If you send a short prompt and generate one fresh image, the official row is usually close enough. If you edit images, preserve references, or rerun prompts until one final image is usable, the effective cost per finished image is higher.

The safest default is simple. Use the official GPT Image 1.5 row as your baseline for one image. Treat that baseline as incomplete when the workflow includes edits, reference-image inputs, or a lot of retries. If cost matters more than flagship quality, benchmark gpt-image-1-mini before assuming GPT Image 1.5 is the right lane.

TL;DR

- One square GPT Image 1.5 image currently costs $0.009, $0.034, or $0.133 depending on low, medium, or high quality.

- Portrait and landscape images cost more: $0.013, $0.05, and $0.20 at low, medium, and high quality.

- Those rows are the right shortcut for simple prompt-to-image generation, but they are not the full cost of edit-heavy or reference-heavy workflows.

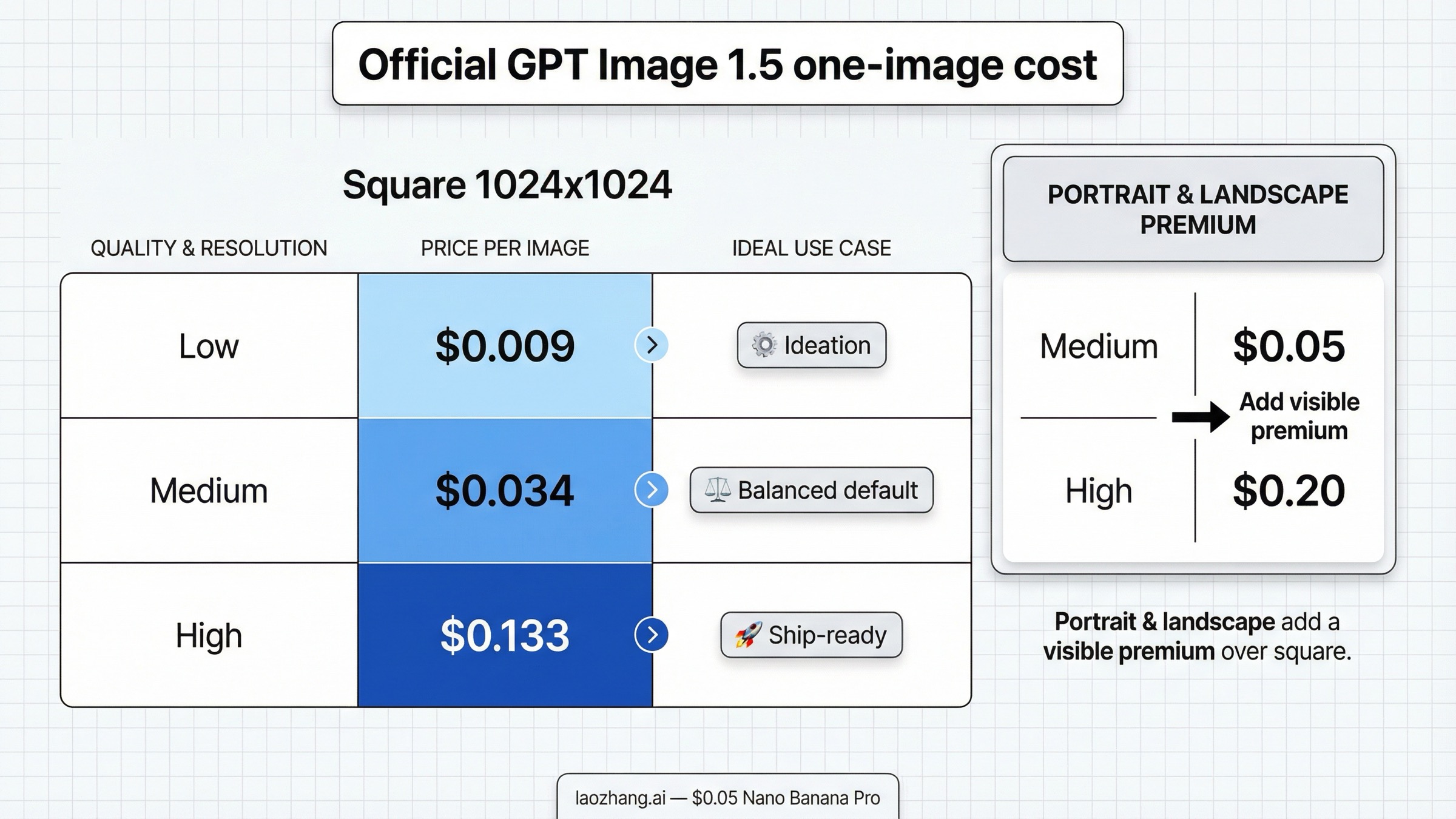

| Output size | Low | Medium | High | Best way to read the row |
|---|---|---|---|---|
| 1024x1024 | $0.009 | $0.034 | $0.133 | Best shortcut for one prompt-to-image request |
| 1024x1536 | $0.013 | $0.05 | $0.20 | Portrait costs more than square |
| 1536x1024 | $0.013 | $0.05 | $0.20 | Landscape costs the same as portrait |

That table is the answer most readers need first. The rest of this page exists to answer the question behind it: what does one finished image actually cost once the workflow stops being a clean one-shot generation call?

If you need a default planning row and do not know where to start, square medium at $0.034 is the most practical baseline for many teams. It is cheap enough for experimentation without collapsing all the way to the lowest-quality lane, and it gives you a realistic middle ground before you decide whether the workflow truly needs high-quality output.
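For planning scripts, the published rows can be kept in a small lookup table. The sketch below is only an illustration: the prices are copied from the table above as checked on March 24, 2026, and the names `GPT_IMAGE_15_PRICES`, `output_price`, and `output_cost` are hypothetical helpers, not part of any OpenAI SDK.

```python
from decimal import Decimal

# Per-image OUTPUT prices in USD, copied from the table above
# (as checked on March 24, 2026). These can change; re-check before budgeting.
GPT_IMAGE_15_PRICES = {
    "1024x1024": {"low": Decimal("0.009"), "medium": Decimal("0.034"), "high": Decimal("0.133")},
    "1024x1536": {"low": Decimal("0.013"), "medium": Decimal("0.05"), "high": Decimal("0.20")},
    "1536x1024": {"low": Decimal("0.013"), "medium": Decimal("0.05"), "high": Decimal("0.20")},
}


def output_price(size: str, quality: str) -> Decimal:
    """Return the published output-image price for one image (hypothetical helper)."""
    try:
        return GPT_IMAGE_15_PRICES[size][quality]
    except KeyError:
        raise ValueError(f"no published row for size={size!r}, quality={quality!r}") from None


def output_cost(size: str, quality: str, images: int) -> Decimal:
    """Output-only cost for a number of one-shot generations (inputs and retries excluded)."""
    return output_price(size, quality) * images


# Default planning row from the text: square medium.
print(output_price("1024x1024", "medium"))
print(output_cost("1024x1024", "medium", 100))
```

Decimal is used instead of float so that sub-cent prices add up exactly when multiplied across many images.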

Official GPT Image 1.5 Cost per Image

The cleanest source for current GPT Image 1.5 pricing is the official GPT Image 1.5 model page, with the image-generation guide repeating the same per-image rows. That matters because this keyword attracts a lot of pages that sound official while actually selling a subscription, a credit bundle, or an OpenAI-compatible relay.

If your question is strictly "what does one image cost on OpenAI's own API surface," the current answer is straightforward:

- one square low-quality image is $0.009

- one square medium-quality image is $0.034

- one square high-quality image is $0.133

- one portrait or landscape low-quality image is $0.013

- one portrait or landscape medium-quality image is $0.05

- one portrait or landscape high-quality image is $0.20

Those numbers are more useful than many page-one results make them seem. They are not rough community averages. They are the official current rows OpenAI publishes for common output shapes. That means they are the right starting point for estimating a single marketing visual, one product mockup, one hero image concept, or one internal prototype frame.

The more important decision is how to choose the row.

If the image is disposable ideation, low or medium quality may be enough. If the image must ship to a user, preserve visual details, or render text more reliably, the jump to high quality can be rational even though the price increases sharply. A lot of weak pricing pages treat those rows as if the only question were "which one is cheapest?" Real teams usually care about the price of one image that is good enough to keep, not the cheapest possible row in the abstract.

The size choice matters too. A reader searching for cost per image often assumes portrait and landscape are just cosmetic variants of square pricing. They are not. The jump from $0.034 for a square medium image to $0.05 for a portrait or landscape medium image is not dramatic for one test prompt, but it becomes material once the same creative format becomes the default in a workflow.
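To see when the size premium becomes material, a quick sketch can multiply the gap between the two medium rows by volume. The function name `orientation_premium` is made up for this example, and the calculation covers output rows only.

```python
from decimal import Decimal

SQUARE_MEDIUM = Decimal("0.034")      # 1024x1024, medium
NON_SQUARE_MEDIUM = Decimal("0.05")   # 1024x1536 or 1536x1024, medium


def orientation_premium(images: int) -> Decimal:
    """Extra output cost of choosing portrait/landscape over square at medium quality."""
    return (NON_SQUARE_MEDIUM - SQUARE_MEDIUM) * images


for volume in (1, 1_000, 10_000):
    print(volume, orientation_premium(volume))
```

The gap is $0.016 per image, roughly 47% more than the square row, which adds up to $160 per 10,000 medium images.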

That is also why this article stays narrower than our broader guides to GPT Image 1.5 pricing and GPT Image 1.5 API pricing. Those pages explain the whole pricing surface. This one is about the narrower decision a reader usually needs in the moment: what should I assume one image costs before I move forward?

What One Finished Image Really Costs

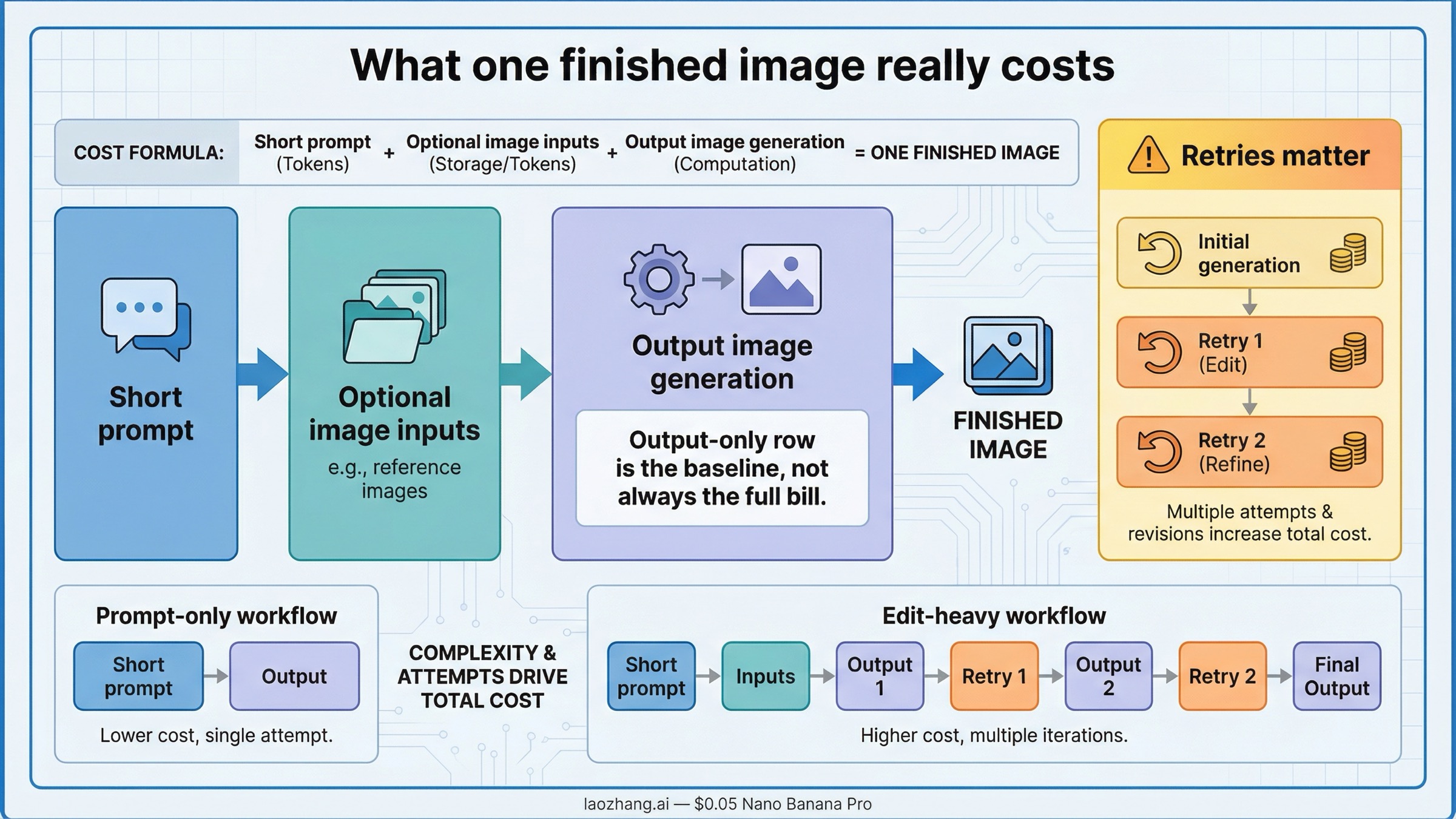

The official image rows are the right first answer, but they are not the full billing model.

OpenAI's current image-generation guide says the published per-image tables cover output image generation only. The same guide says the final cost of a request can also include text input tokens and image input tokens when you use edits or reference-heavy workflows.
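One way to picture that billing model is as the output row plus input-token charges. The sketch below assumes token rates are quoted per one million tokens and that the components simply add up; the function name and the placeholder rates are illustrative only and are not OpenAI's published numbers.

```python
from decimal import Decimal

TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_request_cost(
    output_row_usd: Decimal,
    text_input_tokens: int,
    image_input_tokens: int,
    text_rate_per_million: Decimal,
    image_rate_per_million: Decimal,
    images: int = 1,
) -> Decimal:
    """Output rows plus input-token charges for one request (illustrative model)."""
    text_cost = Decimal(text_input_tokens) * text_rate_per_million / TOKENS_PER_MILLION
    image_cost = Decimal(image_input_tokens) * image_rate_per_million / TOKENS_PER_MILLION
    return output_row_usd * images + text_cost + image_cost


# PLACEHOLDER rates, NOT real prices. Look up the actual text and image
# input rates on the official pricing page before using this for budgeting.
PLACEHOLDER_TEXT_RATE = Decimal("1.00")
PLACEHOLDER_IMAGE_RATE = Decimal("1.00")

# A short prompt with no image inputs stays close to the published row.
plain = estimate_request_cost(
    Decimal("0.034"), 50, 0, PLACEHOLDER_TEXT_RATE, PLACEHOLDER_IMAGE_RATE
)
# The same request with image inputs (the token count is made up) costs more.
edit = estimate_request_cost(
    Decimal("0.034"), 50, 2_000, PLACEHOLDER_TEXT_RATE, PLACEHOLDER_IMAGE_RATE
)
print(plain, edit)
```

The point of the sketch is the shape of the formula, not the totals: with real rates plugged in, the gap between the two calls shows how far an edit or reference-heavy request drifts from the output-only row.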

That distinction sounds small until you map it to how people actually use the model.

For a plain prompt-to-image request, one image often really does behave close to the visible row. If you send a short prompt and generate one square medium image, thinking in terms of about $0.034 for one image is a reasonable shortcut.

That shortcut gets weaker in three common situations.

The first is edits. If you are modifying existing visuals rather than generating from scratch, the request includes image inputs. OpenAI also documents higher-fidelity preservation in GPT Image 1.5: the first five input images can be preserved with higher fidelity when input_fidelity is set to high. That is useful for brand work, product revisions, or controlled visual changes. It is also a reminder that a polished edited image is not billed like a blank-slate generation.

The second is reference-heavy generation. Many practical GPT Image 1.5 workflows use logos, style references, packaging comps, or previous campaign assets as inputs. That can make one finished image cheaper in labor but more expensive in API billing than the clean output-only row suggests.

The third is retries. This is the part many pricing pages dodge because it is not a neat row in a model card. If you generate four medium images before one is usable, your effective cost per kept image is not $0.034. It is the combined cost of all four attempts, about $0.136 for square output. That sounds obvious once stated, but it is exactly why people keep searching after they already saw the official table.
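The retry arithmetic can be written down in a few lines. This is a minimal sketch covering output rows only; `cost_per_kept_image` is a hypothetical helper name.

```python
from decimal import Decimal


def cost_per_kept_image(price_per_attempt: Decimal, attempts: int, kept: int = 1) -> Decimal:
    """Spread the cost of every attempt over the images that were actually kept."""
    if attempts < 1 or kept < 1 or kept > attempts:
        raise ValueError("need 1 <= kept <= attempts")
    return price_per_attempt * attempts / kept


# Four square medium attempts, one usable result.
four_tries = cost_per_kept_image(Decimal("0.034"), attempts=4)
print(four_tries)
```

Four square medium attempts come to $0.136, which is already slightly more than one square high-quality image at $0.133.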

The practical rule is:

- use the official row for one-shot prompt-to-image planning

- expect higher effective cost when the workflow includes inputs, edits, or repeated attempts

- use the official token pricing page when you need to model the full request rather than one output row

That is the real reason this keyword deserves its own page. The headline price is easy. The operational question is whether that headline price still describes the image you are actually trying to ship.

If your next task is full workload math rather than one-image budgeting, the better next read is our GPT Image 1.5 pricing calculator, because that page is built for 100-, 1,000-, and 10,000-image planning instead of a single-image decision.

When GPT Image 1.5 Is Worth Paying For

The biggest mistake with this question is assuming the answer is only about price. It is usually about whether the flagship lane saves enough downstream work to justify its price.

GPT Image 1.5 is easier to justify when the cost of getting the image wrong is higher than the price difference itself.

That usually includes:

- brand-sensitive edits where reference preservation matters

- product images or packaging comps where visual consistency is expensive to fix manually

- text-heavy images where better output quality prevents rework

- marketing assets where one extra retry loop costs more time than the model premium

In those cases, asking only for the cheapest row is the wrong frame. One high-quality image at $0.133 can still be cheaper than multiple low-cost attempts plus cleanup if the result needs to ship.
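A rough break-even check makes that comparison concrete. The sketch below counts how many cheaper square attempts fit inside the price of one high-quality image; it ignores cleanup time and input tokens, and `attempts_within` is a name invented for this example.

```python
from decimal import Decimal

HIGH_SQUARE = Decimal("0.133")


def attempts_within(budget: Decimal, price_per_attempt: Decimal) -> int:
    """Whole number of attempts that fit inside the budget."""
    return int(budget // price_per_attempt)


print(attempts_within(HIGH_SQUARE, Decimal("0.009")))  # low-quality attempts
print(attempts_within(HIGH_SQUARE, Decimal("0.034")))  # medium-quality attempts
```

On output rows alone, one high-quality image costs about as much as 14 low-quality attempts or 3 medium-quality attempts, so the cheaper rows only win if a usable result arrives within that many tries and needs no extra cleanup.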

The opposite is also true. If the image is low-stakes, disposable, or part of a wide ideation funnel, paying flagship rates for every frame can be a waste. A lot of teams default to the best-sounding model first and only later realize the workflow never needed it.

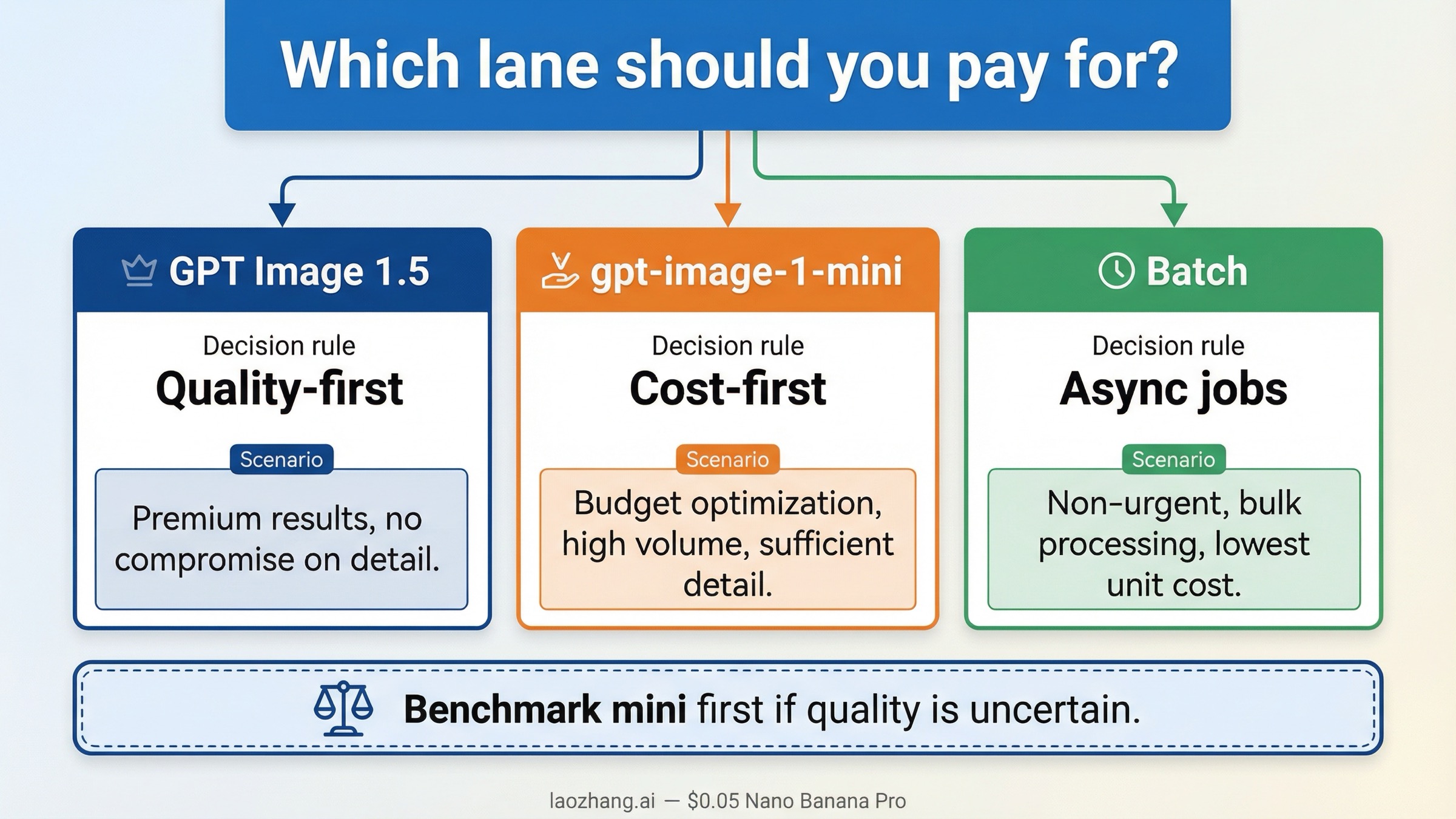

The operator-style decision rule is simple:

- keep GPT Image 1.5 when image quality, edit reliability, or preservation save enough downstream work

- switch lanes when the work is volume-heavy and cheap to discard

- think in terms of the cost of one usable image, not the posted price of one theoretical output

This is also why older GPT Image 1 screenshots are now a bad pricing baseline. OpenAI's current GPT Image 1 page still lists the previous flagship at $0.011, $0.042, and $0.167 for square low, medium, and high outputs. OpenAI's December 16, 2025 launch note also says GPT Image 1.5 image inputs and outputs are 20% cheaper than GPT Image 1. The newer lane is not just current. It is also the cheaper official flagship.
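The quoted square rows can be checked against that 20% figure directly. The sketch below uses only the per-image prices stated in this article; because those rows are rounded to three decimals, the computed discounts are not expected to land on exactly 20%.

```python
from decimal import Decimal

GPT_IMAGE_1 = {"low": Decimal("0.011"), "medium": Decimal("0.042"), "high": Decimal("0.167")}
GPT_IMAGE_15 = {"low": Decimal("0.009"), "medium": Decimal("0.034"), "high": Decimal("0.133")}


def discount_percent(old: Decimal, new: Decimal) -> Decimal:
    """Percentage drop from old to new, rounded to one decimal place."""
    return ((old - new) / old * 100).quantize(Decimal("0.1"))


for quality in ("low", "medium", "high"):
    print(quality, discount_percent(GPT_IMAGE_1[quality], GPT_IMAGE_15[quality]))
```

The rounded rows come out roughly 18% to 20% lower than GPT Image 1, which is in line with the launch note.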

When gpt-image-1-mini or Batch Changes the Math

The narrow cost-per-image answer gets much more useful once you compare it with the two main budget levers readers actually control: model choice and processing mode.

The first lever is gpt-image-1-mini. OpenAI's current mini model page lists $0.005, $0.011, and $0.036 for one square low, medium, and high image. That is materially cheaper than GPT Image 1.5. So if the workflow is cost-first, internal-only, or highly disposable, the most important budgeting question is often not "how do I make GPT Image 1.5 cheaper?" It is "why am I paying for GPT Image 1.5 at all?"

The second lever is Batch. On the official pricing page, GPT Image 1.5 Batch token rates are half of standard rates. That matters for asynchronous jobs. It matters much less when a human is waiting on an interactive result. Batch is the right lever when the job is background rendering, overnight queue work, or a workflow where latency is not the first constraint.

| Workflow shape | Best default | Why |
|---|---|---|
| Brand-sensitive edits or high-stakes final assets | GPT Image 1.5 | The quality and edit reliability are often worth more than the price premium |
| Cheap ideation, prototypes, disposable variants | gpt-image-1-mini | Lower one-image cost matters more than flagship quality |
| Large asynchronous jobs where quality still matters | GPT Image 1.5 + Batch | You keep the flagship lane and use the official cost discount |
| Uncertain quality requirements | Benchmark mini first | It is cheaper to prove you do not need the flagship before paying for it at scale |
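Both levers can be roughed out with the square rows quoted in this article. In the sketch below the mini comparison uses published per-image prices, while the Batch figure rests on a loud assumption: that the per-image output cost halves along with the Batch token rates. The source only states that token rates are halved, so treat `rough_batch_cost` as an estimate. Both function names are hypothetical.

```python
from decimal import Decimal

GPT_IMAGE_15 = {"low": Decimal("0.009"), "medium": Decimal("0.034"), "high": Decimal("0.133")}
GPT_IMAGE_1_MINI = {"low": Decimal("0.005"), "medium": Decimal("0.011"), "high": Decimal("0.036")}


def savings_with_mini(quality: str, images: int) -> Decimal:
    """Output-row savings from using gpt-image-1-mini instead of GPT Image 1.5 (square)."""
    return (GPT_IMAGE_15[quality] - GPT_IMAGE_1_MINI[quality]) * images


def rough_batch_cost(quality: str, images: int) -> Decimal:
    """ASSUMPTION: per-image output cost scales with the halved Batch token rates."""
    return GPT_IMAGE_15[quality] * images / 2


print(savings_with_mini("medium", 1_000))
print(rough_batch_cost("high", 1_000))
```

For 1,000 square medium images, mini saves about $23 on output rows. Under the stated assumption, 1,000 square high-quality images through Batch would cost about $66.50 instead of $133.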

There is one more naming trap worth clearing away here. The current official chatgpt-image-latest page lists the same token and per-image rows as GPT Image 1.5. So if you searched because you thought the ChatGPT alias might be a cheaper price surface, it currently is not. The difference is alias behavior and stability, not a lower posted cost.

If you need the full current OpenAI image family in one place, use OpenAI image generation API pricing. For this page, the important rule is narrower: if one image is expensive to get wrong, GPT Image 1.5 still makes sense; if cost is the first constraint, mini deserves the first benchmark.

Why Search Results Disagree About Cost per Image

The disagreement across page one is real, but it is not random.

One cluster of results is quoting official OpenAI API pricing. Those are the pages you should trust for current rows, token rates, supported endpoints, and tier limits.

Another cluster is quoting third-party pricing surfaces. These pages may sell monthly plans, credits, or OpenAI-compatible access. They are not automatically useless. They are just answering a different question from the one most readers mean when they search gpt-image-1.5 cost per image.

That is why this SERP feels messy. A reader sees one official model page, one guide, one exact-match plan page, and one broad blog guide, then feels like everyone is disagreeing about the same price. In reality, they are often pricing different products.

The clean buyer rule is:

- if you want official OpenAI API pricing, trust the model page, the pricing page, and the image-generation guide together

- if you want third-party access pricing, treat those pages as separate commercial products, not as proof that OpenAI itself charges the same way

This distinction matters more than it seems. The strongest current third-party pages are usually easier to click because they promise one fast answer. The problem is that they often switch the billing surface without saying so clearly enough. A good article on this keyword needs to stop that confusion near the top, not in a buried caveat.

If your next problem is not price but model naming, our guide to ChatGPT Image Latest vs GPT Image 1.5 is the better follow-up. If your next problem is broader migration logic, use GPT Image 1 vs GPT Image 1.5.

FAQ

What is the current GPT Image 1.5 cost per image?

As checked on March 24, 2026, GPT Image 1.5 costs $0.009, $0.034, and $0.133 for one 1024x1024 low-, medium-, and high-quality image.

How much does one portrait or landscape image cost?

The current official rows are $0.013, $0.05, and $0.20 for one 1024x1536 or 1536x1024 low-, medium-, and high-quality image.

Does GPT Image 1.5 cost per image include edits and reference images?

Not completely. OpenAI's guide says the published per-image rows cover output image generation only. Text input and image input tokens can raise total request cost in edit-heavy or reference-heavy workflows.

What is the cheapest current OpenAI image lane?

gpt-image-1-mini is the current cheaper official lane, starting at $0.005 for one square low-quality image.

Does Batch lower GPT Image 1.5 cost per image?

It lowers the underlying token rates for asynchronous workflows. On the official pricing page, GPT Image 1.5 Batch image and text token pricing is half of standard pricing. That helps most when the workflow is not interactive.

Is there a free GPT Image 1.5 API tier?

The current GPT Image 1.5 model page lists the Free tier as not supported. Paid API usage starts at Tier 1, with public IPM (images per minute) values of 5, 20, 50, 150, and 250 across Tiers 1 through 5.
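Those limits also bound how quickly a batch of images can finish. The sketch below reads IPM as images per minute and assumes the job runs at the limit the whole time with no other rate limit involved, so the result is a lower bound. `minutes_to_render` is a hypothetical helper.

```python
# Tier -> images per minute, as listed above (checked March 24, 2026).
IPM_BY_TIER = {1: 5, 2: 20, 3: 50, 4: 150, 5: 250}


def minutes_to_render(images: int, tier: int) -> int:
    """Minimum whole minutes needed to request `images` images at the tier's IPM limit."""
    if images < 0:
        raise ValueError("images must be non-negative")
    ipm = IPM_BY_TIER[tier]
    return -(-images // ipm)  # ceiling division without floats


for tier in sorted(IPM_BY_TIER):
    print(tier, minutes_to_render(1_000, tier))
```

At these limits, 1,000 images take at least 200 minutes on Tier 1 and at least 4 minutes on Tier 5.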