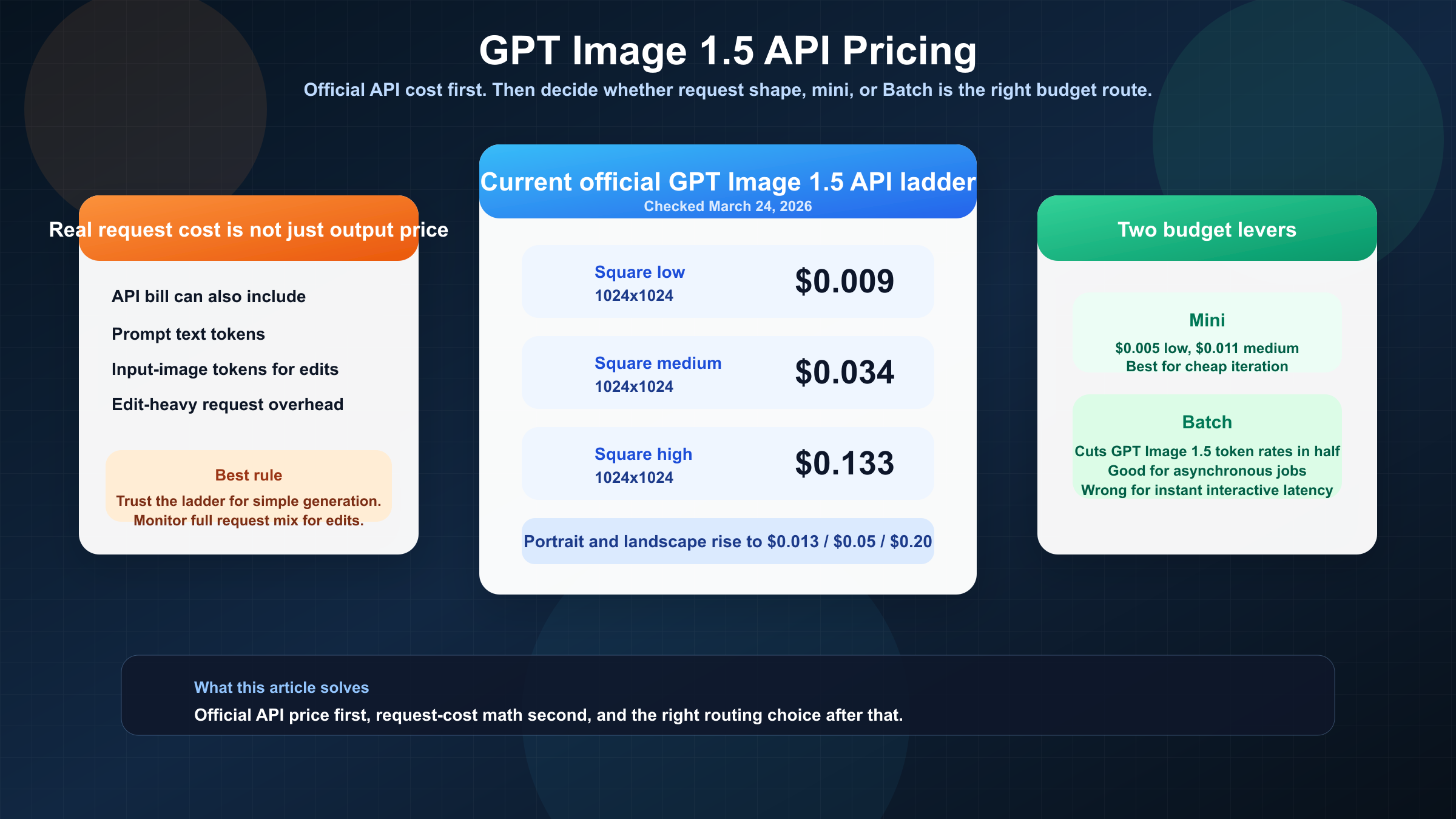

As of March 24, 2026, GPT Image 1.5 API pricing is $0.009, $0.034, and $0.133 for 1024x1024 low, medium, and high outputs. If you only need the clean official number, that is the answer. If you are budgeting a real API workflow, that is only the starting point.

The part that keeps tripping teams up is that GPT Image 1.5 does not behave like a flat-fee image vending machine. The current OpenAI docs split the answer across the GPT Image 1.5 model page, the API pricing page, and the image-generation guide. That means the visible per-image ladder is real, but it is only the output-image part of the bill. Prompt text, input images, edit-heavy workflows, and Batch all change what the invoice looks like in practice.

The safest default is simple. Use GPT Image 1.5 when output quality, text rendering, editing reliability, or brand preservation justify flagship pricing. Benchmark gpt-image-1-mini first if your workload is volume-heavy or low-stakes. Use Batch when the job is asynchronous and price matters more than instant response time.

TL;DR

If you only need the fast budgeting answer, start here.

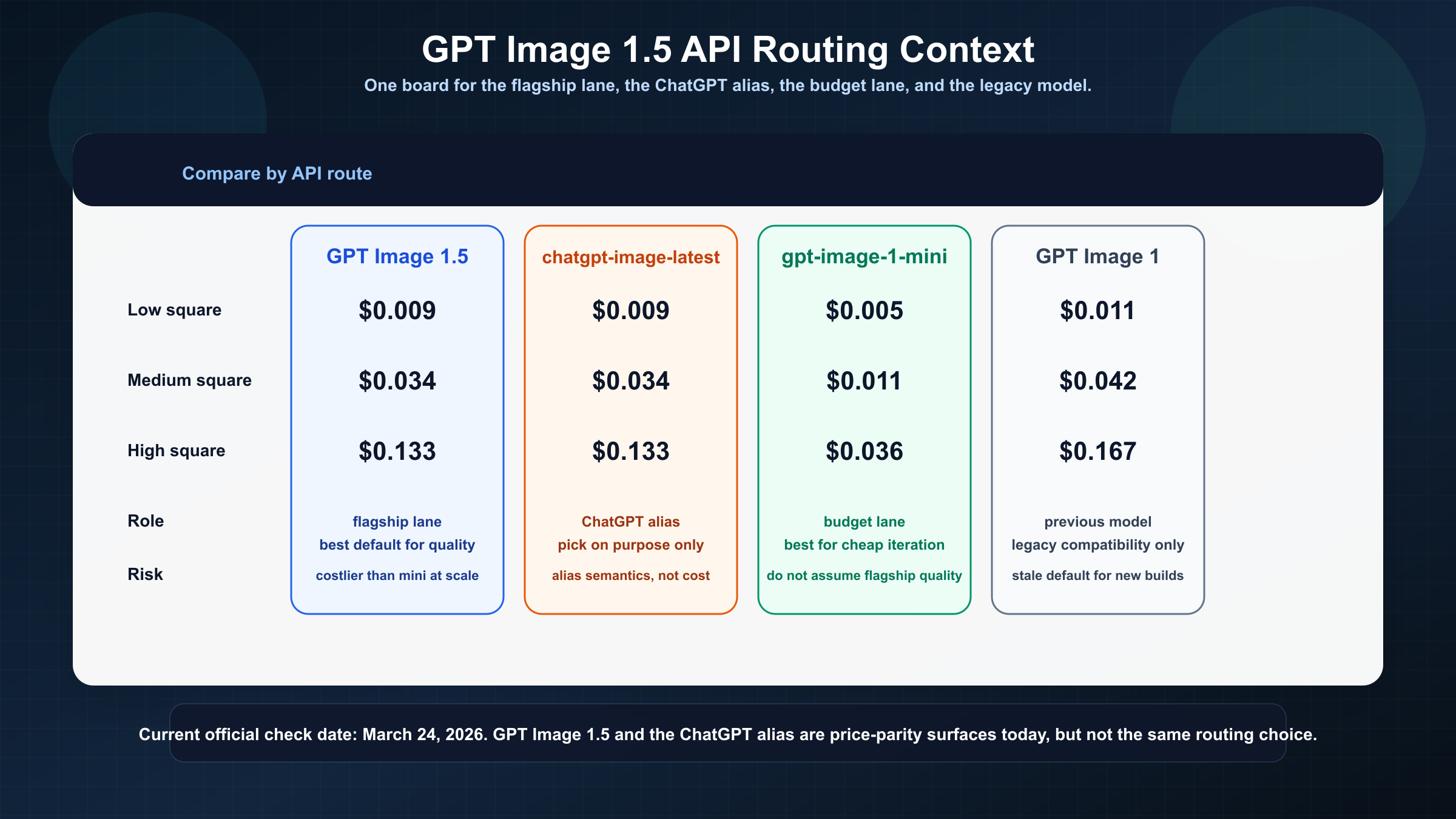

| Surface | 1024x1024 low | 1024x1024 medium | 1024x1024 high | What it means for API buyers |
|---|---|---|---|---|
| GPT Image 1.5 | $0.009 | $0.034 | $0.133 | Current flagship lane for production quality, editing, and brand-safe workflows |
| chatgpt-image-latest | $0.009 | $0.034 | $0.133 | Alias that currently matches GPT Image 1.5 pricing, but is a moving ChatGPT snapshot |
| gpt-image-1-mini | $0.005 | $0.011 | $0.036 | Cheapest current OpenAI image lane for bulk generation and prototype-heavy work |
| GPT Image 1 | $0.011 | $0.042 | $0.167 | Previous model, mostly relevant for migration or stale screenshot cleanup |

Three rules follow from that table.

First, GPT Image 1.5 is the right default only when you actually need the flagship lane. The model is easier to justify when image quality, edits, or text rendering save you enough retries to offset the higher price.

Second, mini is the real official price floor. A lot of pages ranking for this keyword talk as if GPT Image 1.5 is the only current answer. It is not. If the workload is high-volume testing, internal variants, or cheap prompt iteration, mini is the first benchmark to run.

Third, the table above is an output-image shortcut, not a complete request formula. For plain text-to-image generation it is often close enough. For edits, image references, or more complex request shapes, the full invoice is higher than the headline output number.

GPT Image 1.5 API Pricing at a Glance

The official pricing answer is now easier to state than it was during the GPT Image 1 launch period. OpenAI's current GPT Image 1.5 listing still uses the same square ladder for low, medium, and high image generation, and the image-generation guide repeats that same ladder in the cost section. For square outputs, the current numbers are $0.009, $0.034, and $0.133. For portrait or landscape outputs, the current guide lists $0.013, $0.05, and $0.20.

Under that per-image layer, the API still bills in tokens. On the current OpenAI pricing page, GPT Image 1.5 is listed at $8.00 input, $2.00 cached input, and $32.00 output per 1M image tokens. Text tokens are listed separately at $5.00 input, $1.25 cached input, and $10.00 output per 1M tokens. That is the part many quick pricing pages skip. The per-image numbers are not a different billing system. They are the easiest way to quote a common request shape.
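As a rough illustration of how those token rates turn into dollars, here is a minimal Python sketch. The rates are the standard ones quoted above; the function names and the token counts in the example are hypothetical, chosen only for illustration, and cached-input and text-output charges are left out of the sum for brevity.

```python
# Standard GPT Image 1.5 rates quoted above, in USD per 1M tokens.
IMAGE_RATES = {"input": 8.00, "cached_input": 2.00, "output": 32.00}
TEXT_RATES = {"input": 5.00, "cached_input": 1.25, "output": 10.00}


def token_cost(tokens: int, rate_per_million: float) -> float:
    """Convert a token count into dollars at a per-1M-token rate."""
    return tokens * rate_per_million / 1_000_000


def request_cost_from_tokens(text_in: int, image_in: int, image_out: int) -> float:
    """Sum the uncached text-input, image-input, and image-output charges."""
    return (
        token_cost(text_in, TEXT_RATES["input"])
        + token_cost(image_in, IMAGE_RATES["input"])
        + token_cost(image_out, IMAGE_RATES["output"])
    )


# Hypothetical usage numbers, for illustration only.
example = request_cost_from_tokens(text_in=200, image_in=0, image_out=1_000)
print(f"${example:.4f}")
```

In practice the token counts would come from the usage data returned with each API response rather than from guesses like these.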

The API surface matters too. The current model listing for GPT Image 1.5 exposes v1/responses, v1/images/generations, v1/images/edits, and v1/batch among its supported endpoints. That matters because this keyword is really about developer budgeting, not just product marketing. If your workload includes edits, asynchronous jobs, or multimodal request orchestration, the supported API surface changes which pricing rule you should care about first.

That is the main difference between this page and a generic pricing recap. A reader searching gpt image 1.5 pricing api is usually not just asking for a screenshot-ready number. They are asking which official number maps to the request they are about to ship.

If your broader question is OpenAI image pricing across the whole family rather than GPT Image 1.5 alone, the better next read is OpenAI image generation API pricing, because that page compares the whole current OpenAI image lineup.

What a Real GPT Image 1.5 API Request Actually Costs

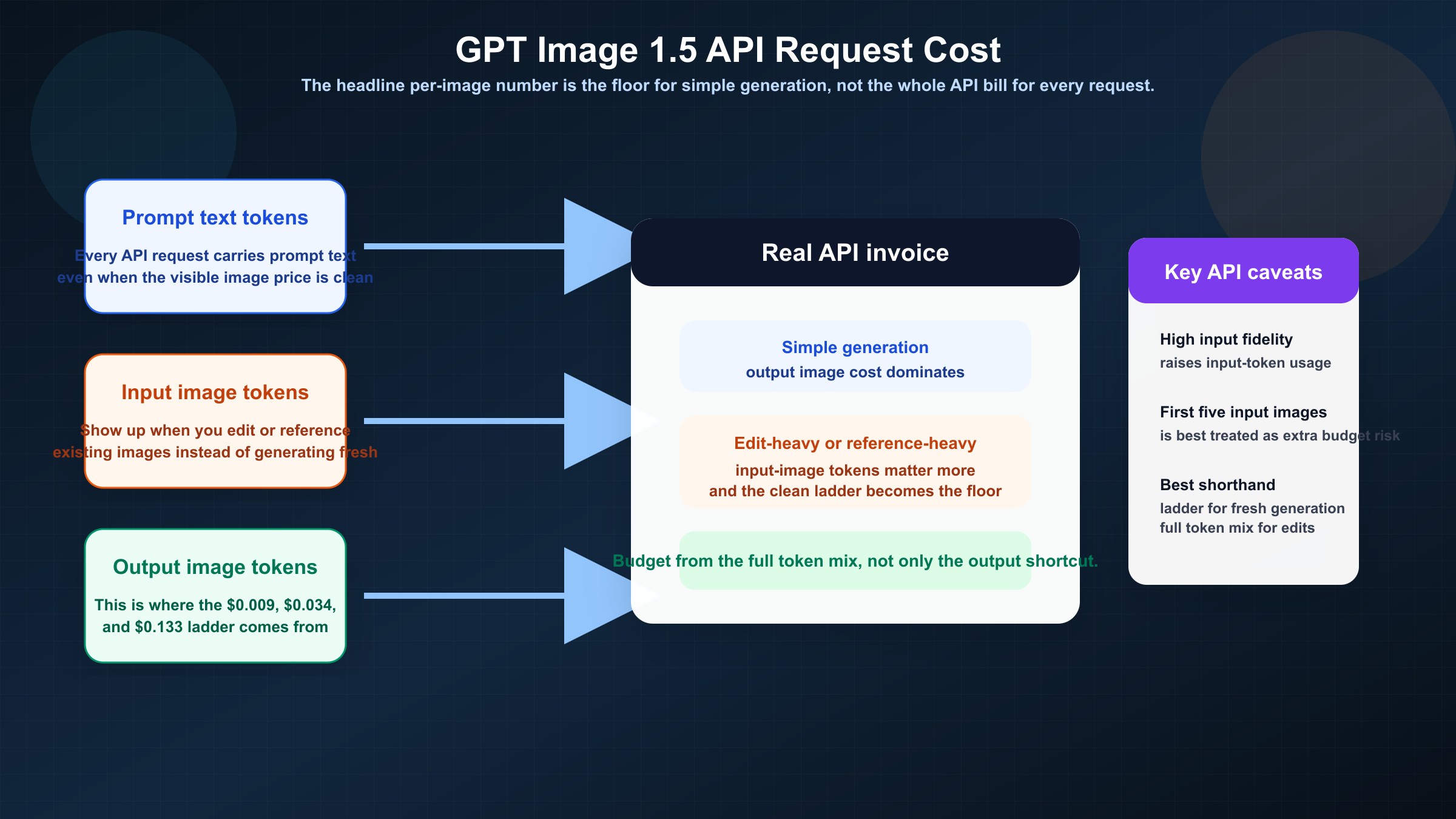

The most important sentence in the current official guide is also the sentence most ranking pages hide: the per-image tables cover output image generation only. OpenAI says you should still account for text and image input tokens when estimating the total cost of a request.

That changes the budgeting model immediately. For a simple text-to-image call with a short prompt and one output image, the output-image number usually dominates. In that case, the visible per-image ladder is a good working estimate.

That estimate stops being enough as soon as the workload becomes more realistic.

If you are editing existing images, using reference images, or leaning on higher-fidelity preservation, the request looks more like this:

total request cost = text input tokens + image input tokens + output image generation

That is the API budgeting rule to trust. It is also why quick comparison pages feel incomplete even when their headline price is technically right. They are pricing the output, not the workflow.
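The formula above can be sketched in Python by combining the per-image ladder for the output with the standard input token rates. This is an estimate under stated assumptions, not OpenAI's billing logic: the function name is made up for this example, and the token counts are hypothetical placeholders for a short prompt and a single reference image.

```python
# Output-image ladder for 1024x1024 GPT Image 1.5, in USD per image.
OUTPUT_PRICE_SQUARE = {"low": 0.009, "medium": 0.034, "high": 0.133}

# Standard input rates, in USD per 1M tokens.
TEXT_INPUT_RATE = 5.00
IMAGE_INPUT_RATE = 8.00


def estimate_request_cost(
    quality: str,
    text_input_tokens: int,
    image_input_tokens: int = 0,
    n_images: int = 1,
) -> float:
    """Text input + image input + output image generation, in USD."""
    text_cost = text_input_tokens * TEXT_INPUT_RATE / 1_000_000
    image_input_cost = image_input_tokens * IMAGE_INPUT_RATE / 1_000_000
    output_cost = OUTPUT_PRICE_SQUARE[quality] * n_images
    return text_cost + image_input_cost + output_cost


# Hypothetical token counts: a fresh generation vs. an edit with a reference image.
fresh = estimate_request_cost("medium", text_input_tokens=100)
edit = estimate_request_cost("medium", text_input_tokens=100, image_input_tokens=1_500)
print(f"fresh: ${fresh:.4f}  edit: ${edit:.4f}")
```

With these placeholder numbers the edit request costs noticeably more than the fresh one even though both produce a single medium image, which is the gap the headline ladder hides.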

The edit path is where this becomes operationally important. The current image-generation guide documents input_fidelity="high" for higher-fidelity edits. That is useful for brand work, product variants, or visual consistency across revisions. It also means you should not pretend a heavy edit workflow will behave like one low-cost, one-shot generation call. The price ladder remains correct, but it may stop being the number that matters most.

This is also where retry costs become real. One current Reddit thread about GPT Image 1.5 API usage shows a user repeatedly fighting cropping issues despite explicit framing instructions. That is not proof that every workload will pay a retry tax. It is a reminder that the true cost of an image workflow is not only the posted price. It is the price multiplied by how often the model gets you to a usable output.

So the practical budgeting split should be:

- For simple fresh generation, start from the official per-image ladder.

- For edit-heavy or reference-heavy work, treat the per-image ladder as the floor rather than the final invoice.

- For retry-sensitive production work, judge cost by useful output, not by nominal price alone.
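One way to make "cost by useful output" concrete is to divide the posted price by the share of attempts that produce a usable image. The sketch below assumes each attempt succeeds independently with the same probability, which is a simplification, and the success rates are hypothetical values rather than measurements of either model.

```python
def cost_per_usable_image(price_per_attempt: float, success_rate: float) -> float:
    """Expected spend per usable image when each attempt succeeds with success_rate."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    # Expected number of attempts per success is 1 / success_rate.
    return price_per_attempt / success_rate


# Hypothetical success rates, for illustration only.
flagship = cost_per_usable_image(0.034, success_rate=0.8)   # GPT Image 1.5, medium
budget = cost_per_usable_image(0.011, success_rate=0.25)    # gpt-image-1-mini, medium
print(f"flagship: ${flagship:.4f}  budget: ${budget:.4f}")
```

Under these made-up rates the cheaper lane ends up slightly more expensive per usable image. The point is the shape of the calculation; your own success rates decide which way it tips.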

When GPT Image 1.5 Is the Right API Default

The strongest case for GPT Image 1.5 is not that it is the newest lane. It is that the flagship lane can save money indirectly by reducing redo work.

That is easiest to see in production editing. OpenAI's December 16, 2025 launch note positioned GPT Image 1.5 as stronger at image preservation and editing than GPT Image 1. If your workflow depends on keeping logos, packaging details, layout structure, or branded text stable across revisions, the cheaper model is not automatically the cheaper choice. A lower nominal price can become more expensive if it forces extra retries, cleanup, or manual correction.

The model is also easier to justify when text rendering actually matters. GPT Image 1.5 is the lane to start with if you are shipping posters, packaging comps, social creatives, ecommerce assets, or product images where usable text inside the image matters to the final output. In those cases the question is less "is GPT Image 1.5 expensive?" and more "does GPT Image 1.5 remove enough downstream work to pay for itself?"

The opposite case matters just as much. GPT Image 1.5 is usually the wrong default for low-stakes prototyping, internal ideation, or large batches of rough variants. That is where mini becomes the better first benchmark. The official current mini pricing starts at $0.005, $0.011, and $0.036 for square low, medium, and high outputs. At that point the real buyer question becomes whether your workload truly needs the flagship at all.

This is why the cleanest routing rule is workload-first, not model-first.

- Choose GPT Image 1.5 when quality, editing reliability, prompt adherence, or brand preservation save more money than the higher posted rate costs.

- Choose mini when volume, cheap iteration, or internal experimentation are the main constraints.

- Choose Batch when the workload is asynchronous and the price discount matters more than instant results.

- Choose chatgpt-image-latest only when you explicitly want the moving ChatGPT snapshot rather than the clearest stable model ID.
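The routing rule above can be written down as a small decision function. This is only a sketch: the flag names are invented for this example, the returned strings are the labels used in this article rather than verified API model IDs, and it assumes Batch is available for whichever lane is picked, which you should confirm on the relevant model page.

```python
def pick_image_lane(
    needs_flagship_quality: bool,
    is_async: bool,
    wants_chatgpt_snapshot: bool = False,
) -> tuple[str, str]:
    """Return (lane, processing mode) following the workload-first rule."""
    if wants_chatgpt_snapshot:
        lane = "chatgpt-image-latest"
    elif needs_flagship_quality:
        lane = "GPT Image 1.5"
    else:
        lane = "gpt-image-1-mini"
    # Batch only makes sense when nobody is waiting on the result.
    processing = "batch" if is_async else "standard"
    return lane, processing


print(pick_image_lane(needs_flagship_quality=True, is_async=False))
print(pick_image_lane(needs_flagship_quality=False, is_async=True))
```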

If your actual decision is whether to stick with GPT Image 1 or move forward, read GPT Image 1 vs GPT Image 1.5 instead of using old GPT Image 1 screenshots as your pricing baseline.

GPT Image 1.5 vs Mini vs chatgpt-image-latest vs GPT Image 1

The ranking pages around this keyword keep mixing four different surfaces together, and that is why the query feels noisier than it should.

| Surface | Current role | Square start price | Use it when | Do not confuse it with |
|---|---|---|---|---|
| GPT Image 1.5 | Current flagship | $0.009 | You want the strongest current OpenAI default for production quality, editing, and prompt adherence | A generic ChatGPT app feature |
| gpt-image-1-mini | Budget lane | $0.005 | Cost is the first constraint and the job can tolerate lower-end routing | A mini version of 1.5 with identical tradeoffs |
| chatgpt-image-latest | ChatGPT alias | $0.009 | You intentionally want the image snapshot currently used in ChatGPT | A stable production model ID |
| GPT Image 1 | Previous lane | $0.011 | You are maintaining or auditing an older workflow | The current recommended default |

Two distinctions matter most.

The first is that chatgpt-image-latest is not currently a cheaper answer. The current alias page lists the same text-token rates, image-token rates, and per-image ladder as GPT Image 1.5. The difference is naming and stability, not list price. If you want a stable production decision, GPT Image 1.5 is still easier to reason about.

The second is that mini is not a footnote. It is the real official price floor in the current OpenAI image family. The reason many teams overpay is not that they misread one number. It is that they never pause to ask whether the workload needed flagship routing in the first place.

GPT Image 1 still matters, but mostly as a migration reference. The page is useful when you are debugging an old assumption or checking whether a stale tutorial is still relevant. It is not the right baseline for a fresh March 2026 API budgeting decision.

If alias behavior is your real problem rather than pure cost, read ChatGPT Image Latest vs GPT Image 1.5 next. That is a routing question more than a pricing question.

Batch Pricing, Rate Limits, and Monthly Budget Math

Batch is where the keyword stops being editorial and starts being operational.

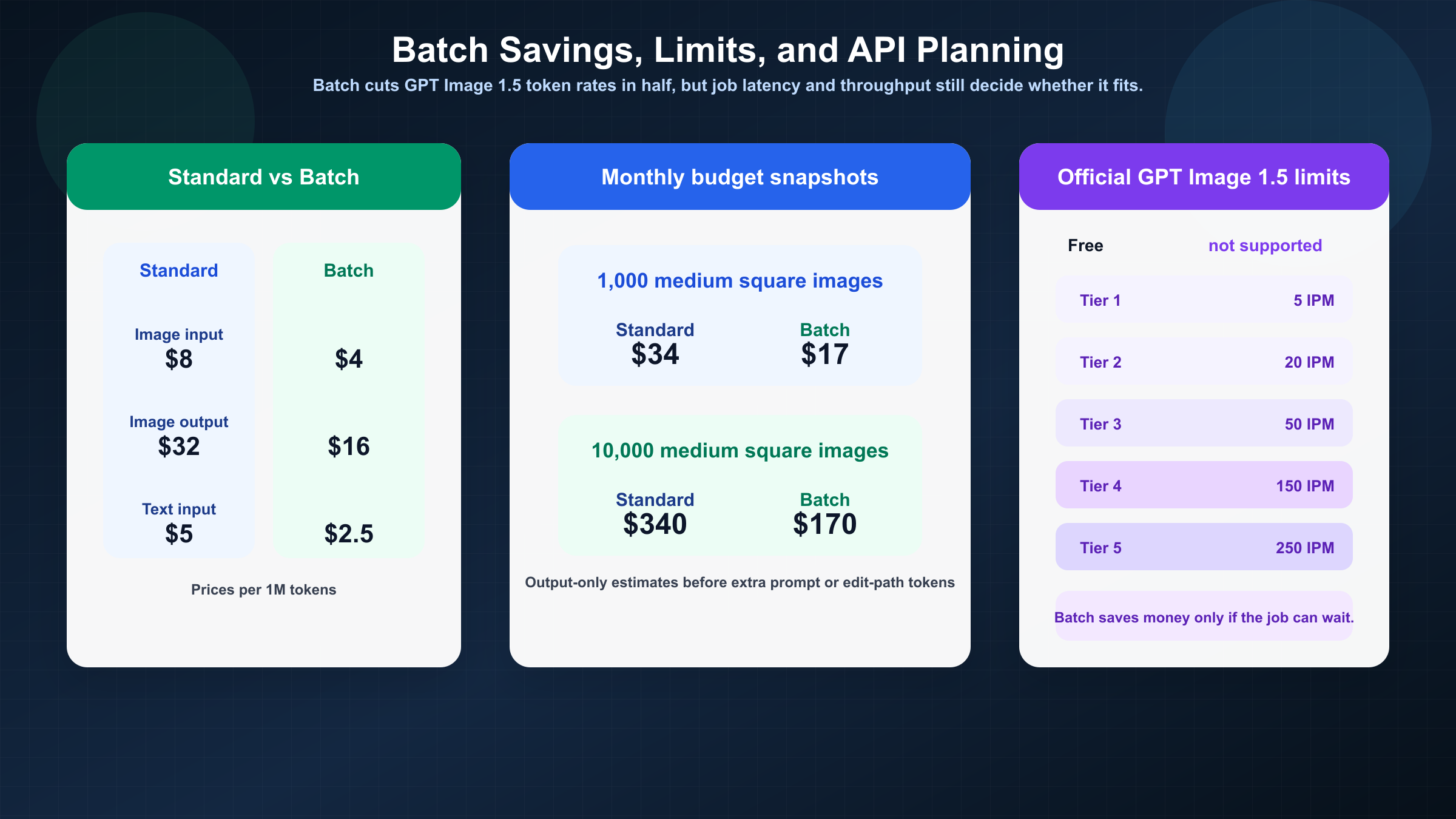

On the current OpenAI pricing page, GPT Image 1.5 Batch pricing cuts standard rates in half. Image tokens drop from $8 / $2 / $32 to $4 / $1 / $16 per 1M tokens. Text tokens drop from $5 / $1.25 / $10 to $2.50 / $0.63 / $5. If your job can run asynchronously, that is the cleanest official pricing lever available.

What does that mean in plain planning math?

For output-only estimates, 1,000 square GPT Image 1.5 images at medium quality cost about $34 in standard processing and about $17 in Batch. At 10,000 images, the same lane becomes about $340 in standard processing and about $170 in Batch before prompt-text or edit-path costs. High quality scales much faster: 1,000 high square outputs are about $133, and 10,000 are about $1,330 before extra request components.
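The planning math above is easy to reproduce. The following sketch covers output-only cost for square images and assumes, as the estimates above do, that Batch halves the per-image figure; the function name is made up for this example, and prompt-text and edit-path costs are deliberately excluded.

```python
# Output-image ladder for 1024x1024 GPT Image 1.5, in USD per image.
OUTPUT_PRICE_SQUARE = {"low": 0.009, "medium": 0.034, "high": 0.133}
BATCH_MULTIPLIER = 0.5  # Batch is quoted at half the standard rate.


def output_only_budget(n_images: int, quality: str, batch: bool = False) -> float:
    """Output-only cost in USD, before prompt-text or image-input tokens."""
    cost = n_images * OUTPUT_PRICE_SQUARE[quality]
    return cost * BATCH_MULTIPLIER if batch else cost


for n in (1_000, 10_000):
    standard = output_only_budget(n, "medium")
    batched = output_only_budget(n, "medium", batch=True)
    print(f"{n:>6} medium images: ${standard:,.2f} standard, ${batched:,.2f} Batch")
```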

That is why a budget cannot stop at the visible per-image number. A team generating ten assets a week can tolerate rough math. A team budgeting for a monthly pipeline cannot.

Throughput matters at the same time. The current GPT Image 1.5 model listing still shows the Free tier as not supported, then 5 images per minute (IPM) at Tier 1, 20 IPM at Tier 2, 50 IPM at Tier 3, 150 IPM at Tier 4, and 250 IPM at Tier 5. Those are not background details. They change whether the official API is viable for your launch schedule.

So the clean buyer rule here is:

- If the job is interactive, standard pricing may still be the correct choice because Batch saves money but adds the wrong latency shape.

- If the job is background or overnight, Batch is the first official cost lever to test.

- If the job is high-volume and latency-sensitive, price alone is not enough. Tier limits and effective throughput may become the real constraint.
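To see how tier limits turn into wall-clock time, here is a rough lower-bound estimate in Python. It assumes you can sustain the full images-per-minute limit for the whole job and ignores per-request latency, retries, and any other limits, so real runs will take longer; the function name is hypothetical.

```python
import math

# Images-per-minute limits from the GPT Image 1.5 model listing quoted above.
TIER_IPM = {1: 5, 2: 20, 3: 50, 4: 150, 5: 250}


def minutes_to_generate(n_images: int, tier: int) -> int:
    """Lower bound on minutes needed at the tier's sustained IPM limit."""
    return math.ceil(n_images / TIER_IPM[tier])


for tier in TIER_IPM:
    minutes = minutes_to_generate(10_000, tier)
    print(f"Tier {tier}: at least {minutes} min ({minutes / 60:.1f} h) for 10,000 images")
```

At Tier 1 a 10,000-image job needs at least 2,000 minutes, while Tier 5 brings the same floor down to 40 minutes, which is why the tier can matter more than the price for a launch deadline.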

This is the point where many teams start looking at gateway access or reseller routes. That can be rational. It is just a separate question from official OpenAI API pricing, and it is worth keeping those two buying surfaces separate.

Why Search Results Still Disagree About GPT Image 1.5 API Pricing

The disagreement in search is real, but it is not mysterious.

One cluster of pages is quoting official OpenAI pricing. Those pages are the ones to trust for the current ladder, token rates, supported endpoints, and tier limits.

Another cluster is quoting gateway credits, bundles, or subscription plans. Those pages are not always useless, but they are answering a different question. They are telling you what their access product costs, not what OpenAI officially charges.

The third source of confusion is stale GPT Image 1 context. OpenAI's December 16, 2025 release note said GPT Image 1.5 image inputs and outputs were 20% cheaper than GPT Image 1. The old GPT Image 1 page is still official, still indexable, and still useful for migration. That also means older screenshots and blog posts can keep ranking even when they are no longer the right default for a fresh build.

That is why this keyword keeps producing mixed answers.

- Official pages answer the facts, but they spread the facts across multiple URLs.

- Commercial exact-match pages promise a faster answer, but often switch the topic from official billing to paid access.

- Older tutorials can still rank because they were useful once, even if they are now anchored to the wrong model generation.

The practical rule is simple.

If you need official OpenAI API pricing, trust the official model page, the official pricing page, and the image-generation guide together.

If you need alternative access pricing, treat reseller pages as separate products, not as contradictory evidence about OpenAI's own billing.

If your next problem is the whole OpenAI image family rather than only GPT Image 1.5, go to OpenAI image generation API pricing. If your next problem is routing between stable and moving image model IDs, use ChatGPT Image Latest vs GPT Image 1.5.

FAQ

What is the current official GPT Image 1.5 API price?

As checked on March 24, 2026, GPT Image 1.5 is listed at $0.009, $0.034, and $0.133 for 1024x1024 low, medium, and high outputs.

Does GPT Image 1.5 API pricing include edits and reference images?

Not completely. The official per-image table covers output image generation only. Text tokens and image-input tokens still affect the full request cost, especially for edit-heavy workflows.

Is chatgpt-image-latest cheaper than GPT Image 1.5?

No. The current official alias page lists the same per-image ladder and the same token rates. The difference is alias behavior, not a lower posted price.

What is the cheapest current OpenAI image model for API use?

gpt-image-1-mini is the official current price floor, starting at $0.005 for a square low-quality output.

Does Batch really matter for GPT Image 1.5?

Yes. On the current official pricing page, Batch cuts GPT Image 1.5 text and image token rates in half. That can materially change monthly cost for asynchronous jobs.

Is there a free tier for GPT Image 1.5 API usage?

No. The current official GPT Image 1.5 listing shows the Free tier as not supported. Practical usage starts at paid Tier 1.