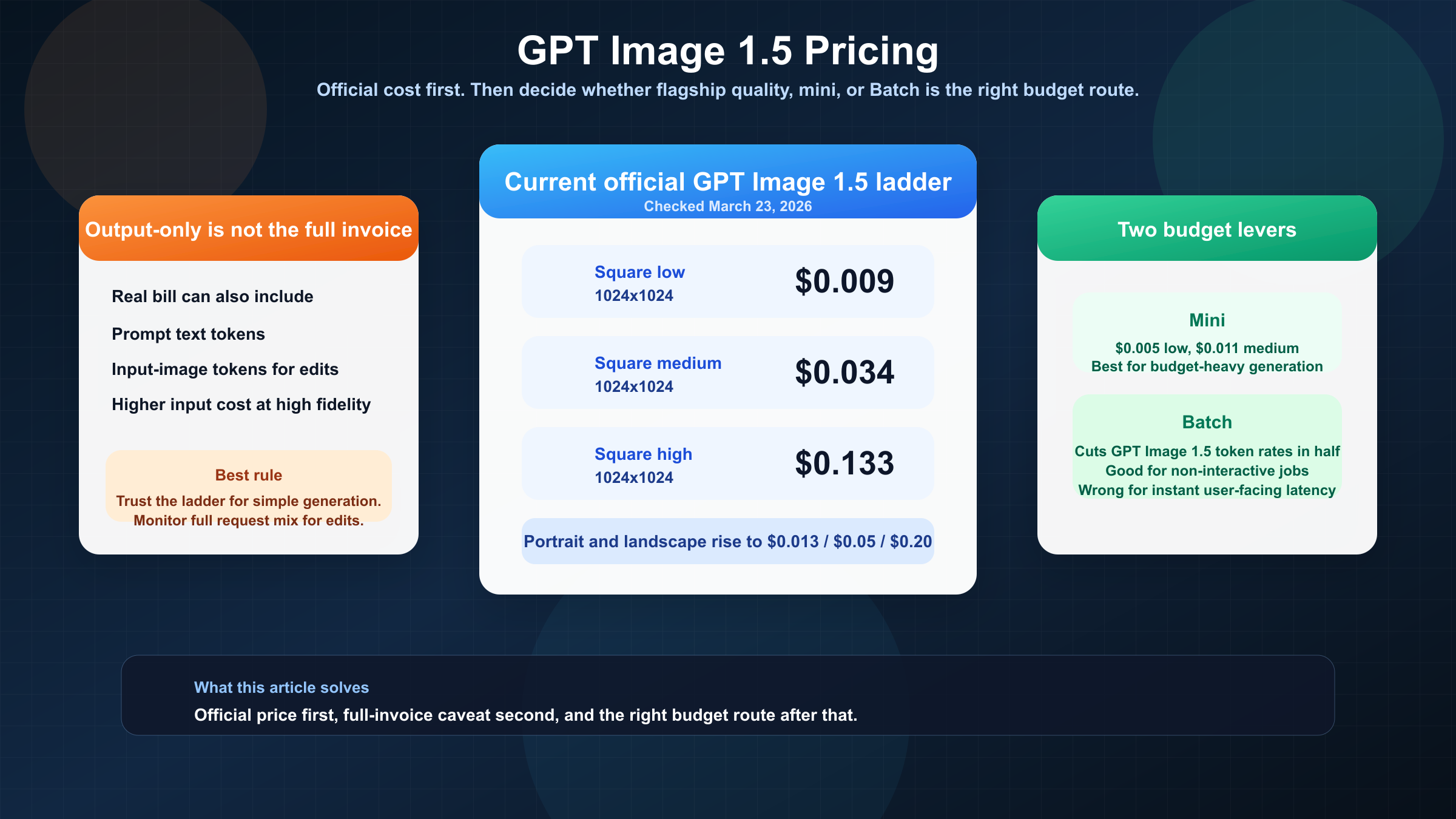

As of March 23, 2026, OpenAI lists GPT Image 1.5 at $0.009, $0.034, and $0.133 for 1024x1024 low, medium, and high outputs. That is the clean official answer to the keyword. It is also the point where most pricing pages stop too early.

The harder budgeting question is what your invoice looks like when you do more than one-shot generation. GPT Image 1.5 pricing is straightforward for simple output images, but real API usage can also include prompt tokens, input-image tokens, and higher input-token usage when you preserve edits with input_fidelity="high". If you are planning a production workflow rather than looking for a screenshot-friendly price table, that distinction matters more than one extra decimal place.

The clean default is this: use GPT Image 1.5 when output quality, editing, or brand preservation justify the spend; benchmark gpt-image-1-mini or Batch pricing when volume is the first constraint. If a search result is selling subscription credits or reseller plans, treat it as a different buying surface rather than as the official OpenAI answer.

TL;DR

If you only need the fast answer, start here.

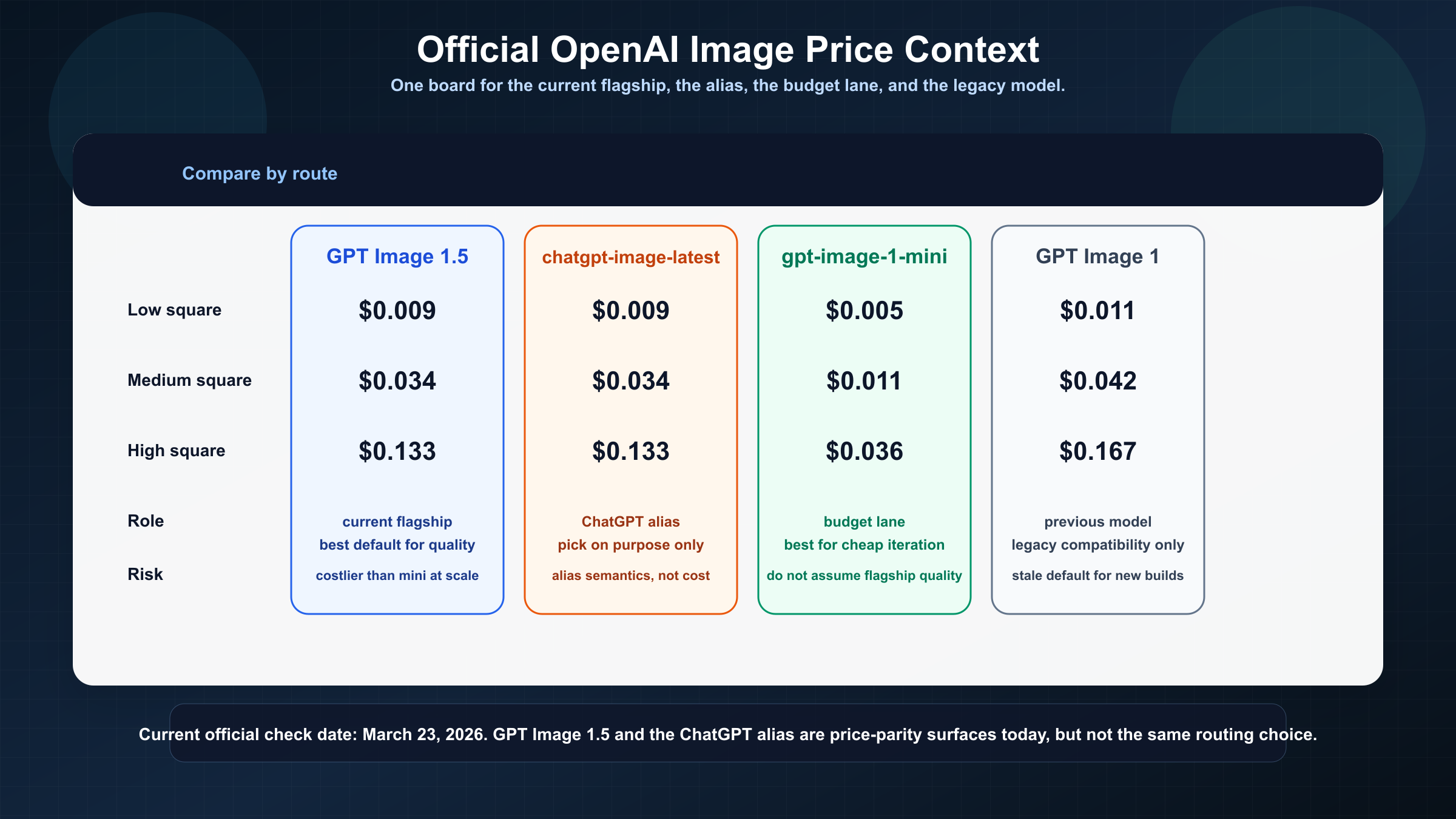

| Surface | 1024x1024 low | 1024x1024 medium | 1024x1024 high | What it really means |
|---|---|---|---|---|
| GPT Image 1.5 | $0.009 | $0.034 | $0.133 | Current flagship lane for quality, editing, and prompt adherence |
| chatgpt-image-latest | $0.009 | $0.034 | $0.133 | Alias that points to the current ChatGPT image snapshot |
| gpt-image-1-mini | $0.005 | $0.011 | $0.036 | Cheapest current OpenAI lane for budget-heavy generation |
| GPT Image 1 | $0.011 | $0.042 | $0.167 | Previous OpenAI image model, usually a legacy choice now |

Three decisions follow from that table.

First, GPT Image 1.5 is the right default when your team cares about image quality, editing, or brand consistency. OpenAI's current model page and the December 16, 2025 launch note both frame it as the current flagship.

Second, gpt-image-1-mini is the real price-floor answer inside OpenAI's current image family. A lot of results ranking for this keyword either ignore mini or mention it too late. If your workload is bulk generation, internal prototypes, or cheap iteration, mini should be the first benchmark before you assume the flagship is necessary.

Third, the visible per-image number is not always the full request cost. OpenAI's image generation guide is explicit that text tokens and image-input tokens still matter, especially for edits and high-fidelity reference-image workflows.

Current GPT Image 1.5 Pricing at a Glance

The most useful way to read GPT Image 1.5 pricing is to separate the clean headline from the underlying billing model.

The headline is simple. OpenAI's current model page lists three quality tiers for GPT Image 1.5: low, medium, and high. For square 1024x1024 outputs, those are $0.009, $0.034, and $0.133. Portrait 1024x1536 and landscape 1536x1024 outputs cost roughly 45 to 50 percent more, at $0.013, $0.05, and $0.20. If you searched this keyword because you needed the current official list price, that is the part to anchor on.

Underneath that, the model is still billed by tokens. On the current OpenAI pricing page, GPT Image 1.5 lists $8.00 per 1M image-input tokens, $2.00 per 1M cached image-input tokens, and $32.00 per 1M image-output tokens. Text tokens are listed separately at $5.00 input, $1.25 cached input, and $10.00 output per 1M tokens. The per-image ladder is just OpenAI doing the token math for the most common output sizes and quality tiers.

That distinction matters because it explains why GPT Image 1.5 pricing feels more stable than many page-one summaries suggest. You are not dealing with a mysterious marketplace price that changes every week. You are dealing with a public token model plus a public per-image shortcut table. That is also why OpenAI's image generation guide can list the corresponding square token counts: 272 tokens for low, 1,056 for medium, and 4,160 for high.
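The token math behind the per-image ladder can be checked directly. The sketch below is a minimal illustration that uses only the output rate and square token counts quoted above; the function and constant names are our own, not part of any OpenAI SDK.

```python
# Rate quoted from the pricing page above: $32.00 per 1M image-output tokens.
IMAGE_OUTPUT_USD_PER_1M = 32.00

# Square (1024x1024) output token counts quoted from the image generation guide.
SQUARE_OUTPUT_TOKENS = {"low": 272, "medium": 1056, "high": 4160}


def output_image_cost(tokens: int, usd_per_1m: float = IMAGE_OUTPUT_USD_PER_1M) -> float:
    """Return the output-image charge in USD for a given output token count."""
    return tokens * usd_per_1m / 1_000_000


if __name__ == "__main__":
    for quality, tokens in SQUARE_OUTPUT_TOKENS.items():
        # Prints $0.009, $0.034, and $0.133 for low, medium, and high.
        print(f"{quality:>6}: {tokens} tokens -> ${output_image_cost(tokens):.3f}")
```

Rounded to three decimals, the results match the published square ladder, which is why the per-image table and the token table can be treated as two views of the same price.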

The other useful comparison is historical. OpenAI's current GPT Image 1 page still lists the previous model at $0.011, $0.042, and $0.167 for square low, medium, and high outputs. That makes GPT Image 1.5 materially cheaper than the previous lane, not just newer. OpenAI said the same thing in its launch announcement on December 16, 2025, where it described GPT Image 1.5 image inputs and outputs as 20% cheaper than GPT Image 1.
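As a quick sanity check on the "20% cheaper" framing, the per-image ladders quoted above can be compared tier by tier. This is a small sketch with our own helper name; because the per-image prices are rounded to three decimals, the computed savings land near 20% rather than exactly on it.

```python
# Square per-image prices in USD, as quoted above.
GPT_IMAGE_1 = {"low": 0.011, "medium": 0.042, "high": 0.167}
GPT_IMAGE_1_5 = {"low": 0.009, "medium": 0.034, "high": 0.133}


def percent_cheaper(old_price: float, new_price: float) -> float:
    """Return how much cheaper new_price is than old_price, in percent."""
    return (old_price - new_price) / old_price * 100


if __name__ == "__main__":
    for quality in GPT_IMAGE_1:
        saving = percent_cheaper(GPT_IMAGE_1[quality], GPT_IMAGE_1_5[quality])
        print(f"{quality:>6}: {saving:.1f}% cheaper")
```

The rounded ladder gives savings of roughly 18% to 20% depending on the tier, which is consistent with a 20% cut applied at the token-rate level.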

This is where weaker results drift off course. Some focus on the launch story, some quote a gateway's own credit system, and some still surface GPT Image 1 context as if it were the current default. The better rule is simple: if you want the official current answer, start from GPT Image 1.5's own model page and then move outward only when your budget question becomes more specific than "what is the list price?"

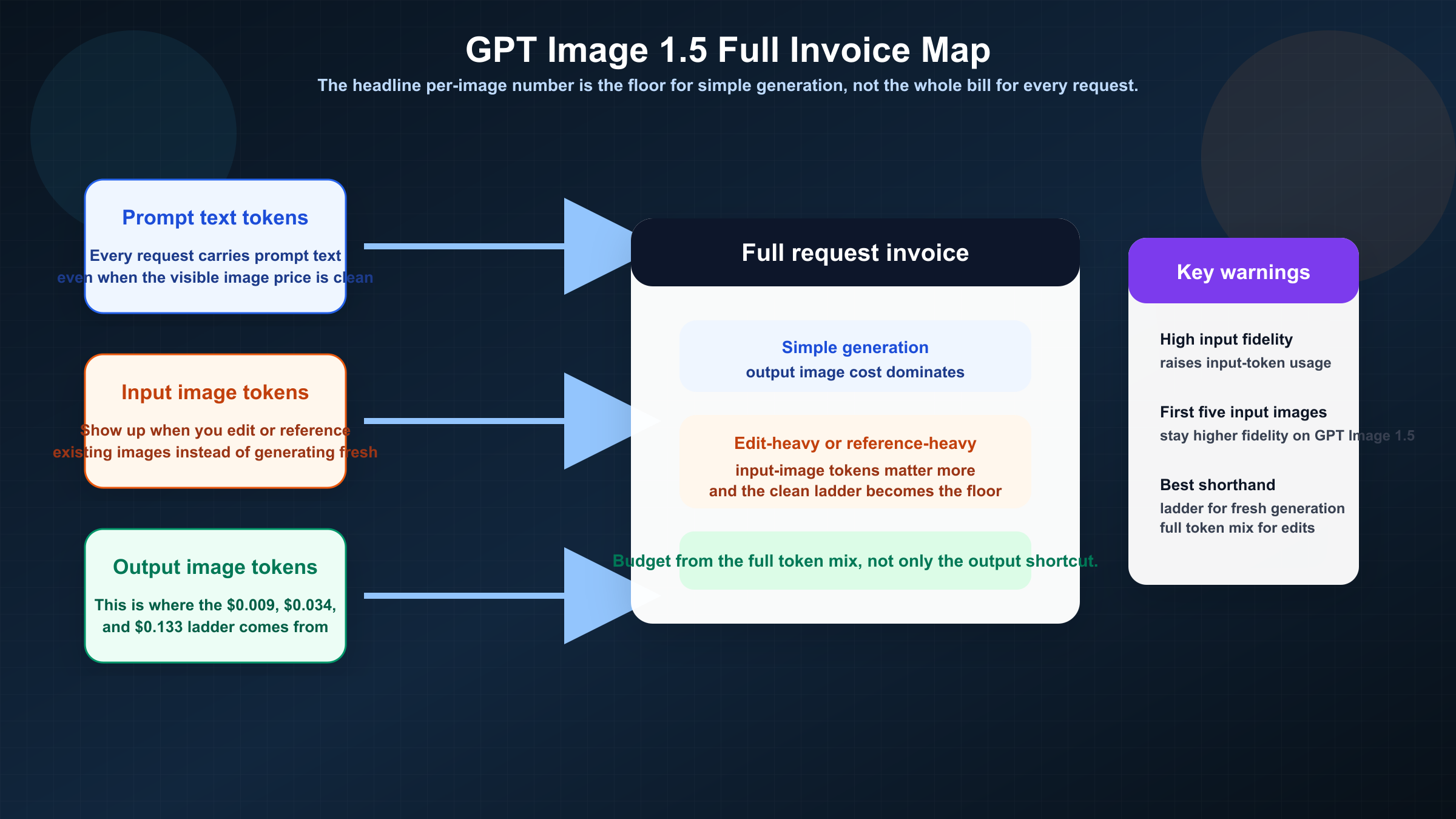

What Actually Gets Billed Beyond the Output Image

This is the section most keyword pages avoid, and it is the reason many developers keep searching after they already found the price table.

For a simple text-to-image request, the output-image ladder is often close enough. If you send a short prompt and ask for one fresh image, the output-image charge will dominate the request, and the official per-image number is a perfectly reasonable planning shortcut.

That shortcut stops being reliable as soon as your workflow gets more realistic.

OpenAI's image generation guide says you also need to account for text input tokens and image-input tokens. That matters for any workflow built around edits, reference images, or image preservation instead of pure one-shot generation. The same guide also says GPT Image 1.5 can preserve the first five input images with higher fidelity when input_fidelity is set to high. That is great for brand work, product variants, or revision-heavy creative flows. It is also exactly the kind of feature that raises the total request cost above the clean output-only number.

In practical terms, your mental model should look like this:

total request cost = prompt text tokens + input image tokens (when editing or referencing images) + output image tokens

If you are using GPT Image 1.5 as a production editing model, this is the real budgeting rule to trust. The page-one mistake is to treat "$0.009 per image" as a universal answer. It is only universal for the simplest kind of request.
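The request-cost rule can be sketched as a small estimator. The rates are the standard, uncached figures quoted earlier; the token counts in the example (a 200-token prompt and 1,000 input tokens per reference image) are hypothetical placeholders, since real input-image token usage depends on image size and fidelity settings. Cached-input and text-output charges are left out to keep the sketch short.

```python
# USD per 1M tokens, as quoted from the pricing page above (standard, uncached).
TEXT_INPUT_RATE = 5.00
IMAGE_INPUT_RATE = 8.00
IMAGE_OUTPUT_RATE = 32.00


def estimate_request_cost(
    text_tokens: int,
    image_input_tokens: int,
    image_output_tokens: int,
) -> float:
    """Estimate one request's cost in USD from its three token buckets."""
    total_micro_usd = (
        text_tokens * TEXT_INPUT_RATE
        + image_input_tokens * IMAGE_INPUT_RATE
        + image_output_tokens * IMAGE_OUTPUT_RATE
    )
    return total_micro_usd / 1_000_000


if __name__ == "__main__":
    # Pure generation: short prompt, one medium square output (1,056 tokens).
    print(f"generation: ${estimate_request_cost(200, 0, 1056):.4f}")  # $0.0348
    # Edit with two reference images at a HYPOTHETICAL 1,000 input tokens each.
    print(f"edit:       ${estimate_request_cost(200, 2000, 1056):.4f}")  # $0.0508
```

Under these assumed inputs, the edit request costs about 46% more than the generation request even though the output image is identical, which is the gap the per-image ladder does not show.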

There is also real user friction behind this caveat. In an OpenAI Developer Community thread about GPT Image 1.5 edit billing, a developer described how edit tests were billed but hard to break down cleanly in the dashboard. That does not mean OpenAI has hidden pricing. It means GPT Image 1.5 behaves like a multimodal model request, not like a flat-fee image vending machine.

That is why the right budgeting language should stay slightly conservative. For pure generation, the per-image ladder is the best starting estimate. For edit-heavy, reference-heavy, or high-fidelity jobs, treat the per-image ladder as the floor and monitor the full request mix in your own workload.

When GPT Image 1.5 Is Worth Paying For

The cleanest reason to choose GPT Image 1.5 is not that it is the newest model. It is that the newer lane can save money indirectly by lowering retries, cleanup, and edit drift.

That is easiest to see in brand and editing work. OpenAI's December 2025 launch post positioned GPT Image 1.5 as stronger than GPT Image 1 at image preservation and editing. If your team has to maintain composition, likeness, packaging details, or branded text across iterations, that is not a cosmetic upgrade. It is the difference between one usable pass and three or four regeneration cycles that erase the apparent price advantage of a cheaper lane.

The model is also easier to justify when text rendering matters. GPT Image 1.5 is now the better OpenAI choice for posters, packaging comps, ecommerce assets, social cards, and other outputs where text in the image needs to be legible enough to ship or at least usable enough to edit lightly. If the model saves you one extra correction round per asset, the visible price gap versus mini can disappear quickly.

This is why the best buyer question is not "is GPT Image 1.5 cheap?" It is "does GPT Image 1.5 reduce enough downstream work to be cost-effective?" For many marketing, ecommerce, and design workflows, the answer is yes.
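One way to make that question concrete is to compare cost per usable asset rather than cost per image. The sketch below is illustrative only: the attempt counts and the optional per-attempt review cost are assumptions you would replace with numbers from your own workflow, and the prices are the square medium figures quoted in this article.

```python
def cost_per_usable_asset(
    price_per_image: float,
    attempts: float,
    review_cost_per_attempt: float = 0.0,
) -> float:
    """Cost to reach one usable asset, given the average attempts it takes.

    review_cost_per_attempt is a hypothetical stand-in for human checking time.
    """
    return attempts * (price_per_image + review_cost_per_attempt)


def break_even_attempts(flagship_price: float, budget_price: float) -> float:
    """Attempts on the budget model that cost as much as ONE flagship attempt.

    Assumes the flagship succeeds on the first try and review cost is zero.
    """
    return flagship_price / budget_price


if __name__ == "__main__":
    flagship, mini = 0.034, 0.011  # square medium prices quoted above
    print(f"break-even: {break_even_attempts(flagship, mini):.2f} mini attempts")
    print(f"mini x3: ${cost_per_usable_asset(mini, 3):.3f}")
    print(f"mini x4: ${cost_per_usable_asset(mini, 4):.3f}")
```

At list prices alone, mini stays cheaper until it needs a little over three attempts per usable asset. Any nonzero review cost per attempt moves that break-even point lower, because every extra attempt also costs human time.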

The opposite case is just as important. If you are generating a high volume of low-stakes variants, doing internal prototyping, or running early prompt experiments, then GPT Image 1.5 may be the wrong default. That is where gpt-image-1-mini becomes more interesting than many ranking pages admit. Mini starts at $0.005 for square low, $0.011 for medium, and $0.036 for high. At scale, that difference is not cosmetic. It changes the budget conversation entirely.

If your broader question is OpenAI image pricing across the whole family rather than GPT Image 1.5 specifically, our guide to OpenAI image generation API pricing is the better next read because it covers mini, the alias, and legacy lanes in one place.

GPT Image 1.5 vs Mini vs chatgpt-image-latest vs GPT Image 1

The surface confusion around this keyword is real, and page one does a poor job of sorting it out.

| Surface | Current role | Square start price | Use it when | Do not confuse it with |
|---|---|---|---|---|
| GPT Image 1.5 | Current flagship image model | $0.009 | You want the best current OpenAI default for editing, brand preservation, and stronger output quality | A generic ChatGPT subscription feature |
| gpt-image-1-mini | Current budget lane | $0.005 | Cost matters more than flagship quality | A smaller version of GPT Image 1.5 with identical tradeoffs |
| chatgpt-image-latest | Alias for the image snapshot currently used in ChatGPT | $0.009 | You intentionally want the current ChatGPT image snapshot in the API | A stable long-term model ID |
| GPT Image 1 | Previous OpenAI image model | $0.011 | You are maintaining a legacy workflow or comparing old pricing assumptions | The current recommended default |

Two details matter here.

The first is that chatgpt-image-latest is not currently a cheaper route. OpenAI's current alias page lists the same token rates, same per-image ladder, and same tier limits as GPT Image 1.5. The difference is not price. The difference is stability. chatgpt-image-latest is an alias tied to the image snapshot currently used in ChatGPT, while GPT Image 1.5 is the cleaner model ID to reason about when you want a stable production contract.

The second is that mini is not just a footnote. OpenAI's current mini page lists square low/medium/high pricing at $0.005, $0.011, and $0.036. That is why a budget-first routing rule beats a model-first routing rule. If you are trying to drive down cost on a large batch of internal or low-stakes outputs, the smarter question is often "why am I paying flagship rates at all?"
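A budget-first routing rule can be written down in a few lines. The sketch below is one possible encoding of the guidance in this section, not an official recommendation: the function and its flags are hypothetical, and the flagship model ID string `gpt-image-1.5` is an assumption, so confirm the exact ID on the model page before using it.

```python
# Model IDs: the mini and alias names appear in this article;
# "gpt-image-1.5" is an ASSUMED ID string for GPT Image 1.5.
FLAGSHIP = "gpt-image-1.5"
BUDGET = "gpt-image-1-mini"
CHATGPT_ALIAS = "chatgpt-image-latest"


def pick_model(
    needs_editing_or_brand_fidelity: bool,
    wants_chatgpt_snapshot: bool = False,
) -> str:
    """Start from the cheapest lane and upgrade only when a need justifies it."""
    if wants_chatgpt_snapshot:
        # Same price as the flagship, but the alias can change underneath you.
        return CHATGPT_ALIAS
    if needs_editing_or_brand_fidelity:
        return FLAGSHIP
    # Bulk generation, prototypes, and cheap iteration default to mini.
    return BUDGET


if __name__ == "__main__":
    print(pick_model(needs_editing_or_brand_fidelity=False))
    print(pick_model(needs_editing_or_brand_fidelity=True))
```

The design choice is that the function falls through to the budget lane, so paying flagship rates always requires an explicit reason.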

Legacy GPT Image 1 still matters, but mostly for migration logic. If that is your decision, read our GPT Image 1 vs GPT Image 1.5 comparison rather than treating the old model as the current buying baseline.

Batch Pricing, Rate Limits, and Monthly Budget Math

Batch is where the pricing discussion starts to matter operationally rather than just editorially.

On the current OpenAI pricing page, GPT Image 1.5 Batch rates are exactly half of standard rates: $4 input, $1 cached input, and $16 output per 1M image tokens, with text tokens also halved to $2.50, $0.63, and $5. If your workload can run asynchronously, that is the cleanest official lever for cost reduction.

What does that mean in plain monthly math?

For output-only estimates, 1,000 square GPT Image 1.5 images at medium quality work out to about $34 in standard processing and roughly $17 in Batch. At 10,000 images, the same lane becomes about $340 in standard processing and about $170 in Batch before prompt-text or edit-path costs. High quality scales much faster: 1,000 square high-quality outputs are about $133, and 10,000 are about $1,330 before extra token costs.
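The monthly figures above follow from simple multiplication, sketched here for reuse with other volumes. The prices are the square per-image numbers quoted in this article, and the 50% Batch factor reflects the halved Batch rates described above; the function name is our own, and the estimate is output-only, so prompt-text and input-image tokens are excluded.

```python
# Square per-image output prices in USD for GPT Image 1.5, as quoted above.
PER_IMAGE_USD = {"low": 0.009, "medium": 0.034, "high": 0.133}

# Batch rates are listed at half of standard rates.
BATCH_FACTOR = 0.5


def monthly_output_cost(images: int, quality: str, batch: bool = False) -> float:
    """Output-only estimate in USD; excludes prompt and input-image tokens."""
    cost = images * PER_IMAGE_USD[quality]
    return cost * BATCH_FACTOR if batch else cost


if __name__ == "__main__":
    for volume in (1_000, 10_000):
        standard = monthly_output_cost(volume, "medium")
        batched = monthly_output_cost(volume, "medium", batch=True)
        print(f"{volume:>6} medium images: ${standard:,.0f} standard, ${batched:,.0f} Batch")
```

Treat the result as a floor for edit-heavy workloads, since the input-side tokens are not part of this calculation.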

This is exactly why a budget estimate should not stop at the visible per-image number. A team that generates ten images a day can live with imprecision. A team planning tens of thousands of outputs a month needs to know where Batch changes the budget and where edit-heavy requests break the easy math.

Throughput matters too. OpenAI's current GPT Image 1.5 model page lists the Free tier as not supported, then 5 images per minute (IPM) at Tier 1, 20 IPM at Tier 2, 50 IPM at Tier 3, 150 IPM at Tier 4, and 250 IPM at Tier 5. Those are not exotic details. They change whether the official API is practical for your current workload. A cheap per-image lane with the wrong throughput for your launch schedule is still the wrong answer.
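To see how the tier limits translate into wall-clock time, a rough lower bound can be computed from the IPM figures above. This sketch assumes you sustain the full rate limit with no retries or failures, which real workloads rarely achieve, so read the output as a best case. The helper name is our own.

```python
# Images-per-minute limits by usage tier, as quoted from the model page above.
IPM_BY_TIER = {1: 5, 2: 20, 3: 50, 4: 150, 5: 250}


def minutes_to_generate(images: int, tier: int) -> float:
    """Best-case minutes to generate `images` at the tier's full IPM limit."""
    return images / IPM_BY_TIER[tier]


if __name__ == "__main__":
    job_size = 10_000
    for tier in IPM_BY_TIER:
        minutes = minutes_to_generate(job_size, tier)
        print(f"Tier {tier}: {minutes:,.0f} min ({minutes / 60:.1f} h)")
```

For a 10,000-image job, the best case ranges from 2,000 minutes (about 33 hours) at Tier 1 down to 40 minutes at Tier 5, which is the kind of spread that decides whether a launch schedule is feasible.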

This is also where some users leave the official pricing question and start looking for alternative routing. That is reasonable. It just needs to be phrased correctly. A gateway or reseller may still be the better commercial choice for a specific team, but that is a separate decision from the question "what does OpenAI officially charge for GPT Image 1.5?"

Why Search Results Disagree About GPT Image 1.5 Pricing

The disagreement across search results is real, but it is not random.

One cluster of results is quoting official OpenAI pricing. Those are the pages you should trust for token rates, per-image ladder values, launch timing, and tier limits. Another cluster is quoting gateway credits, bundled subscriptions, or reseller plans. Those pages may still be useful if you are shopping for alternative access, but they are answering a different question.

That is why page one feels messy. A result like PoYo can show a simple "$0.01 per image"-style pitch because it is selling its own route to the model. A result like gptimage15.org/pricing can rank on exact-match relevance even though the page is mostly a plan grid. And a guide-style article can mention the correct official prices while still centering benchmarks, marketing use cases, or alternative tools rather than the full invoice math readers actually need.

The official OpenAI answer is not hard to find. It is just fragmented across the GPT Image 1.5 model page, the pricing page, and the image generation guide. That fragmentation creates room for commercial exact-match pages to intercept the query with a faster but less precise answer.

The practical rule is simple:

If you need official OpenAI pricing, trust the model page, the pricing page, and the image guide.

If you need alternative access pricing, treat gateway and reseller pages as separate products rather than as contradictory evidence about OpenAI's own billing.

That distinction sounds obvious once stated, but it is exactly what most ranking pages fail to make explicit.

If your next problem is not pricing but alias stability, our guide to ChatGPT Image Latest vs GPT Image 1.5 is the better follow-up because that decision is about production routing, not just cost.

FAQ

What is the current official GPT Image 1.5 price?

As checked on March 23, 2026, OpenAI lists GPT Image 1.5 at $0.009, $0.034, and $0.133 for 1024x1024 low, medium, and high outputs.

Is chatgpt-image-latest cheaper than GPT Image 1.5?

No. The current official alias page shows the same token rates and the same per-image ladder as GPT Image 1.5. The difference is alias behavior, not price.

What is the cheapest current OpenAI image model?

gpt-image-1-mini is the cheapest current OpenAI lane, starting at $0.005 for a square low-quality output.

Does GPT Image 1.5 pricing include edits and reference images?

Not completely. The visible per-image ladder is the clean output-image shortcut. Prompt text and input-image tokens still affect the total request cost, especially for edit-heavy or high-fidelity workflows.

Does Batch really matter for GPT Image 1.5?

Yes. On the current OpenAI pricing page, Batch halves GPT Image 1.5 text and image token rates. For large asynchronous jobs, that can materially change monthly cost.

Is there a free tier for GPT Image 1.5 API usage?

No. The current official GPT Image 1.5 model page lists the Free tier as not supported.