As checked on March 26, 2026, the cheapest credible alternative to the OpenAI image generation API is not automatically another provider. If price is your only problem, stay with OpenAI and move to gpt-image-1-mini, which OpenAI currently prices at $0.005, $0.011, and $0.036 for low, medium, and high 1024x1024 outputs. If you mean "cheaper than GPT Image 1.5 specifically," then Imagen 4 Fast at $0.02 per image is the clearest mainstream hosted alternative, while FLUX.1 Kontext and FLUX.2 dev become better answers when edit-heavy work or local experimentation is what is really driving your cost.

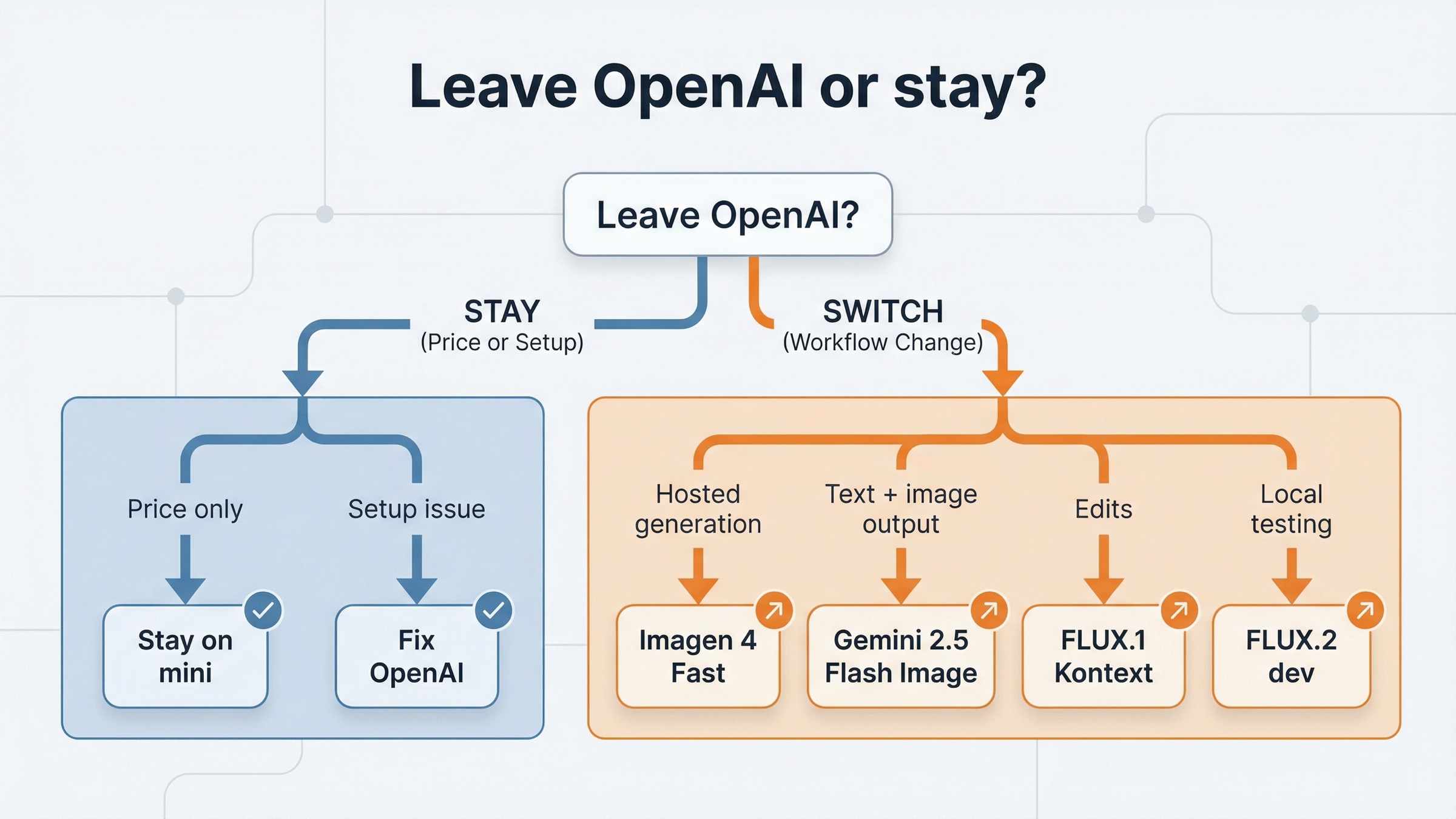

That distinction is what most ranking pages still fail to explain. They treat every cheaper-alternative search like a broad market roundup, but the reader is usually trying to solve a narrower budgeting problem. Do I really need to leave OpenAI, or am I only paying for the wrong OpenAI lane? If I do leave, am I replacing one-shot image generation, a multimodal model call, an edit-heavy design loop, or just an expensive experimentation habit?

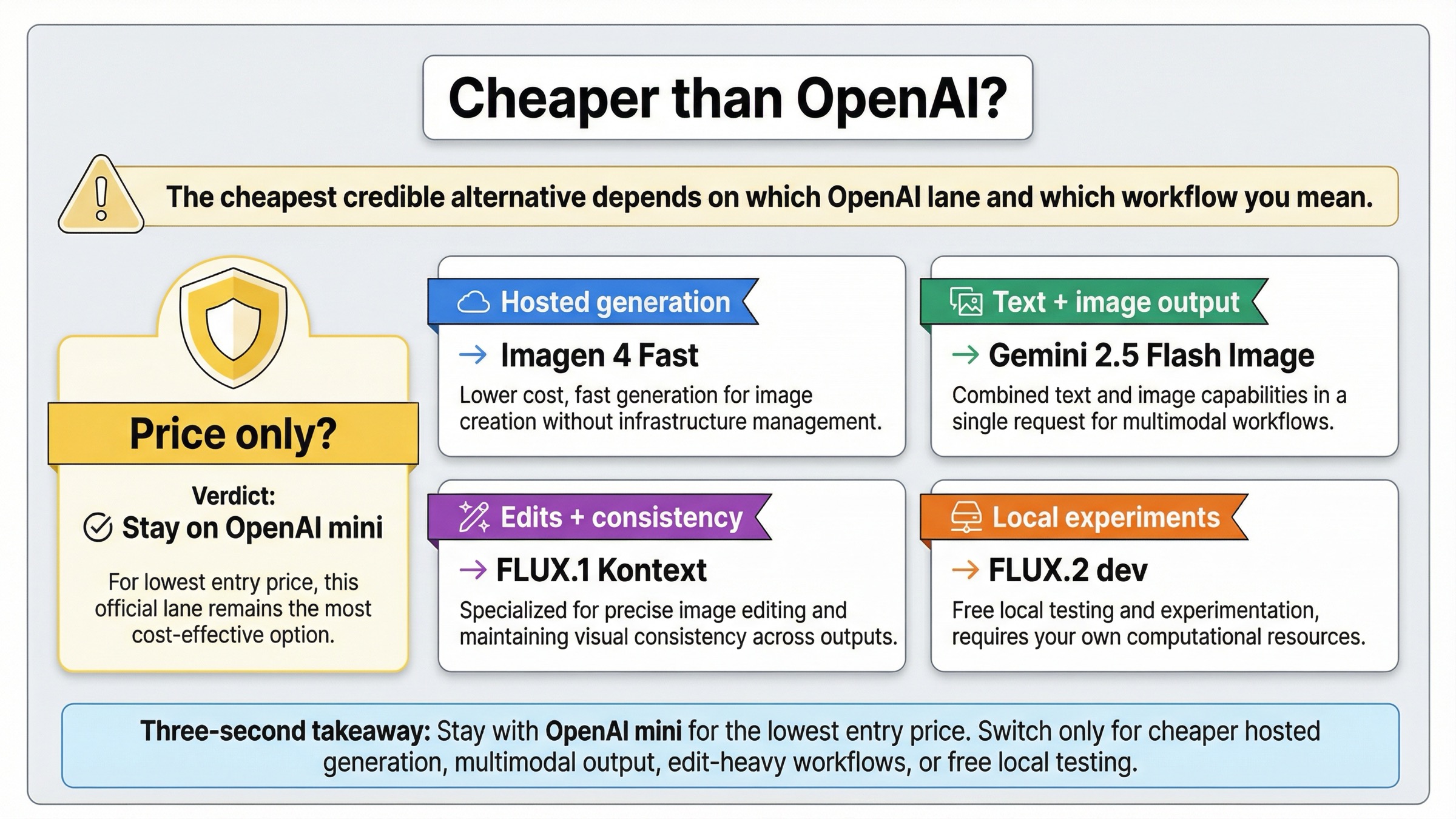

That is why this page does not start with a top-10 vendor catalog. The useful answer is a switch rule. Stay on OpenAI mini when raw entry price is the whole question. Switch to Imagen 4 Fast when you want a cheaper hosted generation lane than GPT Image 1.5. Switch to Gemini 2.5 Flash Image when you need text and image output in one model call. Switch to FLUX.1 Kontext when OpenAI becomes expensive because you keep revising the same image. Switch to FLUX.2 dev when you want a free local non-commercial path before another hosted commitment.
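The switch rule is mechanical enough to write down as a function. This is a minimal Python sketch, not a definitive policy: the `Workload` flags and the `pick_lane` name are hypothetical, and the order in which overlapping conditions are checked is my own assumption, since the rule itself does not rank them.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical flags describing what is actually driving image spend."""
    price_only: bool = False            # raw entry price is the whole question
    needs_text_and_image: bool = False  # one call must return text and images
    edit_heavy: bool = False            # spend comes from revising the same image
    local_noncommercial: bool = False   # free local, non-commercial testing first
    vs_gpt_image_15: bool = False       # comparing specifically against GPT Image 1.5


def pick_lane(w: Workload) -> str:
    """Return the lane the switch rule points to; checks run top to bottom."""
    if w.price_only:
        return "gpt-image-1-mini"
    if w.needs_text_and_image:
        return "Gemini 2.5 Flash Image"
    if w.edit_heavy:
        return "FLUX.1 Kontext"
    if w.local_noncommercial:
        return "FLUX.2 dev"
    if w.vs_gpt_image_15:
        return "Imagen 4 Fast"
    # Nothing else applies: benchmark OpenAI mini before migrating anywhere.
    return "gpt-image-1-mini"


print(pick_lane(Workload(edit_heavy=True)))  # FLUX.1 Kontext
```

The default branch falls back to mini on purpose, mirroring the advice to compare against OpenAI mini before switching for price alone.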

TL;DR

| If your real problem is... | Cheapest credible answer | Why it wins | Main tradeoff |
|---|---|---|---|
| You only want the lowest official entry price | Stay with gpt-image-1-mini | OpenAI still lists it at $0.005 for one low 1024x1024 image | You are giving up GPT Image 1.5 quality and some edit strength |
| You want a cheaper hosted generation lane than GPT Image 1.5 | Imagen 4 Fast | Google lists $0.02 per image, which undercuts GPT Image 1.5 medium and high | It is a different provider stack, not a drop-in OpenAI route |
| You need one model call to return both text and images | Gemini 2.5 Flash Image | It combines text-plus-image IO in one workflow | It is not the cleanest apples-to-apples per-image comparison |
| You keep paying for retries and revisions, not first drafts | FLUX.1 Kontext | Edit-heavy workflows can cost less in practice when the model preserves more of what already works | The posted per-image row is not the cheapest headline number |
| You want to test locally before another hosted bill | FLUX.2 dev | Black Forest Labs lists it as free for local development and non-commercial use | It is not a hosted commercial production default |

The shortest honest answer is this: do not switch for price alone until you compare against OpenAI mini first. If mini is too weak for the work, then compare GPT Image 1.5 against Imagen 4 Fast, Gemini 2.5 Flash Image, and FLUX based on the workflow you actually need.

Which option is actually cheaper than OpenAI right now?

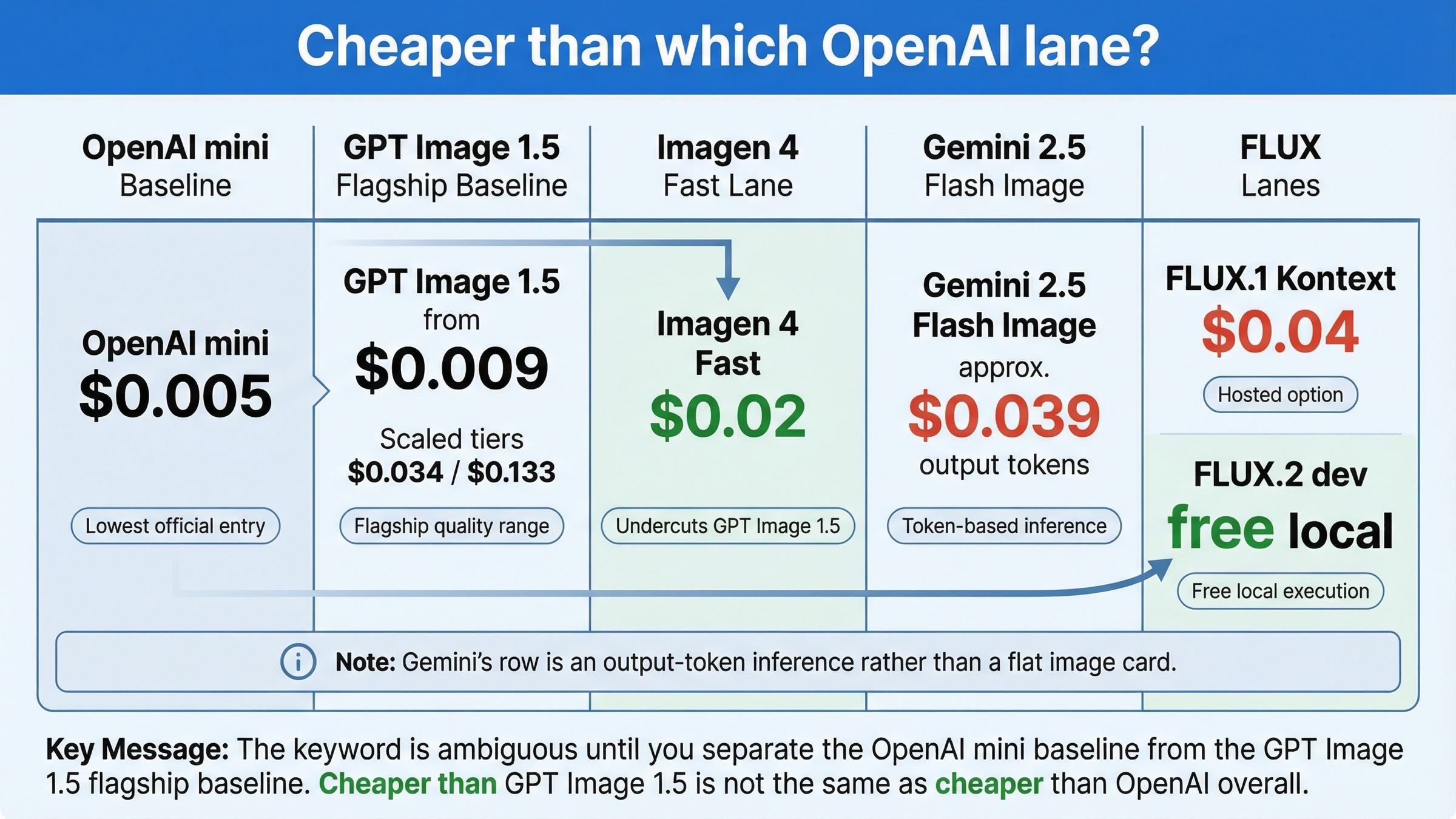

The phrase openai image generation api cheaper alternative hides the most important ambiguity in the whole query. Cheaper than which OpenAI lane?

If you compare against GPT Image 1.5, there are several credible cheaper answers. Google's current Vertex AI pricing page lists Imagen 4 Fast at $0.02 per image, which is clearly below GPT Image 1.5 medium at $0.034 and high at $0.133. Black Forest Labs' current pricing page lists FLUX.1 Kontext [pro] at $0.04 per image, which is not cheaper than GPT Image 1.5 medium, but can still be cheaper in real edit-heavy workflows if it cuts revision count. BFL also lists FLUX.2 [dev] as free for local development and non-commercial use, which matters if your real goal is to prototype without another hosted bill.

If you compare against all current OpenAI image lanes, the answer changes. OpenAI's current gpt-image-1-mini model page still lists the cheapest official starting row in this comparison set: $0.005, $0.011, and $0.036 for low, medium, and high 1024x1024 outputs. That is why broad "best alternatives" pages are still misleading here. They compare competitors against GPT Image 1.5, then quietly let the reader forget that OpenAI already has a cheaper budget lane.

The table below is the simplest way to keep those surfaces straight.

| Option | Current price surface | Cheapest visible row | Best fit | What it does not solve well |
|---|---|---|---|---|
| gpt-image-1-mini | OpenAI official image model | $0.005 | Lowest-cost official OpenAI generation | Premium output quality or higher-end editing |
| GPT Image 1.5 | OpenAI flagship image model | $0.009 | Better output quality, stronger text rendering, higher-confidence edits | Budget-first generation |
| Imagen 4 Fast | Google hosted image-generation model | $0.02 | Cheaper hosted generation than GPT Image 1.5 | Undercutting OpenAI mini on price |
| Gemini 2.5 Flash Image | Token-priced multimodal model | About $0.039 per image (inferred from output-image tokens; excludes input tokens) | One model call that can reason, answer, and render | Clean one-number per-image planning |
| FLUX.1 Kontext [pro] | BFL edit-focused hosted model | $0.04 | Edit-heavy workflows, character consistency, text changes | Lowest headline price |
| FLUX.2 dev | Local non-commercial model | Free | Local experimentation | Hosted commercial production |

That Gemini row needs one note. Google's docs do not publish Gemini 2.5 Flash Image as a flat per-image card the way they do for Imagen. The Gemini 2.5 Flash Image model page says one generated image consumes 1290 tokens, and Vertex pricing lists $30 per 1M image-output tokens. That implies roughly $0.039 in output-image token cost per image before input tokens. That is an inference from the official numbers, not a separate flat-fee price card.
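The inference is one line of arithmetic. Here it is as a small Python sketch that uses only the two official numbers above; the constant and function names are mine, and the result deliberately leaves out input and text tokens.

```python
TOKENS_PER_IMAGE = 1290                     # per the Gemini 2.5 Flash Image model page
USD_PER_MILLION_IMAGE_OUTPUT_TOKENS = 30.0  # Vertex AI pricing for image-output tokens


def gemini_output_image_cost(images: int = 1) -> float:
    """Output-image token cost only; input and text tokens are not included."""
    return images * TOKENS_PER_IMAGE * USD_PER_MILLION_IMAGE_OUTPUT_TOKENS / 1_000_000


print(f"${gemini_output_image_cost():.4f} per image")  # $0.0387 per image
```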

So the correct cost question is not "who is cheapest?" It is "cheapest compared with which OpenAI lane, for which workflow?"
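To make "cheaper than which lane?" concrete, here is a small Python sketch that hard-codes the rows quoted in this article and filters the alternatives against one OpenAI lane at a time. The dictionaries and the function name are illustrative, the Gemini figure is the inferred output-token floor, and I am assuming the $0.009 GPT Image 1.5 row is its low 1024x1024 tier. Refresh the numbers from the official pricing pages before relying on the output.

```python
# Official per-image rows quoted in this article (USD, 1024x1024 where applicable).
OPENAI_LANES = {
    ("gpt-image-1-mini", "low"): 0.005,
    ("gpt-image-1-mini", "medium"): 0.011,
    ("gpt-image-1-mini", "high"): 0.036,
    ("GPT Image 1.5", "low"): 0.009,  # assumed to be the low tier
    ("GPT Image 1.5", "medium"): 0.034,
    ("GPT Image 1.5", "high"): 0.133,
}

# name -> (cheapest visible row in USD, usable for hosted commercial work?)
ALTERNATIVES = {
    "Imagen 4 Fast": (0.02, True),
    "Gemini 2.5 Flash Image": (0.039, True),  # inferred, output-image tokens only
    "FLUX.1 Kontext [pro]": (0.04, True),
    "FLUX.2 dev": (0.0, False),               # free for local, non-commercial use
}


def cheaper_alternatives(model: str, quality: str, commercial: bool = True):
    """List non-OpenAI options whose cheapest row undercuts one OpenAI lane."""
    baseline = OPENAI_LANES[(model, quality)]
    hits = [
        (name, price)
        for name, (price, commercial_ok) in ALTERNATIVES.items()
        if price < baseline and (commercial_ok or not commercial)
    ]
    return sorted(hits, key=lambda item: item[1])


print(cheaper_alternatives("GPT Image 1.5", "medium"))  # [('Imagen 4 Fast', 0.02)]
print(cheaper_alternatives("gpt-image-1-mini", "low"))  # []
```

Against GPT Image 1.5 medium, only Imagen 4 Fast gets through; against mini low, nothing commercial does, which is the whole argument of this section in two calls.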

Stay with gpt-image-1-mini when price is your only problem

This is the part most alternative pages still dodge because it weakens the clean switch narrative. But it is the most useful advice for a large share of readers.

OpenAI's own image-generation guide says gpt-image-1-mini is the more cost-effective option when image quality is not the priority. That matters because a lot of developers are not actually upset with OpenAI as a provider. They are upset because they started on the flagship lane and only later realized the workload was mostly cheap ideation, disposable variants, low-stakes drafts, or internal graphics.

If that sounds like your case, switching vendors is often the wrong first move. The lower-friction move is to stay on OpenAI and benchmark mini against your actual prompts. The API surface is the same family, the billing relationship is already in place, and the cost floor is still lower than the mainstream hosted alternatives in this comparison.

This is also where the live search results keep overpromising. Broad alternatives pages turn "OpenAI feels expensive" into "leave OpenAI." But a budget problem does not automatically mean a provider problem. It can simply mean a lane-selection problem.

I would stay with OpenAI mini when:

- the workload is mostly one-shot generation rather than long edit loops

- you already have OpenAI billing and infrastructure in place

- you do not need a unified text-plus-image model call

- you are optimizing for lowest official entry price, not for a provider change

If the benchmark fails on quality or edit reliability, then move on. But skip that benchmark and you risk doing a bigger migration than the economics actually require.

If you want the broader current OpenAI price surface before making that choice, read our guides to OpenAI image generation API pricing and GPT Image 1.5 cost per image. Those pages cover the narrower budgeting math this article intentionally compresses.

Use Imagen 4 Fast when you want a cheaper hosted generation lane

If you already know the problem is GPT Image 1.5 specifically, not OpenAI mini, then Imagen 4 Fast is the cleanest mainstream cheaper alternative.

Google's current Vertex AI pricing page lists Imagen 4 Fast at $0.02 per image. Its Imagen 4 documentation also makes the product shape clear: this is a generation-first model line on Vertex AI, with support for up to 4 output images per prompt. That is important because it means you are not comparing a pure image-generation API against a chat-oriented model that happens to produce images. You are comparing one hosted image-generation lane against another.

That is why Imagen 4 Fast is the best answer when the reader says:

- "GPT Image 1.5 is too expensive for my generation volume"

- "I want a Google Cloud hosted image stack"

- "I do not need my image model to return text in the same call"

- "I want a more straightforward per-image planning number than a token-heavy multimodal route"

It is also why Imagen 4 Fast is not the answer for every cheaper-alternative search. It does not beat OpenAI mini on raw entry price. It is cheaper than GPT Image 1.5 for many generation-first workloads, not cheaper than every OpenAI option in the family.

This distinction matters a lot for teams doing medium-quality production work. If GPT Image 1.5 medium at $0.034 is your current default, Imagen 4 Fast at $0.02 can be a cleaner cost-down move than a full vendor-market survey. But if your current default should have been OpenAI mini all along, Imagen is not the first optimization.
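To see the size of that cost-down move, here is a Python sketch comparing both exits from GPT Image 1.5 medium at an illustrative 100,000 images a month. The volume is hypothetical; the per-image prices are the ones quoted in this article. It deliberately ignores quality differences, which is exactly what a benchmark against your own prompts has to settle.

```python
GPT_IMAGE_15_MEDIUM = 0.034  # USD per 1024x1024 image
IMAGEN_4_FAST = 0.02         # USD per image
MINI_MEDIUM = 0.011          # gpt-image-1-mini, medium, 1024x1024


def monthly_savings(images_per_month: int, current: float, candidate: float) -> float:
    """Dollars saved per month by moving the same volume to a cheaper lane."""
    return round(images_per_month * (current - candidate), 2)


volume = 100_000  # hypothetical monthly volume
print(monthly_savings(volume, GPT_IMAGE_15_MEDIUM, IMAGEN_4_FAST))  # 1400.0
print(monthly_savings(volume, GPT_IMAGE_15_MEDIUM, MINI_MEDIUM))    # 2300.0
```

If mini medium passes your quality bar, it saves more than Imagen without a provider change, which is why Imagen is not the first optimization in that case.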

So the operator rule is simple. Use Imagen 4 Fast when the real comparison is against GPT Image 1.5, not when the real comparison is against OpenAI mini.

Use Gemini 2.5 Flash Image when one model call needs text and images

Some readers search for a cheaper alternative when the real issue is not the image price row. It is the surrounding orchestration cost.

That is the strongest case for Gemini 2.5 Flash Image. Google's current model documentation says the model supports text and image inputs and returns text and image outputs. That makes it a different category from OpenAI's direct image endpoint or Imagen's generation-first path. You pick Gemini when you want one model interaction that can interpret, explain, revise, and then generate, not when you want the simplest possible per-image billing surface.

This is also why price comparisons around Gemini get sloppy fast. If you reduce Gemini 2.5 Flash Image to a pure image-generation price fight, you miss the main reason it can still be the cheaper choice. The savings can come from workflow compression rather than the cheapest single image row. If one Gemini call replaces a text-model step plus an image-model step plus some routing glue, the total workflow may be cheaper even if the flat image row is not the lowest on paper.
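One way to test that claim is to flip it into a break-even number: how much would the separate text step plus routing glue have to cost per request before a single Gemini call comes out ahead? A Python sketch, assuming the inferred $0.0387 output-image token cost; `gemini_extra_usd` is a hypothetical placeholder for the input and text tokens you would measure yourself.

```python
GEMINI_OUTPUT_IMAGE_USD = 1290 * 30 / 1_000_000  # ~$0.0387, inferred from token pricing


def break_even_overhead(separate_image_usd: float, gemini_extra_usd: float = 0.0) -> float:
    """Per-request cost the separate text step plus glue must exceed for one
    Gemini call to be cheaper. A negative result means Gemini already wins on
    the image line alone."""
    return GEMINI_OUTPUT_IMAGE_USD + gemini_extra_usd - separate_image_usd


print(round(break_even_overhead(0.034), 4))  # vs GPT Image 1.5 medium: 0.0047
print(round(break_even_overhead(0.133), 4))  # vs GPT Image 1.5 high: -0.0943
```

Against mini low at $0.005, the bar rises to roughly $0.034 per request, which is why Gemini is rarely the one-shot-generation answer.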

The opposite is also true. If your product only needs a clean generate-image endpoint, Gemini may be the wrong comparison and Imagen 4 Fast may be the clearer answer. That is why this article separates those two Google options instead of collapsing them into one Google bucket.

Choose Gemini 2.5 Flash Image when:

- the application needs text and image output in the same turn

- model-call simplification matters more than one low per-image number

- you are already designing around a multimodal model workflow

- the real cost problem is orchestration complexity, not only the image fee

If you only need one-shot image generation, this is usually not the cleanest cheaper path. In that case, stick with the direct OpenAI image family or move to Imagen.

Use FLUX.1 Kontext when edits are making OpenAI expensive in practice

This is the section most cheaper-alternative pages should have, but usually do not.

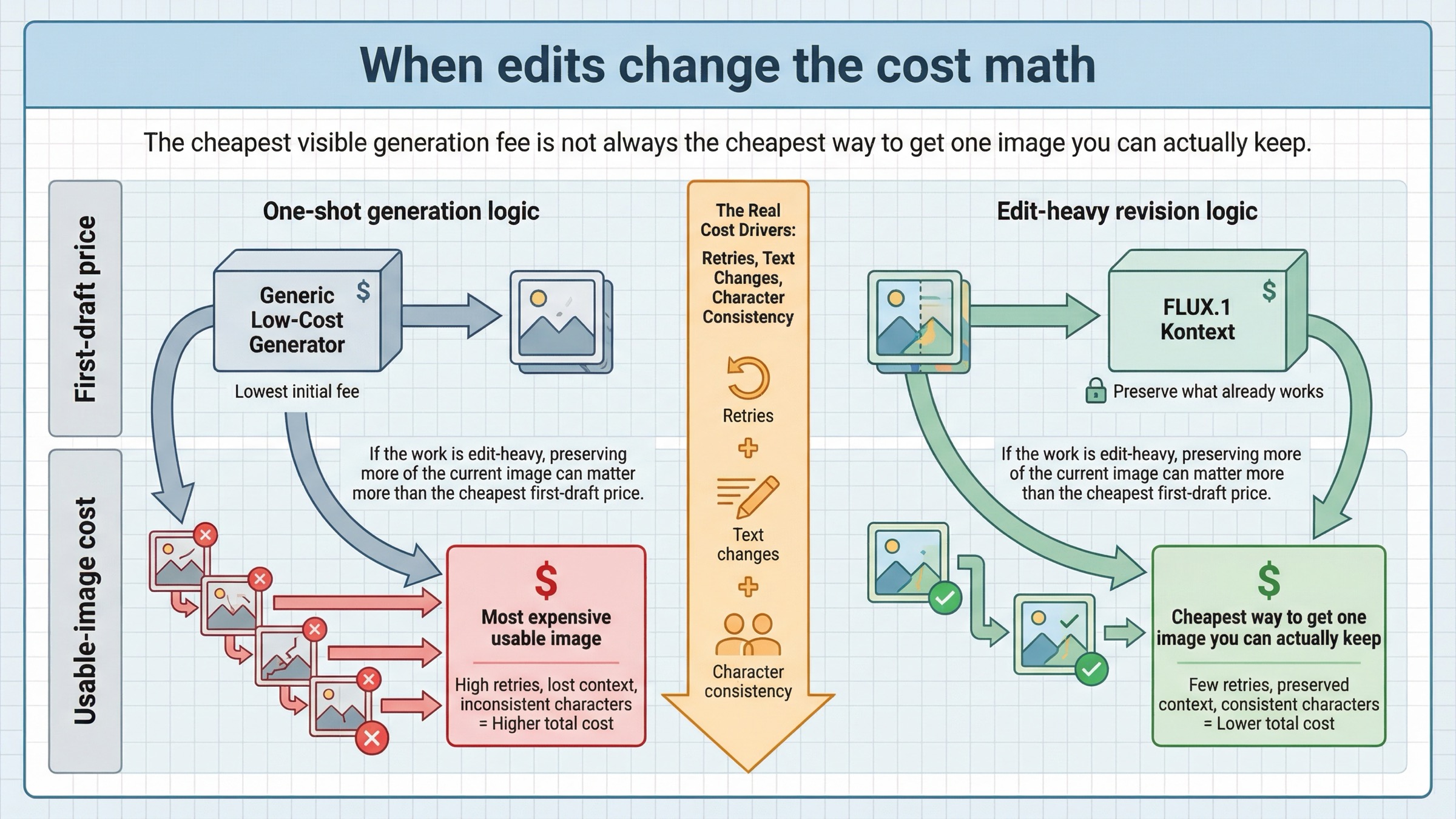

The real cost of image generation is often not the first output. It is the number of times you have to regenerate or re-edit before the image is actually usable. That is why FLUX.1 Kontext belongs in this article even though its posted $0.04 row is not the cheapest headline number in the whole field.

Black Forest Labs' Kontext overview positions the model around image editing, character consistency, text editing, and style transformation. That is a very different promise from "cheap image generation." It is really a promise about preserving what already works so you stop paying for avoidable restarts.

If your team keeps saying things like:

- "keep the character, but change the background"

- "keep the product angle, but rewrite the text"

- "keep the composition, but fix the typography"

- "keep the campaign style, but make five variations"

then the cost you are fighting is often iteration cost, not just generation cost. In that situation, a model that preserves more of the current image can be cheaper in practice than a model with a better-looking first-row price.

This is also why I would not describe Kontext as a universal OpenAI replacement. It is a better answer to a narrower pain. If the pain is one-shot generation cost, mini or Imagen may win. If the pain is repeated revision cycles, Kontext becomes much more compelling.

So the right question is not "is Kontext cheaper per image?" It is "does Kontext reduce the number of paid attempts required to get one image I can actually keep?"
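That question can be put into numbers with a simple expected-cost model. The Python sketch below assumes each paid attempt is independently keepable with some probability, so the expected number of attempts is 1 divided by that keep rate (a geometric model). Real edit loops are not truly independent, and the keep rates shown are hypothetical; measure them on your own revision history.

```python
def cost_per_kept_image(price_per_attempt: float, keep_rate: float) -> float:
    """Expected spend per usable image if each paid attempt is independently
    keepable with probability keep_rate (geometric model: 1 / keep_rate attempts)."""
    if not 0 < keep_rate <= 1:
        raise ValueError("keep_rate must be in (0, 1]")
    return price_per_attempt / keep_rate


# Keep rates below are hypothetical placeholders.
gpt_image_15_medium = cost_per_kept_image(0.034, keep_rate=0.25)  # about $0.136
kontext_pro = cost_per_kept_image(0.04, keep_rate=0.5)            # about $0.08
```

Under this model, Kontext's $0.04 row only pays off when its keep rate is more than about 18% higher than the baseline's (0.04 / 0.034 is roughly 1.18).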

Use FLUX.2 dev when you want free local experimentation first

Some readers are still too early in the decision to care about a production hosted bill. They just want to test whether the workflow is worth pursuing.

That is where FLUX.2 dev is the strongest alternative in this article. Black Forest Labs' current pricing page lists it as free for local development and non-commercial use. That makes it the cleanest official answer when the real question is not "which hosted API should I migrate to tomorrow?" but rather "how do I stop paying while I learn what this image feature actually needs?"

This matters more than many roundups admit. A large share of cheaper-alternative demand happens before the buyer has stabilized the real workload. They are still testing prompts, edit loops, asset pipelines, or output quality thresholds. In that stage, the most rational move may be to avoid another hosted spend entirely until the workflow is clearer.

That does not make FLUX.2 dev a hosted commercial replacement for OpenAI. It makes it the best bridge between expensive experimentation and a better-informed production choice. If the local tests prove the feature is worth shipping, you can then decide whether the production path should be OpenAI mini, Imagen 4 Fast, Gemini, a hosted FLUX lane, or something else.

So if your real objective is "stop paying while I learn," FLUX.2 dev is the best cheaper alternative on this whole page.

When the right answer is still to stay with OpenAI

A trustworthy alternatives page needs one section that says when not to switch.

OpenAI's current model availability article still ties gpt-image-1 and gpt-image-1-mini access to usage tiers and, in some cases, organization verification. The OpenAI community still shows how confusing that feels in practice. In one developer thread, users reported 429 errors even before a successful image was generated, with replies pointing back to free-tier versus Tier 1 status and organization verification. Another community thread shows how silent failures or missing output quickly get interpreted as a provider problem, even when the root cause may be transient load, route confusion, or access state.

That does not mean the frustration is fake. It means the cheapest alternative is sometimes not a product switch. It is fixing the setup and moving to the right OpenAI lane.

I would stay with OpenAI when:

- the issue is access, tier, or verification rather than model fit

- the workload is still mostly one-shot generation

- mini already satisfies the budget target

- the migration overhead would cost more than the price delta you are trying to save
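The last bullet is a simple payback calculation. A Python sketch, where the $5,000 migration cost is a hypothetical figure you would replace with your own estimate of engineering, testing, and re-prompting time:

```python
import math


def breakeven_images(migration_cost_usd: float, current_per_image: float, new_per_image: float):
    """Images you must generate on the new lane before the per-image saving
    repays a one-time migration cost. None means the switch never pays back."""
    saving = current_per_image - new_per_image
    if saving <= 0:
        return None
    return math.ceil(migration_cost_usd / saving)


print(breakeven_images(5_000, 0.034, 0.02))  # GPT Image 1.5 medium -> Imagen 4 Fast: 357143
print(breakeven_images(5_000, 0.011, 0.02))  # mini medium -> Imagen 4 Fast: None
```

If your realistic volume never reaches the break-even count, staying on OpenAI is the cheaper decision even when another provider's row looks lower.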

If the broader replacement question is still open after that, use our more general OpenAI image generation API alternative guide. If the issue is route choice rather than price, our OpenAI image API tutorial is the better next step.

FAQ

What is the cheapest OpenAI image generation API alternative right now?

If you mean the cheapest option overall, the answer is often OpenAI's own gpt-image-1-mini, which OpenAI currently lists at $0.005 for a low 1024x1024 image. If you mean the cheapest alternative to GPT Image 1.5 specifically, Imagen 4 Fast at $0.02 per image is the clearest mainstream hosted alternative.

Is Imagen 4 Fast cheaper than OpenAI?

It is cheaper than GPT Image 1.5 for many generation-first workloads, but not cheaper than gpt-image-1-mini on raw entry price.

Is Gemini 2.5 Flash Image actually cheaper?

Sometimes, but usually because it compresses workflow steps rather than because it publishes the cheapest flat image row. It is better treated as a multimodal workflow alternative than as a simple one-shot image-price replacement.

When is FLUX.1 Kontext the cheaper choice?

When repeated edits, text changes, or character-consistency work are the real cost center. In those cases, lower revision count can matter more than the lowest posted generation fee.

What if my OpenAI image API problem is really setup friction?

Then the cheapest move may be to stay with OpenAI, fix tier or verification state, and benchmark gpt-image-1-mini before you migrate the whole stack.