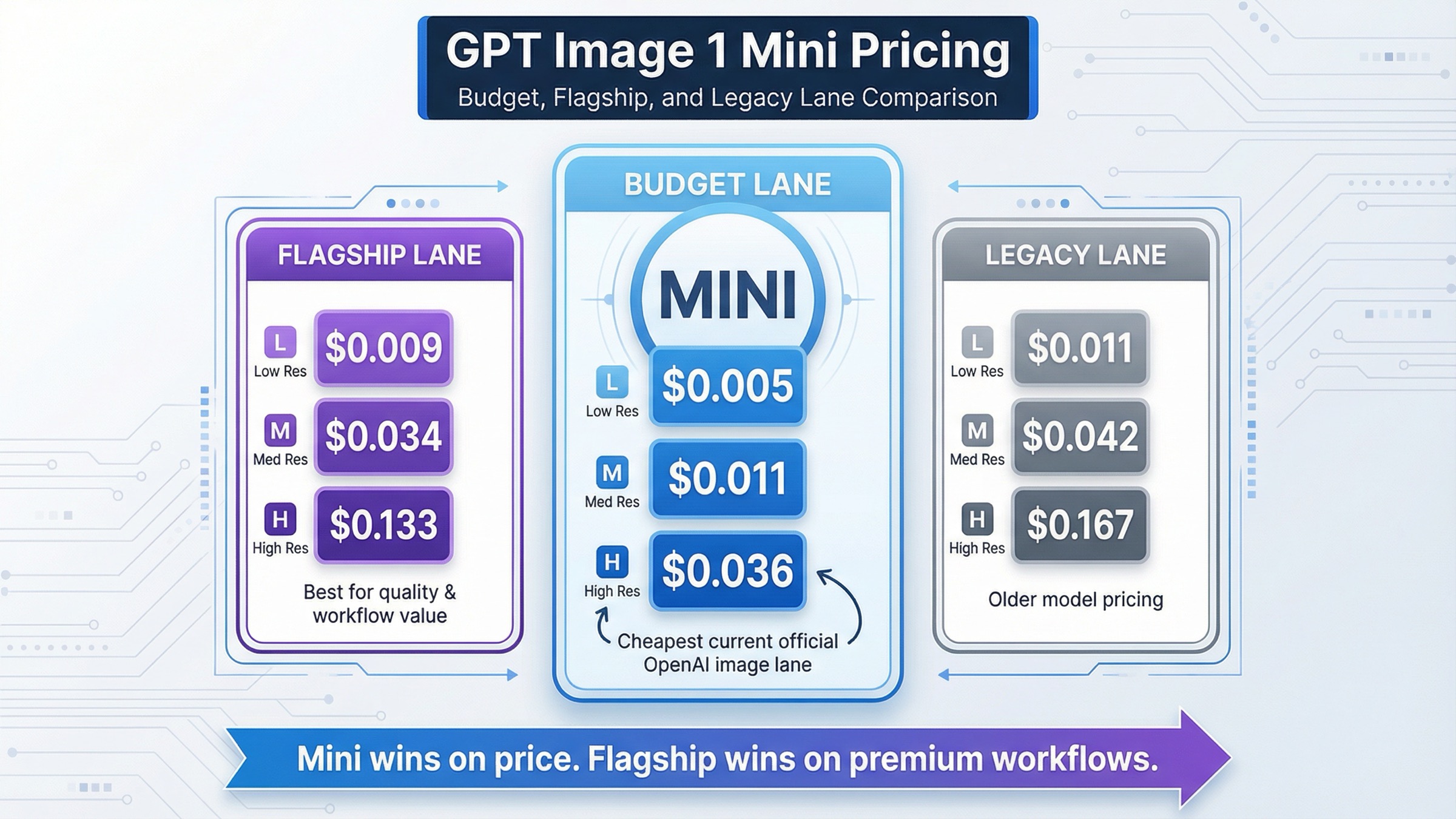

As of March 27, 2026, gpt-image-1-mini costs $0.005, $0.011, and $0.036 per image at 1024x1024 for low, medium, and high quality, and $0.006, $0.015, and $0.052 per image at the two larger sizes (1024x1536 portrait and 1536x1024 landscape). If your main question is "what is the cheapest current official OpenAI image lane for generation-heavy workloads?", mini is the answer.

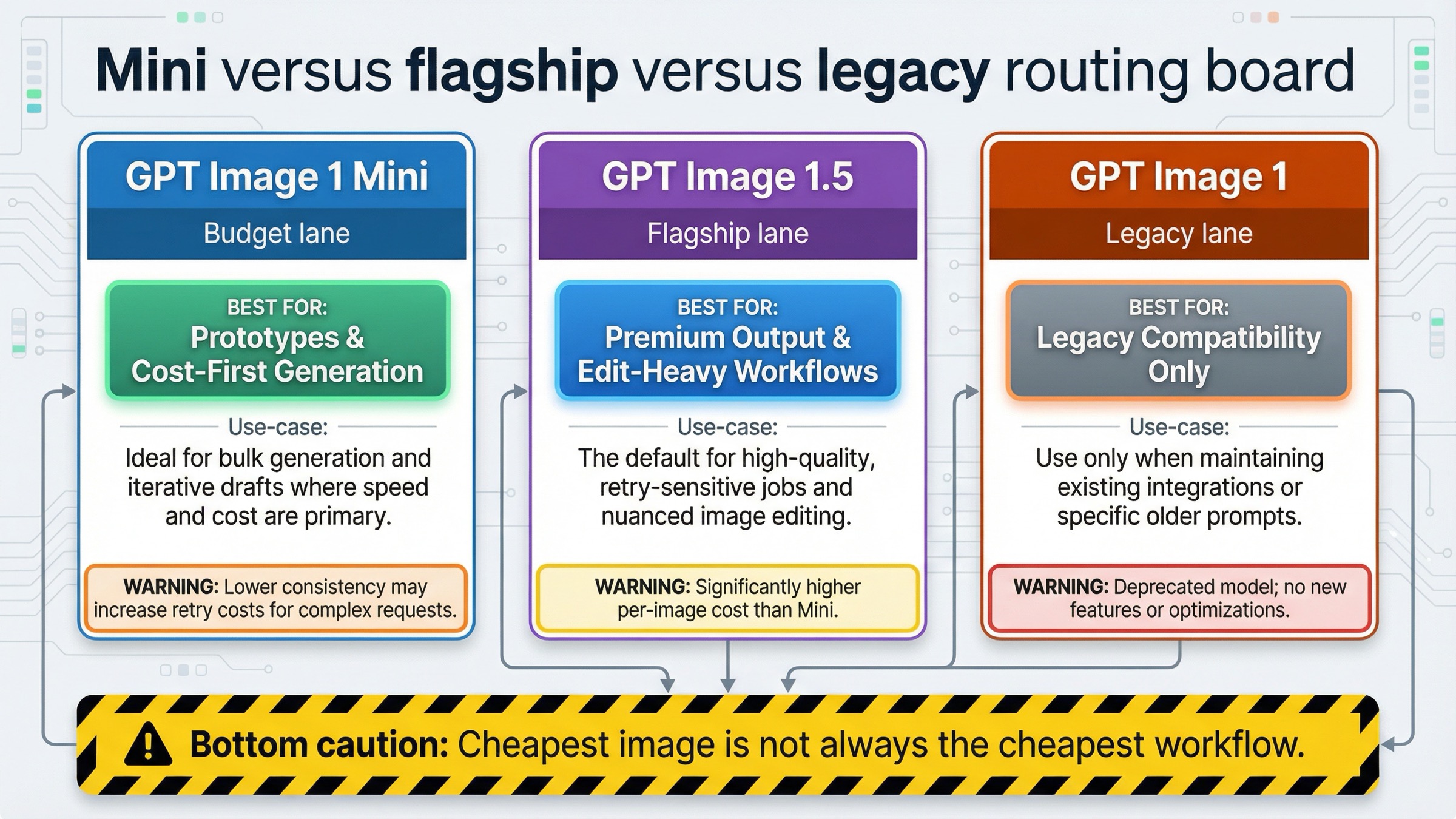

That still does not settle the real buying decision. OpenAI's current model catalog positions GPT Image 1.5 as the flagship image model, GPT Image 1 as the previous lane, and gpt-image-1-mini as a cost-efficient version of GPT Image 1. So the useful question is not only "what does mini cost?" It is when mini is the right default, and when the low sticker price becomes the wrong optimization.

The short operator rule is this: start with mini when cost per output image is the first constraint and the workload is mostly straightforward generation. If your workflow depends on stronger prompt adherence, edit-heavy work, or higher-value outputs where retries cost more than the model premium, benchmark GPT Image 1.5 before you optimize for the cheapest lane.

TL;DR

- Current mini square pricing: $0.005 low, $0.011 medium, $0.036 high

- Current mini portrait and landscape pricing: $0.006 low, $0.015 medium, $0.052 high

- Current mini token rates, all per 1M tokens: $2.00 text input, $0.20 cached text input, $2.50 image input, $0.25 cached image input, and $8.00 image output

- Current access floor: Free is not supported; the mini model page lists Tier 1 at 100,000 TPM and 5 IPM

- Best fit: budget-first prototyping, bulk generation, and generation-heavy internal workloads

- When not to assume mini wins: when retry cost, editing reliability, or premium output value matters more than the lowest per-image price

GPT Image 1 Mini pricing at a glance

The cleanest way to read mini's pricing is to separate the visible per-image ladder from the underlying token billing. The per-image ladder is what most people want first, and OpenAI's current gpt-image-1-mini model page makes that part unusually clear.

| Quality | 1024x1024 | 1024x1536 | 1536x1024 |
|---|---|---|---|
| Low | $0.005 | $0.006 | $0.006 |
| Medium | $0.011 | $0.015 | $0.015 |
| High | $0.036 | $0.052 | $0.052 |

Underneath that image-generation table, OpenAI currently lists these token rates for gpt-image-1-mini:

- Text input: $2.00 per 1M tokens

- Cached text input: $0.20 per 1M tokens

- Image input: $2.50 per 1M tokens

- Cached image input: $0.25 per 1M tokens

- Image output: $8.00 per 1M tokens

That split matters because many first-page search results stop at the output-image number. For a short prompt and a simple generation request, that shortcut is usually directionally fine. But the token table is the reason mini pricing is really a budget-routing question, not just a one-row lookup. OpenAI's broader API pricing page matters here too because it is where the current Batch discount lives, and that discount can materially change the answer for offline generation workloads.
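
To make the token table concrete, here is a minimal Python sketch that prices the token side of a request from the rates listed above. The token counts in the example are hypothetical: this article does not say how many tokens a given prompt or reference image consumes, so read real counts from your own usage data.

```python
from decimal import Decimal

# USD per 1M tokens for gpt-image-1-mini, copied from the list above.
MINI_TOKEN_RATES = {
    "text_input": Decimal("2.00"),
    "cached_text_input": Decimal("0.20"),
    "image_input": Decimal("2.50"),
    "cached_image_input": Decimal("0.25"),
    "image_output": Decimal("8.00"),
}


def token_cost(usage: dict[str, int]) -> Decimal:
    """Return the USD cost of token counts keyed like MINI_TOKEN_RATES."""
    total = Decimal("0")
    for kind, tokens in usage.items():
        total += MINI_TOKEN_RATES[kind] * tokens / Decimal(1_000_000)
    return total


# Hypothetical edit request: 200 prompt tokens plus 1,000 reference-image tokens.
example = token_cost({"text_input": 200, "image_input": 1_000})
print(f"input-side token cost: ${example}")
```

Using `Decimal` instead of floats keeps sub-cent amounts exact, which matters once these numbers are multiplied across thousands of requests.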

If your real need is broader OpenAI image budget planning across mini, GPT Image 1.5, chatgpt-image-latest, and legacy GPT Image 1, the more general follow-up is OpenAI image generation API pricing. This article is deliberately narrower: it is about mini itself and the decision around it.

When mini is the right choice vs GPT Image 1.5 and GPT Image 1

The most useful thing this page can do is turn raw prices into a model choice. Current OpenAI pricing makes mini the cheapest official image lane, but the cheapest lane is not always the cheapest workflow once you factor in retries, output value, and editing needs.

| Model | Current role | 1024x1024 low | 1024x1024 medium | 1024x1024 high | Best default when |
|---|---|---|---|---|---|
| gpt-image-1-mini | Current budget lane | $0.005 | $0.011 | $0.036 | Cost is the first constraint and the workload is generation-heavy |
| GPT Image 1.5 | Current flagship lane | $0.009 | $0.034 | $0.133 | Output quality, editing value, and prompt adherence matter more than the lowest price |
| GPT Image 1 | Previous image lane | $0.011 | $0.042 | $0.167 | Legacy compatibility only, not new default builds |

Mini is the right default in three common situations.

First, it is the right place to start when you are testing whether the job really needs the flagship. If you are generating large batches of internal concepts, prototypes, or low-risk marketing variations, mini gives you the lowest official OpenAI price floor and lets you find out quickly whether paying more would change the outcome enough to matter.

Second, mini is the right place to start when you are building volume-first workflows. The difference between $0.011 and $0.034 at square medium looks small for ten images and very large for ten thousand. If your workload is dominated by generation volume rather than by expensive failures, mini can stay the rational default far longer than first-page search results usually suggest.

Third, mini is the right place to start when you want the cheapest current official OpenAI image route without dropping back into legacy GPT Image 1 assumptions. That last part matters. OpenAI's current catalog does not position GPT Image 1 as the modern budget lane. It positions mini that way.

GPT Image 1.5 earns its premium in a different class of workflow. If the real job is client-facing production output, harder prompt-following, or edit-heavy work where one failed run is more expensive than the per-image difference, mini's savings can disappear fast. In those cases, the safer rule is not "always pay for the flagship." The safer rule is to benchmark mini first, then keep the flagship if the quality delta reduces retries enough to win back the cost.
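
One way to put a number on "reduces retries enough" is a break-even ratio. The sketch below uses only the square per-image prices from the comparison table and ignores input-token costs, which is a simplifying assumption. The function name is illustrative.

```python
from fractions import Fraction

# Square (1024x1024) per-image prices in USD from the comparison table above.
MINI = {"low": Fraction("0.005"), "medium": Fraction("0.011"), "high": Fraction("0.036")}
FLAGSHIP = {"low": Fraction("0.009"), "medium": Fraction("0.034"), "high": Fraction("0.133")}


def break_even_attempt_ratio(quality: str) -> Fraction:
    """How many mini attempts cost the same as one flagship attempt.

    If mini needs more than this many attempts per accepted image for every
    flagship attempt, the flagship is cheaper on list price alone.
    """
    return FLAGSHIP[quality] / MINI[quality]


for quality in ("low", "medium", "high"):
    ratio = break_even_attempt_ratio(quality)
    print(f"{quality}: {float(ratio):.2f}x")
```

At square medium the ratio is a little over 3, so on list price alone mini can afford roughly three attempts for every flagship attempt before its advantage is gone. Review time and manual cleanup are not counted here, and they would lower that threshold.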

GPT Image 1 mainly matters because old screenshots and old articles still frame it as the image baseline. That is exactly why pricing search results can feel messier than they should. Current OpenAI documentation already labels GPT Image 1 as the previous image generation model, so it should be treated as a legacy reference, not as the model you compare mini against by default for new builds.

That is also why the first page of search results still feels more fragmented than it should. Some pages rank on the strength of an exact price table, some rank on OpenAI's own model-family documentation, and some still leak older GPT Image 1 assumptions into what should really be a current mini decision. A good mini-pricing article needs to clean up that market noise instead of inheriting it.

If your real confusion is not mini versus the flagship but rather stable model IDs versus OpenAI's ChatGPT alias, the right next read is chatgpt-image-latest vs gpt-image-1.5.

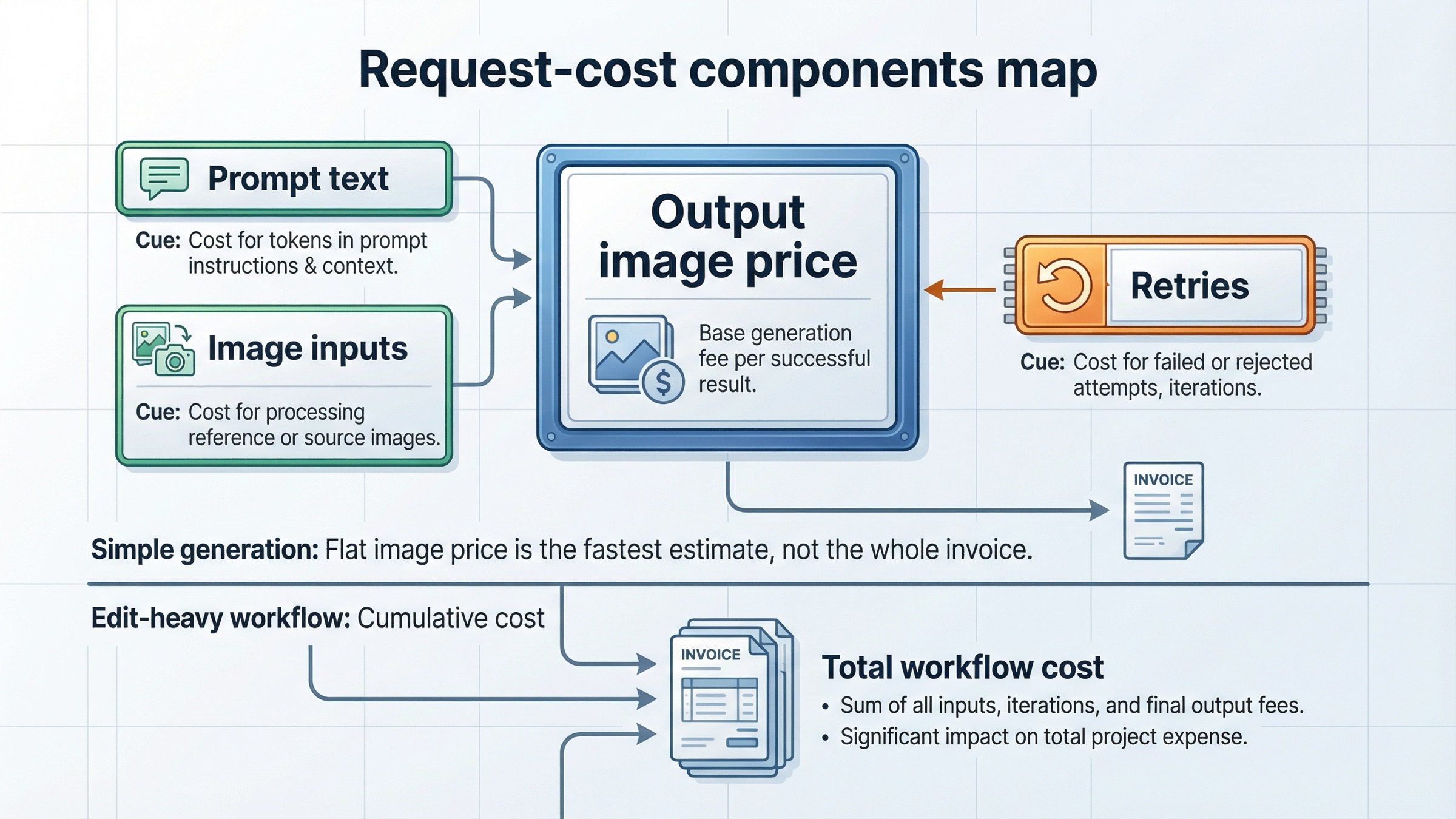

What you actually get billed for beyond the flat image price

This is where many quick pricing pages quietly stop being useful.

The per-image ladder above is the headline answer, and it is the right first answer for this query. But the model page also shows text-token pricing and image-token pricing, which tells you something important immediately: not every GPT Image invoice is just the flat output-image number.

In practical terms, your request cost has a few moving parts:

- prompt text

- image inputs, if you are editing or using reference images

- generated image output

- how often you need to retry because the cheaper lane did not get you close enough

For a basic generation flow with short prompts, the output-image number is often the dominant part of the bill. That is why people searching this keyword keep gravitating toward a flat cost-per-image answer. But once your workflow starts leaning on edits, reference-heavy prompts, or higher-stakes output quality, the pure sticker-price comparison gets weaker.

That does not mean mini becomes a bad choice. It means the correct budgeting question changes from "what is the cheapest listed image?" to "what is the cheapest successful workflow?"

This is the exact trap weak pricing pages create. They compare $0.011 and $0.034 and stop there. But if mini needs more retries, or if your workflow has enough edit and reference complexity that the premium lane cuts rework, the cheaper listed price does not automatically become the cheaper production path.

The safest way to use the current official pricing is this:

- Use the per-image ladder for first-pass planning

- Use token pricing and workflow shape for edit-heavy or reference-heavy planning

- Use real retry rates for production budgeting
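
The three planning levels above can be sketched as one small function. This is a rough model, not OpenAI's billing formula. It assumes input-token cost simply adds to the per-image output price, and that each attempt is accepted independently at a fixed rate, so the expected number of attempts per accepted image is `1 / acceptance_rate`. The $0.0029 input cost and the 50% acceptance rate are hypothetical placeholders.

```python
from decimal import Decimal


def cost_per_accepted_image(
    output_price: Decimal,
    input_token_cost: Decimal = Decimal("0"),
    acceptance_rate: Decimal = Decimal("1"),
) -> Decimal:
    """Expected USD spend per image that is actually accepted."""
    if not (Decimal("0") < acceptance_rate <= Decimal("1")):
        raise ValueError("acceptance_rate must be in (0, 1]")
    return (output_price + input_token_cost) / acceptance_rate


mini_medium = Decimal("0.011")  # square medium price from the ladder

# 1. First-pass planning: the flat per-image price only.
first_pass = cost_per_accepted_image(mini_medium)

# 2. Edit-heavy planning: add a hypothetical input-token cost per request.
edit_heavy = cost_per_accepted_image(mini_medium, Decimal("0.0029"))

# 3. Production budgeting: also divide by a measured acceptance rate.
production = cost_per_accepted_image(mini_medium, Decimal("0.0029"), Decimal("0.5"))

print(first_pass, edit_heavy, production)
```

Replace the placeholders with measured values from your own logs before you trust the third number.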

If you are still deciding which API surface to use for direct generation or edits, OpenAI image API tutorial is the better operational companion. The model choice gets easier once the endpoint choice is already clear.

Rate limits, tier access, and Batch discounts

Mini's list price is only part of the answer because access and throughput shape whether that cheap price is usable at all.

On the current mini model page, OpenAI lists:

- Free: not supported

- Tier 1: 100,000 TPM and 5 IPM

- Tier 2: 250,000 TPM and 20 IPM

- Tier 3: 800,000 TPM and 50 IPM

- Tier 4: 3,000,000 TPM and 150 IPM

- Tier 5: 8,000,000 TPM and 250 IPM

OpenAI's current help-center article on model availability adds the other key caveat: GPT-image-1 and GPT-image-1-mini are available to API users on tiers 1 through 5, with some access subject to organization verification.

That creates a practical rule that many calculator-style pages skip: if you are still on a true free path, mini's low price is irrelevant because the model is not available there. And if your workflow is throughput-sensitive, the IPM table can matter as much as the per-image cost.
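
To see how the IPM column turns into wall-clock time, the sketch below reads IPM as images per minute and assumes the job sustains the full limit with no other bottleneck, which is the optimistic case. The function name is illustrative.

```python
import math

# IPM limits per usage tier, from the mini model page figures listed above.
TIER_IPM = {1: 5, 2: 20, 3: 50, 4: 150, 5: 250}


def minutes_to_generate(images: int, tier: int) -> int:
    """Lower bound on minutes needed to generate `images` at a tier's IPM limit."""
    return math.ceil(images / TIER_IPM[tier])


for tier in TIER_IPM:
    minutes = minutes_to_generate(10_000, tier)
    print(f"Tier {tier}: {minutes} min ({minutes / 60:.1f} h) for 10,000 images")
```

Under these assumptions, a 10,000-image job needs at least 2,000 minutes on Tier 1 but only 40 minutes on Tier 5, so the tier can decide whether a cheap job is also a practical one.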

Batch is the other caveat that can flip the answer for some teams. OpenAI's pricing page currently says the Batch API saves 50% on inputs and outputs. For non-interactive jobs, that halves the absolute price gap between lanes: as a planning shortcut, the $0.023 difference between mini and GPT Image 1.5 at square medium shrinks to about $0.0115 per image. Both lanes get meaningfully cheaper for offline workloads, but the discount matters especially when a team was only rejecting the flagship because the standard-price gap looked too wide.

The correct way to read that is not "Batch makes mini irrelevant." It is "Batch makes the model decision depend more on workflow shape than on sticker price alone." If your users need immediate image responses, Batch is the wrong answer no matter how attractive the discount looks. But if you are running overnight or asynchronous jobs, the Batch discount belongs in your first budget spreadsheet, not in an afterthought.
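
As a planning shortcut, the Batch effect can be sketched by applying the 50% figure uniformly to the per-image ladder. That uniform application is an assumption made for budgeting, so confirm the actual billed amounts against OpenAI's pricing page before you commit to a number.

```python
from decimal import Decimal

# Assumed planning multiplier based on the stated 50% Batch saving.
BATCH_MULTIPLIER = Decimal("0.5")


def job_cost(price_per_image: Decimal, images: int, batch: bool = False) -> Decimal:
    """USD cost of a generation job, optionally with the Batch discount applied."""
    multiplier = BATCH_MULTIPLIER if batch else Decimal("1")
    return price_per_image * images * multiplier


mini_medium = Decimal("0.011")      # square medium, gpt-image-1-mini
flagship_medium = Decimal("0.034")  # square medium, GPT Image 1.5

for label, price in (("mini", mini_medium), ("flagship", flagship_medium)):
    standard = job_cost(price, 10_000)
    batched = job_cost(price, 10_000, batch=True)
    print(f"{label}: ${standard:,.2f} standard, ${batched:,.2f} batch")
```

For 10,000 square medium images this gives $110 versus $340 at standard rates, and $55 versus $170 with Batch. The flagship through Batch ($170) still costs more than mini at standard rates ($110), but the gap is far smaller than the standard-price comparison suggests.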

If access is your blocker rather than pricing, the better next step is OpenAI image generation API verification.

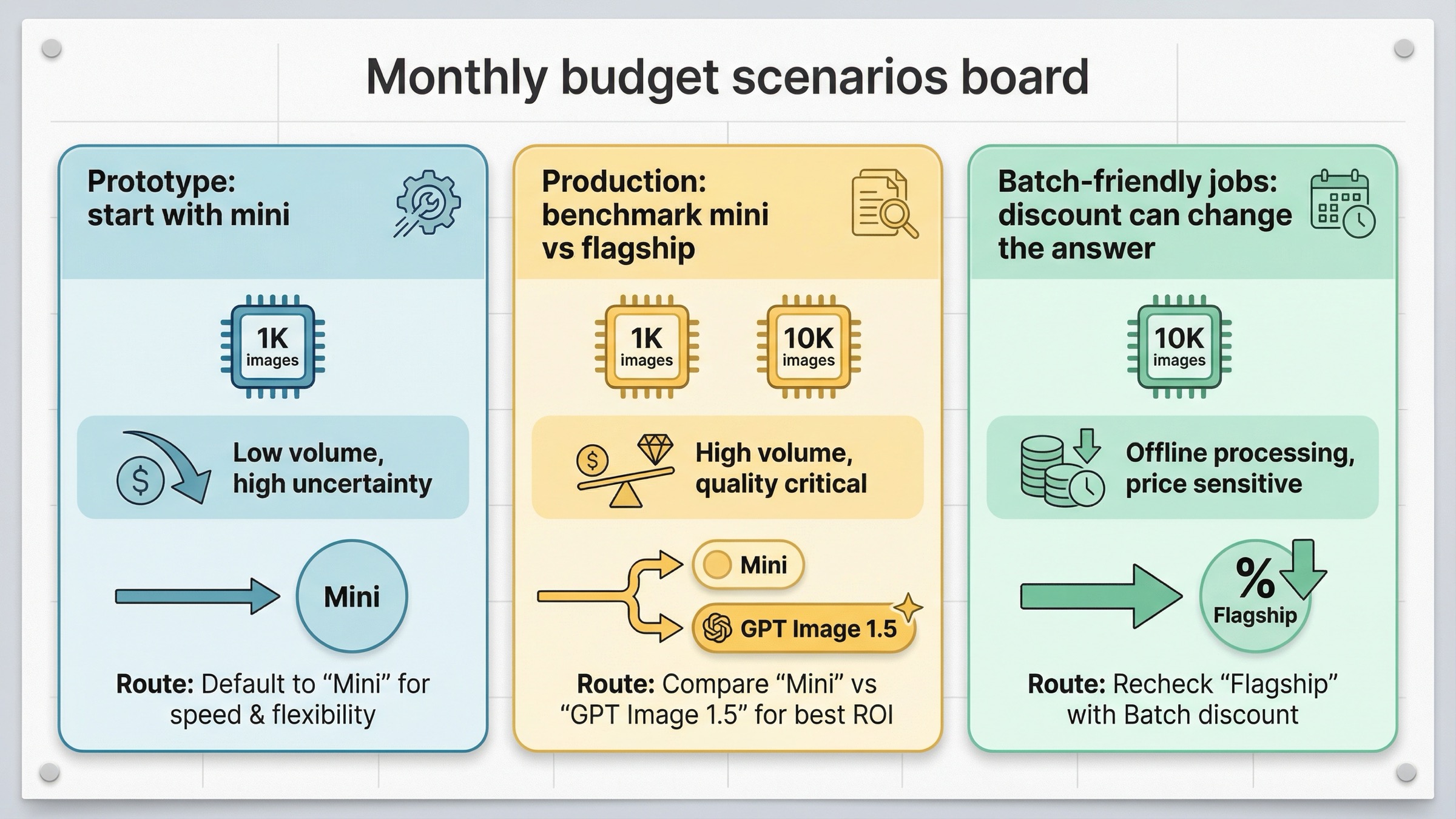

Monthly budget examples for real workloads

Per-image prices become much easier to reason about once you turn them into workload numbers.

For 1,000 square outputs, the current official ladder looks like this:

- Mini low: about $5

- Mini medium: about $11

- Mini high: about $36

- GPT Image 1.5 low: about $9

- GPT Image 1.5 medium: about $34

- GPT Image 1.5 high: about $133

- GPT Image 1 low: about $11

- GPT Image 1 medium: about $42

- GPT Image 1 high: about $167

For 10,000 square outputs, multiply those numbers by ten:

- Mini low: about $50

- Mini medium: about $110

- Mini high: about $360

- GPT Image 1.5 low: about $90

- GPT Image 1.5 medium: about $340

- GPT Image 1.5 high: about $1,330
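
The figures above are plain multiplication, and a short script reproduces them for any volume. This sketch covers square outputs only and ignores input tokens, retries, and Batch. The dictionary keys are labels for this example.

```python
from decimal import Decimal

# Square (1024x1024) per-image prices in USD from the comparison table earlier.
SQUARE_PRICES = {
    "gpt-image-1-mini": {"low": Decimal("0.005"), "medium": Decimal("0.011"), "high": Decimal("0.036")},
    "gpt-image-1.5": {"low": Decimal("0.009"), "medium": Decimal("0.034"), "high": Decimal("0.133")},
    "gpt-image-1": {"low": Decimal("0.011"), "medium": Decimal("0.042"), "high": Decimal("0.167")},
}


def workload_cost(model: str, quality: str, images: int) -> Decimal:
    """List-price USD cost of `images` square outputs for a model and quality."""
    return SQUARE_PRICES[model][quality] * images


for model, ladder in SQUARE_PRICES.items():
    for quality in ladder:
        per_1k = workload_cost(model, quality, 1_000)
        per_10k = workload_cost(model, quality, 10_000)
        print(f"{model} {quality}: ${per_1k:,.2f} per 1,000, ${per_10k:,.2f} per 10,000")
```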

That gives you three useful workload rules.

For a prototype or internal concept pipeline, mini is usually the first place to start because the dollar gap compounds quickly and the cost of minor imperfections is low.

For a steady production workflow, the correct answer depends on how much bad output costs you. If one failed generation wastes review time, manual cleanup, or another model call, the flagship can win on total workflow cost even when it loses on list price.

For an asynchronous bulk-generation workload, mini stays attractive, but Batch makes the comparison closer. If your team was only rejecting GPT Image 1.5 because the medium tier looked too expensive at volume, the 50% Batch discount is the first thing to recheck before you lock mini as a permanent default.

This is also why a page titled only "gpt-image-1-mini pricing calculator" is often not enough. A calculator gives you multiplication. It does not give you the routing judgment around retries, access, and workflow shape.

FAQ

What is the current cheapest official OpenAI image API price?

As checked on March 27, 2026, the cheapest current official OpenAI image price is gpt-image-1-mini low at $0.005 for 1024x1024.

Is GPT Image 1 Mini cheaper than GPT Image 1.5?

Yes. On the current official model pages, mini is cheaper than GPT Image 1.5 at every quality level of the square ladder: $0.005 versus $0.009 at low, $0.011 versus $0.034 at medium, and $0.036 versus $0.133 at high.

Is GPT Image 1 Mini the same thing as GPT Image 1.5 Mini?

No. OpenAI's current catalog describes mini as a cost-efficient version of GPT Image 1, while GPT Image 1.5 is the current flagship image model.

Does the flat per-image price equal the whole invoice?

Not always. It is the fastest planning number for simple generation, but the model page also lists text-token and image-token billing. The more your workflow leans on edits, references, or retries, the weaker the flat-image shortcut becomes.

Do I need a paid API tier to use GPT Image 1 Mini?

Yes. The current mini model page shows Free not supported, and OpenAI's model-availability article says GPT-image-1 and GPT-image-1-mini are available on tiers 1 through 5, with some access subject to organization verification.

Bottom line

gpt-image-1-mini is the current budget answer, not the universal answer. If your workload is generation-heavy and cost-first, mini is the right place to start. If your workflow is edit-heavy, quality-sensitive, or expensive to retry, benchmark GPT Image 1.5 before you optimize for the cheapest sticker price.

That is the cleanest current way to read mini's pricing. Use mini when the low cost is the real advantage. Use the flagship when the workflow is valuable enough that the cheaper image is not automatically the cheaper decision.