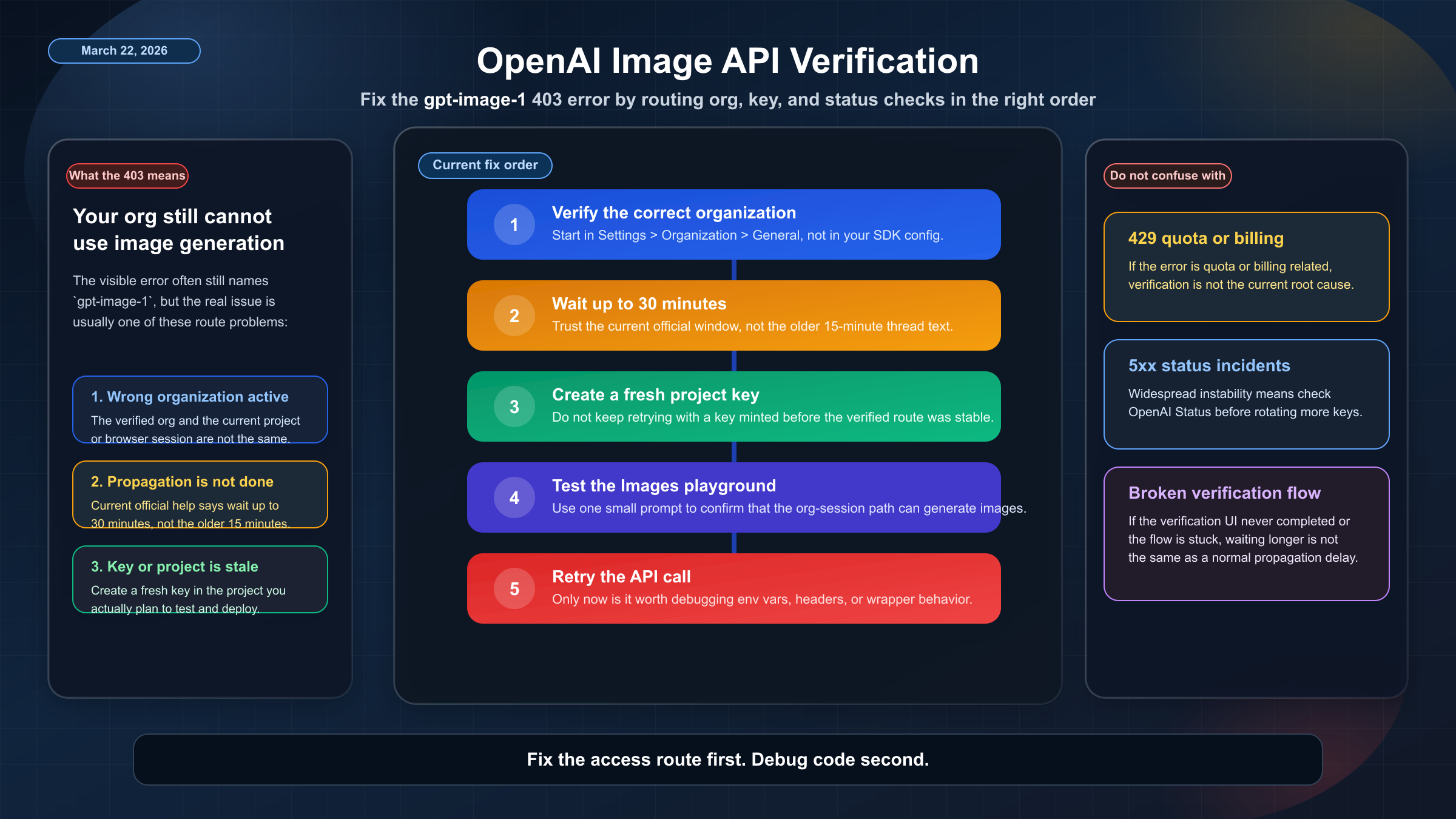

As of March 22, 2026, the fastest safe fix for OpenAI image generation API verification errors is this: verify the correct organization in Settings > Organization > General, wait up to 30 minutes, create a new API key in the correct project, test once in the Images playground, and only then retry your API call. If you skip that order, you can waste a lot of time debugging code when the problem is really org selection, propagation delay, or the wrong key.

That is also why this error is more confusing than it looks. The exact error many developers still see is tied to gpt-image-1, and older community reports still quote a 15-minute propagation message. But OpenAI's current help-center guidance is broader than the old forum wording: organization verification unlocks image generation capabilities in the API, some image access is still tied to usage tier, and the current official wait guidance is up to 30 minutes, not 15.

The important practical point is that verification is one gate, not the whole stack. A verified org can still fail if you are using the wrong organization, the wrong project, a stale key, or if the problem is not verification at all. The article below is built to answer that exact situation, not to re-explain how the image API works in general. If your real question is cost after access is restored, the better follow-up is our guide to OpenAI image generation API pricing.

| What you see | What it usually means | What to do next |
|---|---|---|
| You have not verified the org yet | You are hitting the intended image-access gate | Go to Settings > Organization > General and complete Verify Organization |
| The org shows verified but you just finished | The status has not propagated everywhere yet | Wait up to 30 minutes, then refresh and try again |
| The dashboard says verified but the Images playground still says verify | You may be in the wrong org, wrong project, or using an old key | Confirm the selected org, create a fresh key in the active project, and retest |
| API calls still return 403 after 30 minutes | The failure is often key, project, or org context rather than code syntax | Test in the Images playground first, then retry with a new project key |
| You are getting 5xx or broader failures instead of a clean 403 | The problem may be platform-side, not verification | Check OpenAI Status before changing more config |

Why OpenAI image generation still hits verification gates

OpenAI's current help article on API Organization Verification says verification unlocks access to additional model features and image generation capabilities in the API. It also says verification does not require a spending threshold. That matters because many older discussions mix verification with the old idea that you must cross some paid-usage milestone first. That is not how OpenAI currently documents the verification step.

What verification does not mean is "every image request will work the moment the green badge appears." The same official help page says some organizations may already have access without completing verification, and it tells users to check the Limits page or try the Playground to see whether they already have access. That is a subtle but useful clue: OpenAI treats image access as an organization-level capability decision, not as a property of one API key string by itself.

That organization-level framing explains a lot of the confusion behind this error. In community threads, developers describe the same mismatch: the dashboard shows "Organization Verified", but the Images playground still says "Verify organization details to use the Images playground", or the API still returns a 403 saying the org must be verified to use gpt-image-1. The easiest mistake is to read that as proof that verification "did not work." In practice, it often means the right org was verified, but the current browser session, project, or key path is still attached to something else.

It is also worth separating the error string from the current model lineup. Developers still search for the issue by the gpt-image-1 error string because that is what many wrappers, examples, and forum posts expose. But OpenAI's current image stack also includes newer surfaces such as GPT Image 1.5, whose current model page shows "Free not supported" and paid-tier rate limits starting at Tier 1. In other words, even when verification succeeds, image access still depends on being on a paid tier.

The fastest way to fix the gpt-image-1 verification error

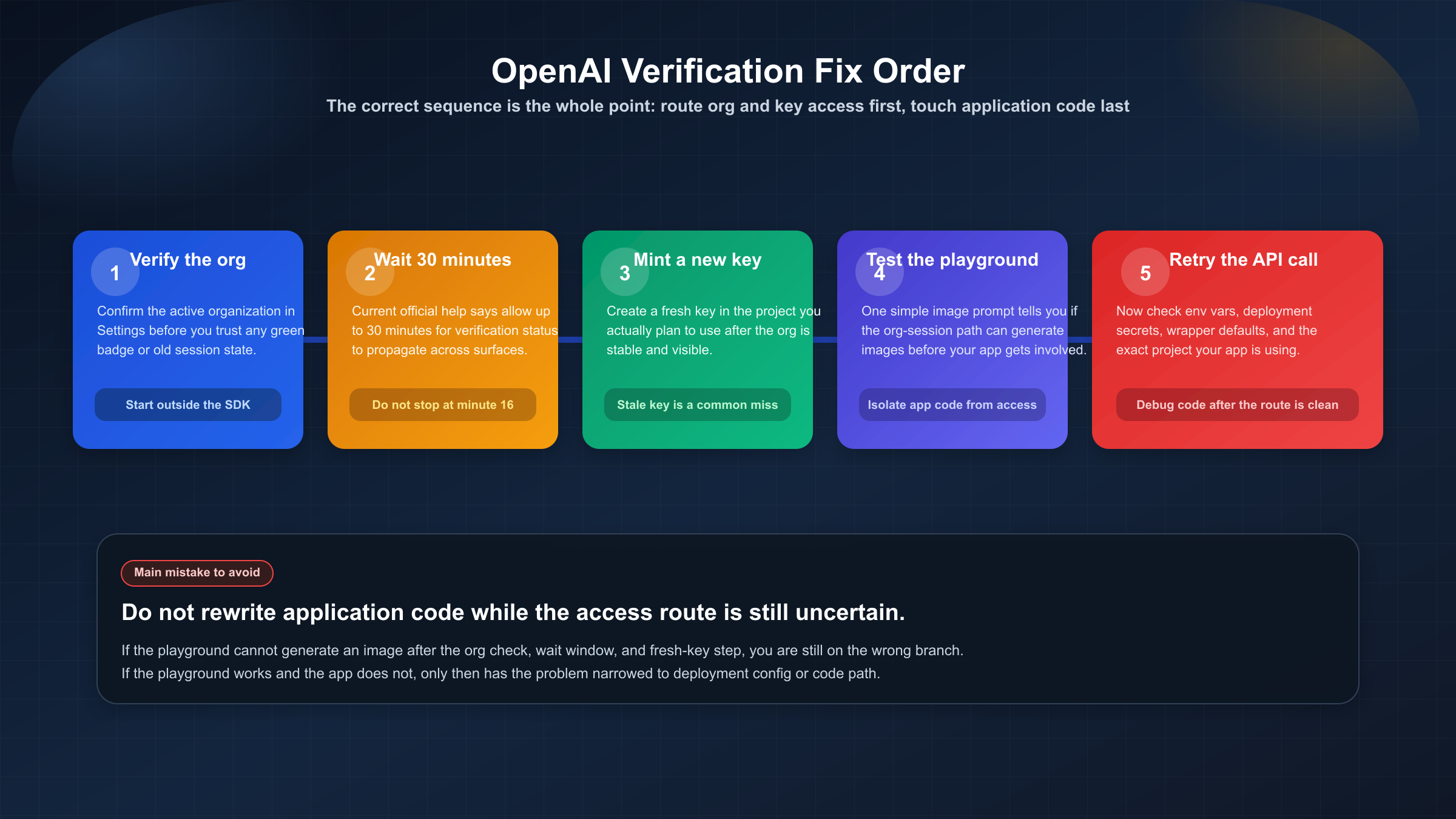

Start with the simplest rule: treat this as an access-routing problem before you treat it as a code problem. That means fixing it in the order OpenAI's current guidance supports, not in whatever order happens to be easiest inside your IDE.

First, confirm the correct organization. OpenAI's help article explicitly tells users to make sure they are viewing the correct organization in settings. That detail is easy to miss if you belong to multiple orgs, switched orgs recently, or are helping a teammate inside a shared platform account. Verifying one organization does not magically unlock another one. If the wrong org is active in the platform selector, the verification badge can be true and still irrelevant to the request you are making.

Second, if you just finished verification, wait up to 30 minutes. This is the current official guidance. Older community threads still show the 403 text saying access can take up to 15 minutes to propagate, which is why a lot of developers keep repeating that number. But if you are troubleshooting today, the safer number to trust is the current help-center number: up to 30 minutes.

Third, generate a new API key in the project you actually plan to use. This is one of OpenAI's official post-verification suggestions, and it matches what experienced users keep recommending in the community threads. If your old key was created before verification or before you settled on the right project, reusing it is a low-value gamble. Replace it.

Fourth, test in the Images playground before you return to application code. This is the cleanest way to answer a very important question: "is the org and session actually able to use image generation at all?" If the playground still tells you to verify, you are not done with the org/session layer yet. If the playground works but your application still fails, the problem has narrowed to project, key, environment, or code path.

Fifth, only after those checks should you retry the API request. That is the moment to look at headers, env vars, project wiring, and SDK behavior. Doing it earlier usually just creates more noise.
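The order above can be written down as a small checklist, which is mostly useful for stopping a team from skipping ahead to code changes. This is a minimal sketch: the step names and the `first_unfinished_step` helper are hypothetical labels for the five steps in this article, and nothing in it calls OpenAI.

```python
# Hypothetical checklist mirroring the five steps above; it makes no API calls.
FIX_ORDER = [
    ("org_confirmed", "Confirm the correct organization in Settings > Organization > General"),
    ("waited_30_min", "Wait up to 30 minutes after verification"),
    ("new_key_created", "Create a new API key in the project you plan to use"),
    ("playground_ok", "Test once in the Images playground"),
    ("api_retried", "Retry the API call"),
]


def first_unfinished_step(done):
    """Return the description of the earliest step not marked done, or None."""
    for name, description in FIX_ORDER:
        if not done.get(name, False):
            return description
    return None


# Example: the key was rotated early, but the org was never double-checked.
print(first_unfinished_step({"new_key_created": True}))
```

Because the function always returns the earliest unfinished step, rotating a key first does not let you skip the organization check.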

If your actual blocker is even earlier than this, such as not having a working key or not being sure your API setup is complete, read how to get an OpenAI API key and the current OpenAI API key requirements first. Those are different problems from verification, but they often get mixed together in practice.

What to check when you are verified but still blocked

The most common "I already verified" case is not a failed verification. It is a misaligned context problem.

The first context problem is the wrong organization. If your platform account has access to more than one org, the one shown in Settings may not be the one your project or browser context is actively using. OpenAI's own help guidance to check the correct organization is a strong sign that this is not a theoretical edge case. It is a real failure path.

The second context problem is the wrong project or a stale project key. In community troubleshooting, one of the most useful pieces of advice is to create a fresh project, avoid restrictive project-level settings during the test, generate a new key inside that project, and then retry. That is not an official policy statement, but it is a sensible operational translation of OpenAI's own "generate a new API key" guidance. It helps isolate whether the blockage is org-level or project-level.
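One quick way to check for a stale or misplaced key is to print which credentials the running process would actually pick up, without exposing the secret. The sketch below makes two assumptions you should confirm against your own setup: that your application reads the environment variables `OPENAI_API_KEY`, `OPENAI_ORG_ID`, and `OPENAI_PROJECT_ID`, and that project-scoped keys start with `sk-proj-`. The helper name `describe_openai_context` is hypothetical.

```python
import os


def describe_openai_context(env=None):
    """Summarize which key, org, and project a process would use, without leaking the key."""
    env = os.environ if env is None else env
    key = env.get("OPENAI_API_KEY", "")
    if not key:
        key_kind = "missing"
    elif key.startswith("sk-proj-"):  # assumed prefix for project-scoped keys
        key_kind = "project key"
    else:
        key_kind = "other or legacy key"
    return {
        "key_kind": key_kind,
        # Only the last four characters are shown so the summary is safe to paste.
        "key_suffix": key[-4:] if len(key) >= 8 else "",
        "organization": env.get("OPENAI_ORG_ID") or "(not set)",
        "project": env.get("OPENAI_PROJECT_ID") or "(not set)",
    }


print(describe_openai_context())
```

Comparing the printed suffix with the key you just created in the dashboard tells you whether the environment is still using the old one.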

The third problem is believing the old propagation text instead of the current one. A lot of people still quote the 15-minute message because that is what they saw in the 403 body in late-2025 forum threads. The problem is that the current official help article says up to 30 minutes. If you stop waiting at minute 16 and start rewriting code, you may be solving the wrong problem.

There is also a harder edge case: the verification attempt itself may not have completed cleanly. OpenAI's current help article says failed verification attempts can happen for several reasons, including unsupported documents, mismatched details, technical submission issues, or orgs that do not meet current eligibility requirements. It also says that retries are not currently supported when verification was unsuccessful. That matters because it changes the default recommendation. If you never actually reached a successful verified state, waiting longer or rotating keys is not the answer.

This is where community bug threads are still useful. OpenAI users reported broken Persona links, stale "not verified" states, and verification UIs that did not regenerate correctly. Those reports are not proof of a current live incident, but they are enough to justify a practical rule: if the verification flow itself looks broken, stop pretending you are in a normal propagation case. You may need support, not another browser refresh.

Troubleshooting when the problem is not verification

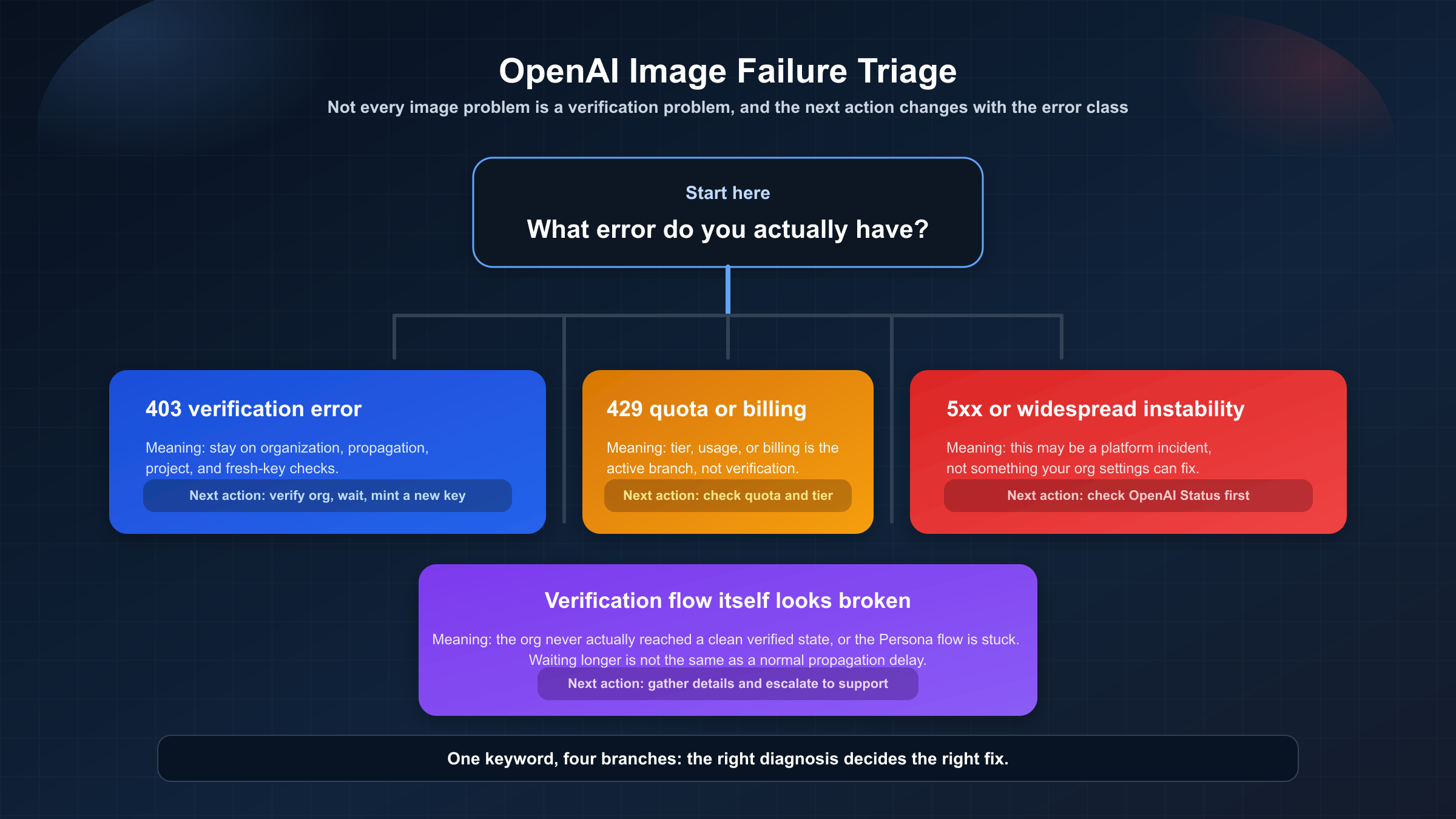

One reason this problem stays messy is that developers collapse every image failure into one bucket. That is a mistake. A clean 403 saying the organization must be verified is a very different situation from a 429 quota problem or a 500 image-pipeline incident.

If the error is a clear verification message, stay on the org, project, and key path. If the error is about quota, billing, or exhausted limits, move to a different branch of debugging. OpenAI's help center also has a separate model-availability matrix by usage tier and verification status, and the current GPT Image 1.5 model page shows that image access is not a true Free-tier surface. If you are out of quota or your tier does not line up with the model you want, verification alone will not rescue the request. In that case, our guide to the OpenAI API key quota exceeded error is the better next read.

If the errors are broader than your own request, check OpenAI Status before you keep changing local settings. As checked on March 22, 2026, the status page says OpenAI is fully operational. That means there is no current public incident you should assume is responsible for your verification error today. But status checks still matter because OpenAI has had real image-generation incidents before. The May 14, 2025 write-up for Image Generation API Errors describes a pipeline issue that returned HTTP 500 responses after a code update. That is the opposite of a verification gate. It is the kind of distinction that can save you an hour of pointless reconfiguration.

The diagnostic rule is simple:

- 403 with explicit verification wording: stay on org, verification, project, and key checks.

- 429, quota, or billing wording: move to tier, usage, and billing checks.

- 5xx or widespread unstable behavior: check status first.

Troubleshooting stays fast only if you keep those branches separate.
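The three branches can be expressed as a small classifier that you run on the status code and error text before touching any configuration. This is a rough sketch, not an official mapping: the function name, the returned labels, and the substring checks are assumptions, and the example message is an illustrative paraphrase rather than a quoted API response.

```python
def classify_image_error(status_code, message=""):
    """Map an HTTP status and error text to one of the three debugging branches."""
    text = message.lower()
    # "verif" matches "verify", "verified", and "verification".
    if status_code == 403 and "verif" in text:
        return "verification"
    if status_code == 429 or "quota" in text or "billing" in text:
        return "quota_or_billing"
    if 500 <= status_code <= 599:
        return "check_status"
    return "unclassified"


# Illustrative message only; real error bodies may be worded differently.
print(classify_image_error(403, "Your organization must be verified to use this model."))
```

A 403 without verification wording deliberately falls through to `unclassified`, so it is not mistaken for the verification gate.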

What verification unlocks today for OpenAI image models

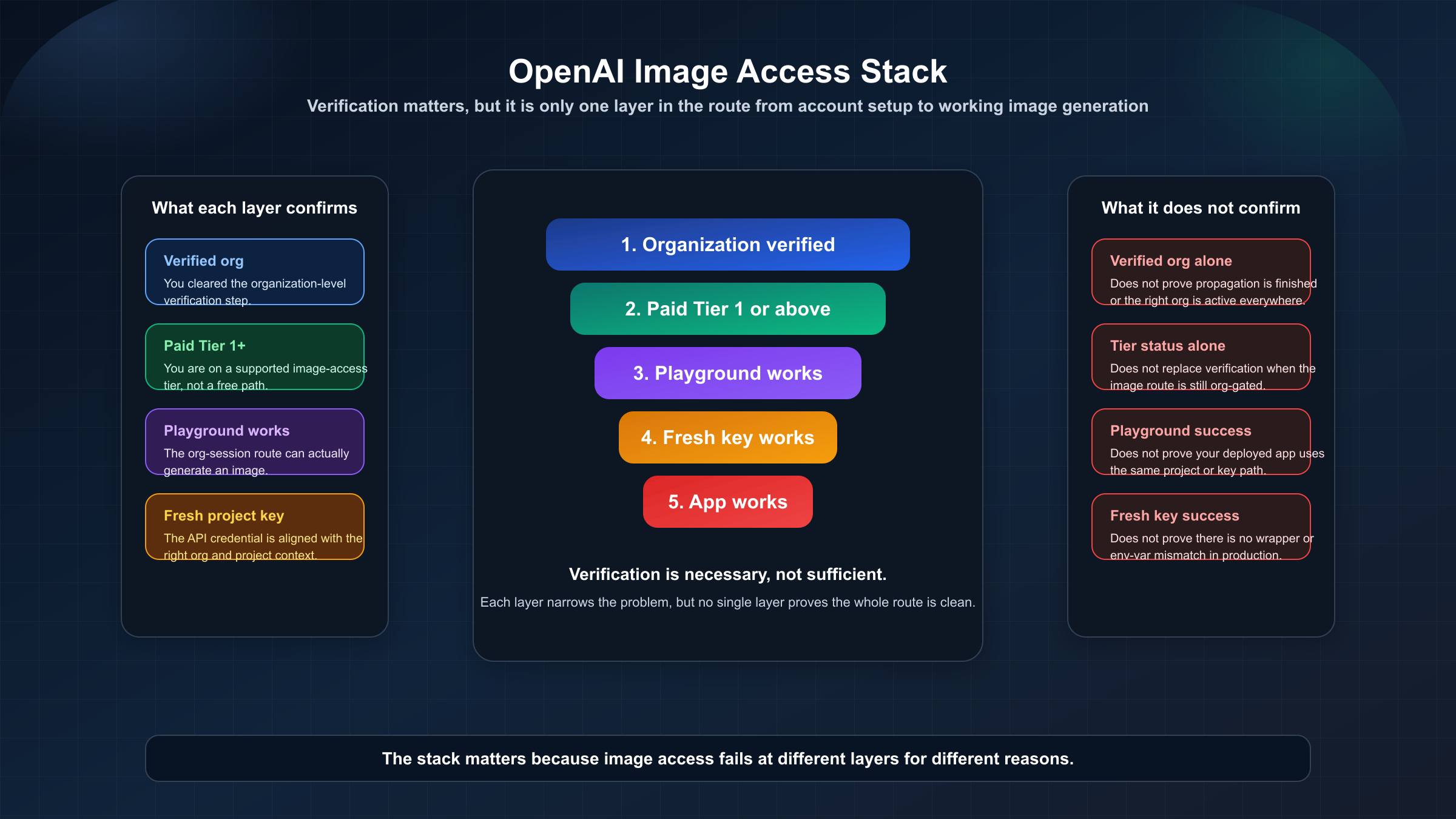

The most useful mental model is a stack, not a switch.

OpenAI's current help article says organization verification unlocks image generation capabilities in the API. The current model-availability article says GPT-image-1 and GPT-image-1-mini are available to API users on tiers 1 through 5, with some access subject to organization verification. And the current GPT Image 1.5 model page says Free not supported, with Tier 1 starting at 100,000 TPM and 5 IPM.

Put together, that means the real access story looks like this:

| Check | What it confirms | What it does not confirm |
|---|---|---|
| Organization shows verified | Your org cleared the verification step | It does not prove propagation is finished or that the right org/project is active |
| Limits page or playground shows image access | The org-session path can actually see image capability | It does not prove your existing project key is the one your app is using |
| New project key works | Your API key path is now aligned with the verified org and project | It does not prove every other environment variable or deployment secret is correct |
| Paid Tier 1 or above is in place | You are on a supported image-access tier | It does not replace verification where verification is still required |

This is also why "I verified but it still fails" can be true without meaning OpenAI is contradicting itself. Verification is necessary for some image access paths, but it is not sufficient for every failure mode. The stack still includes org selection, project selection, fresh credentials, and paid-tier reality.

If your goal after fixing access is cost control rather than debugging, move from this article to OpenAI image generation API pricing. The decision changes once the gate is gone.

How to run the cheapest safe post-fix test

Once you think the gate is resolved, do one small confirmation test. Do not jump straight into a batch workflow, multi-image edit job, or a full production retry loop.

The lowest-friction confirmation path is usually the Images playground with a simple prompt and the lowest reasonable quality setting. That test answers the biggest question first: can this org-session combination actually generate an image now?

After that, run one matching API test from the exact project and environment your application uses. Keep it deliberately boring:

- one prompt

- one image

- no edit inputs

- no background complexity

- no wrapper-specific magic

The reason is not only cost. It is diagnostic clarity. OpenAI's current image-generation guide makes clear that final request cost can include text input, image input for edits, and image output. A minimal test keeps both your spend and your uncertainty low.
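A deliberately boring request might look like the sketch below, which uses only the Python standard library so that no wrapper behavior gets in the way. The endpoint path, the `OpenAI-Organization` and `OpenAI-Project` headers, and the `model`, `n`, `size`, and `quality` values reflect the public Images API as commonly documented, but treat them as assumptions and check the current API reference before relying on them. The helper names are hypothetical.

```python
import json
import urllib.request

IMAGES_URL = "https://api.openai.com/v1/images/generations"


def build_minimal_image_request(api_key, organization=None, project=None):
    """Build (but do not send) a one-prompt, one-image generation request."""
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    # Optional headers pin the org and project instead of relying on defaults.
    if organization:
        headers["OpenAI-Organization"] = organization
    if project:
        headers["OpenAI-Project"] = project
    body = {
        "model": "gpt-image-1",  # assumed model name; swap in the one you need
        "prompt": "A plain red circle on a white background",
        "n": 1,
        "size": "1024x1024",
        "quality": "low",
    }
    return {"url": IMAGES_URL, "headers": headers, "body": json.dumps(body).encode("utf-8")}


def send(request, timeout=120):
    """Send the request; urllib raises HTTPError on 4xx and 5xx responses."""
    req = urllib.request.Request(
        request["url"], data=request["body"], headers=request["headers"], method="POST"
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.status, json.loads(resp.read())
```

To run it, call `send(build_minimal_image_request(key))` with the fresh project key. Keeping the builder separate from the sender lets you inspect the exact headers and body before spending anything.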

If the playground works and the minimal API test fails, you have narrowed the problem to your application path. If both work, the verification problem is closed. If neither works after the 30-minute window and a fresh project key, stop circling the same checks and gather the exact org, project, and error details for support.
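That decision logic is small enough to write down explicitly. The sketch below is one possible encoding of the outcomes described above; the function name, its parameters, and the returned strings are hypothetical, and the 30-minute threshold is the help-center figure cited earlier in this article.

```python
def next_action(playground_ok, api_ok, minutes_since_verification, fresh_key_used):
    """Translate the playground and API test outcomes into a single next step."""
    if playground_ok and api_ok:
        return "done: verification problem is closed"
    if playground_ok and not api_ok:
        return "debug application path: project, key, environment, code"
    # The playground is blocked in every remaining case.
    if minutes_since_verification < 30:
        return "wait: still inside the 30-minute propagation window"
    if not fresh_key_used:
        return "create a fresh project key and retest"
    return "contact support with org, project, and exact error details"


print(next_action(playground_ok=False, api_ok=False,
                  minutes_since_verification=45, fresh_key_used=True))
```

Escalation is the last branch on purpose: it is reached only after the wait window and the fresh-key retest have both been ruled out.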

FAQ

Why does the Images playground still ask me to verify after the dashboard says verified?

The most common causes are propagation delay, the wrong organization being active, or a stale project or API key. The current official help-center guidance says to wait up to 30 minutes, generate a new API key, refresh the session, and confirm the correct organization.

Why does the error still mention gpt-image-1?

Because that is still the model name exposed in many existing logs, wrappers, and forum threads. Most discussion of the verification error still follows that error string even though OpenAI's current image stack now includes newer surfaces such as GPT Image 1.5.

Do I need to create a new API key after verification?

In many cases, yes. OpenAI's current help article explicitly recommends generating a new API key if you are still seeing "not verified" errors after verification. It is one of the highest-value steps in the current fix path.

When should I stop waiting and contact support?

If more than 30 minutes have passed, the correct org is selected, a fresh project key still fails, and the playground is still blocked, you are no longer in the simple propagation case. If the verification flow itself looked broken or never completed cleanly, escalate sooner rather than later.