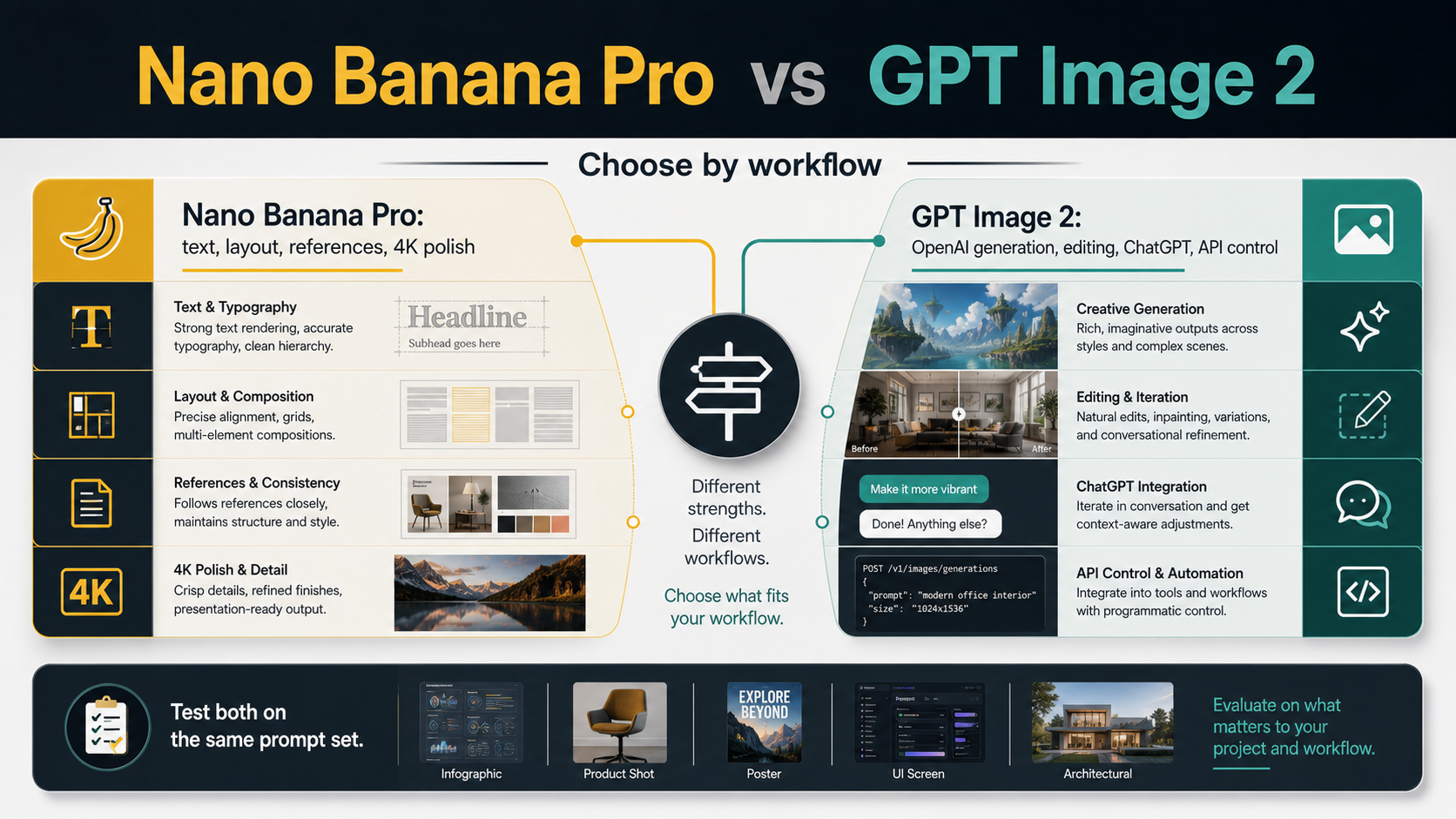

Start with the job, not the leaderboard. Use Nano Banana Pro first when the image needs readable text, tight layout control, multiple references, or 4K polish. Use GPT Image 2 first when the work lives inside OpenAI generation, editing, ChatGPT context, or an API workflow. If the output is client-facing, run the same prompt and reference set through both before calling either model the winner.

The name map matters only after that choice: Nano Banana Pro is the Google premium image lane, while GPT Image 2 is OpenAI's current image model for API and related ChatGPT image workflows. Price, quota, 4K behavior, speed, and gateway availability belong to the exact route you use, so verify those facts on the app, API, or provider surface before production.

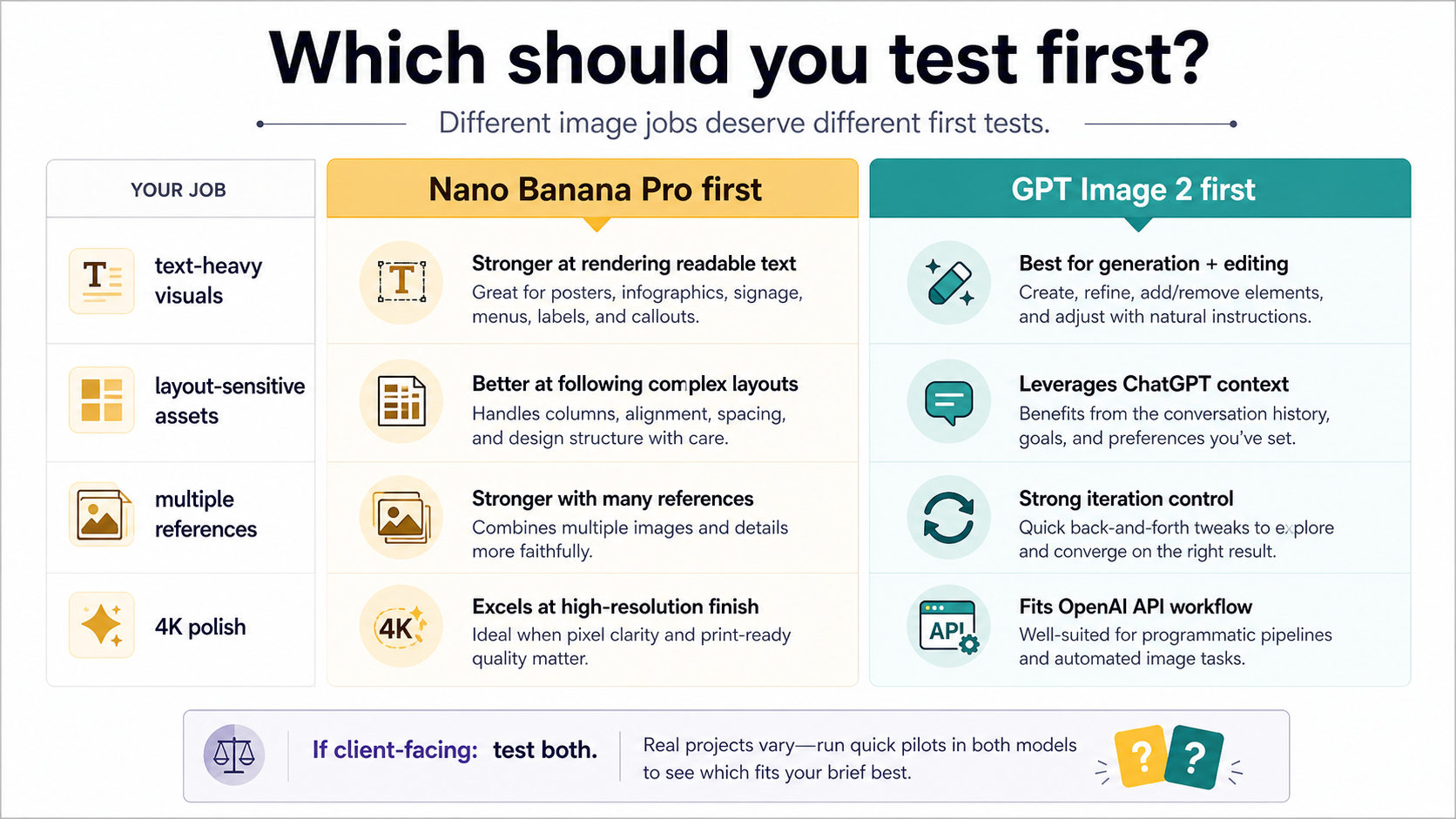

Quick decision: which model should you test first?

The practical answer is not "which one is better?" It is "which one should absorb the first serious test for this job?" A single sample can make either model look unbeatable, but production work has constraints: text has to stay readable, brand references have to survive, the output has to land in the right app or API route, and the bill has to belong to the surface you actually use.

| Your image job | Test Nano Banana Pro first when... | Test GPT Image 2 first when... |
|---|---|---|
| Text-heavy design | labels, posters, UI boards, or deck graphics must stay legible | the text is part of a broader OpenAI generation or editing loop |
| Layout-sensitive asset | the composition needs tight alignment, hierarchy, and controlled visual rhythm | the layout will be revised through conversational or programmatic edits |
| Reference-driven work | several reference images, product shots, or style constraints define the asset | source images enter an OpenAI edit or assistant workflow |
| 4K or final polish | the output needs a premium Google image lane before handoff | route control and repeatable OpenAI API behavior matter more than the Google lane |
| Product integration | the image is created inside Google's Gemini, AI Studio, or Vertex route | the image belongs in ChatGPT, Images API, Responses, or an OpenAI-native backend |

That split is deliberately conservative. It does not claim that Nano Banana Pro always beats GPT Image 2 on text or that GPT Image 2 always edits better. It says where each model deserves the first controlled test. For any asset that will be shown to a client, printed, embedded in a product page, or reused across campaigns, the final answer should come from the same prompt and reference set on the exact route you plan to use.
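
The split can also be read as a small routing rule. The sketch below is one hypothetical way to encode it in Python. The `ImageJob` fields, the "two or more references" threshold, and the fallback to testing both models when the signals conflict or are absent are assumptions made for illustration, not rules published by Google or OpenAI.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ImageJob:
    """Hypothetical description of one image job; field names are illustrative."""
    needs_readable_text: bool = False
    needs_tight_layout: bool = False
    reference_count: int = 0
    needs_4k: bool = False
    openai_native: bool = False  # ChatGPT, Images API, Responses, or an OpenAI backend
    client_facing: bool = False


def first_tests(job: ImageJob) -> List[str]:
    """Return the model(s) that deserve the first controlled test for this job."""
    both = ["Nano Banana Pro", "GPT Image 2"]
    # Assumption: any one of these traits counts as a "design production" signal.
    design_signal = (
        job.needs_readable_text
        or job.needs_tight_layout
        or job.reference_count >= 2
        or job.needs_4k
    )
    if job.client_facing:
        return both  # client-facing work gets the same brief on both models
    if design_signal and not job.openai_native:
        return ["Nano Banana Pro"]
    if job.openai_native and not design_signal:
        return ["GPT Image 2"]
    return both  # conflicting or missing signals: test both rather than guess
```

The function only decides where the first test goes. It says nothing about which output wins.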

Map the names before you compare features

The comparison becomes unreliable when model names are treated like interchangeable product labels. Nano Banana Pro is the public-facing name for Google's premium image lane, and Google's Gemini API image-generation docs map that lane to Gemini 3 Pro Image Preview. GPT Image 2 is OpenAI's current image model in developer docs, with the API model ID gpt-image-2 and the snapshot gpt-image-2-2026-04-21 listed on the OpenAI model page as of May 13, 2026.

That mapping matters because the route determines what you can trust. A Gemini app result, an AI Studio test, a Vertex AI call, a ChatGPT image workflow, a direct Images API request, a Responses tool call, and a provider gateway can all sit near the same comparison conversation, but they are not the same contract. The model name tells you what generated the image. The route tells you where access, price, quota, request fields, storage, and error handling live.

Use this route rule before making any expensive decision:

| Claim you are checking | Where to verify it |
|---|---|
| GPT Image 2 model ID, model snapshot, output options, and API limitations | OpenAI developer docs |
| GPT Image 2 direct generation or editing behavior | OpenAI Images API docs and your own account route |
| GPT Image 2 inside an assistant or agent flow | Responses API tool behavior, not only the image model name |
| Nano Banana Pro official model lane and positioning | Google Gemini API image-generation docs |
| Nano Banana Pro pricing or quota | Google pricing or the exact Google Cloud route you will use |
| Gateway availability, rates, or routing behavior | the gateway's own current contract, not OpenAI or Google official pricing |

The safest evidence is first-party documentation for identity and route facts, followed by your own same-prompt outputs for the final creative decision. Public examples are useful for seeing what people notice, but they are not production proof unless you can reproduce the result on your own route.

Judge outputs by the asset, not by one viral sample

Image-model comparisons often collapse into taste. One result looks more cinematic, another preserves text better, another wins because the prompt accidentally favored its style. That is fine for exploration, but it is a weak basis for production choice. A useful comparison should define what would make the asset usable before the image is generated.

For text-heavy or layout-sensitive work, judge whether letters are readable at the final size, whether the hierarchy still makes sense, whether labels stay attached to the right objects, and whether the composition can survive review without a designer rebuilding it. Nano Banana Pro deserves an early test here because Google's current positioning treats the Pro lane as the premium image model for more professional asset work, especially where text, layout, references, and polished output matter.

For editing or iteration-heavy work, judge whether the workflow can keep context, apply revisions, preserve source-image intent, and fit into the product surface you already use. GPT Image 2 deserves an early test when the asset is part of an OpenAI-native loop: a ChatGPT-assisted creative session, a direct Images API request, an edit endpoint, or a broader Responses flow where image generation is one step inside an assistant experience.

For realism and polish, do not score only surface beauty. Check lighting consistency, hands and edges, product geometry, brand-like details, and whether the output has been over-smoothed. A model that makes a striking first image can still fail the job if it changes a reference product, invents a label, or produces an image that cannot be repeated under the same constraints.

Where Nano Banana Pro should be tested first

Test Nano Banana Pro first when the asset is closer to design production than casual image generation. The strongest use cases are not "make a cool picture" prompts. They are prompts where the model has to hold together text, layout, multiple references, and polished output while staying close enough to the brief that a human does not have to rebuild the image afterward.

That includes poster concepts, infographic-style boards, pitch-deck graphics, product campaign visuals, menu or signage mockups, branded layouts, and hero images where the final output needs a cleaner professional finish. If the job is going to be reviewed by a stakeholder who cares about readable copy, aligned elements, and reference fidelity, Nano Banana Pro is a sensible first lane to test.

The caveat is that Pro should not become the default answer for every Google image task. Google's own image-generation lineup now separates Nano Banana, Nano Banana 2, and Nano Banana Pro. The related Nano Banana Pro review is useful if you need the broader "is Pro still worth it?" decision. Against GPT Image 2, Pro earns the first test when the job specifically benefits from Google's premium text, layout, reference, and 4K-oriented positioning.

Even then, avoid turning positioning into a guarantee. If an output has important legal text, product labels, medical or financial detail, or brand-critical typography, inspect the output itself instead of trusting the model's reputation. The right workflow is to give Pro the first shot, then keep the prompt, references, and route visible enough that a failed output can be diagnosed rather than argued about.

Where GPT Image 2 should be tested first

Test GPT Image 2 first when the image belongs inside an OpenAI workflow. That may be a direct generation request, an edit request, a ChatGPT creative session, a backend that already uses OpenAI APIs, or an assistant flow where the model needs to reason about user context before producing an image. In those cases, route fit can matter more than one isolated comparison output.

OpenAI's developer docs list gpt-image-2 as a generation and editing image model with flexible image sizes and high-fidelity image inputs. The implementation consequence is straightforward: if your product already needs OpenAI request logging, account checks, response handling, or edit workflows, GPT Image 2 is usually the cleaner first test. You can stay inside one provider contract while you learn whether the image quality is good enough for the job.

The route still matters. A direct Images API call is not the same thing as a Responses flow with an image-generation tool. If the product is simply "generate or edit this image," start with the direct image route. If the product is "an assistant should understand the task, maybe call tools, and then generate or revise an image," Responses may be the better architecture. The deeper implementation split belongs in the GPT Image 2 API guide, but the comparison decision is simple: choose GPT Image 2 first when OpenAI is the workflow you need to ship.

One current limitation should stay visible. OpenAI's image-generation tool guidance says GPT Image 2 does not currently support transparent backgrounds. That does not make the model worse for every job, but it matters if your output is a logo cutout, UI sticker, product overlay, or asset that must arrive with alpha. Do not bury that requirement under a generic "image quality" comparison; treat it as a production constraint.
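
One way to keep a constraint like this visible is a pre-flight check that runs before any image is generated. The following is a minimal sketch, assuming a hand-maintained `KNOWN_GAPS` map and free-form requirement labels. Both are hypothetical names invented here. Only the GPT Image 2 entry reflects a limitation stated in this article, and it should be re-verified against current docs.

```python
from typing import Dict, List, Set

# Assumption: a capability map you maintain yourself from the docs you verified.
# It is not an official list and will go stale if it is not re-checked.
KNOWN_GAPS: Dict[str, Set[str]] = {
    "gpt-image-2": {"transparent_background"},
}


def blocked_requirements(model_id: str, requirements: List[str]) -> List[str]:
    """Return the requirements that the chosen model is known not to meet."""
    gaps = KNOWN_GAPS.get(model_id, set())
    return [req for req in requirements if req in gaps]
```

An empty result means only that no known gap matched. It does not prove the route supports everything on the list.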

Cost and availability: compare route contracts, not model names

A cost comparison is only useful after the route is known. On May 13, 2026, OpenAI's image-generation cost guidance listed GPT Image 2 output examples by quality and size, including 1024x1024 low, medium, and high examples, and made clear that input tokens still affect total cost. Those examples are useful as official OpenAI pricing context. They are not a universal per-image price for ChatGPT, Codex, a gateway, or a different deployment surface.

Google's pricing and model availability for Nano Banana Pro belong to Google's current route, not to an older comparison table and not to a social post. If you are using Gemini app behavior, Google AI Studio, or Vertex AI, verify the current route where you will actually generate the image. The same model-family language can hide different limits, billing surfaces, or access states.

Provider gateways add a third contract. A gateway may be useful for payment handling, route aggregation, access convenience, or operational routing, but its availability and price are provider-owned claims. The core Nano Banana Pro versus GPT Image 2 choice does not require a provider recommendation. If a later integration requires a gateway, verify that provider's current model coverage, rate, refund, quota, and failure behavior separately before putting it in a production cost model.

The decision rule is:

| Before you compare cost | Ask this first |
|---|---|
| OpenAI direct API | Which endpoint, model, quality, size, and input-token pattern will production use? |
| OpenAI assistant workflow | Is image generation a hosted tool inside a broader Responses flow? |
| Google route | Is the output coming from Gemini app behavior, AI Studio, or Vertex AI? |
| Gateway route | Which provider owns the route, billing, retries, and support path? |

If those answers are not clear, the price table will mislead you. Choose the route first, then compare the costs that belong to that route.
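
As a rough illustration of route-first costing, the sketch below ties every estimate to a named route and billing owner, and keeps input tokens in the total. It assumes a route that bills a per-image output price plus a per-token input price. That billing shape, the `RouteCostModel` fields, and every number in the usage lines are placeholders, not real prices from OpenAI, Google, or any gateway.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RouteCostModel:
    """Hypothetical cost contract for one route; fill in from that route's own pricing."""
    route: str                             # e.g. "OpenAI direct API" or "Gateway route"
    billing_owner: str                     # who actually receives the bill
    output_price_per_image: float          # for the chosen quality and size
    input_price_per_million_tokens: float  # prompt and reference-image tokens


def estimate_batch_cost(
    model: RouteCostModel, images: int, input_tokens_per_request: int
) -> float:
    """Estimate the cost of a batch, assuming one image per request."""
    output_cost = images * model.output_price_per_image
    input_cost = (
        images * input_tokens_per_request / 1_000_000
        * model.input_price_per_million_tokens
    )
    return output_cost + input_cost


# Placeholder numbers only; replace them with figures verified on your own route.
example = RouteCostModel(
    route="OpenAI direct API",
    billing_owner="platform team",
    output_price_per_image=0.10,
    input_price_per_million_tokens=5.00,
)
estimate = estimate_batch_cost(example, images=200, input_tokens_per_request=1500)
```

Two routes are comparable only when each has its own `RouteCostModel` filled in from that route's current contract.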

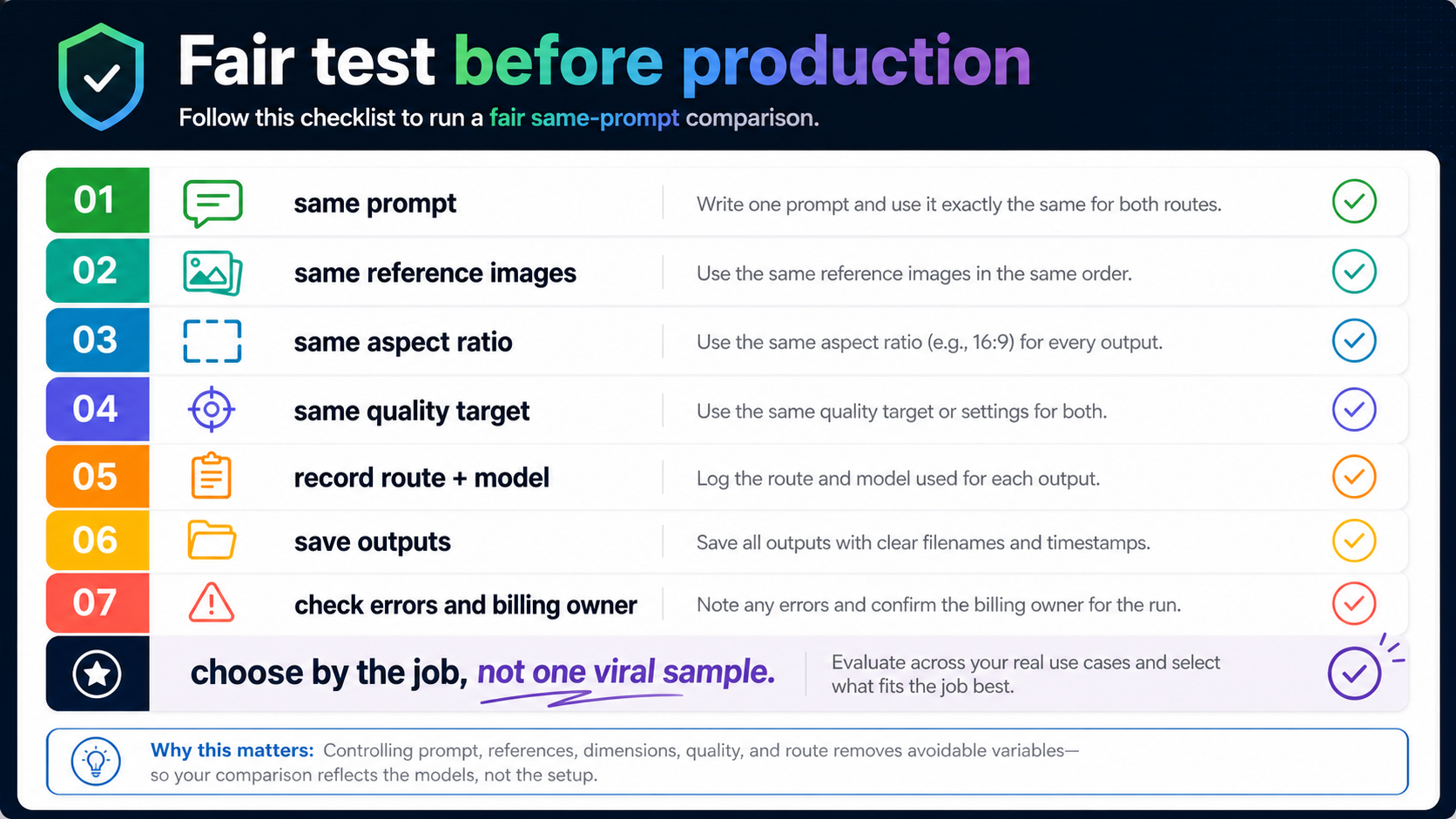

Run a fair same-prompt test before choosing

The fastest way to make this comparison useful is to test both models with the same brief. Use one prompt, the same reference images in the same order, the same target aspect ratio, the same quality target where the route supports it, and the same acceptance criteria. If one route cannot express the same constraint, record that as part of the result instead of silently changing the brief.
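
A shared brief is easier to hold constant when it is written down as data. Here is a minimal sketch, assuming a hypothetical `Brief` record and a caller-supplied set of fields that each route can express. No real API is called, and the field names are illustrative.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class Brief:
    """One frozen brief shared by every route under test."""
    prompt: str
    reference_images: Tuple[str, ...]  # order is part of the brief
    aspect_ratio: str
    quality: str
    acceptance_criteria: Tuple[str, ...]


def plan_run(brief: Brief, route: str, supported_fields: Set[str]) -> Dict[str, object]:
    """Build a run plan for one route and record constraints it cannot express."""
    constraint_fields: List[str] = ["reference_images", "aspect_ratio", "quality"]
    unsupported = [name for name in constraint_fields if name not in supported_fields]
    # The brief is passed through unchanged; gaps are logged, never silently dropped.
    return {"route": route, "brief": brief, "unsupported_constraints": unsupported}
```

Because `Brief` is frozen, a route that cannot honor a constraint shows up in `unsupported_constraints` instead of quietly receiving an edited brief.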

A fair test should include at least three prompts:

| Test prompt type | What it reveals |
|---|---|
| Text and layout board | typography, alignment, label stability, hierarchy, and over-processing |
| Reference-driven product or character image | reference adherence, identity stability, unwanted changes, and lighting consistency |
| Edit or revision prompt | whether the route can revise without destroying the parts that already work |

Save the outputs with route, model, date, prompt, source images, aspect ratio, quality setting, and billing owner. That log is not bureaucracy. It lets you explain why one result won, repeat the winning route later, and avoid blaming the model when the real difference was app behavior, request shape, account access, or gateway routing.
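
The log can be as simple as one JSON line per output. The sketch below assumes a hypothetical `RunRecord` whose fields mirror the list above, plus an `output_path` field added here for convenience. The storage format is a suggestion, not a requirement of either provider.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class RunRecord:
    """One saved output and the context needed to repeat or explain it."""
    route: str
    model: str
    date: str                 # ISO 8601 date string
    prompt: str
    source_images: List[str]  # in the order they were supplied
    aspect_ratio: str
    quality_setting: str
    billing_owner: str
    output_path: str          # assumption: outputs are saved as local files


def to_log_line(record: RunRecord) -> str:
    """Serialize a record as a single JSON line, suitable for appending to a .jsonl file."""
    return json.dumps(asdict(record), sort_keys=True)
```

Appending each line to one file per comparison keeps the route, prompt, and billing owner next to the image they produced.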

Use the same acceptance line for both models: would this output survive the next real step? If the asset needs a designer to rebuild the text, it failed the text job. If it needs a developer to change the whole route, it failed the integration job. If it looks great once but cannot be reproduced under the same constraints, it is a good demo and a weak production choice.
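
The acceptance line above can be written as a single function applied to both models. This is a sketch under the assumption that a human reviewer supplies the three yes-or-no judgments. The function name and the returned labels are illustrative.

```python
def acceptance_verdict(
    text_usable_as_is: bool, fits_planned_route: bool, reproducible: bool
) -> str:
    """Apply the same acceptance line to any output, whichever model produced it."""
    if not text_usable_as_is:
        return "failed the text job"         # a designer would have to rebuild the text
    if not fits_planned_route:
        return "failed the integration job"  # a developer would have to change the route
    if not reproducible:
        return "good demo, weak production choice"
    return "survives the next real step"
```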

FAQ

Is Nano Banana Pro better than GPT Image 2?

Not universally. Nano Banana Pro is the first model to test for Google's premium text, layout, reference, and 4K-oriented asset work. GPT Image 2 is the first model to test when the workflow belongs inside OpenAI generation, editing, ChatGPT context, or API control. For client-facing work, test both with the same prompt set.

Is Nano Banana Pro the same as Nano Banana 2?

No. Google's current image-generation lineup separates Nano Banana, Nano Banana 2, and Nano Banana Pro. Treat Nano Banana Pro as the premium Google lane, not as a synonym for the newer all-around Nano Banana 2 route.

Is GPT Image 2 available through the API?

Yes. OpenAI's developer docs list gpt-image-2 as an official model ID with the current snapshot gpt-image-2-2026-04-21. For implementation details, use the route-specific GPT Image 2 API guide rather than copying a generic image endpoint example.

Which one should I use for image editing?

Use GPT Image 2 first if your editing workflow is already OpenAI-native or needs direct API control. If the edit is really a Google-side professional asset workflow with heavy text, layout, or references, test Nano Banana Pro as well. For deeper OpenAI edit request details, see the OpenAI image editing API.

Which one is cheaper?

There is no honest single answer without the route. OpenAI API examples, Google pricing, app entitlements, and provider gateway rates are different contracts. Verify cost, quota, speed, and availability on the exact route before making a production choice.

Should I use both models?

Use both when the asset is expensive to rerun, client-facing, brand-sensitive, or likely to be reused. Use one model first when the job clearly belongs to one route: Nano Banana Pro for Google premium asset work, GPT Image 2 for OpenAI-native generation, editing, and API workflows.