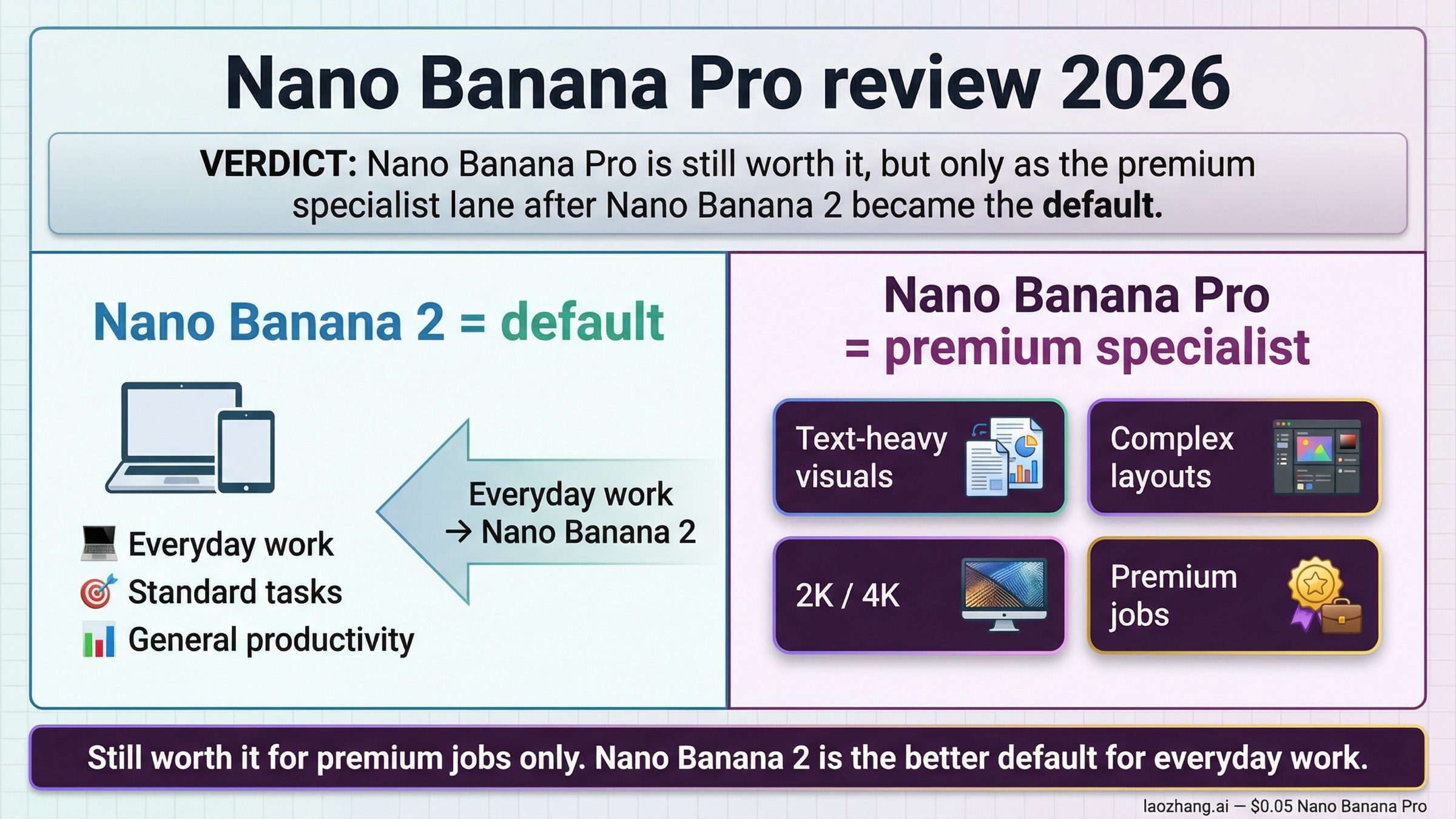

As of March 27, 2026, Nano Banana Pro is still worth it, but only for narrower premium jobs than many older review pages admit. If your work depends on stronger embedded text, infographic-style layouts, multi-image composition, or higher-fidelity 2K and 4K output, Nano Banana Pro still earns a place in the stack. If your job is fast everyday generation, quick iterations, or the best all-around Google default, start with Nano Banana 2 instead.

That split matters because the current Google story is not the one many November and December 2025 reviews were written for. Google's current Gemini image-generation docs now describe Nano Banana 2 as the go-to model for all-around image generation, while Nano Banana Pro, officially gemini-3-pro-image-preview, is positioned as the premium lane for professional asset production and more complex instructions. A current review has to start there or it becomes stale before the second paragraph.

There is one more reason this review query feels messy right now: the Gemini app surface and the underlying model story are no longer the same thing. In Gemini, many users now experience Nano Banana 2 as the default path and Nano Banana Pro as a harder-to-reach upgrade flow. In the developer stack, Pro is still a separate model with its own pricing, preview status, and strengths. If a review page blurs those surfaces together, it usually turns either too bullish or too negative.

TL;DR

| If this sounds like you | Should you choose Nano Banana Pro? | Why | Main caveat |
|---|---|---|---|
| You need a fast default for everyday image generation, ads, mockups, and iterations | No | Google now positions Nano Banana 2 as the better all-around default | Pro costs more and adds preview friction you may not need |
| You make infographic-style visuals, poster mockups, deck graphics, or brand assets with important text | Yes | Pro still has the stronger premium case for text-heavy and layout-sensitive work | It is still preview-labeled and not always consistent enough to trust blindly |
| You care about 2K and 4K final assets more than generation speed | Yes, often | Pro remains Google's premium 4K lane for professional asset production | The price jump is real, and not every asset needs it |
| You mostly judge the model from what changed in the Gemini app | Maybe, but carefully | App frustration is real, but it does not automatically describe the whole API or Vertex route | Community complaints are useful signals, not official proof |
| You mainly want the best default in Google's own stack | Usually no | Nano Banana 2 is now the cleaner default answer | Pro should be the escalation path, not the baseline |

The shortest honest rule is this: Nano Banana Pro is still a strong specialist, not the best default. That is a better answer than "yes" or "no," and it matches the current Google source set more closely than most page-one reviews do.

Why this review changed after Nano Banana 2

The biggest mistake in the current SERP is acting as if the review question stayed frozen at launch. It did not. Google's own Gemini API release notes list gemini-3-pro-image-preview as released on November 20, 2025, which is the moment most early Nano Banana Pro commentary anchored itself to. But the same release notes list gemini-3.1-flash-image-preview, the model behind Nano Banana 2, as released on February 26, 2026. That changed the lineup and, more importantly, the default recommendation.

Google's current image-generation docs now map the family this way:

- Nano Banana Pro = gemini-3-pro-image-preview

- Nano Banana 2 = gemini-3.1-flash-image-preview

- Nano Banana = gemini-2.5-flash-image

Those docs also make the current buying logic explicit. Nano Banana 2 is presented as the go-to model for all-around use. Nano Banana Pro is the premium lane for professional asset production, more complex instructions, Google Search grounding, and up to 4K output. That means the right 2026 review question is not "is Pro impressive?" It is "when is Pro impressive enough to justify not using the new default?"

That is also why this page should not be confused with the repo's broader Nano Banana 2 vs Nano Banana Pro comparison. That page is the direct side-by-side routing guide. This one is narrower and stays closer to the exact query: it asks whether Nano Banana Pro still deserves a yes once you factor in the March 2026 lineup, the current premium cost, and the fact that many users now meet Google image generation through Nano Banana 2 first.

The answer is still yes for some readers. It is just no longer yes by default.
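For readers wiring this into code, the naming and the default-versus-premium split described in this section can be captured as a small lookup. This is only a sketch: the model IDs are the ones quoted above, while `MODEL_IDS`, `DEFAULT_MODEL`, `PREMIUM_MODEL`, and `model_id` are illustrative names, not part of any Google SDK.

```python
# Nickname -> model ID, as quoted in this article from Google's docs.
MODEL_IDS = {
    "Nano Banana Pro": "gemini-3-pro-image-preview",
    "Nano Banana 2": "gemini-3.1-flash-image-preview",
    "Nano Banana": "gemini-2.5-flash-image",
}

# Roles as this review describes them (illustrative constants).
DEFAULT_MODEL = "Nano Banana 2"    # go-to model for all-around use
PREMIUM_MODEL = "Nano Banana Pro"  # premium lane, used deliberately


def model_id(nickname: str) -> str:
    """Resolve a marketing nickname to the model ID string."""
    try:
        return MODEL_IDS[nickname]
    except KeyError:
        raise ValueError(f"unknown model nickname: {nickname!r}") from None


print(model_id(DEFAULT_MODEL))
print(model_id(PREMIUM_MODEL))
```

Keeping the nicknames in one place helps because preview model IDs tend to change when a model leaves preview.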

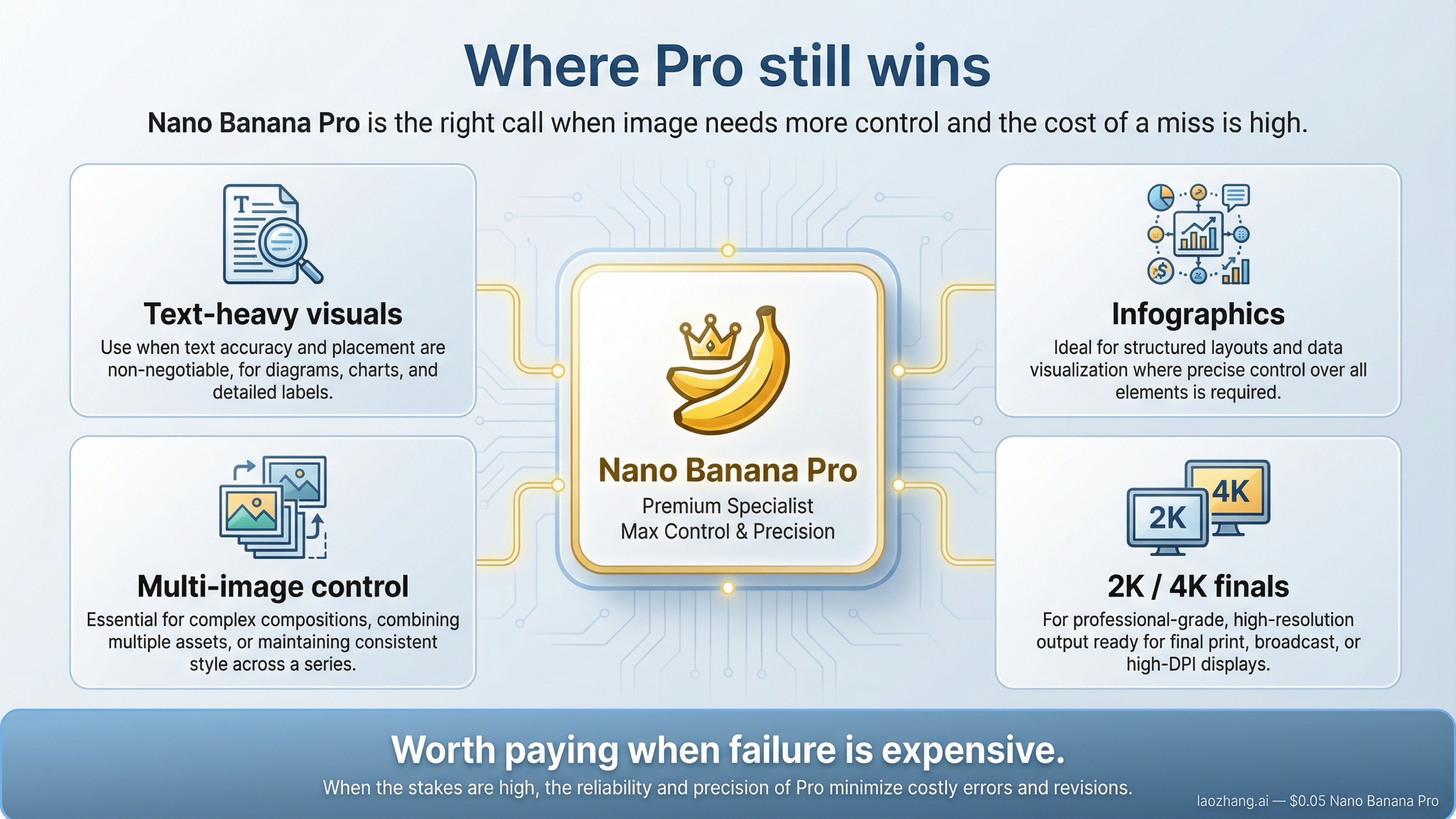

What Nano Banana Pro still does better than Nano Banana 2

Nano Banana Pro still earns its best reviews in the kinds of workflows where "almost right" wastes more time than paying more upfront. Google's original Nano Banana Pro launch post is still useful here, because it highlights exactly the cases the premium lane is supposed to serve: infographics, multilingual text, more controlled compositions, Google Search grounding, up to 14 input images, and 2K or 4K outputs. Those are not casual-use selling points. They are premium-production selling points.

The strongest current first-party evidence is the Gemini 3 Pro Image model card. Google says the model leads the GenAI-Bench image preference Elo ranking and specifically calls out strong text rendering and identity preservation for up to five people. That matters more than generic quality claims because it points to the exact kinds of work where users feel the difference quickly:

- poster and flyer mockups where text cannot look like decorative gibberish

- pitch-deck or report graphics where labels need to survive close inspection

- product or campaign concepts built from multiple references rather than one vague prompt

- premium hero images and presentation visuals where final polish matters more than raw throughput

This is where Nano Banana Pro still feels like a real upgrade rather than a branding exercise. Nano Banana 2 has inherited much of Google's current image-generation strength, and for many everyday tasks it is already enough. But when the job itself is text-heavy, layout-sensitive, or meant to look like a finished asset rather than a rough concept, Pro is still the model Google frames as the deliberate choice.

The API and enterprise route reinforce that premium positioning. The current Vertex AI model page still labels Gemini 3 Pro Image as Public Preview, supports up to 65,536 input tokens, and lists 4K output support. That does not make it automatically the better default, but it does explain why the premium case remains real. Google has not quietly turned Pro into a dead-end leftover. It still exists because there are jobs where the Flash lane is not enough.

So if your review question is really "Can Nano Banana Pro still earn a yes when the output matters?" the answer is absolutely yes. If your question is "Should I reach for it first every time?" that is where the answer turns into no.

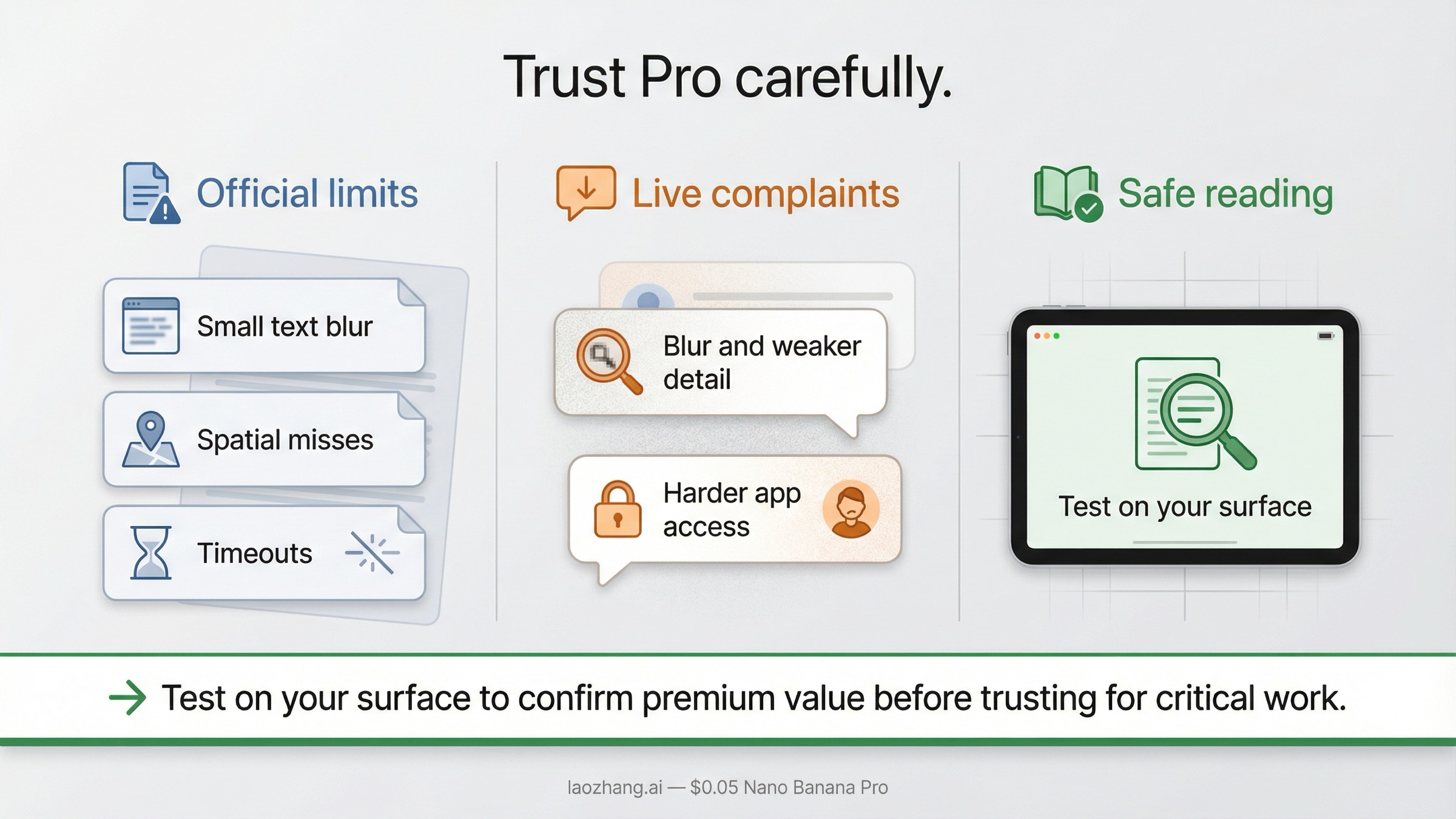

Where Nano Banana Pro still disappoints

A review that stops at the premium case is not a review. It is launch marketing with a timestamp on it.

The good news is that Google has already published the downside story itself. In the same model card that highlights benchmark leadership, Google also lists the failures that matter most in real use: small blurry text, missed spatial relations, and generated-image timeouts. Those are not minor footnotes. They tell you exactly where the model can still betray the kinds of premium workflows it is supposed to help.

That matters because Nano Banana Pro often gets judged hardest on the jobs where its wins are supposed to be decisive. If you are making a simple concept image, a little inconsistency is tolerable. If you are making an infographic, poster, packaging mockup, or client-facing hero visual, a tiny text failure can ruin the asset. That is the core tension of using Pro in 2026. Its best cases are valuable, but its misses are also more expensive.

The live community reaction adds another layer. Reddit threads like "Nano Banana Pro change..." and "Enshittification of Nano Banana Pro" show why review queries now carry anxiety instead of simple curiosity. Users are reporting blurrier results, weaker detail, and harder access in the Gemini app after March 10, 2026. Those threads are not official proof that Google's underlying Pro model suddenly became bad, but they do prove something important: trust around the app surface is shakier than many review pages admit.

This is where the review has to stay disciplined. Community complaints should not be inflated into fake release notes. But they should absolutely change the tone of the verdict. They mean you should not tell readers to trust Pro blindly just because the launch post and model card sound strong. The right advice is to treat Nano Banana Pro as a premium tool that still needs prompt-by-prompt validation on your actual surface, especially if that surface is the Gemini app instead of a direct API workflow.

One more official caveat matters for production teams. Google's rate-limit guidance says preview models can have more restrictive limits, applies quotas per project rather than per API key, and tells users to check active limits in AI Studio. That means Nano Banana Pro still carries more operational uncertainty than a finished, fully settled product lane would. For teams shipping something customer-facing, that should influence the review verdict just as much as any beauty-test prompt.
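One common way to absorb tighter preview limits in client code is retrying with exponential backoff and jitter. The sketch below is a generic pattern, not Google's recommended implementation: `RateLimited` is a hypothetical stand-in for whatever quota error your client library raises, and `call` is any zero-argument function that performs the request.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical stand-in for the quota error your client raises."""


def call_with_backoff(
    call: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `call` on RateLimited, doubling the delay ceiling each time."""
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: wait a random time between 0 and the ceiling.
            sleep(random.uniform(0, ceiling))
    raise ValueError("max_attempts must be at least 1")
```

Because quotas apply per project, every key in the same project shares the same budget, so rotating API keys will not help here. The `sleep` parameter is injectable only to make the function easy to test.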

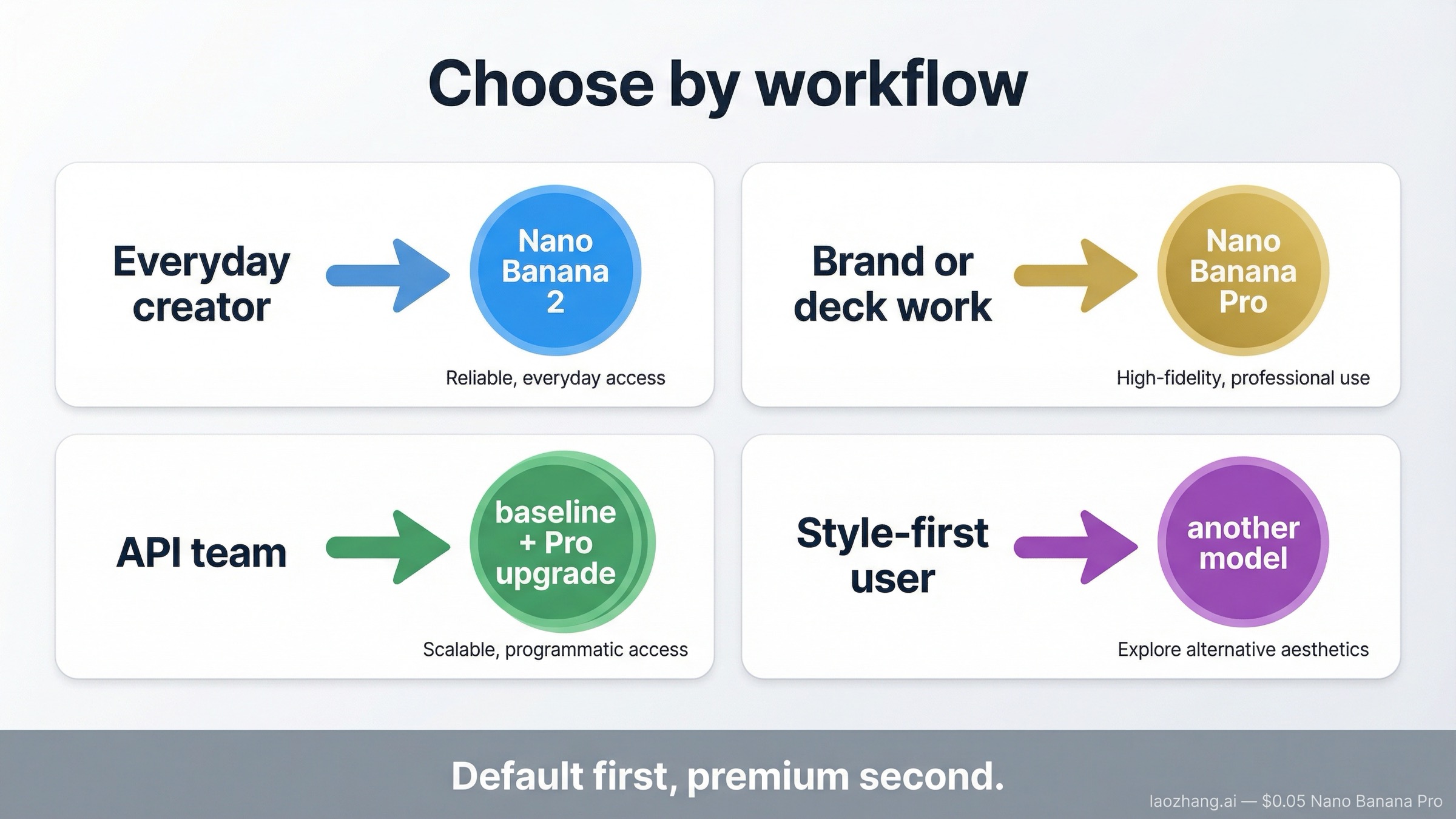

Who should still use Nano Banana Pro in 2026

The cleanest way to review Nano Banana Pro now is to stop asking whether it is "good" and start asking whether your workflow actually needs its premium edge.

If you mostly create social graphics, ad variants, blog visuals, concept mockups, or everyday image generations inside Google's ecosystem, you probably should not default to Pro anymore. Nano Banana 2 is cheaper, easier to justify, and now aligned with Google's own default recommendation. In that workflow, Pro is more likely to add friction than to rescue the result.

If you produce infographic-style visuals, presentation graphics, layout-heavy explainers, or branded marketing assets with embedded text, Nano Banana Pro is still worth serious consideration. That is the one cluster where the premium lane can save more time than it costs because the failure mode of "almost right" is so annoying. The stronger bet here is not that Pro is magically perfect. It is that its premium strengths line up with the specific things you care about most.

If you are a product or engineering team evaluating whether to expose Pro in an application, the right pattern is usually not "use Pro everywhere." It is "make Nano Banana 2 the baseline and let Pro be the premium or fallback route." That matches Google's current lineup, the published price difference, and the fact that many requests do not deserve a premium model call. If your real question is route design rather than review, the existing Nano Banana Pro API guide is the better next step.
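A minimal sketch of that baseline-plus-escalation pattern might look like the following. The `ImageRequest` fields, the `choose_model` function, and the "more than one reference image" threshold are all assumptions made for illustration. They encode this review's routing advice, not capability limits published by Google.

```python
from dataclasses import dataclass

BASELINE = "gemini-3.1-flash-image-preview"  # Nano Banana 2
PREMIUM = "gemini-3-pro-image-preview"       # Nano Banana Pro


@dataclass
class ImageRequest:
    # Hypothetical request attributes; not fields of any Google API.
    text_heavy: bool = False      # embedded text must survive close inspection
    resolution: str = "1K"        # "1K", "2K", or "4K"
    reference_images: int = 0     # number of input images to compose
    high_value: bool = False      # reused, reviewed closely, or customer-facing


def choose_model(req: ImageRequest) -> str:
    """Default to the baseline; escalate only for valuable, Pro-shaped jobs."""
    plays_to_pro_strengths = (
        req.text_heavy
        or req.resolution == "4K"
        or req.reference_images > 1  # assumed threshold for "multi-image"
    )
    if req.high_value and plays_to_pro_strengths:
        return PREMIUM
    return BASELINE


print(choose_model(ImageRequest()))
print(choose_model(ImageRequest(text_heavy=True, high_value=True)))
```

Both conditions must hold before the premium call is made. A text-heavy draft that nobody will ship stays on the baseline, which is the point of treating Pro as an escalation path.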

If you are choosing between Google and a different premium image model, the answer depends on what failure you hate most. If you care more about edit-heavy production, transparent backgrounds, or cheaper standard-size output, GPT Image 1.5 may fit better. If you care more about aesthetic exploration and style-first image making, Midjourney can still be the more natural home. Nano Banana Pro makes the most sense when the winning criteria are Google-native grounding, text-heavy visuals, multi-reference control, or premium 4K inside Google's own stack.

That is why the right recommendation is conditional but still actionable:

- Use Nano Banana 2 as your default.

- Use Nano Banana Pro when the asset itself is valuable enough to justify a premium pass.

- Look elsewhere when your real priority is a different kind of workflow entirely.

Is Nano Banana Pro worth the money and preview risk?

This part of the review does not need its own giant pricing article because that already exists in the repo. It just needs to answer whether the premium cost still makes sense.

On the current Gemini pricing page, Nano Banana Pro's official lane is priced at $0.134 per 1K or 2K image and $0.24 per 4K image, with batch pricing at $0.067 and $0.12 respectively. That is not outrageous if the result really saves you revisions or client pain. It is expensive if you are using it as a default for routine, throwaway, or exploratory work.
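To make those figures concrete, here is a small calculator that uses only the per-image prices quoted above. The names `PRO_PRICES` and `pro_cost` are illustrative, and the numbers should be rechecked against the live pricing page before budgeting.

```python
from decimal import Decimal

# USD per image for Nano Banana Pro, as quoted in this article.
# Key: (resolution, is_batch)
PRO_PRICES = {
    ("1K", False): Decimal("0.134"),
    ("2K", False): Decimal("0.134"),
    ("4K", False): Decimal("0.24"),
    ("1K", True): Decimal("0.067"),
    ("2K", True): Decimal("0.067"),
    ("4K", True): Decimal("0.12"),
}


def pro_cost(images: int, resolution: str, batch: bool = False) -> Decimal:
    """Total USD cost for `images` outputs at the given resolution."""
    if images < 0:
        raise ValueError("images must be non-negative")
    if (resolution, batch) not in PRO_PRICES:
        raise ValueError(f"unknown resolution: {resolution!r}")
    return PRO_PRICES[(resolution, batch)] * images


print(pro_cost(500, "2K"))              # standard rate
print(pro_cost(500, "2K", batch=True))  # batch rate
print(pro_cost(100, "4K"))
```

At these rates, 500 2K images come to $67 at the standard price and $33.50 in batch, and 100 4K images come to $24. That is modest for final assets, but it adds up quickly for exploratory iteration.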

The preview label matters just as much as the price. The current Vertex AI page still shows Pro as Public Preview, and Google's public rate-limit docs still point users back to AI Studio for active limits. In other words, the cost is not just dollars per image. The cost also includes workflow uncertainty. A premium model can still be worth it under those conditions, but only if the premium job is real.

That is why I would not answer the review query with a blanket "worth it" verdict. Nano Banana Pro is worth the price when:

- the image is likely to be reused, reviewed closely, or shipped to customers

- the text inside the image actually matters

- the layout or multi-image composition is hard enough that better control saves retries

- the business value of a cleaner first result is higher than the extra model cost
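The checklist above amounts to comparing the expected cost of one accepted asset on each model, counting human review time as well as the model price. The sketch below is one rough way to frame that comparison. Apart from the $0.134 Pro price quoted earlier, every number in it is a made-up placeholder, including the baseline price, the attempt counts, and the review cost, so substitute your own measurements.

```python
def cost_per_accepted_asset(
    price_per_image: float,
    expected_attempts: float,
    review_cost_per_attempt: float,
) -> float:
    """Expected spend to get one usable asset.

    Each attempt costs the model price plus the human time spent
    checking the result (text, layout, composition).
    """
    if expected_attempts < 1:
        raise ValueError("expected_attempts must be at least 1")
    return expected_attempts * (price_per_image + review_cost_per_attempt)


PRO_PRICE_2K = 0.134  # quoted in this article

# PLACEHOLDERS below: not real prices or measured retry rates.
placeholder_baseline_price = 0.05
placeholder_review_cost = 2.00  # USD of reviewer time per attempt

pro = cost_per_accepted_asset(PRO_PRICE_2K, 1.5, placeholder_review_cost)
baseline = cost_per_accepted_asset(placeholder_baseline_price, 3.0,
                                   placeholder_review_cost)

print("Pro is worth it" if pro < baseline else "Stay on the baseline")
```

Once review time is counted, the per-image price difference usually matters less than how many retries each model needs. That is why Pro pays off on text-heavy, closely reviewed assets and rarely on throwaway work.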

If you mainly want to understand the direct Google numbers, use Nano Banana Pro price in 2026. If your real question is cost posture across Google and other image models, Nano Banana Pro vs GPT Image 1.5 is the better branch. This review only needs the value judgment: Pro is worth paying for when the job really fits Pro.

Best alternatives when Nano Banana Pro is not the right answer

The best alternative inside Google's own stack is still Nano Banana 2. That is the easiest answer to defend because it comes from Google's current lineup logic, not from third-party brand positioning. If your main goal is fast general image generation and you still want to stay on Google's side of the ecosystem, Nano Banana 2 is the first thing to test and the most honest first recommendation. The dedicated Nano Banana 2 vs Nano Banana Pro guide goes deeper if that is the exact choice you are making.

The best alternative outside Google's stack depends on what makes you unhappy with Pro. If the problem is edit-heavy workflows, background control, or cheaper standard-size output, GPT Image 1.5 is the cleaner comparison because it is easier to budget and often easier to operationalize. If the problem is not quality but visual taste, atmosphere, or style exploration, Midjourney may still be the stronger alternative. The existing Nano Banana Pro vs Midjourney guide is the better follow-up for that route.

What I would not do is jump from "Pro is not the default" to "Pro is obsolete." That is not what Google's own docs say, and it is not what the strongest real use cases show. Pro still matters. It just matters for a narrower and more expensive class of jobs than many casual review pages pretend.

FAQ

Is Nano Banana Pro still the best Google image model?

Not as a universal default. Google's current docs point most users to Nano Banana 2 for all-around image generation. Nano Banana Pro is still the better choice when you need higher-fidelity text-heavy visuals, more complex composition, or premium 4K output.

Is Nano Banana Pro the same thing as Gemini 3 Pro Image Preview?

Yes. In Google's current naming, Nano Banana Pro maps to gemini-3-pro-image-preview. That naming correction matters because many exact-match pages never explain it cleanly.

Did Nano Banana Pro get worse in 2026?

The cautious answer is that users are clearly unhappy with parts of the Gemini app experience, especially in March 2026 Reddit threads, but that is not the same thing as an official statement that the underlying model was permanently downgraded. It is better to say trust has become more conditional, not to pretend there was no change at all.

Is Nano Banana Pro worth it in the Gemini app?

Sometimes, but much less often than older reviews suggest. Because Nano Banana 2 is now the default surface in Gemini, Pro works better as an upgrade path for specific high-value results than as the first thing you should reach for every time.

Is Nano Banana Pro worth it on the API?

Yes, when the request really needs what Pro does best. No, when you are paying premium-model prices for routine generation that Nano Banana 2 could already handle well enough.

Bottom line

Nano Banana Pro is still a good image model. It is just no longer the right answer to the broad question by default.

If you judge it as a premium specialist for text-heavy, layout-sensitive, multi-image, or 4K work, it still deserves a yes in 2026. If you judge it as the first model everyone should use for everyday Google image generation, the answer is now no.

That is the review verdict page one still needs: Nano Banana Pro is still worth it, but only when the job itself is premium enough to justify choosing something other than Nano Banana 2.