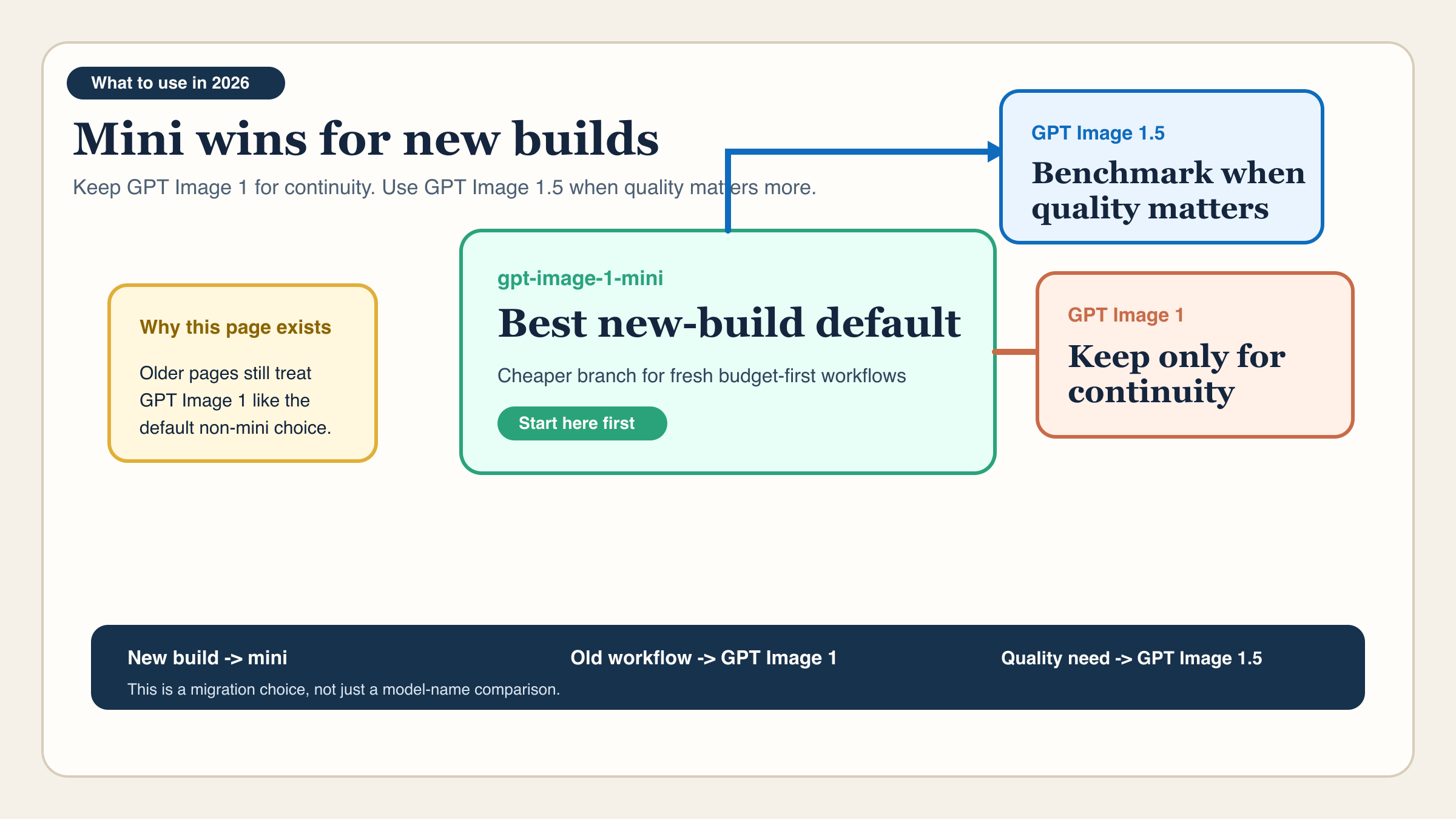

As of March 27, 2026, the cleanest answer is simple: for most new builds, choose gpt-image-1-mini over GPT Image 1. OpenAI's current model catalog describes GPT Image 1 as the previous image generation model, while it describes gpt-image-1-mini as a cost-efficient version of GPT Image 1. That means the budget-first comparison has largely been settled for new work. Mini is the current lower-cost branch. GPT Image 1 is mainly the legacy baseline.

That still leaves one important caveat. If your real complaint is not price but output quality, prompt adherence, or confidence on higher-value creative, the more useful modern comparison is often gpt-image-1-mini vs GPT Image 1.5, not mini versus GPT Image 1. OpenAI's current lineup makes that distinction clearer than many ranking pages do.

So the practical rule for this keyword is:

- choose gpt-image-1-mini for most new cost-sensitive builds

- keep GPT Image 1 only when an existing workflow depends on it

- benchmark GPT Image 1.5 if you are really asking for a better output lane

| If you need... | Best choice | Why |
|---|---|---|
| the better default for a fresh budget-first build | gpt-image-1-mini | It is the cheaper current OpenAI branch |
| short-term continuity for an older workflow | GPT Image 1 | It may preserve legacy prompt behavior while you migrate |
| stronger output rather than a legacy fallback | GPT Image 1.5 | It is the current flagship lane, not GPT Image 1 |

TL;DR

- Best default for a new build: gpt-image-1-mini

- Best reason to keep GPT Image 1: legacy continuity, not better value

- Current 1024x1024 low price: mini $0.005, GPT Image 1 $0.011

- Current 1024x1024 medium price: mini $0.011, GPT Image 1 $0.042

- Current 1024x1024 high price: mini $0.036, GPT Image 1 $0.167

- Current model positioning: GPT Image 1.5 is the flagship image lane, GPT Image 1 is the previous lane, and mini is the cheaper branch

- Best next read if quality is the real issue: GPT Image 1.5 pricing API

The fastest answer: choose mini for new builds, keep GPT Image 1 only for legacy continuity

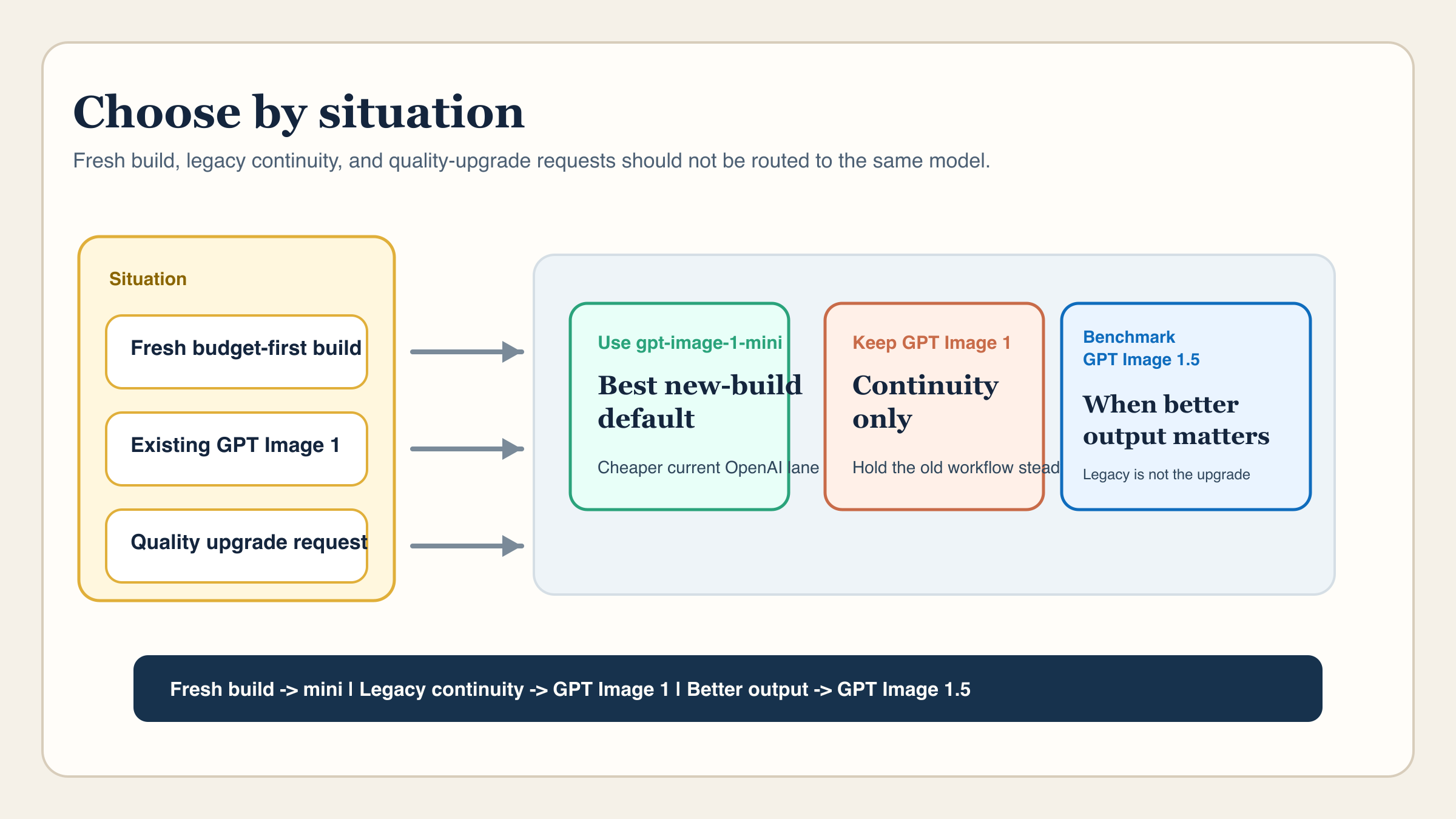

If you only want the routing answer, use this table and stop there.

| If your situation is... | Choose this model | Why | Main tradeoff |
|---|---|---|---|
| you are starting a new cost-sensitive image workflow | gpt-image-1-mini | It is OpenAI's current lower-cost branch and materially cheaper than GPT Image 1 across the visible price ladder | You are still choosing the budget lane, not the flagship |
| you already ship on GPT Image 1 and need continuity | GPT Image 1 | It may preserve prompt behavior, regression baselines, and workflow stability while you plan a cleaner migration | You are staying on the previous model, not the current default |
| you want better output rather than a legacy baseline | GPT Image 1.5 | It is OpenAI's current flagship image lane and the more relevant upgrade comparison | You pay substantially more than mini |
| you are not sure whether the flagship premium is worth it | Start with mini, then benchmark 1.5 | It gives you the cheapest current official OpenAI starting point for a controlled test | You still need to run the comparison on your own prompts |

That framing matters because many pages ranking for this keyword still treat GPT Image 1 as the obvious non-mini upgrade. OpenAI's current models directory does not support that reading anymore. It supports a different one: GPT Image 1 is the older baseline, mini is the cheaper branch, and GPT Image 1.5 is the current state-of-the-art lane.

So if your question is purely about which model belongs in a fresh build, the answer is not hard. Mini is the better starting point. The harder question is whether you have a reason to preserve GPT Image 1 anyway. That is a much narrower decision, and it is where a better comparison page has to be more specific than the current SERP average.
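The routing table above can be sketched as a small helper, for example to document the decision in a config module. This is a minimal sketch under stated assumptions: the `Situation` fields and the `choose_model` function are hypothetical names invented for illustration, and `"gpt-image-1.5"` is an assumed API id for GPT Image 1.5 that you should confirm on OpenAI's models page.

```python
from dataclasses import dataclass

# Model ids as used in this article. "gpt-image-1.5" is an ASSUMED id for
# GPT Image 1.5; confirm all three strings on OpenAI's models page.
MINI = "gpt-image-1-mini"
LEGACY = "gpt-image-1"
FLAGSHIP = "gpt-image-1.5"


@dataclass
class Situation:
    # Hypothetical fields that mirror the rows of the routing table.
    ships_on_gpt_image_1: bool    # an existing workflow already depends on GPT Image 1
    quality_is_the_problem: bool  # mini's output, not its price, is the real complaint


def choose_model(s: Situation) -> str:
    """Map the routing table to a model id (an illustrative rule, not an official one)."""
    if s.quality_is_the_problem:
        # Better output is the goal: benchmark the flagship, not the legacy model.
        return FLAGSHIP
    if s.ships_on_gpt_image_1:
        # Continuity case: keep the legacy lane while a migration is planned.
        return LEGACY
    # New or undecided cost-sensitive work starts on mini.
    return MINI
```

The quality check runs first on purpose: a team already on GPT Image 1 whose real complaint is output quality is routed to the flagship benchmark rather than back to the legacy baseline.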

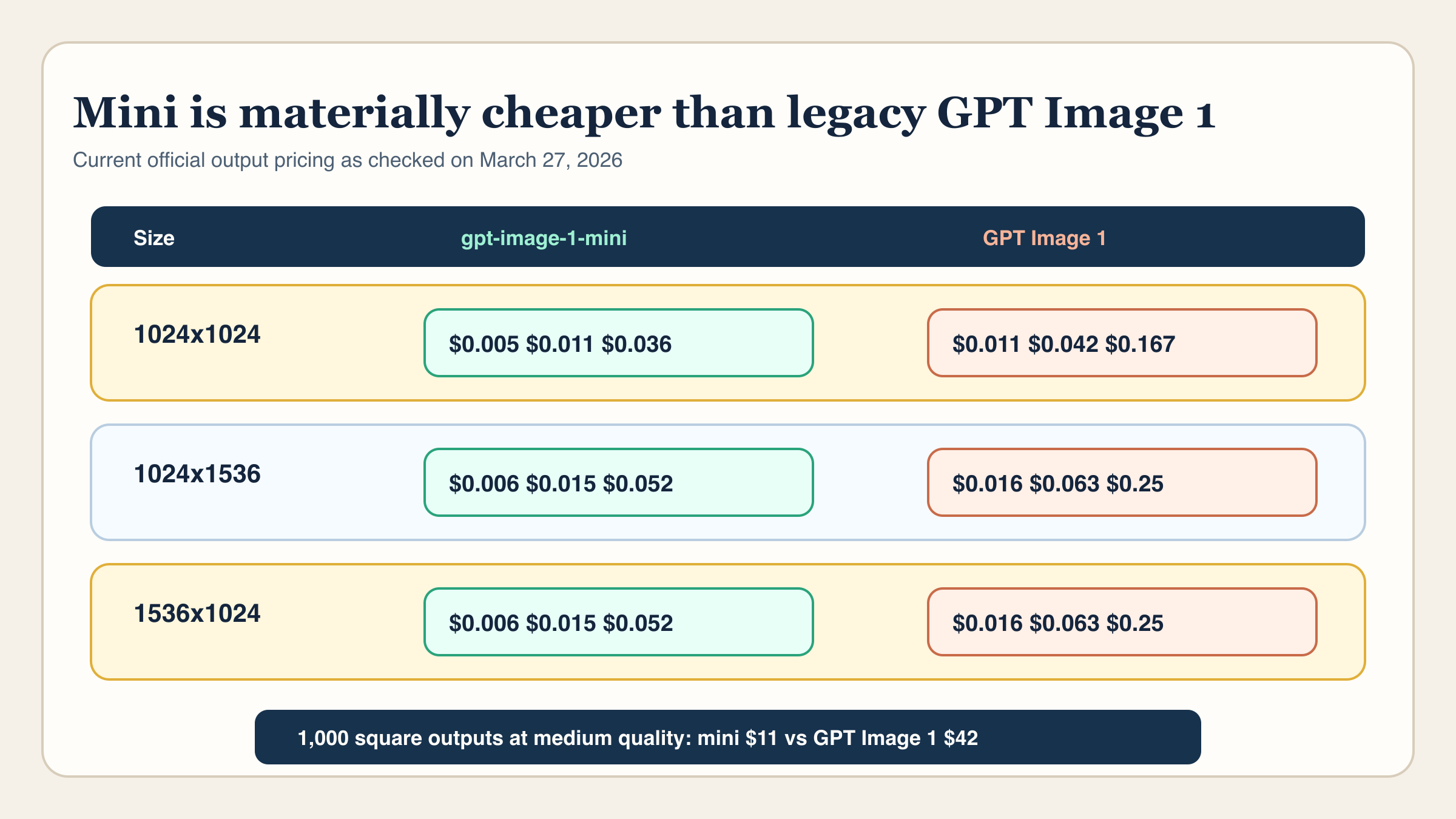

Current pricing: how much cheaper is gpt-image-1-mini than GPT Image 1?

On current official OpenAI model pages, mini is not just a little cheaper than GPT Image 1. The gap is large enough to change the default recommendation for new builds.

For 1024x1024 output pricing, OpenAI currently lists:

- gpt-image-1-mini: $0.005 low, $0.011 medium, $0.036 high

- GPT Image 1: $0.011 low, $0.042 medium, $0.167 high

For the larger 1024x1536 and 1536x1024 sizes, the same pattern holds:

- gpt-image-1-mini: $0.006 low, $0.015 medium, $0.052 high

- GPT Image 1: $0.016 low, $0.063 medium, $0.25 high

That is not an edge-case savings. At square low quality, GPT Image 1 costs more than 2x mini. At square medium, it is nearly 4x mini. At square high, it is more than 4.5x mini. If you multiply that across batch generation, prototype variants, or internal creative exploration, the old model stops looking like a sensible fresh default very quickly.

The same point is easier to feel in small workload math. For 1,000 square outputs, mini's current ladder works out to about $5 low, $11 medium, and $36 high. GPT Image 1 works out to about $11 low, $42 medium, and $167 high. That is the difference between a cheap exploratory lane and a legacy lane that can become expensive surprisingly fast once you leave toy volumes.
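The workload math above can be reproduced in a few lines, which makes it easy to rerun when prices or volumes change. This is a sketch that hard-codes the square 1024x1024 per-image prices quoted in this article; `batch_cost` and `legacy_multiple` are hypothetical helper names, and the prices should be re-checked against OpenAI's model pages before you rely on them.

```python
# Square 1024x1024 per-image output prices in USD, as quoted in this article
# (March 2026). Re-check OpenAI's model pages before relying on them.
SQUARE_PRICES = {
    "gpt-image-1-mini": {"low": 0.005, "medium": 0.011, "high": 0.036},
    "gpt-image-1": {"low": 0.011, "medium": 0.042, "high": 0.167},
}


def batch_cost(model: str, quality: str, images: int) -> float:
    """Estimated spend in USD for `images` square outputs at one quality tier."""
    return round(SQUARE_PRICES[model][quality] * images, 2)


def legacy_multiple(quality: str) -> float:
    """How many times more GPT Image 1 costs than mini at one quality tier."""
    return SQUARE_PRICES["gpt-image-1"][quality] / SQUARE_PRICES["gpt-image-1-mini"][quality]


for tier in ("low", "medium", "high"):
    print(
        f"{tier:>6}: mini ${batch_cost('gpt-image-1-mini', tier, 1000):.2f}, "
        f"GPT Image 1 ${batch_cost('gpt-image-1', tier, 1000):.2f}, "
        f"GPT Image 1 costs {legacy_multiple(tier):.1f}x mini"
    )
```

Swapping in the 1024x1536 prices from the second list is a matter of adding another price dictionary; the ratios stay in the same rough range.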

The token tables strengthen the same conclusion. OpenAI's current model pages list these headline token rates:

- Mini text input: $2.00 per 1M

- GPT Image 1 text input: $5.00 per 1M

- Mini image output: $8.00 per 1M

- GPT Image 1 image output: $40.00 per 1M

Those numbers do not mean every real invoice is a pure token exercise. The visible per-image ladder is still the faster planning shortcut for straightforward generation. But together, the per-image and token tables tell the same story: mini is not a sidegrade. It is the current cheaper OpenAI branch.

That is why the better follow-up for pure cost planning is GPT Image 1 Mini pricing. This article is narrower. It asks whether GPT Image 1 still deserves a place when mini is already cheaper on the official price sheet.
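If you do want to estimate cost from token counts instead of the per-image ladder, the headline rates quoted above translate directly into a per-call formula. This is a minimal sketch: `token_cost` is a hypothetical helper, it covers only the text-input and image-output rates listed here (image-input and cached-input rates are left out), and the token counts in the example are made-up placeholders, not measured values.

```python
# Headline token rates in USD per 1M tokens, as quoted in this article.
# Image-input and cached-input rates are deliberately left out of this sketch.
TOKEN_RATES = {
    "gpt-image-1-mini": {"text_input": 2.00, "image_output": 8.00},
    "gpt-image-1": {"text_input": 5.00, "image_output": 40.00},
}


def token_cost(model: str, text_input_tokens: int, image_output_tokens: int) -> float:
    """USD cost for one call, given token counts taken from your own usage data."""
    rates = TOKEN_RATES[model]
    return (
        text_input_tokens * rates["text_input"]
        + image_output_tokens * rates["image_output"]
    ) / 1_000_000


# Hypothetical token counts, chosen only to show the ratio between models;
# real counts depend on image size, quality, and prompt length.
prompt_tokens, output_tokens = 200, 4_000
for model in TOKEN_RATES:
    print(model, f"${token_cost(model, prompt_tokens, output_tokens):.4f}")
```

Because image output dominates the bill for short prompts, the 5x gap in image-output rates drives most of the difference, which matches the per-image ladder.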

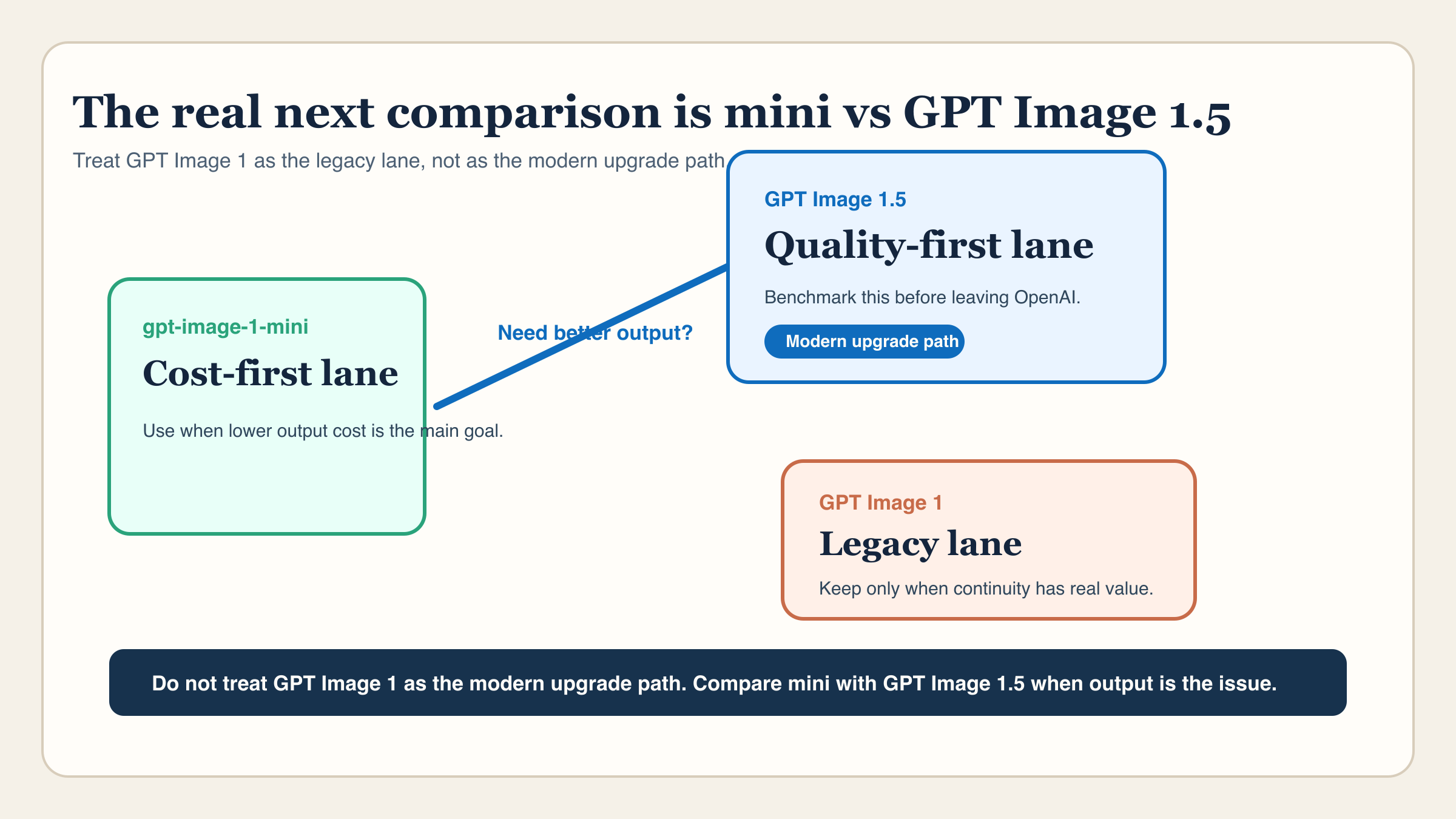

Why GPT Image 1 is now the legacy baseline, not the modern upgrade

This is the section most comparison pages dodge.

OpenAI's current GPT Image 1 model page describes GPT Image 1 as the previous image generation model. That one phrase changes the interpretation of the whole keyword. It means GPT Image 1 should now be read as a legacy reference point, not as the recommended non-mini choice for fresh work.

That matters because older tutorials, playground screenshots, and code examples still mention GPT Image 1. When people search gpt-image-1-mini vs gpt-image-1, some of what they are really asking is: "Is the full-size older model still the safer choice?" Current OpenAI positioning suggests the answer is usually no.

The wrong mental model is this:

- mini is the cheap version

- GPT Image 1 is the main version

- therefore GPT Image 1 must be the safer serious-production choice

The current lineup supports a different model:

- mini is the current lower-cost branch

- GPT Image 1 is the older baseline

- GPT Image 1.5 is the serious current premium lane

Once you accept that structure, the keyword becomes much easier to answer. GPT Image 1 stops being the model you recommend by habit, and becomes the model you keep only when continuity has real value.

This is also where OpenAI's current image generation guide helps. The guide groups GPT Image 1.5, GPT Image 1, and GPT Image 1 Mini together in one current reference, which makes it easier to see that GPT Image 1 is still supported in the family but no longer sits at the top of it.

When the real next comparison is gpt-image-1-mini vs GPT Image 1.5

If you are reading this because mini feels too weak, there is a good chance GPT Image 1 is not the answer you actually want.

OpenAI's current GPT Image 1.5 model page describes GPT Image 1.5 as the latest state-of-the-art image generation model. On its current price table, square 1024x1024 generation is listed at $0.009 low, $0.034 medium, and $0.133 high.

That pricing does two things at once.

First, it confirms that GPT Image 1.5 is materially more expensive than mini. At square medium, $0.034 is more than 3x mini's $0.011. At square high, $0.133 is much closer to GPT Image 1's $0.167 than to mini's $0.036. So the flagship is not the casual answer for a cost-first workflow.

Second, it makes GPT Image 1.5 the more honest answer when your real concern is output quality. If you are comparing mini against GPT Image 1 only because you assume the non-mini older model is the natural upgrade, you are probably asking the wrong question.

The better question is:

Do I want the cheapest current lane that still works, or do I want the current premium lane because retries, weak prompt following, or higher-value output are costing me more than the price difference?

That is a cleaner operator question than mini vs GPT Image 1. It is also why this article keeps GPT Image 1.5 as a controlled caveat instead of turning into a different keyword entirely. The comparison still matters, because people searching mini versus GPT Image 1 need help deciding whether GPT Image 1 should stay in the picture at all. But once the answer becomes "I need better output," GPT Image 1.5 is the current benchmark that belongs in the room.
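The "retries versus price difference" question can be put into numbers with a simple break-even calculation. This sketch assumes each attempt is kept independently with a fixed keep rate, so expected attempts per kept image are `1 / keep_rate`; it counts API spend only, not reviewer time. The function names are hypothetical, and the prices are the square figures quoted in this article.

```python
# Square 1024x1024 per-image prices (USD) quoted in this article; re-check before use.
SQUARE_PRICES = {
    "gpt-image-1-mini": {"low": 0.005, "medium": 0.011, "high": 0.036},
    "gpt-image-1.5": {"low": 0.009, "medium": 0.034, "high": 0.133},
}


def cost_per_accepted(price: float, keep_rate: float) -> float:
    """Expected API spend per kept image when each attempt is kept with probability keep_rate."""
    if not 0 < keep_rate <= 1:
        raise ValueError("keep_rate must be in (0, 1]")
    # Independent retries at a fixed keep rate need 1 / keep_rate attempts on average.
    return price / keep_rate


def mini_break_even_keep_rate(quality: str, flagship_keep_rate: float = 1.0) -> float:
    """Mini keep rate below which GPT Image 1.5 is cheaper per accepted image."""
    mini = SQUARE_PRICES["gpt-image-1-mini"][quality]
    flagship = SQUARE_PRICES["gpt-image-1.5"][quality]
    # Solve mini / p_mini == flagship / p_flagship for p_mini.
    return flagship_keep_rate * mini / flagship


for tier in ("low", "medium", "high"):
    print(tier, f"{mini_break_even_keep_rate(tier):.0%}")
```

Under these assumptions, even a flagship that keeps every output only beats mini on API spend at square medium if mini keeps fewer than roughly a third of its outputs. Reviewer time, which this sketch ignores, can move that line considerably, which is why the benchmark below measures real keep rates.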

When it still makes sense to keep GPT Image 1

GPT Image 1 is not useless. It is just narrow now.

The best reason to keep it is continuity.

That can mean a few different things:

- you already have approved prompts and workflows built around GPT Image 1 behavior

- you need regression stability while comparing a new lane

- you have internal quality benchmarks tied to GPT Image 1 output

- you want to avoid changing too many variables at the same time during a migration

Those are legitimate reasons. They are just very different from saying GPT Image 1 is the best default for a new build.

This distinction matters operationally. A team may rationally keep GPT Image 1 for thirty days while it tests mini for volume work and GPT Image 1.5 for premium work. That is a migration strategy. It is not the same as recommending GPT Image 1 to someone starting from zero today.

There is also less technical reason than many people assume to preserve GPT Image 1 forever. The current OpenAI model pages list the same major API surfaces for these models, including the Responses API, the Image API, and the Batch API. So the argument for GPT Image 1 is usually not "it is the only route that fits our stack." The real argument is much narrower: "we already tuned our workflow around it, and changing now would add migration risk."

There is also a workflow-surface nuance worth keeping in mind. OpenAI's current image guide says the Image API is the best choice for single-image generation or edits, while the Responses API is better for conversational and multi-step image flows. If a team feels friction in production, sometimes the model is only half the issue, and the better fix is to keep the model family and choose the right surface. That is why the OpenAI image API tutorial is a relevant companion if your problem is not purely model selection.
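For the single-image path, a call through the Image API with the official `openai` Python SDK looks roughly like the sketch below. It assumes `client` is an `openai.OpenAI()` instance (passed in so the function can be tested without network access); `generate_square_image` is a hypothetical helper name, and the default model and quality are just this article's budget-first choices.

```python
import base64
from pathlib import Path


def generate_square_image(client, prompt: str, out_path: str,
                          model: str = "gpt-image-1-mini",
                          quality: str = "low") -> Path:
    """Generate one 1024x1024 image via the Image API and save it to out_path.

    `client` is expected to be an `openai.OpenAI()` instance, or any object
    exposing the same `images.generate` method.
    """
    result = client.images.generate(
        model=model,
        prompt=prompt,
        size="1024x1024",
        quality=quality,
    )
    # GPT Image models return base64-encoded image data rather than a URL.
    image_bytes = base64.b64decode(result.data[0].b64_json)
    path = Path(out_path)
    path.write_bytes(image_bytes)
    return path
```

In real use you would pass `OpenAI()` from the `openai` package (which reads `OPENAI_API_KEY` from the environment) as `client`. Switching lanes for a benchmark is then a one-argument change to `model`, which keeps the surface fixed while only the model varies.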

How I would benchmark the switch in one afternoon

The wrong way to compare mini and GPT Image 1 is to ask which one is "better" in the abstract. The right way is to test the exact thing that matters in your workflow.

I would do it in this order:

- Run 10 to 20 real prompts that reflect your actual output mix, not showcase prompts.

- Compare mini against your current GPT Image 1 setup on keep rate, retry count, and reviewer confidence.

- If mini loses mainly on quality, run the same set once on GPT Image 1.5.

- Separate fresh-build logic from migration logic. A new build can choose mini immediately even if an old build still needs GPT Image 1 for continuity.

- Record which lane wins on cost per accepted output, not just cost per call.

That last step is the one weak comparison pages skip. If mini costs less per call and gets accepted often enough, it wins for budget-first work. If GPT Image 1.5 costs more per call but reduces retries enough to cut total effort, it becomes the better premium lane. GPT Image 1 only wins when the continuity cost of changing away from it is still higher than the cost of keeping it for a little longer.
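Recording "cost per accepted output" per lane only needs a small tally over your benchmark runs. This is a minimal sketch: the `Trial` record and `cost_per_accepted_output` function are hypothetical names, it counts API spend only (not reviewer effort), and the trial data and prompt ids in the example are made up purely to show the shape of the input.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Trial:
    lane: str       # model id under test
    prompt_id: str  # one of your 10 to 20 real prompts
    attempts: int   # calls made for this prompt, including retries
    accepted: bool  # whether a reviewer kept an output in the end


def cost_per_accepted_output(trials, price_per_call):
    """API spend per lane divided by the number of accepted outputs in that lane."""
    spend = defaultdict(float)
    kept = defaultdict(int)
    for t in trials:
        spend[t.lane] += t.attempts * price_per_call[t.lane]
        kept[t.lane] += int(t.accepted)
    # A lane with zero accepted outputs has no finite cost per accepted output.
    return {lane: spend[lane] / kept[lane] if kept[lane] else float("inf")
            for lane in spend}


# Made-up results, for illustration only.
trials = [
    Trial("gpt-image-1-mini", "hero-banner", attempts=3, accepted=True),
    Trial("gpt-image-1-mini", "product-shot", attempts=2, accepted=False),
    Trial("gpt-image-1", "hero-banner", attempts=1, accepted=True),
    Trial("gpt-image-1", "product-shot", attempts=1, accepted=True),
]
prices = {"gpt-image-1-mini": 0.011, "gpt-image-1": 0.042}  # square medium, per this article
print(cost_per_accepted_output(trials, prices))
```

With this made-up data the legacy lane wins despite its higher per-call price, which is exactly the kind of result the per-call view hides. Your own prompts decide which way it goes.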

If I were running the migration in a real team, I would keep the decision window short. Give mini a real test on the same prompts. Give GPT Image 1.5 one controlled premium benchmark. If mini is already good enough, stop paying for the legacy baseline. If mini is not good enough, skip the sentimental middle step and test the current flagship. The one route that rarely makes sense now is choosing GPT Image 1 for a net-new workflow just because it sounds like the fuller version of mini.

Bottom line

For a fresh build in March 2026, gpt-image-1-mini is usually the right answer and GPT Image 1 is not. Mini is the cheaper current branch. GPT Image 1 is the previous baseline. If you need better output rather than legacy continuity, compare mini with GPT Image 1.5, not with GPT Image 1.

That is the cleanest current reading of the keyword. Use mini as the default for new budget-sensitive work. Keep GPT Image 1 only when continuity has real value. Upgrade your benchmark to GPT Image 1.5 when quality, not history, is the real reason you are reconsidering the model.