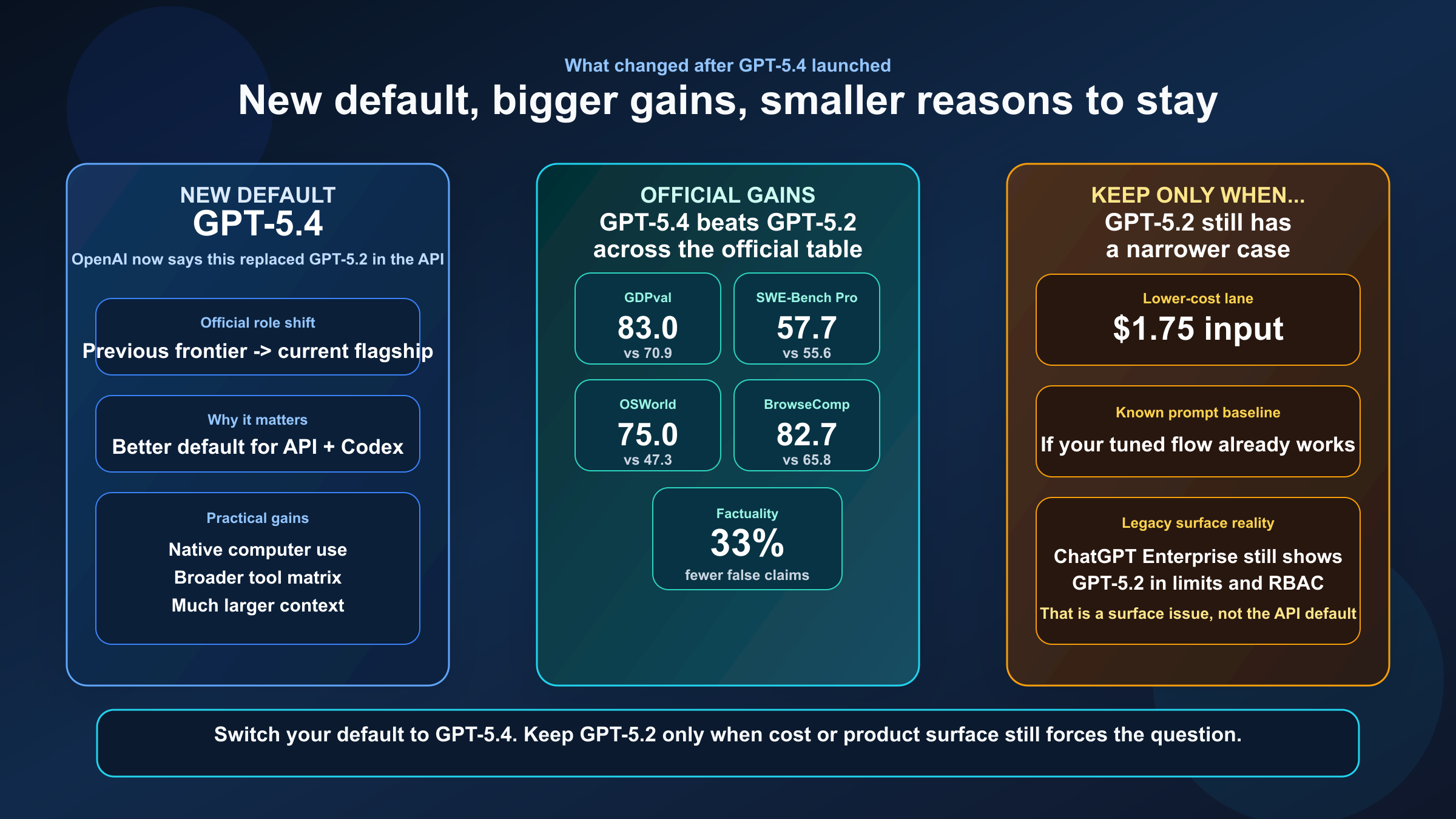

Short answer: for most teams, GPT-5.4 is the better default. OpenAI launched GPT-5.4 on March 5, 2026 and now recommends it as the flagship model for complex reasoning and coding. According to OpenAI's current model-selection guidance, GPT-5.4 replaced GPT-5.2 as the frontier default in the API and works as a drop-in replacement for most GPT-5.2 integrations.

That does not mean GPT-5.2 is useless. GPT-5.2, launched on December 11, 2025, is still cheaper on input and cached input, and it still shows up in some ChatGPT Enterprise model-limit guidance. If your workload is cost-sensitive, already tuned around GPT-5.2, or tied to a model-picker surface where GPT-5.4 is not the real default, GPT-5.2 can still be the smarter tactical choice.

This guide uses current OpenAI launch pages, current API model docs, and current help-center articles checked on March 19, 2026. It also separates the durable API and Codex recommendation from the messier ChatGPT and Enterprise reality, because that is where many comparison pages still lose users.

TL;DR

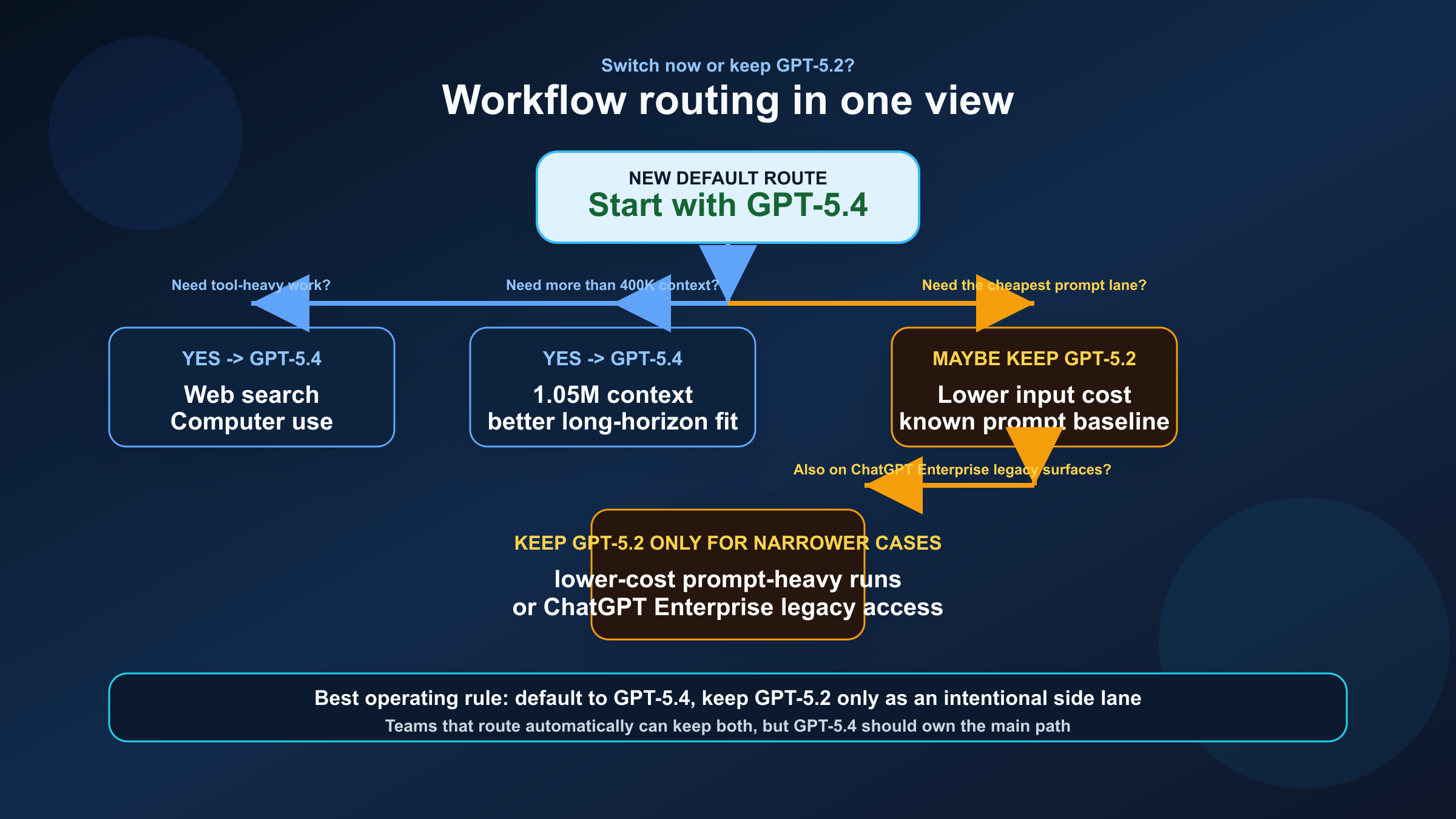

If you only want one recommendation, choose GPT-5.4 for most new API and Codex work. Keep GPT-5.2 only when lower prompt cost, a mature existing setup, or ChatGPT Enterprise surface constraints matter more than stronger tool support and a much larger context window.

| Category | GPT-5.4 | GPT-5.2 | Practical takeaway |

|---|---|---|---|

| Launch date | March 5, 2026 | December 11, 2025 | GPT-5.4 is the newer frontier default |

| Current official role | Flagship for complex reasoning and coding | Previous frontier model | GPT-5.4 is the default recommendation now |

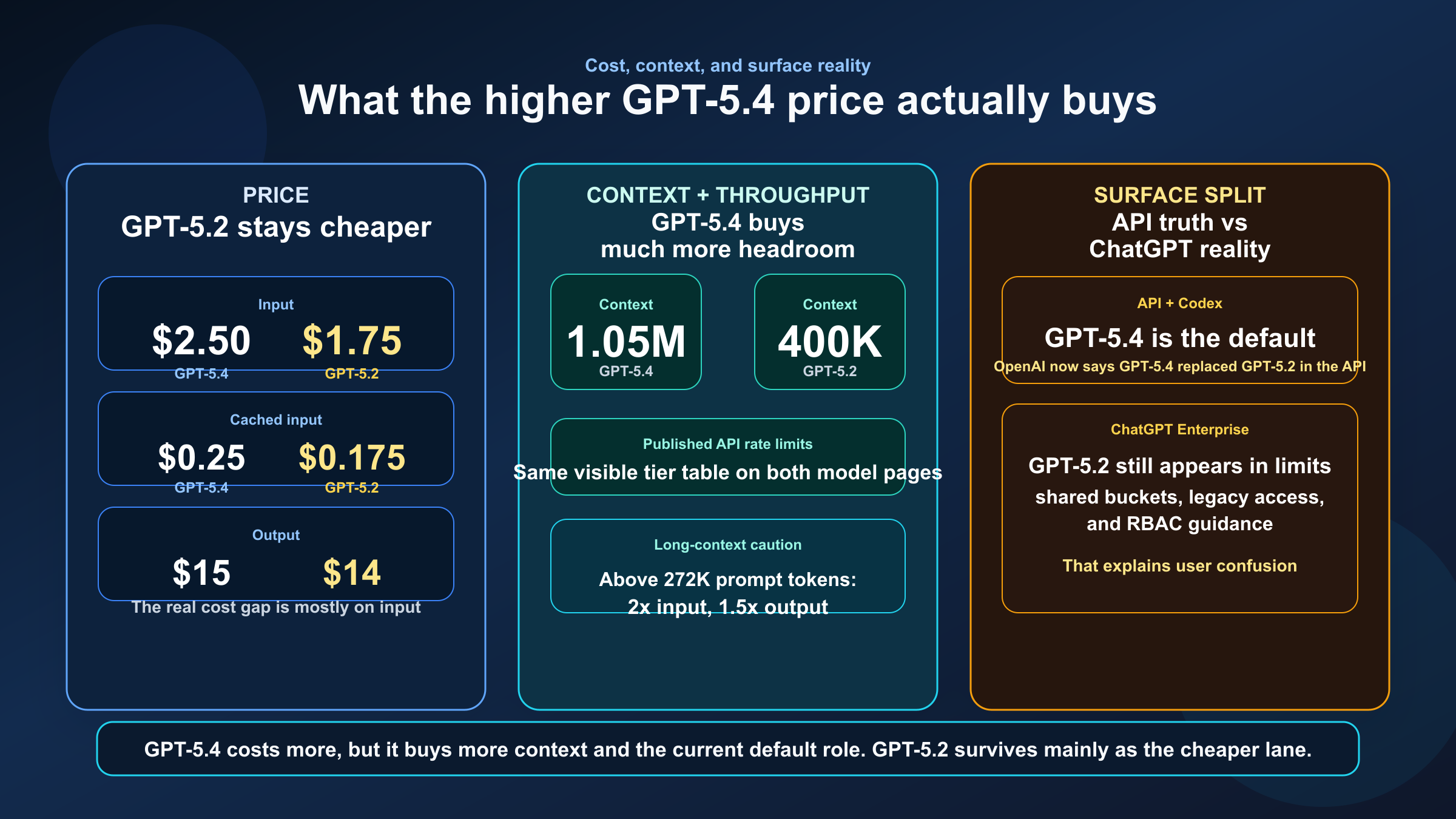

| Input price | $2.50 / 1M | $1.75 / 1M | GPT-5.2 is 30% cheaper on input |

| Cached input | $0.25 / 1M | $0.175 / 1M | GPT-5.2 stays cheaper for repeat-heavy flows |

| Output price | $15 / 1M | $14 / 1M | Output gap is small |

| Context window | 1,050,000 | 400,000 | GPT-5.4 is much better for long repo and document work |

| Max output | 128,000 | 128,000 | Tie |

| Published API rate limits | Same visible tiers as GPT-5.2 | Same visible tiers as GPT-5.4 | This is not really a throughput-limit story |

| Tool posture | Explicit broad Responses API tool matrix | Older frontier tool-calling posture | GPT-5.4 is the better agentic default |

| Best fit | New default for API, Codex, long-context, and tool-heavy workflows | Lower-cost prompt-heavy runs and legacy-surface cases | Use GPT-5.4 by default, keep GPT-5.2 intentionally |

The cleanest way to think about this comparison is simple: GPT-5.4 should replace GPT-5.2 as your default model, but GPT-5.2 should not necessarily disappear from your stack.

What Actually Changed From GPT-5.2 to GPT-5.4

The biggest change is not just that GPT-5.4 is newer. The real change is that OpenAI's own product guidance now treats GPT-5.4 as the center of gravity for serious work.

GPT-5.2 launched on December 11, 2025 as OpenAI's most advanced frontier model for professional work and long-running agents. Its launch emphasized spreadsheets, presentations, agentic coding, long-context reasoning, vision, and tool calling. At that time, GPT-5.2 was the answer to "what should I use when I need the strongest general OpenAI model?"

GPT-5.4 changed that on March 5, 2026. OpenAI now describes GPT-5.4 as the frontier model for professional work and says it combines recent advances in reasoning, coding, and agentic workflows into one default model. More importantly for this comparison, OpenAI's latest-model guide says GPT-5.4 replaced GPT-5.2 in the API and is a drop-in replacement for most GPT-5.2 integrations.

That official language matters because it answers the real question behind the comparison. Most readers are not trying to decide which model had the more impressive launch day. They are asking whether GPT-5.2 is still the default they should build around. OpenAI's own answer is now no.

GPT-5.4 also changed what "general-purpose" means in practice. OpenAI's launch page says GPT-5.4 is the first general-purpose model it has released with native, state-of-the-art computer-use capabilities and up to 1 million tokens of context. That is a major difference from GPT-5.2's role. GPT-5.2 already pushed long-context and tool-using workflows forward, but GPT-5.4 turns those capabilities into a more complete agentic platform.

This is why the right comparison is not "GPT-5.4 versus GPT-5.2 on one benchmark." The real comparison is:

- previous frontier default versus current frontier default

- strong tool-calling and long-context model versus broader agentic workhorse

- lower-cost mature path versus higher-ceiling default path

If you treat the comparison that way, the rest of the decision becomes much clearer.

Benchmarks and Capability Gains That Matter

OpenAI's GPT-5.4 launch page directly compares GPT-5.4 against GPT-5.2 across several benchmarks that map well to real work:

| Area | GPT-5.4 | GPT-5.2 | Why it matters |
|---|---|---|---|
| GDPval | 83.0% | 70.9% | Better performance on real professional knowledge-work tasks |
| SWE-Bench Pro | 57.7% | 55.6% | Better on tough software engineering tasks |
| OSWorld-Verified | 75.0% | 47.3% | Much stronger computer-use and GUI-task performance |
| Toolathlon | 54.6% | 46.3% | Better at multi-tool workflows |
| BrowseComp | 82.7% | 65.8% | Better at search-heavy, evidence-driven tasks |

The most important thing here is not that GPT-5.4 wins every line. It is where it wins.

The GDPval jump from 70.9% to 83.0% matters because GPT-5.4 is not only a coding upgrade. It is better at the mixed professional work that surrounds coding: reading documents, creating deliverables, synthesizing sources, and carrying complex tasks across multiple steps. If your real workflows mix engineering, analysis, writing, and tooling, that is exactly the kind of gain that changes daily output quality.

The SWE-Bench Pro difference is smaller, but it still points in the same direction. GPT-5.4 is at least a bit stronger on hard software-engineering tasks while also being better at the surrounding multi-step work. That matters because most production engineering is not just "write the patch." It is interpreting the issue, inspecting the codebase, using tools, verifying assumptions, and making a clean decision under uncertainty.

The largest practical gap is in computer use and tool-heavy work. GPT-5.4's OSWorld-Verified score jumps far above GPT-5.2, and OpenAI explicitly frames GPT-5.4 as the first general-purpose model with native, state-of-the-art computer use. If your workflows involve browser navigation, screenshots, UI actions, external tools, or longer-running agents, that is not a minor improvement. It changes which model deserves to be your default route.
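To make the "where it wins" point concrete, here is a minimal sketch that ranks the five benchmarks by percentage-point gap. The scores are copied from the table above; the `SCORES` dictionary and the `ranked_gaps` helper are illustrative names, not anything OpenAI publishes.

```python
# Benchmark scores (percent) copied from the comparison table:
# name -> (GPT-5.4 score, GPT-5.2 score)
SCORES = {
    "GDPval": (83.0, 70.9),
    "SWE-Bench Pro": (57.7, 55.6),
    "OSWorld-Verified": (75.0, 47.3),
    "Toolathlon": (54.6, 46.3),
    "BrowseComp": (82.7, 65.8),
}


def ranked_gaps(scores):
    """Return (benchmark, gap in percentage points), largest gap first."""
    gaps = [(name, round(new - old, 1)) for name, (new, old) in scores.items()]
    return sorted(gaps, key=lambda item: item[1], reverse=True)


for name, gap in ranked_gaps(SCORES):
    print(f"{name}: +{gap} points")
# OSWorld-Verified: +27.7 points
# BrowseComp: +16.9 points
# GDPval: +12.1 points
# Toolathlon: +8.3 points
# SWE-Bench Pro: +2.1 points
```

Sorted this way, the computer-use and search-heavy benchmarks lead, and the pure software-engineering benchmark shows the smallest gap.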

OpenAI also says GPT-5.4 is more factual than GPT-5.2: its individual claims are 33% less likely to be false, and its full responses are 18% less likely to contain any errors relative to GPT-5.2. That matters for this comparison because many teams are no longer choosing models only for code generation. They are choosing models for code plus reasoning plus decision support.

There is one more benchmark-like detail worth paying attention to: OpenAI says GPT-5.4 uses significantly fewer tokens to solve problems when compared to GPT-5.2. Even without reducing the comparison to one latency number, that is an important signal. Better raw quality plus lower token use in harder workflows is exactly the kind of improvement that makes a new default real rather than cosmetic.

So the benchmark conclusion is not subtle:

- GPT-5.4 is stronger overall

- GPT-5.4 is especially stronger in long-horizon agentic work

- GPT-5.2 still looks respectable, but it no longer defines the frontier

If you want a useful sibling comparison for the OpenAI-side coding lane, our guide to GPT-5.4 vs GPT-5.3-Codex is worth reading after this one because it shows where the newer flagship still competes with a more specialized path.

Pricing, Context Window, and Published API Rate Limits

This is where GPT-5.2 still keeps a serious argument.

| Feature | GPT-5.4 | GPT-5.2 | Why it matters |
|---|---|---|---|
| Input | $2.50 / 1M | $1.75 / 1M | GPT-5.2 is cheaper when prompt volume dominates |
| Cached input | $0.25 / 1M | $0.175 / 1M | GPT-5.2 remains cheaper in repeat-heavy flows |
| Output | $15 / 1M | $14 / 1M | Output cost difference is small |
| Context window | 1,050,000 | 400,000 | GPT-5.4 can hold much larger repos and document sets in one session |
| Max output | 128,000 | 128,000 | No meaningful difference here |
| Long-context pricing caveat | >272K input triggers 2x input and 1.5x output for the full session | No equivalent published multiplier | GPT-5.4's giant context is powerful, but not free |
| Published rate limits | Same visible tier table as GPT-5.2 | Same visible tier table as GPT-5.4 | Throughput caps are not the deciding factor |

The real pricing story is straightforward. GPT-5.4 is more expensive, but not massively more expensive across every dimension. The output gap is small: $15 versus $14 per million. The main delta is input and cached input, where GPT-5.2 keeps a real edge.

That matters in prompt-heavy workflows. If your system repeatedly sends large instructions, repo context, or long repeated blocks, GPT-5.2 can still be the more economical choice. If the work does not need GPT-5.4's broader tool posture or larger context window, the cheaper model can still be rational.
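A rough per-request cost comparison makes the input and cached-input edge easier to see. The sketch below uses only the base prices from the table above and deliberately ignores GPT-5.4's long-context surcharge, so it applies to requests under 272K input tokens. The `Pricing` class, the `request_cost` helper, and the model-name strings are illustrative labels, not official identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per 1M tokens, copied from the pricing table."""
    input: float
    cached_input: float
    output: float


PRICES = {
    "gpt-5.4": Pricing(input=2.50, cached_input=0.25, output=15.00),
    "gpt-5.2": Pricing(input=1.75, cached_input=0.175, output=14.00),
}


def request_cost(model: str, input_tokens: int, cached_tokens: int,
                 output_tokens: int) -> float:
    """Estimate the USD cost of one request.

    cached_tokens is the portion of input_tokens billed at the cached rate.
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    p = PRICES[model]
    fresh_tokens = input_tokens - cached_tokens
    total = (fresh_tokens * p.input
             + cached_tokens * p.cached_input
             + output_tokens * p.output)
    return total / 1_000_000


# Example: a prompt-heavy call with 100K input tokens (80K of them cached)
# and a short 2K-token answer.
for model in PRICES:
    cost = request_cost(model, input_tokens=100_000, cached_tokens=80_000,
                        output_tokens=2_000)
    print(f"{model}: ${cost:.3f}")
# gpt-5.4: $0.100
# gpt-5.2: $0.077
```

In this example GPT-5.2 comes out about 23% cheaper per call. The gap shrinks as output tokens take up a larger share of the bill, because output pricing is nearly the same on both models.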

The context window difference is larger than the price difference. GPT-5.4's 1.05M context window is roughly 2.6 times GPT-5.2's 400K, while its input price is only about 1.4 times higher. For repo-scale analysis, multi-document synthesis, and long-running agent sessions, that is a meaningful upgrade. It lets you hold more of the problem in one place and reduces the need for aggressive truncation or summarization.

But this is exactly where weaker comparison pages get sloppy. OpenAI's current GPT-5.4 model page also says that prompts above 272K input tokens are charged at 2x input and 1.5x output for the full session. So the giant context window is not a free bonus. It is a bigger ceiling that you should use when the workflow genuinely benefits from it.

That leads to a better rule:

- If your workload is long-context enough to benefit from 1.05M context, GPT-5.4 is usually worth it.

- If your workload fits comfortably inside GPT-5.2's 400K context and cost matters, GPT-5.2 still has a case.

One more useful nuance: the published API rate-limit tables on the current GPT-5.4 and GPT-5.2 model pages are effectively the same across the visible usage tiers. That means you should not turn this into a false "which model gets more RPM or TPM?" comparison. On the published API side, the decision is mainly about model capability, context, tools, and cost.

If you need broader budgeting context, our existing OpenAI API pricing guide and OpenAI API cost guide are useful follow-up reads once you have decided which model belongs in the default lane.

API and Codex Guidance vs ChatGPT and Enterprise Reality

This is the section many comparison pages skip, and it is one reason users keep feeling that different articles are talking about different products.

On the API and Codex side, the story is clean:

- GPT-5.4 is the new flagship default

- GPT-5.4 replaced GPT-5.2 in the API according to OpenAI's latest-model guide

- GPT-5.4 is the safer default for new tool-heavy and long-running workflows

On the ChatGPT and Enterprise side, the story is messier.

OpenAI's current help-center documentation for ChatGPT Enterprise and Edu still shows GPT-5.2 in multiple places. As of March 19, 2026, the page says GPT-5.2 and GPT-5.3 Instant share usage limits, GPT-5.4 and GPT-5.2 Thinking share the 200-per-week bucket, and GPT-5.2 still appears in role-based access control and legacy access logic. The same page also says access to GPT-5.3 Instant and GPT-5.4 Thinking can be disabled by default in Enterprise workspaces.

That does not mean GPT-5.2 is the better frontier model. It means product-surface behavior still depends on plan, admin settings, and legacy routing. If you are a developer choosing the best default for new technical work, the API guidance matters more. If you are an Enterprise admin or a heavy ChatGPT user trying to explain what people actually see in the model picker, the help-center guidance matters too.

This is why the comparison feels noisier than it should. People can say they are comparing GPT-5.4 and GPT-5.2 while actually talking about different environments:

- one is choosing an API default for production routing

- one is choosing a Codex default for long-running technical work

- one is talking about ChatGPT Enterprise limits, RBAC, or legacy access

If you do not separate those surfaces, the comparison turns into a mess of half-true statements.

The practical takeaway is:

- For API and Codex decisions: trust the current model docs and latest-model guide first.

- For ChatGPT and Enterprise decisions: also check the current help-center limits and admin rules, because GPT-5.2 can still appear there in ways that do not reflect the broader API recommendation.

If part of your team is still deciding between ChatGPT subscription behavior and API usage rather than only model quality, our guide to ChatGPT Plus vs free speed and quota behavior for GPT-5 is the better companion article.

When GPT-5.4 Is Clearly the Better Choice

GPT-5.4 is the better choice when the work is broader than just "answer this prompt cheaply."

Use GPT-5.4 when you need:

- long-context repo analysis

- browser or computer-use tasks

- multi-tool agentic workflows

- better factual reliability

- document-heavy or spreadsheet-heavy professional work

- one strong default model across coding and non-coding tasks

For example, if you are building agents that inspect a codebase, search the web, use tools, read documents, patch files, and verify outputs, GPT-5.4 is the obvious winner. Its broader tool posture and stronger official benchmark profile map directly to that workload.

It is also the better default for teams that want to simplify routing. One of the biggest hidden costs in production AI systems is maintaining too many "special" default paths. GPT-5.4 lets you collapse more of that complexity into one route because it is strong across reasoning, coding, and professional knowledge work instead of being optimized mainly for one slice of that stack.

GPT-5.4 is also easier to justify for senior engineering, architecture, and operations work where tasks frequently shift between reading, planning, implementing, and checking. Those jobs are often bottlenecked by context management and tool orchestration rather than by raw token price.

So if your workload looks like "real work across a messy tool and context environment," choose GPT-5.4.

When GPT-5.2 Still Makes Sense

GPT-5.2 still makes sense in narrower situations, and pretending otherwise would make this article less useful.

Keep GPT-5.2 when:

- input cost matters more than frontier performance

- your prompt patterns already fit comfortably inside 400K context

- you already have a tuned GPT-5.2 production path and do not need a migration immediately

- you are working in ChatGPT Enterprise or other surfaces where GPT-5.2 is still part of the live access model

- you want a cheaper fallback route under a GPT-5.4-first system

This matters most for prompt-heavy, repeat-heavy, or cost-sensitive engineering flows. GPT-5.2's lower input and cached-input pricing can still add up to meaningful savings at scale. If the work is not tool-heavy and does not benefit from GPT-5.4's bigger context or broader agentic behavior, the older model can still be a sensible production lane.

GPT-5.2 can also remain useful during migration. If your team has a well-understood GPT-5.2 prompt baseline, you do not need to rip it out immediately. A better move is to change the default to GPT-5.4 for new work while keeping GPT-5.2 as a fallback or cost-optimized option.

The key is to use GPT-5.2 intentionally, not by inertia. GPT-5.2 is no longer the model you choose because you assume it is still the best OpenAI default. It is the model you keep because one of its narrower advantages still matters in your environment.
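One way to keep that choice intentional is to write the routing rule down as code. The sketch below is one possible encoding of the two lists above, not a prescribed policy: the `Workload` fields, the 80% "comfortable" margin, and the model-name strings are all assumptions made for illustration.

```python
from dataclasses import dataclass

GPT52_CONTEXT = 400_000
# Assumed headroom: treat 80% of the window as "fits comfortably".
GPT52_COMFORTABLE_INPUT = GPT52_CONTEXT * 8 // 10


@dataclass
class Workload:
    input_tokens: int
    needs_computer_use: bool = False
    tool_heavy: bool = False
    cost_sensitive: bool = False


def choose_model(w: Workload) -> str:
    """GPT-5.4 by default; GPT-5.2 only when a narrow advantage applies."""
    if w.input_tokens > GPT52_COMFORTABLE_INPUT:
        return "gpt-5.4"  # needs the larger context window
    if w.needs_computer_use or w.tool_heavy:
        return "gpt-5.4"  # agentic work favors the newer model
    if w.cost_sensitive:
        return "gpt-5.2"  # cheaper input and cached input
    return "gpt-5.4"      # default route


print(choose_model(Workload(input_tokens=50_000, cost_sensitive=True)))   # gpt-5.2
print(choose_model(Workload(input_tokens=350_000, cost_sensitive=True)))  # gpt-5.4
```

The order of the checks is the design choice: capability needs are tested before cost, so GPT-5.2 is only selected when nothing about the workload requires GPT-5.4.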

Migration Checklist: Moving From GPT-5.2 to GPT-5.4

If your team already uses GPT-5.2, the smartest migration is practical rather than ideological.

- Make GPT-5.4 the default route for new API and Codex workflows.

- Keep GPT-5.2 available for lower-cost prompt-heavy workloads or legacy flows that are already performing well.

- Re-test at least three real workloads before cutting over fully: one long-context job, one tool-heavy workflow, and one cost-sensitive prompt-heavy task.

- Add cost monitoring for sessions that exceed 272K input tokens so GPT-5.4's long-context premium does not surprise you.

- If your users work in ChatGPT Enterprise, document the difference between API guidance and workspace model availability so people do not confuse admin settings with model quality.

- Keep one fallback rule that explains when to route back to GPT-5.2 instead of assuming every problem belongs on GPT-5.4.

That approach gives you the benefit of the better model without forcing a messy all-or-nothing migration.
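For the cost-monitoring item in the checklist, a small report over your own usage logs is often enough to start. This is a sketch under stated assumptions: `SessionUsage` and `long_context_report` are hypothetical names, and the input is whatever per-session token counts your logging already records.

```python
from typing import Iterable, NamedTuple

LONG_CONTEXT_THRESHOLD = 272_000  # input tokens that trigger GPT-5.4's premium


class SessionUsage(NamedTuple):
    session_id: str
    input_tokens: int


def long_context_report(sessions: Iterable[SessionUsage]) -> dict:
    """Summarize which sessions crossed the long-context pricing threshold."""
    sessions = list(sessions)
    flagged = [s.session_id for s in sessions
               if s.input_tokens > LONG_CONTEXT_THRESHOLD]
    share = len(flagged) / len(sessions) if sessions else 0.0
    return {"total": len(sessions), "flagged": flagged, "share": share}


report = long_context_report([
    SessionUsage("a", 100_000),
    SessionUsage("b", 272_000),  # exactly at the threshold, not flagged
    SessionUsage("c", 500_000),
])
print(report["flagged"])  # ['c']
```

If the flagged share stays small, the premium is a rounding error. If it grows, that is the signal to revisit truncation, summarization, or routing for those sessions.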

FAQ

Is GPT-5.4 a full replacement for GPT-5.2?

For most new API and Codex work, yes. OpenAI's current latest-model guide says GPT-5.4 replaced GPT-5.2 in the API and acts as a drop-in replacement for most GPT-5.2 integrations. But that does not mean GPT-5.2 vanished from every product surface or stopped making sense in lower-cost workflows.

Is GPT-5.4 always worth the extra cost?

No. It is usually worth it when you benefit from stronger reasoning, computer use, broader tool support, or the larger context window. If your workload is mainly prompt-heavy and stays well below GPT-5.2's context ceiling, GPT-5.2 can still be the better economic choice.

Are GPT-5.4 and GPT-5.2 different on API rate limits?

Not in any major way on the current published model pages. The visible API rate-limit tiers are effectively the same, so this is not primarily a throughput-limit comparison.

Why do some users still see GPT-5.2 in ChatGPT Enterprise?

Because ChatGPT Enterprise is not the same thing as the API model catalog. OpenAI's current help-center documentation still shows GPT-5.2 in shared limit buckets, RBAC logic, and legacy or fallback access flows. That is a product-surface detail, not proof that GPT-5.2 is still the best frontier model.

When should I keep GPT-5.2 instead of fully switching?

Keep it when lower input cost matters, when your workflows already perform well on GPT-5.2, or when you are dealing with account surfaces where GPT-5.4 is not the practical default yet. Otherwise, GPT-5.4 should become the main route.