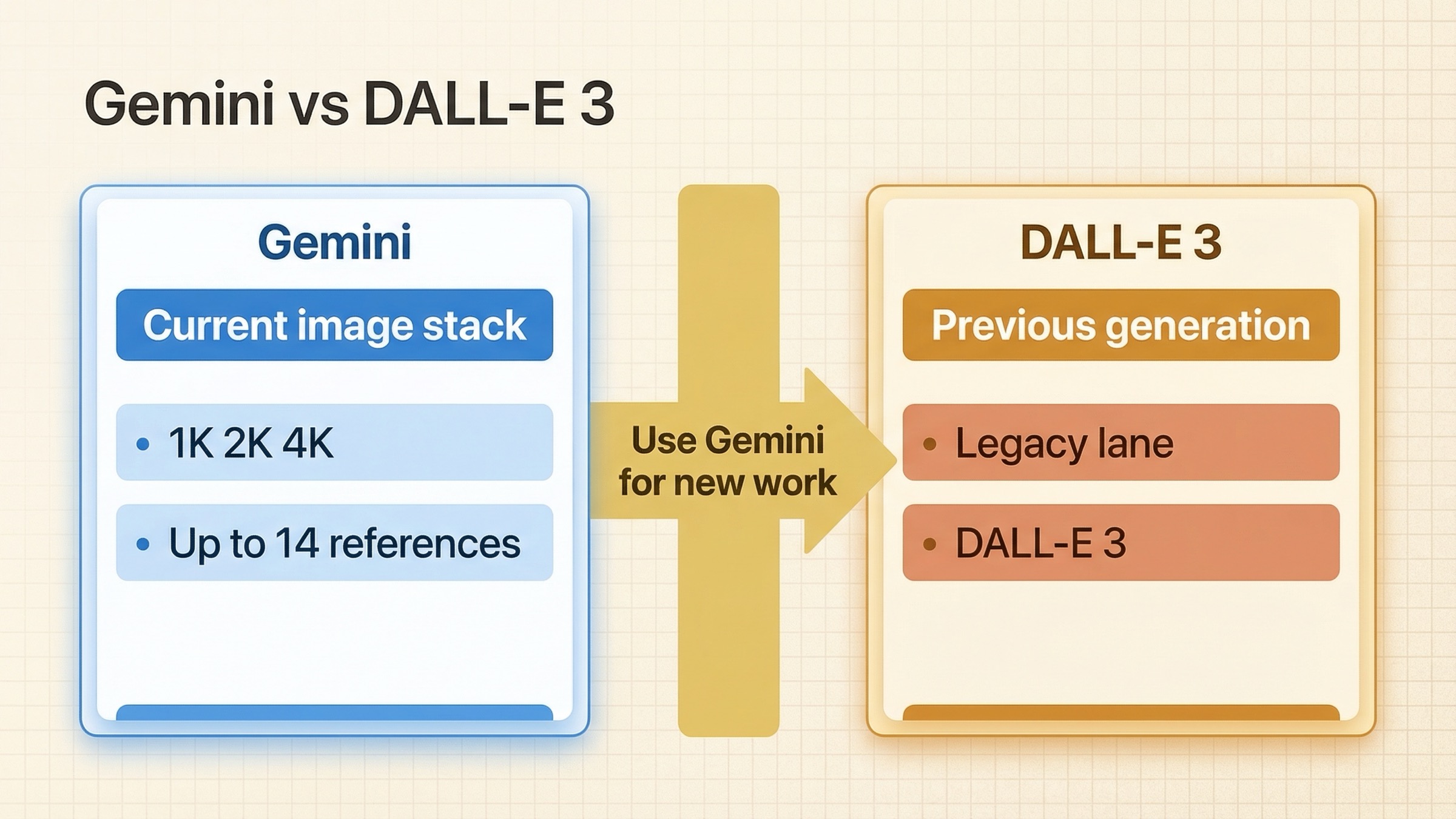

As of March 22, 2026, Gemini is the better choice for new image-generation work, while DALL·E 3 is mainly a legacy OpenAI lane worth keeping only if you already depend on it. That is the real answer behind this keyword, and most pages still blur it because they compare today's Gemini image stack against yesterday's OpenAI image default as if both sides were equally current.

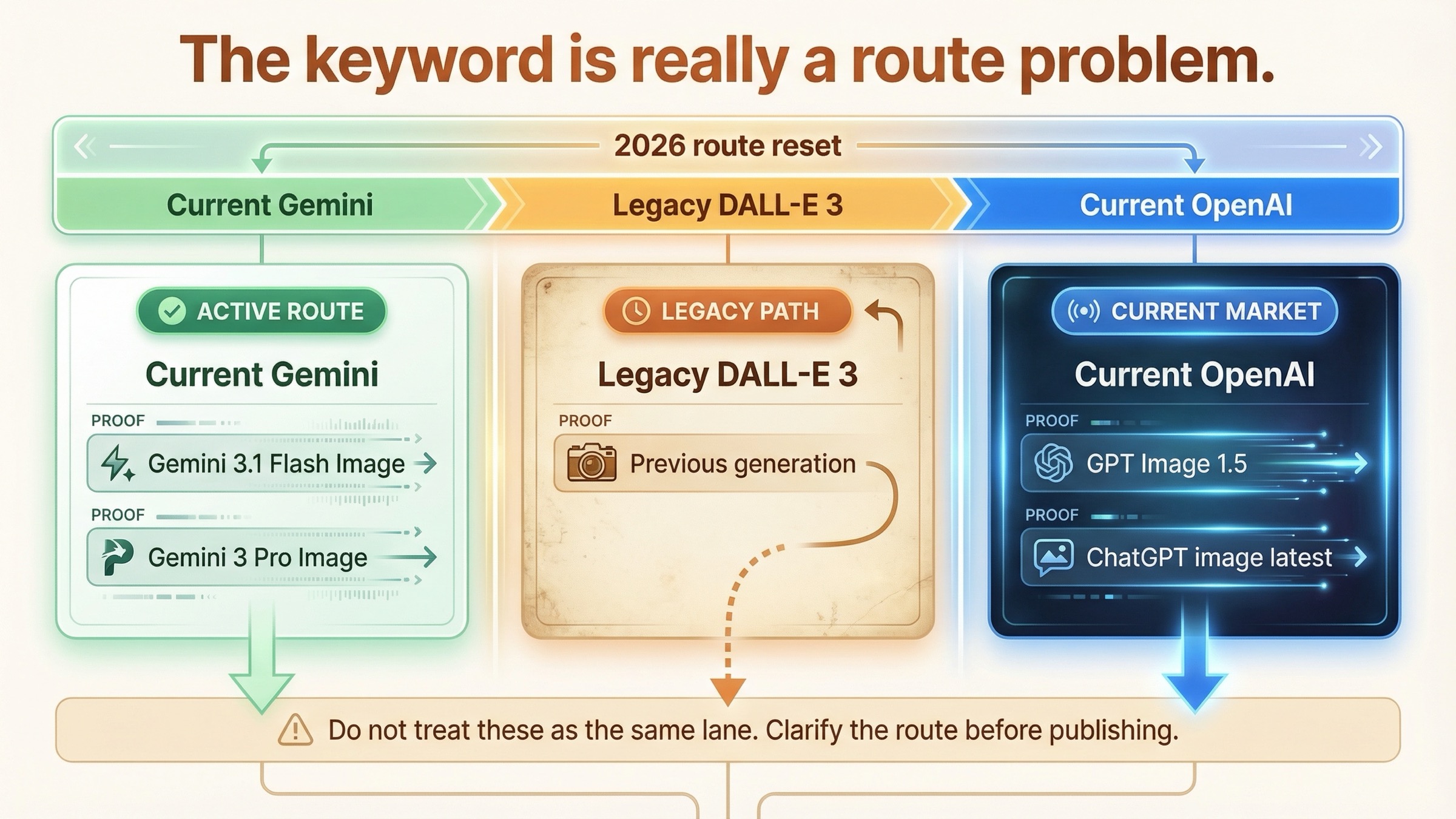

The key change is on the OpenAI side. OpenAI's current image-generation guide now calls DALL·E 3 a previous-generation model and recommends GPT Image for a better experience. At the same time, Google's live Gemini image docs center on Gemini 3.1 Flash Image Preview and Gemini 3 Pro Image Preview, with more output-size flexibility, stronger reference-image workflows, and a clearer production story.

So this is not really a clean same-generation head-to-head anymore. It is a buying decision about whether to choose current Gemini, keep legacy DALL·E 3, or skip the legacy comparison and evaluate GPT Image 1.5 instead. If you want the short answer first, start with the table below.

TL;DR

| What you actually need | Better answer now | Why |
|---|---|---|
| A new image workflow in 2026 | Gemini | Google's current image docs expose active Gemini image lanes with 1K, 2K, and 4K output, reference-image workflows, and richer editing features. |
| A legacy OpenAI image workflow already tuned for DALL·E 3 | Keep DALL·E 3 short term, but plan a migration | DALL·E 3 still exists, but OpenAI now labels it previous generation and deprecated. |
| Current OpenAI image generation | GPT Image 1.5 / chatgpt-image-latest, not DALL·E 3 | This is the current OpenAI image answer today. |
| Text-heavy graphics, infographics, or structured layouts | Gemini | Google's current Gemini image docs explicitly emphasize advanced text rendering, larger sizes, and professional asset production. |
| 4K output or more flexible size planning | Gemini | DALL·E 3's official model page still lists only 1024x1024, 1024x1536, and 1536x1024 sizes. |
| Simple compatibility with an older DALL·E 3 prompt library | DALL·E 3 for now | If the workflow is already working, migration cost may matter more than chasing the newest surface immediately. |

The clean rule is this: use Gemini for new work, keep DALL·E 3 only for legacy continuity, and compare Gemini to GPT Image 1.5 if you want the strongest current OpenAI alternative.

Why This Keyword Is Harder Than It Looks in 2026

This keyword still gets searched because DALL·E 3 became the default mental model for OpenAI image generation in 2023 and 2024. A lot of comparison pages, prompt galleries, and example threads were built around it, so people still type "Gemini vs DALL·E 3" even after the market moved on.

But the official product surfaces no longer line up cleanly with that old framing. On Google's side, the live Gemini image-generation documentation now describes a current Gemini stack built around Gemini 3.1 Flash Image Preview and Gemini 3 Pro Image Preview. On OpenAI's side, the current image-generation guide says DALL·E 3 is a previous-generation model and that developers should use GPT Image for a better experience. OpenAI's December 16, 2025 product post also says the new ChatGPT image model is rolling out in ChatGPT and is available in the API as GPT Image 1.5.

That means a lot of page-one content is outdated in a very specific way. It is not always factually useless, but it is framed around a market state that no longer exists. Some pages are really comparing Imagen 3 in Gemini against DALL·E 3. Some are comparing older ChatGPT image behavior against Google's current image stack. Some are comparing sample outputs with no attention to what the vendors themselves now say is current.

The better question today is not "Which brand won the old rivalry?" It is "What should I choose now if I need an image model that I can actually build on?" Once you ask it that way, Gemini has a much stronger case.

Where Gemini Is Better Than DALL·E 3 Right Now

Gemini's biggest advantage in 2026 is not a prettier demo. It is that Google's current image stack looks like an active product surface with multiple useful lanes, rather than a single legacy model trading on old name recognition.

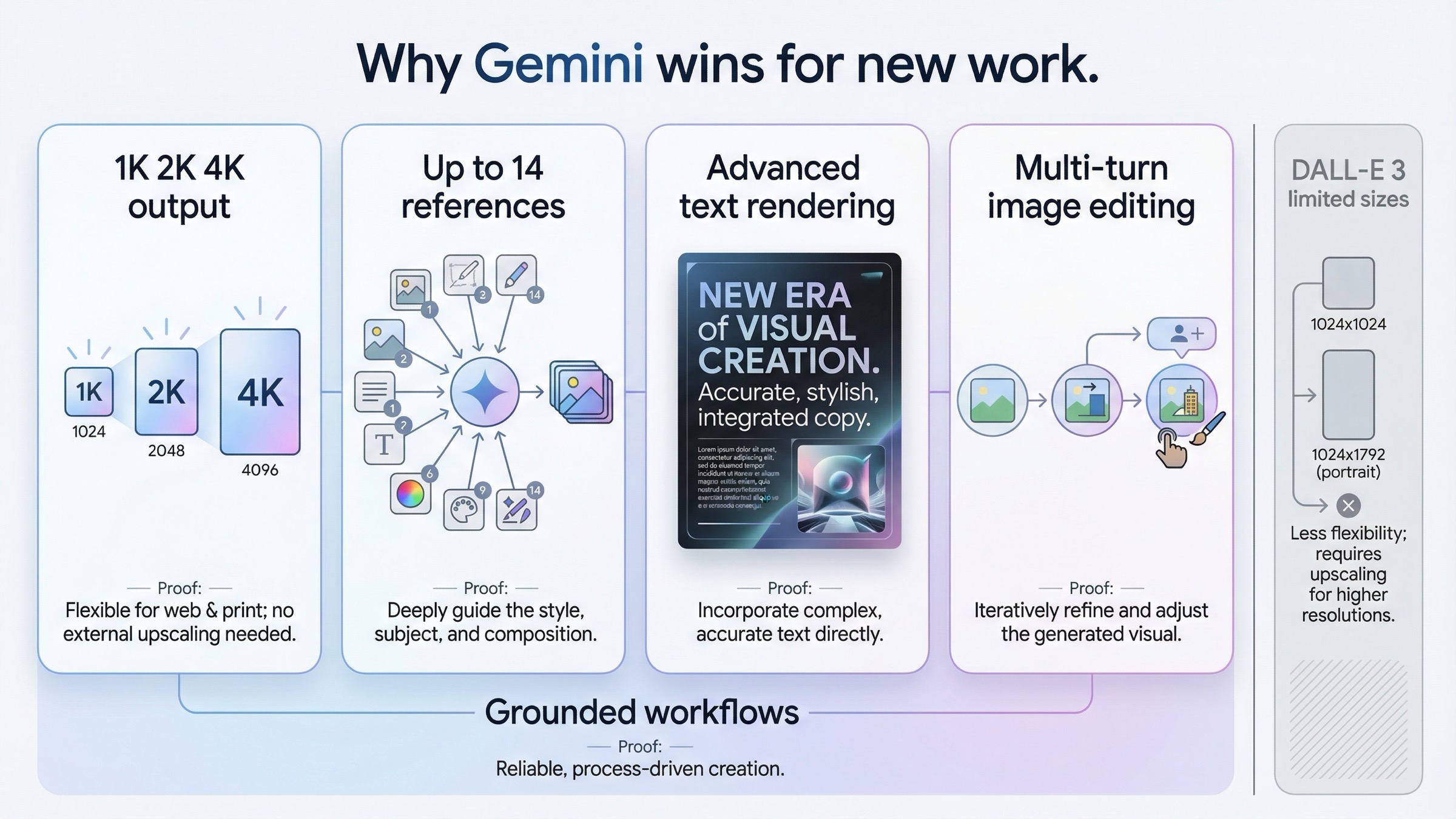

The first practical difference is size flexibility. Google's current image docs say Gemini 3 image models support 1K, 2K, and 4K output, and that Gemini 3.1 Flash Image adds 512 as well. DALL·E 3's current model page still lists only 1024x1024, 1024x1536, and 1536x1024. If your work includes print-like assets, web hero images, dense infographics, or anything where final delivery size matters, that difference is not cosmetic. It changes what the model can reasonably be asked to do without an extra scaling step.
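To make the "extra scaling step" concrete, here is a minimal sketch that checks whether a delivery size fits inside the DALL·E 3 sizes quoted above. The function names and the example targets (1920x1080, 3840x2160) are illustrative assumptions, not part of any vendor API, and the size list is simply the one this article cites from the model page.

```python
# Sizes this article quotes from the DALL·E 3 model page, as (width, height).
# Treat them as a snapshot and re-check the live page before relying on them.
DALLE3_LISTED_SIZES = [(1024, 1024), (1024, 1536), (1536, 1024)]


def needs_upscaling(target_width, target_height, listed_sizes=DALLE3_LISTED_SIZES):
    """Return True if no listed size covers the target in both dimensions."""
    return not any(
        width >= target_width and height >= target_height
        for width, height in listed_sizes
    )


def upscale_factor(target_width, target_height, listed_sizes=DALLE3_LISTED_SIZES):
    """Smallest linear scale factor needed across the listed sizes (1.0 if none)."""
    factors = [
        max(target_width / width, target_height / height, 1.0)
        for width, height in listed_sizes
    ]
    return min(factors)


if __name__ == "__main__":
    print(needs_upscaling(1024, 1024))   # False: a listed size covers it
    print(needs_upscaling(1920, 1080))   # True: wider than any listed size
    print(upscale_factor(1920, 1080))    # 1.25, starting from 1536x1024
    print(upscale_factor(3840, 2160))    # 2.5, starting from 1536x1024
```

Any factor above 1.0 means an upscaler, or a model with a larger native output, has to enter the pipeline.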

The second difference is reference-heavy workflow support. Google's image-generation guide says Gemini 3 image models can mix up to 14 reference images, with specific limits for object fidelity and character consistency depending on the model. That is a very different workflow posture from the older DALL·E 3 story. It means Gemini is better suited to brand systems, asset families, product variations, and other repeatable image-production jobs where consistency matters more than a single pretty output.

The third difference is current editing and production framing. Google's live docs and Gemini app updates talk about multi-turn image editing, likeness-preserving edits, blending photos, grounded generation, and structured visual tasks such as infographics and marketing assets. DALL·E 3 still matters historically, but the current OpenAI docs no longer present it as the main path for that kind of work. When a vendor's own current documentation stops treating a model as the forward path, that matters.

Gemini also has the cleaner current story for text-heavy visual work. Google explicitly calls out advanced text rendering and professional asset production in the current Gemini 3 image documentation. That matters because many real image workflows are not fantasy art contests. They are ad creatives, menus, product cards, hero images, diagrams, and social visuals that need labels, numbers, or structured copy. A model that is documented and positioned around that kind of work is easier to trust for it.

This does not automatically mean Gemini wins every aesthetic preference. Official docs cannot tell you which model matches your personal taste. But they can tell you which platform is actively expanding the workflow surface, and right now that answer is Gemini rather than DALL·E 3.

Where DALL·E 3 Still Has a Case

DALL·E 3 still has a case when the decision is really about continuity, not innovation.

If you already have a DALL·E 3 prompt library, internal examples, QA expectations, or downstream tooling tuned to its outputs, there is a real cost to migration. That cost is not just money. It is prompt retuning, output comparison, brand review, edge-case testing, and developer time. In that situation, "keep DALL·E 3 for now" can be the right short-term answer even if it is not the best new-build answer.

It can also make sense when the workflow is deliberately narrow and stable. DALL·E 3's official page still provides a simple size-and-price picture. For a small legacy tool that only needs one-shot generations in those supported aspect ratios, the path can remain serviceable for a while. That is especially true if the team does not need 2K or 4K output, reference-image composition, or a broader current editing workflow.

There is also a softer reason the model survives in search demand: some users simply prefer the outputs or the prompt behavior they already know. That is not an official capability claim. It is an operational truth about creative systems. If people have built an intuition around DALL·E 3 over many months, replacing it is not free.

Still, the honest way to frame that case is limited. DALL·E 3 is not the stronger current strategic choice. It is the model you keep when the migration cost is higher than the short-term benefit of switching today.

What the Current OpenAI Answer Actually Is

This is the section most "Gemini vs DALL·E 3" pages still skip, and it matters because it changes what the reader should do next.

If you want current OpenAI image generation, you usually should not stop at DALL·E 3. OpenAI's current image-generation guide recommends GPT Image for a better experience, and OpenAI's December 16, 2025 launch post says the new ChatGPT image model is available in the API as GPT Image 1.5. OpenAI's chatgpt-image-latest model page also says that alias points to the image model currently used in ChatGPT.

So if your real buyer question is "Gemini or OpenAI today?" the sharper comparison is usually:

- Gemini vs GPT Image 1.5

- or Gemini vs chatgpt-image-latest

not Gemini versus DALL·E 3 alone.

That does not make this exact keyword useless. It makes it transitional. A lot of users arrive through DALL·E 3 because that is the legacy brand name they remember, then discover they actually need a current comparison instead. That is why this page should not end with a fake "overall winner" and leave the reader there. It should tell them which comparison they actually need.

If you want that broader current comparison next, our guide to Gemini image vs ChatGPT in 2026 is the better follow-up. If you are comparing API budgeting more than product feel, Gemini image generation API pricing and OpenAI image generation API pricing are the cleaner next reads.

Pricing, Sizes, and Workflow Fit

Pricing is where this keyword becomes misleading fast, because DALL·E 3 and Gemini are not presented in the same format.

OpenAI's DALL·E 3 model page still lists straightforward per-image prices: $0.04 for 1024x1024 and $0.08 for 1024x1536 or 1536x1024 on the standard path. That makes DALL·E 3 look simple, and in a narrow sense it is. But it is also narrow because those are the only official size options listed on the current page.

Google's Gemini image pricing is broader and more obviously tied to workflow choice. As checked on March 22, 2026, Google's pricing page lists Gemini 3.1 Flash Image Preview at $0.067 for 1K, $0.101 for 2K, and $0.151 for 4K on the standard lane, with cheaper batch pricing for non-interactive jobs. Gemini 3 Pro Image Preview is the premium lane at $0.134 for 1K or 2K and $0.24 for 4K.

That means DALL·E 3 can still look cheaper at its limited size envelope. But cost alone is not the right reading. The better reading is:

- DALL·E 3 is a simpler legacy lane with fewer current workflow options.

- Gemini costs more when you move into larger sizes and richer workflows because it is offering more current flexibility.

If you are choosing a new production path, that tradeoff often favors Gemini. If you are trying to keep a stable old integration alive with minimal changes, DALL·E 3's narrower lane can still be easier to leave alone for a while.
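For a rough budget comparison, the per-image prices quoted in this section can be dropped into a small lookup. This is only a sketch: the lane labels are made-up keys for this example (not API model identifiers), the prices are the ones cited above as of March 22, 2026, and batch pricing is left out because this article does not quote figures for it.

```python
# Per-image USD prices as quoted in this article (checked March 22, 2026).
# Lane names are hypothetical labels for this sketch, not API identifiers.
PRICES_USD = {
    ("dalle3-standard", "1024x1024"): 0.04,
    ("dalle3-standard", "1024x1536"): 0.08,
    ("dalle3-standard", "1536x1024"): 0.08,
    ("gemini-flash-image", "1K"): 0.067,
    ("gemini-flash-image", "2K"): 0.101,
    ("gemini-flash-image", "4K"): 0.151,
    ("gemini-pro-image", "1K"): 0.134,
    ("gemini-pro-image", "2K"): 0.134,
    ("gemini-pro-image", "4K"): 0.24,
}


def monthly_cost(lane, size, images_per_month):
    """Estimated monthly spend in USD for one lane and size, rounded to cents."""
    try:
        unit_price = PRICES_USD[(lane, size)]
    except KeyError:
        raise ValueError(f"No quoted price for {lane!r} at {size!r}") from None
    return round(unit_price * images_per_month, 2)


if __name__ == "__main__":
    volume = 1000  # illustrative volume, not a recommendation
    for lane, size in PRICES_USD:
        print(f"{lane:20s} {size:10s} ${monthly_cost(lane, size, volume):.2f}")
```

A lookup that raises on unknown combinations is deliberate here: asking for 4K on the DALL·E 3 lane fails loudly instead of silently returning a number, which mirrors the size gap described above.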

There is one more important pricing caveat: if you are price-sensitive on the OpenAI side, DALL·E 3 is not even the whole story anymore. The current OpenAI image stack includes newer GPT Image lanes with a different pricing model and a stronger current feature story. That is another reason this keyword should end in a routing decision, not a simplistic "Gemini costs more" or "DALL·E 3 costs less" verdict.

Best Choice by Use Case

Once you separate current work from legacy work, the routing gets much cleaner.

| Your real situation | Better default | Why |
|---|---|---|
| Starting a new image-generation workflow in 2026 | Gemini | The Gemini image stack is newer, more flexible on size, and better documented for production-style image work. |
| Keeping an older OpenAI image workflow alive with minimal disruption | DALL·E 3 for now | The migration cost may be higher than the short-term gain if the workflow is already stable and narrow. |
| Wanting the strongest current OpenAI answer | GPT Image 1.5 / chatgpt-image-latest | This is the actual current OpenAI comparison branch now. |
| Making text-heavy graphics, infographics, or structured visuals | Gemini | Google's current image docs and model descriptions lean directly into this type of work. |
| Needing 2K or 4K output in the main workflow | Gemini | DALL·E 3's current model page does not offer those larger output options. |
| Working from many reference images or consistency-sensitive asset sets | Gemini | Up to 14 reference images is a meaningful workflow advantage. |

The main thing to avoid is pretending that every row above is part of the same argument. Some rows are about new work. Some are about legacy continuity. Some are about switching to a different OpenAI lane entirely. If you keep those separate, the decision stops feeling messy.
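The routing in the table above can be sketched as a small decision function. Everything here is an assumption made for illustration: the field names, the `better_default` helper, and especially the precedence order (an explicit wish for a current OpenAI model is checked first, then capability needs, then legacy continuity). The table itself does not rank its rows, so adjust the order to your own priorities.

```python
from dataclasses import dataclass


@dataclass
class Needs:
    """Hypothetical checklist mirroring the rows of the use-case table."""
    new_workflow: bool = False
    stable_legacy_dalle3: bool = False
    wants_current_openai: bool = False
    text_heavy: bool = False
    needs_2k_or_4k: bool = False
    many_reference_images: bool = False


def better_default(needs):
    """Return the table's 'better default' for a given situation."""
    # Assumed precedence: a stated preference for current OpenAI wins first.
    if needs.wants_current_openai:
        return "GPT Image 1.5 / chatgpt-image-latest"
    # Capability rows where the table points to Gemini.
    if needs.text_heavy or needs.needs_2k_or_4k or needs.many_reference_images:
        return "Gemini"
    # Legacy continuity only applies when this is not a fresh build.
    if needs.stable_legacy_dalle3 and not needs.new_workflow:
        return "DALL·E 3 for now"
    # New work, or nothing else specified, falls back to the new-work default.
    return "Gemini"


if __name__ == "__main__":
    print(better_default(Needs(new_workflow=True)))
    print(better_default(Needs(stable_legacy_dalle3=True)))
    print(better_default(Needs(stable_legacy_dalle3=True, needs_2k_or_4k=True)))
```

The third call shows why the rows should stay separate: a stable legacy workflow that suddenly needs larger output is no longer a pure continuity case.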

Should You Migrate If You Still Use DALL·E 3?

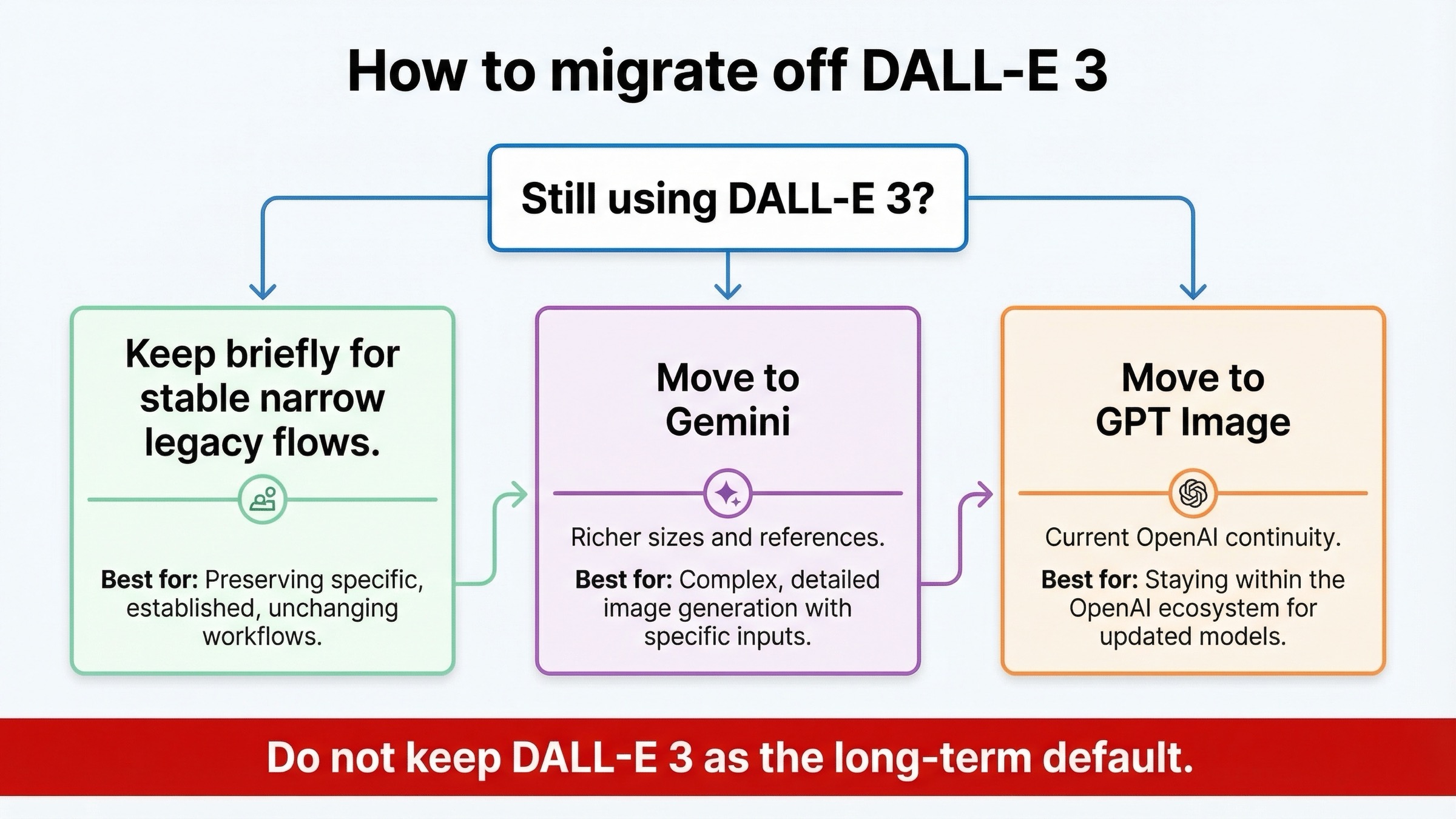

If you are still using DALL·E 3 today, the best move depends on where the rest of your system lives.

If your workflow is mostly OpenAI-native and what you really want is a current OpenAI image path, start by testing GPT Image 1.5 or chatgpt-image-latest before you jump ecosystems. That will tell you whether the main issue is simply that you are on an old OpenAI lane.

If your workflow needs larger output sizes, richer editing, reference-image composition, or more production-oriented visual control, Gemini deserves the first serious test. This is where the current Gemini story is strongest, and where staying on DALL·E 3 usually starts to feel like inertia rather than strategy.

If your workflow is stable, narrow, and already shipping, you do not need a panic migration. But you should stop treating DALL·E 3 as your long-term default. OpenAI's own docs already frame it as a previous-generation, deprecated model. That is enough reason to put a migration review on the roadmap even if you do not move this week.

The most practical migration sequence is:

- Identify whether the real requirement is legacy compatibility or current capability.

- Test your top 10 prompts or edits on the target model, not just one hero example.

- Decide whether the better target is Gemini or current OpenAI image models.

- Keep DALL·E 3 only where retuning cost still outweighs the benefit of switching.

That is a much safer plan than either blind loyalty or blind trend-chasing.
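The "test your top 10 prompts" step can be sketched as a tiny harness. This is an assumption-heavy outline rather than a real integration: `generators` maps a label to any callable you write around the SDK of your choice, `accept` stands in for whatever review you use (a human checklist, a brand rule, an automated check), and the stub functions below exist only so the example runs without network access.

```python
def run_migration_trial(prompts, generators, accept):
    """Run every prompt through every candidate and return pass rates by name.

    prompts:    list of prompt strings (for example, your top 10).
    generators: dict of name -> callable(prompt) returning an output object.
    accept:     callable(prompt, output) returning True if the output passes review.
    """
    pass_rates = {}
    for name, generate in generators.items():
        passed = 0
        for prompt in prompts:
            try:
                output = generate(prompt)
            except Exception:
                # A failed call counts as a failed prompt for that candidate.
                continue
            if accept(prompt, output):
                passed += 1
        pass_rates[name] = passed / len(prompts) if prompts else 0.0
    return pass_rates


if __name__ == "__main__":
    # Stub generators stand in for real SDK wrappers (hypothetical behavior).
    def legacy_stub(prompt):
        return {"prompt": prompt, "width": 1024}

    def candidate_stub(prompt):
        if "fail" in prompt:
            raise RuntimeError("simulated API error")
        return {"prompt": prompt, "width": 2048}

    def wide_enough(prompt, output):
        return output["width"] >= 2048

    prompts = ["product card", "menu layout", "fail case", "hero banner"]
    print(run_migration_trial(
        prompts,
        {"legacy": legacy_stub, "candidate": candidate_stub},
        wide_enough,
    ))
```

Comparing pass rates across the whole prompt set, instead of eyeballing one hero image, is the point of the second step in the sequence above.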

If you want the sharper current OpenAI head-to-head after this, our Nano Banana 2 vs GPT Image 1.5 and Nano Banana Pro vs GPT Image guides are the better next comparisons.

FAQ

Is Gemini better than DALL·E 3 in 2026?

Yes for new work. Gemini is the better default because its current image stack is newer, more flexible, and more clearly positioned for production workflows. DALL·E 3 still makes sense mainly for legacy continuity.

Is DALL·E 3 deprecated?

OpenAI's current DALL·E 3 model page labels the alias as deprecated and describes DALL·E 3 as a previous-generation image generation model.

Does ChatGPT still use DALL·E 3?

The current OpenAI model page says chatgpt-image-latest is the image model used in ChatGPT, and OpenAI's December 16, 2025 product post says the new ChatGPT image model is available in the API as GPT Image 1.5.

When should I still use DALL·E 3?

Use it when you already depend on a stable DALL·E 3 workflow and the migration cost is higher than the short-term benefit of switching. It is not the best default for a fresh build.

What should I compare Gemini against if I want the current OpenAI answer?

Compare Gemini against GPT Image 1.5 or chatgpt-image-latest, not just against DALL·E 3.

Which one is better for text-heavy graphics?

Gemini. Google's current image docs explicitly emphasize advanced text rendering, structured assets, and professional production workflows.

Bottom Line

The clean 2026 answer is this: Gemini is the better choice for new image-generation work, while DALL·E 3 is now mostly a legacy OpenAI lane.

If you already run DALL·E 3 and it is doing the job, you do not need to rip it out overnight. But if you are choosing a model today, the market has moved. Gemini's current image stack is the stronger new-work default, and the real current OpenAI alternative now lives in GPT Image 1.5 and chatgpt-image-latest, not in DALL·E 3 alone.