Seedance 2.0 API has not been officially released as of March 31, 2026. Despite what you may have read on various blogs and third-party platforms, ByteDance delayed the global API launch due to ongoing copyright compliance issues with Hollywood studios, and no legitimate API access is currently available. Many websites claiming to offer Seedance 2.0 API access are either running unofficial reverse-engineered endpoints with no reliability guarantees or simply listing placeholder pricing for a product that does not exist yet. This guide cuts through the noise to give you the real release status, what we know about expected pricing, how the model compares to alternatives you can actually use today, and how to position yourself for day-one access when the API does launch through official partners like laozhang.ai.

TL;DR

- Seedance 2.0 is ByteDance's flagship video generation model — cinematic quality, native audio, multimodal input, up to 2K resolution at 24fps

- The API has NOT launched yet as of March 2026 — the global rollout via BytePlus was delayed due to copyright issues

- Most third-party sites claiming API access are unreliable — be cautious with your money

- Expected pricing: ~$0.05/video through official distribution partners such as laozhang.ai, which will be among the first to go live once the API launches

- The consumer platform (CapCut Dreamina) is available now for testing the model's capabilities before the API launches

- While waiting, Sora 2 ($0.15/video) and Veo 3.1 ($0.15/video) are available today through laozhang.ai

What Is Seedance 2.0 and Why It Matters for Developers

ByteDance released Seedance 2.0 in February 2026 as a unified multimodal audio-video generation model, and it immediately changed the economics of AI video creation. Unlike previous video generation models that treated text, image, video, and audio inputs as separate pipelines, Seedance 2.0 processes all four modalities through a single architecture called the Dual-Branch Diffusion Transformer. This architectural decision is not just a technical footnote: it directly affects both output quality and generation cost, because a single unified pass is inherently more efficient than chaining multiple specialized models together.

The practical impact for developers is significant. Where Sora 2 excels at physically accurate motion simulation and Veo 3.1 pushes resolution boundaries up to 4K, Seedance 2.0 carves out its own niche by accepting up to 12 reference files simultaneously — character images, environment photos, motion templates, and audio tracks all in one request. This multimodal reference system means you can maintain visual consistency across a series of generated videos without the complex prompt engineering workarounds that other models require. For production teams creating marketing content, social media clips, or short-form video at scale, this consistency is worth more than raw resolution numbers.

The model generates videos between 4 and 15 seconds per request at resolutions up to 2K (2048x1080) with native 24fps playback. Its most distinctive feature is native audio generation: Seedance 2.0 produces synchronized sound effects, ambient audio, and even dialogue in over 8 languages as part of the same generation pass. Most competing models either generate silent video or require a separate audio synthesis step, adding both cost and complexity to the pipeline; among the models compared later in this guide, Veo 3.1 is the main exception. According to ByteDance's internal SeedVideoBench-2.0 evaluation, the model leads across instruction adherence, motion quality, visual aesthetics, and audio rendering, and Artificial Analysis's community preference voting also ranked it first against Veo 3, Sora 2, Runway Gen-4, and Kling 2.0 (Artificial Analysis, verified March 2026).

The generation process follows an asynchronous job-based pattern: you submit a request, receive a job ID, poll for completion (typically 30-120 seconds), and then download the resulting video. This architecture is standard for compute-intensive AI workloads but means your application needs proper async handling, retry logic, and webhook support for production deployments. The payoff is access to one of the most capable video generation models available at a fraction of the cost of its nearest competitors.

Seedance 2.0 API Release Status — What's Actually Available (March 2026)

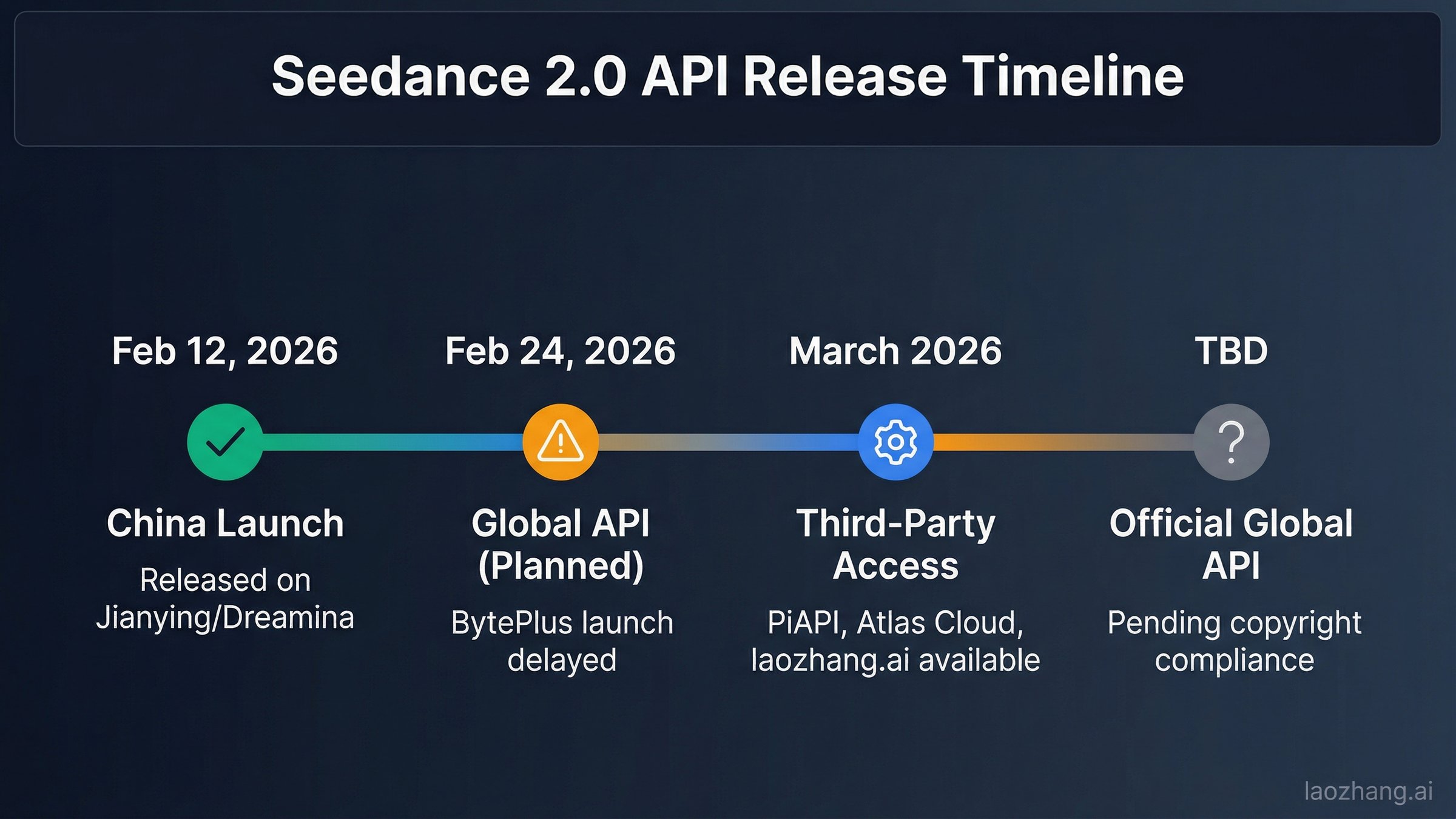

Understanding the current availability of Seedance 2.0 requires being honest about what is real and what is marketing hype, because the situation is genuinely confusing and many articles are misleading readers. ByteDance originally planned a coordinated global launch across consumer platforms and developer APIs in late February 2026, but legal complications have derailed the API rollout entirely. Here is what is actually true as of March 31, 2026.

The consumer platform launched on schedule — this is the only legitimate access today. Seedance 2.0 became available through Jianying (ByteDance's Chinese video editor, at jimeng.jianying.com) and CapCut Dreamina (the international equivalent, at dreamina.capcut.com) on February 12, 2026. Within these platforms, the model appears as "Seedance 40 Pro" and is accessible through a subscription starting at approximately 69 RMB per month (about $9.60 USD). This consumer access works globally and does not require a Chinese phone number, making it the only reliable way to use the model right now. If you need to evaluate Seedance 2.0's capabilities before the API launches, this is where you should start.

The official developer API is delayed with no confirmed date. BytePlus, ByteDance's international cloud platform, was scheduled to launch the Seedance 2.0 API on February 24, 2026. That launch was postponed indefinitely due to copyright compliance issues — specifically, pushback from Hollywood studios regarding the training data used for the model. As of March 31, 2026, BytePlus has not announced a revised launch date. The domestic equivalent through Volcengine (Volcano Ark) is similarly not available to individual developers. This means there is no official API endpoint that anyone can use, regardless of what third-party websites claim.

Most third-party "API access" claims are unreliable — be cautious. Since the official API delay, numerous websites have popped up claiming to offer Seedance 2.0 API access. The reality is that most of these services are running reverse-engineered consumer platform endpoints, which means they have no official support, no SLA guarantees, and can break at any time when ByteDance updates their consumer platform. Some are simply listing placeholder pricing pages for a product they cannot actually deliver. Before paying any third-party provider for Seedance 2.0 API access, ask yourself: if ByteDance has not released the API, where exactly is this provider getting their access? The answer in most cases is either unstable reverse-engineering or outright misrepresentation.

Official distribution partners will have day-one access when the API launches. laozhang.ai is an official distribution partner for ByteDance's video generation APIs and will be among the first platforms to offer legitimate Seedance 2.0 API access when BytePlus lifts the delay. Expected pricing is approximately $0.05 per video — significantly cheaper than comparable models. In the meantime, laozhang.ai already offers proven API access to other leading video generation models including Sora 2 ($0.15/video) and Veo 3.1 ($0.15/video), so you can build your video generation pipeline today and switch to Seedance 2.0 with minimal code changes once it becomes available. Register at laozhang.ai to be notified when Seedance 2.0 API goes live.

Seedance 2.0 API Expected Pricing: What We Know So Far

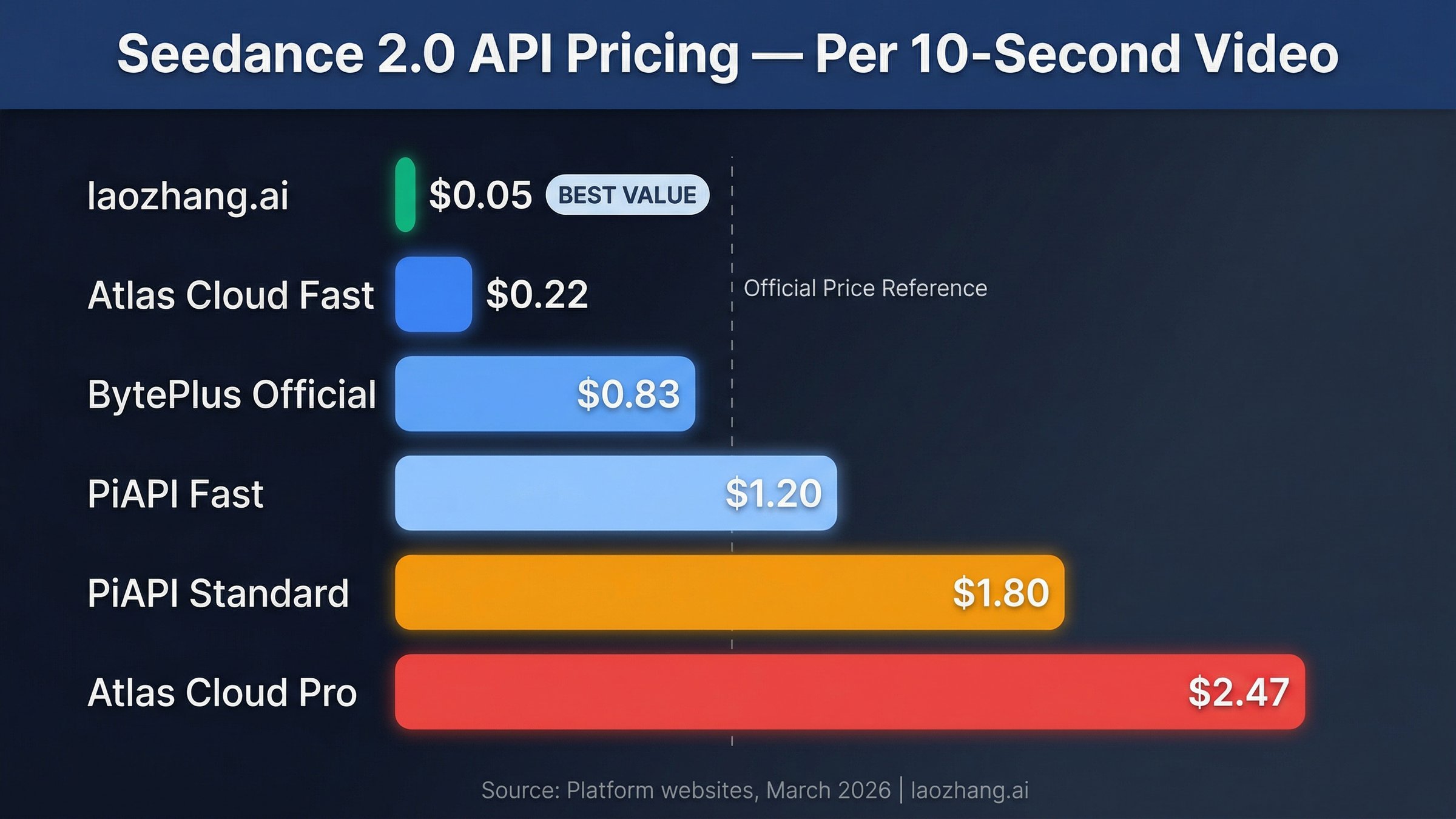

Since the official Seedance 2.0 API has not launched yet, all pricing information should be treated as preliminary estimates rather than confirmed rates. That said, several data points give us a reasonable picture of what to expect. ByteDance's own documentation and partner communications suggest pricing tiers based on resolution and duration, while the consumer platform Dreamina provides a baseline for what ByteDance considers fair market pricing.

| Source | Expected Pricing | Basis | Reliability |
|---|---|---|---|
| laozhang.ai (official partner) | ~$0.05/video | Partner agreement | High (official distribution partner) |
| BytePlus documentation (leaked) | $0.10-$0.80/min | Pre-launch docs | Medium (may change before launch) |
| Dreamina consumer platform | ~$9.60/month subscription | Live pricing | High (currently available) |
| Various third-party claims | $0.02-$0.25/s | Unverified listings | Low (most not actually delivering) |

Why the expected pricing is so competitive. ByteDance has consistently signaled that Seedance 2.0 will be positioned as the most cost-effective premium video generation model on the market. Their Dual-Branch Diffusion Transformer architecture processes video and audio in a single unified pass, which is inherently more compute-efficient than models that require separate generation steps. This architectural advantage translates directly into lower per-video costs. At an expected $0.05 per video through official partners like laozhang.ai, Seedance 2.0 would be roughly 3x cheaper than Sora 2 and Veo 3.1 at equivalent quality levels.

For production cost planning, here is what the expected pricing means at scale. At the projected $0.05 per video through laozhang.ai, a small team generating 100 videos per month for social media content would spend just $5 per month. At enterprise scale (1,000 videos per month), the cost would be approximately $50, about the price of five Dreamina consumer subscriptions. For teams already using Sora 2 at $0.15/video, switching to Seedance 2.0 when it launches would reduce video generation costs by roughly 67% ($0.10 saved on every $0.15 video). For deeper analysis of Seedance API pricing trends, see our guide on cheapest Seedance API providers.
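The figures above are simple multiplication, which makes them easy to rerun for your own volumes. Below is a minimal sketch, assuming the flat per-video prices quoted in this guide. The Seedance 2.0 price is an expected rate, and `seedance-2` is the model name this guide anticipates rather than a confirmed identifier.

```python
# Flat per-video prices quoted in this guide (USD). The seedance-2 entry is an
# expected launch price under an assumed model name, not a confirmed rate.
PRICE_PER_VIDEO = {
    "seedance-2": 0.05,
    "sora-2": 0.15,
    "veo-3.1": 0.15,
}


def monthly_cost(model: str, videos_per_month: int) -> float:
    """Flat-rate API cost for one month, rounded to cents."""
    return round(PRICE_PER_VIDEO[model] * videos_per_month, 2)


def savings_pct(current: str, candidate: str) -> float:
    """Percentage saved per video by moving from `current` to `candidate`."""
    old, new = PRICE_PER_VIDEO[current], PRICE_PER_VIDEO[candidate]
    return round((old - new) / old * 100, 1)


for volume in (100, 1_000):
    print(f"{volume} videos/month on seedance-2: ${monthly_cost('seedance-2', volume):.2f}")
print(f"sora-2 -> seedance-2 saves {savings_pct('sora-2', 'seedance-2')}% per video")
```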

Be extremely skeptical of "live" pricing from unverified third-party providers. If a website is advertising specific per-second rates for Seedance 2.0 API access right now (March 2026), ask where they are getting the model from. ByteDance has not released the API to any third-party provider; even official distribution partners are still waiting for the launch. Any service offering Seedance 2.0 API access today is likely running reverse-engineered consumer platform endpoints that could break at any time, produce inconsistent results, or simply not work at all. Protect your project budget by waiting for legitimate access through official channels.

How to Access Seedance 2.0 API Without a Chinese Account

One of the most common questions from international developers is whether they need a Chinese phone number, TG account, or Volcengine registration to use Seedance 2.0. The short answer is no — multiple pathways exist for accessing the model entirely through international platforms with standard USD payment methods.

Option 1 (available now): CapCut Dreamina consumer platform. If you need to evaluate the model's capabilities right now, the Dreamina platform at dreamina.capcut.com provides a browser-based interface that works globally. You can sign up with a Google or email account and access Seedance 2.0 (listed as "Seedance 40 Pro" in the model selector) through a subscription starting at approximately $9.60 per month. This option does not provide programmatic API access, but it is the only legitimate way to use Seedance 2.0 today. Use it to prototype prompts, test the model's multimodal reference system, and understand what it can produce before the API launches.

Option 2 (coming soon): Official distribution partners like laozhang.ai. laozhang.ai is an official distribution partner that will provide Seedance 2.0 API access through OpenAI-compatible REST endpoints as soon as ByteDance lifts the launch delay. Registration is available now: you can create an account, get an API key, and start using other video generation models (Sora 2 at $0.15/video, Veo 3.1 at $0.15/video) immediately. When Seedance 2.0 launches, you will be able to switch models by changing a single line of code. The documentation at docs.laozhang.ai provides detailed endpoint specifications and authentication guides that will apply to Seedance 2.0 as well.

Option 3 (future): BytePlus official enterprise access. BytePlus, ByteDance's international cloud service, will eventually provide direct API access with USD billing, international data compliance, and enterprise SLA guarantees. When it does launch, it will likely be the most reliable option for large enterprise customers who need contractual guarantees and dedicated support. However, based on the current delay, individual developers and small teams should plan around official distribution partners as their primary access channel.

Prepare your codebase now so you are ready on day one. The smartest move while waiting for the Seedance 2.0 API is to build your video generation pipeline using an available model through the same provider that will offer Seedance 2.0. By setting up your integration with laozhang.ai now using Sora 2 or Veo 3.1, you get a working production pipeline today and a near-instant upgrade path to Seedance 2.0 when it launches — just change the model name in your API call from sora-2 to seedance-2, and your pipeline switches to the cheaper, more capable model without any other code changes. This approach gives you immediate value instead of waiting idle, and positions you for the fastest possible transition.
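To make the later switch a configuration change rather than a code change, you can read the model name from an environment variable. This is a minimal sketch: the `VIDEO_MODEL` variable name is hypothetical, and `seedance-2` is the identifier this guide anticipates, not one ByteDance has confirmed.

```python
import os

# Model that is live today. Deploy with VIDEO_MODEL=seedance-2 once that
# identifier exists. VIDEO_MODEL is a hypothetical name chosen for this sketch.
DEFAULT_MODEL = "sora-2"


def build_video_request(prompt: str, duration: int = 10) -> dict:
    """Build the JSON body for a video job, taking the model from the environment."""
    return {
        "model": os.environ.get("VIDEO_MODEL", DEFAULT_MODEL),
        "prompt": prompt,
        "duration": duration,
    }
```

With this in place, the upgrade becomes a deployment setting, and the request code stays untouched.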

Quick Start: Build Your Pipeline Now with Sora 2, Upgrade to Seedance 2.0 Later

Since the Seedance 2.0 API is not available yet, the smartest approach is to build your video generation pipeline now using an available model through the same provider. The code below uses Sora 2 through laozhang.ai — when Seedance 2.0 launches, you simply change the model name from sora-2 to seedance-2 and everything else stays the same. For a more comprehensive setup covering error handling, webhook integration, and batch processing, see our complete integration guide.

```python
import time

import requests

API_KEY = "your_api_key_here"
BASE_URL = "https://api.laozhang.ai/v1"
HEADERS = {"Authorization": f"Bearer {API_KEY}"}

# Step 1: Submit the generation job
response = requests.post(
    f"{BASE_URL}/videos",
    headers={**HEADERS, "Content-Type": "application/json"},
    json={
        "model": "sora-2",  # Change to "seedance-2" when it launches
        "prompt": "A drone shot flying over a misty mountain valley at sunrise, golden light filtering through clouds, cinematic depth of field",
        "duration": 10,
        "resolution": "1080p",
        "audio": True,  # Request native audio (support varies by model)
    },
    timeout=30,
)
response.raise_for_status()  # Fail fast on authentication or validation errors
job_id = response.json()["id"]
print(f"Job submitted: {job_id}")

# Step 2: Poll for completion (typically 30-120 seconds)
while True:
    status = requests.get(
        f"{BASE_URL}/videos/{job_id}", headers=HEADERS, timeout=30
    ).json()
    if status["status"] == "completed":
        video_url = status["output"]["video_url"]
        print(f"Video ready: {video_url}")
        break
    elif status["status"] == "failed":
        print(f"Generation failed: {status.get('error', 'Unknown error')}")
        break
    time.sleep(5)  # Poll every 5 seconds
```

For image-to-video generation, add a reference image to your request to maintain character or scene consistency across multiple videos. This is Seedance 2.0's strongest differentiator; no other model handles multimodal references as cleanly. You can include up to 12 reference files in total (at most 9 images, 3 videos, and 3 audio files) in a single request by adding a references array to the JSON body, with each entry specifying a role (such as "subject", "environment", "motion", or "audio") and either a URL or a base64-encoded file.

Production tips for the code above. The polling loop shown here is fine for testing but should be replaced with webhook callbacks in production. Many providers support a callback_url parameter in the initial request that receives a POST notification when generation completes, eliminating the need for polling entirely. If you do poll, apply exponential backoff to the status check and set an overall timeout for generations that run longer than expected: during peak hours, even fast tiers can occasionally take 3-4 minutes for complex prompts.
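The backoff-plus-timeout approach can be sketched as a small helper that wraps any status-fetching function. It assumes the terminal states are `completed` and `failed`, as in the Quick Start example. Real providers may use different status strings.

```python
import time
from typing import Callable


def wait_for_job(
    fetch_status: Callable[[], dict],
    initial_delay: float = 5.0,
    max_delay: float = 60.0,
    timeout: float = 600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Poll until the job reaches a terminal state, doubling the delay each time.

    fetch_status should return parsed status JSON, for example a wrapper around
    requests.get(f"{BASE_URL}/videos/{job_id}", ...).json(). The "completed" and
    "failed" status strings are assumptions carried over from the example above.
    """
    delay = initial_delay
    waited = 0.0
    while True:
        status = fetch_status()
        if status.get("status") in ("completed", "failed"):
            return status
        if waited + delay > timeout:
            raise TimeoutError(f"job still {status.get('status')!r} after {waited:.0f}s")
        sleep(delay)
        waited += delay
        delay = min(delay * 2, max_delay)  # 5s, 10s, 20s, ... capped at max_delay
```

The `sleep` parameter exists so the helper can be tested without real waiting. The default 10-minute timeout leaves room for the 3-4 minute peak-hour delays mentioned above.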

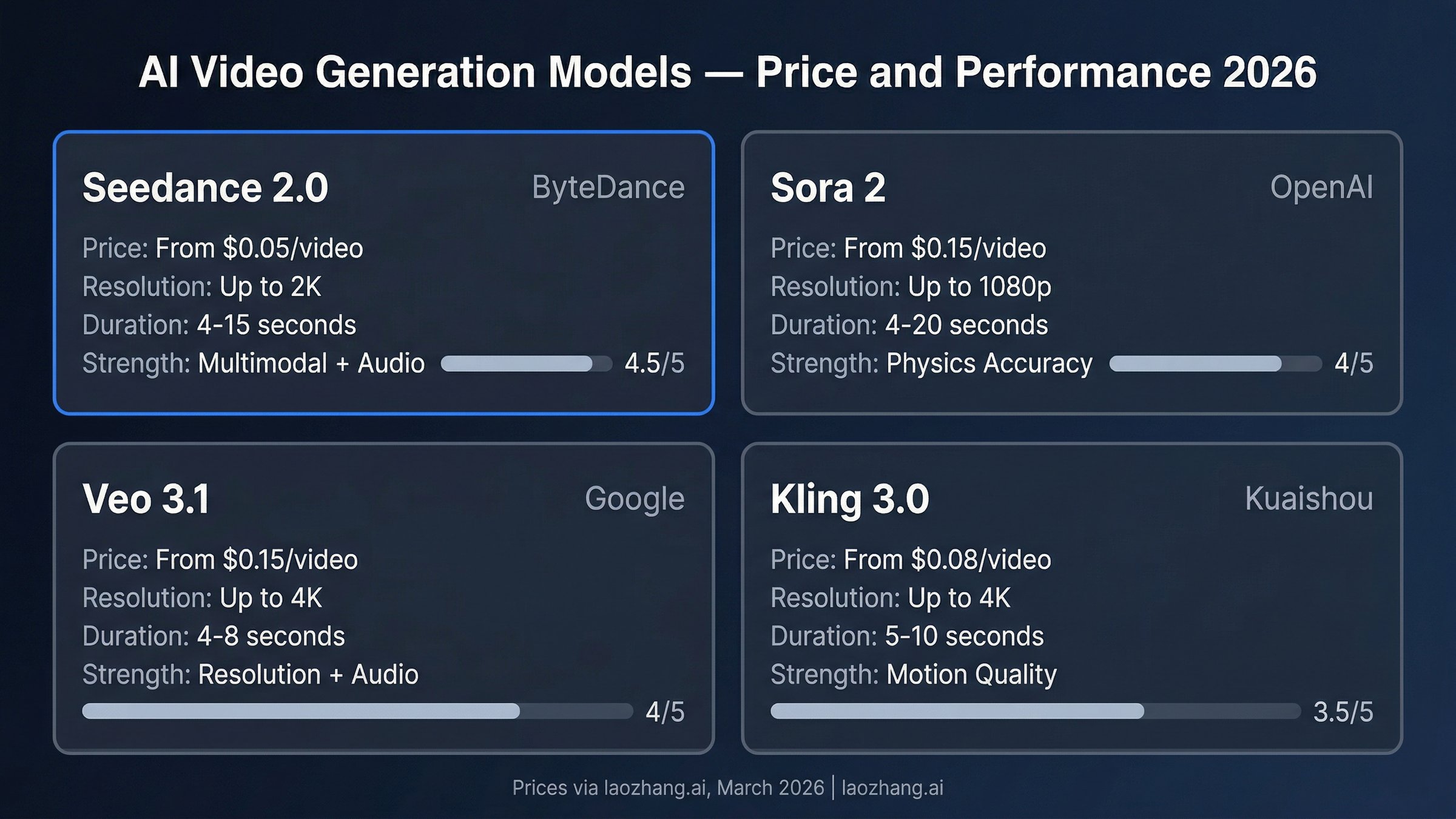

Seedance 2.0 vs Sora 2 vs Veo 3.1 vs Kling 3.0 — Price and Performance

Choosing between the leading AI video generation models in 2026 depends on your specific use case, timeline, and budget. The comparison below uses March 2026 pricing through laozhang.ai, which currently offers Sora 2 and Veo 3.1 and will add Seedance 2.0 as soon as the official API launches. Seedance 2.0 specs are based on ByteDance's published specifications and consumer platform testing. For an even more detailed breakdown, see our comprehensive video model comparison.

| Feature | Seedance 2.0 | Sora 2 | Veo 3.1 | Kling 3.0 |
|---|---|---|---|---|
| Price (via laozhang.ai) | ~$0.05/video (expected) | $0.15/video (live) | $0.15/video (live) | $0.08/video (live) |
| Max Resolution | 2K (2048x1080) | 1080p | 4K | 4K |
| Max Duration | 15 seconds | 20 seconds | 8 seconds | 10 seconds |
| Native Audio | Yes (8+ languages) | No | Yes | No |
| Multimodal Input | Text+Image+Audio+Video | Text+Image | Text+Image | Text+Image |
| Reference Files | Up to 12 | Up to 2 | Up to 2 | Up to 3 |
| Physics Accuracy | Good | Excellent | Good | Very Good |
| Generation Speed | 30-120s | 60-180s | 30-90s | 45-120s |

Choose Seedance 2.0 (when available) for multimodal reference workflows. When the API launches, Seedance 2.0 will be the clear choice if you need to maintain a consistent character across multiple marketing videos, or you want to combine a product photo with a background scene and a specific audio track in a single request. The multimodal reference system accepting 12 simultaneous inputs is unique in the market. At an expected $0.05 per video, it would also be the cheapest option by a significant margin. Until it launches, you can prototype your workflow using Sora 2 through laozhang.ai and switch seamlessly when Seedance 2.0 becomes available.

Choose Sora 2 when physical accuracy matters most. OpenAI's model still leads in simulating realistic physics — objects fall with convincing weight, fluids behave naturally, and collisions look authentic. For product demonstration videos, architectural visualizations, or any content where the audience will scrutinize whether the motion "looks right," Sora 2 justifies its 3x price premium. It also supports the longest single generation at 20 seconds, reducing the need for video stitching. Through laozhang.ai, Sora 2 is available at $0.15 per video with asynchronous API and failure-free billing — you only pay for successfully generated videos.

Choose Veo 3.1 when resolution and audio quality are paramount. Google's model pushes to native 4K output, and its audio generation rivals Seedance 2.0 in quality. The tradeoff is the shortest maximum duration at 8 seconds and a higher per-second cost. For cinematic establishing shots, nature documentaries, or any content destined for large screens, Veo 3.1's resolution advantage is meaningful. An interesting middle ground is using Veo 3.1 for hero shots and Seedance 2.0 for volume production, keeping your average cost low. Veo 3.1 is also available through laozhang.ai at $0.15 per video; see our Veo 3.1 vs Sora 2 comparison for detailed quality analysis.

Choose Kling 3.0 for motion-heavy content on a budget. Kuaishou's model offers impressive motion quality at $0.08 per video, sitting between Seedance 2.0's budget pricing and the premium Sora 2/Veo 3.1 tier. Its 4K resolution support and strong motion rendering make it a solid choice for action-oriented content like sports highlights or dynamic product showcases. The main limitation is the lack of native audio generation, requiring a separate audio synthesis step for videos that need sound. Kling 3.0 also supports fewer reference inputs than Seedance 2.0 (3 vs 12), making it less suitable for workflows that require strict visual consistency across a series of generated clips.
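You can turn the comparison into a quick filter. The sketch below encodes the table's figures and returns the cheapest model that meets a clip's hard requirements. It is a minimal sketch under these assumptions: the identifiers other than `sora-2` are hypothetical, the Seedance 2.0 price is an expected one, and the model is marked unavailable until its API actually ships.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class VideoModel:
    name: str  # identifiers other than "sora-2" are hypothetical
    price_per_video: float  # USD via laozhang.ai, per the comparison table
    max_seconds: int
    native_audio: bool
    max_references: int
    available: bool  # False until the API is actually live


# Figures from the comparison table; Seedance 2.0's price is expected, not live.
MODELS = [
    VideoModel("seedance-2", 0.05, 15, True, 12, available=False),
    VideoModel("sora-2", 0.15, 20, False, 2, available=True),
    VideoModel("veo-3.1", 0.15, 8, True, 2, available=True),
    VideoModel("kling-3.0", 0.08, 10, False, 3, available=True),
]


def cheapest_model(seconds: int, need_audio: bool = False, references: int = 0,
                   include_unreleased: bool = False) -> Optional[VideoModel]:
    """Return the cheapest model satisfying every hard requirement, or None."""
    candidates = [
        m for m in MODELS
        if m.max_seconds >= seconds
        and (m.native_audio or not need_audio)
        and m.max_references >= references
        and (m.available or include_unreleased)
    ]
    return min(candidates, key=lambda m: m.price_per_video, default=None)
```

The filter leaves out physics accuracy and resolution, which are better judged by watching sample output than by encoding them in a score.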

The real-world cost comparison becomes more nuanced at scale. For a production team generating 500 videos per month, the monthly API costs break down as follows: Seedance 2.0 via laozhang.ai at $25 (expected), Kling 3.0 at $40, and Sora 2 and Veo 3.1 at $75 each. But raw API cost is only part of the equation. You also need to factor in regeneration rates (how often the model produces unusable output that requires a retry) and post-processing costs (adding audio, upscaling, or stitching for models that lack native support for these features). If Seedance 2.0's multimodal references lower its regeneration rate as expected, and its native audio removes the need for a separate audio pipeline, the total cost of ownership gap widens further. A team that would spend $75 on Sora 2 API calls might actually spend $90-$100 in total after audio synthesis and higher retry rates, while a team spending $25 on Seedance 2.0 would face only modest extra costs from its own retries.
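The total-cost reasoning can be made explicit. This is a minimal sketch: the usable-output rates and the $0.02 per-clip audio cost are hypothetical placeholders, not measured figures, so substitute your own. Under failure-free billing, failed jobs cost nothing. Outputs that succeed but still get discarded are billed, and `usable_rate` captures those.

```python
def total_monthly_cost(videos: int, price: float, usable_rate: float,
                       audio_cost_per_video: float = 0.0) -> float:
    """Estimate monthly spend to end up with `videos` usable clips.

    usable_rate is the fraction of billed generations you keep, so each kept
    clip needs 1 / usable_rate billed attempts on average. The separate audio
    step (for models without native audio) is paid once per kept clip.
    """
    if not 0 < usable_rate <= 1:
        raise ValueError("usable_rate must be in (0, 1]")
    billed_attempts = videos / usable_rate
    return round(billed_attempts * price + videos * audio_cost_per_video, 2)


# Hypothetical rates for illustration only: 85% usable output plus $0.02/clip
# audio synthesis for Sora 2, 90% usable output for Seedance 2.0.
sora_total = total_monthly_cost(500, 0.15, usable_rate=0.85, audio_cost_per_video=0.02)  # 98.24
seedance_total = total_monthly_cost(500, 0.05, usable_rate=0.90)  # 27.78
```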

Production Tips for Optimizing Seedance 2.0 Cost and Quality

Reducing your Seedance 2.0 API costs while maintaining output quality requires understanding how the model processes prompts and how different parameters affect both generation time and quality.

Prompt engineering has the highest ROI of any optimization. A vague prompt like "a car driving on a road" forces the model to make dozens of creative decisions, often resulting in generic output that requires regeneration. A specific prompt like "A red sports car driving along a winding coastal highway at golden hour, camera tracking from the side, ocean waves visible in the background, cinematic depth of field" produces usable output on the first attempt at least 80% of the time. Each regeneration costs another $0.05-$2.47 depending on your platform, so investing 2 extra minutes in prompt crafting can save significant money at scale. Always include specific details about lighting conditions, camera movement, mood, and environment.
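To make those details hard to forget, you can assemble prompts from named fields and flag the ones left empty. This is a minimal sketch. The field names mirror the advice above and are a convention of this example, not an API requirement.

```python
def build_prompt(subject: str, lighting: str = "", camera: str = "",
                 environment: str = "", mood: str = "") -> tuple[str, list[str]]:
    """Assemble a prompt from the recommended details.

    Returns the comma-joined prompt plus the names of any details left empty,
    so vague prompts can be flagged before they cost a generation.
    """
    details = {
        "subject": subject,
        "lighting": lighting,
        "camera": camera,
        "environment": environment,
        "mood": mood,
    }
    missing = [name for name, value in details.items() if not value.strip()]
    prompt = ", ".join(value.strip() for value in details.values() if value.strip())
    return prompt, missing


prompt, missing = build_prompt(
    "A red sports car driving along a winding coastal highway",
    lighting="golden hour",
    camera="camera tracking from the side",
    environment="ocean waves visible in the background",
    mood="cinematic depth of field",
)
```

A review step can then reject or warn on any prompt whose `missing` list is not empty. The vague "a car driving on a road" example from above would be flagged for all four details.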

Start with 720p for prototyping, upgrade for production. A fast 720p tier, such as Atlas Cloud's Fast tier listed at $0.022 per second, is perfectly adequate for testing prompts and iterating on creative direction. Once you have a prompt that consistently produces the result you want, switch to a full-resolution tier for the final output. In practice, most teams go through 3-5 prompt iterations before landing on a version they want to produce at full resolution, so the savings compound quickly. For 10-second clips, five 720p test runs at $0.22 each plus one 2K final at $1.80 total $2.90, versus $10.80 for six 2K runs at $1.80 each. The prototyping stage alone drops from $9.00 to $1.10, a reduction of nearly 90%.
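The two-stage arithmetic is easy to rerun with your own rates. Here is a minimal sketch using the figures from the paragraph above.

```python
def two_stage_cost(drafts: int, draft_cost: float, final_cost: float) -> float:
    """Prototype at low resolution, then render only the chosen prompt at full quality."""
    return round(drafts * draft_cost + final_cost, 2)


def single_stage_cost(drafts: int, final_cost: float) -> float:
    """Render every draft and the final take at full quality."""
    return round((drafts + 1) * final_cost, 2)


# Figures from the paragraph above: 10-second clips, $0.022/s at 720p, $1.80 per 2K render.
draft_cost = round(0.022 * 10, 2)  # 0.22
print(two_stage_cost(5, draft_cost, 1.80))  # 2.9
print(single_stage_cost(5, 1.80))  # 10.8
```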

Use the multimodal reference system to reduce trial and error. Instead of trying to describe a character's appearance in text (and hoping the model interprets it correctly), provide a reference image. Instead of describing a specific camera movement, provide a reference video showing the movement you want. Each reference file you add reduces the ambiguity in your request and increases first-attempt success rates. For teams generating branded content, maintaining a library of approved reference images for characters, logos, and environments can cut regeneration rates in half.
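When you pass references, validating them client-side avoids rejected requests. This minimal sketch enforces the limits described in the Quick Start (12 files in total, at most 9 images, 3 videos, and 3 audio files) and builds a references array. The Seedance 2.0 API has not launched, so the field names (`type`, `role`, `url`) are assumptions. This version handles only URLs, not base64-encoded files.

```python
from collections import Counter

# Limits described in the Quick Start: 12 files total, at most 9 images, 3 videos, 3 audio.
MAX_TOTAL = 12
MAX_PER_KIND = {"image": 9, "video": 3, "audio": 3}


def build_references(files: list[tuple[str, str, str]]) -> list[dict]:
    """Turn (kind, role, url) tuples into a references array, enforcing the caps.

    The output field names are assumptions about the eventual Seedance 2.0
    request schema, which has not been published.
    """
    if len(files) > MAX_TOTAL:
        raise ValueError(f"{len(files)} references exceeds the limit of {MAX_TOTAL}")
    counts = Counter(kind for kind, _, _ in files)
    for kind, count in counts.items():
        if kind not in MAX_PER_KIND:
            raise ValueError(f"unknown reference kind: {kind}")
        if count > MAX_PER_KIND[kind]:
            raise ValueError(f"{count} {kind} references exceeds the limit of {MAX_PER_KIND[kind]}")
    return [{"type": kind, "role": role, "url": url} for kind, role, url in files]


# Example: a brand-library character image, a studio backdrop, and a jingle.
example_refs = build_references([
    ("image", "subject", "https://example.com/hero.png"),
    ("image", "environment", "https://example.com/studio.jpg"),
    ("audio", "audio", "https://example.com/jingle.mp3"),
])
```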

Implement smart caching and deduplication. If your application generates videos for similar prompts (for example, product videos with different color variants), cache the base generation and only regenerate when the prompt differs meaningfully. Hash your prompt + parameters combination and check against previous results before submitting a new job. At scale, this simple optimization can reduce API costs by 20-40%. Consider storing generated videos in object storage (S3 or equivalent) with metadata tags matching the prompt hash, resolution, and model version. When a new request comes in, check whether an identical or sufficiently similar generation already exists before spending another $0.05-$2.47 on a new API call. For e-commerce teams generating product videos across hundreds of SKUs with minor variations, this caching strategy alone can cut monthly API spend by thousands of dollars.
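The hash-then-check step might look like this minimal sketch, which keys results on a SHA-256 of the model, prompt, and parameters. It catches only exact repeats (after trimming whitespace), not the "sufficiently similar" matches mentioned above. The in-memory dict stands in for object storage such as S3, and `generate` is any function you supply that submits a job and returns a video URL.

```python
import hashlib
import json
from typing import Callable


def job_key(model: str, prompt: str, **params) -> str:
    """Stable SHA-256 key for a model + prompt + parameters combination."""
    canonical = json.dumps(
        {"model": model, "prompt": prompt.strip(), "params": params},
        sort_keys=True,  # parameter order must not change the key
        separators=(",", ":"),
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class VideoCache:
    """In-memory stand-in for an object store keyed by job_key()."""

    def __init__(self) -> None:
        self._urls: dict[str, str] = {}

    def get_or_generate(self, generate: Callable[..., str], model: str,
                        prompt: str, **params) -> str:
        key = job_key(model, prompt, **params)
        if key not in self._urls:
            # Only a cache miss triggers (and pays for) a new generation.
            self._urls[key] = generate(model, prompt, **params)
        return self._urls[key]
```

The model name is part of the key, so upgrading from one model to another naturally invalidates old results instead of serving them as if they came from the new model.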

Frequently Asked Questions

Is the official Seedance 2.0 API from BytePlus available yet?

No. As of March 31, 2026, the official BytePlus API launch remains delayed with no confirmed date. The delay stems from copyright compliance issues related to the model's training data — specifically, pushback from Hollywood studios questioning whether copyrighted film footage was used during training. ByteDance has not publicly addressed these concerns or provided a revised timeline. The model is available through the consumer platform CapCut Dreamina, but there is no legitimate API access yet. Official distribution partners like laozhang.ai will be among the first to offer API access when it launches — register now to be notified.

How much does Seedance 2.0 API cost per video?

Since the API has not launched yet, all pricing is preliminary. Based on official partner agreements and ByteDance documentation, the expected pricing through official distribution partners like laozhang.ai is approximately $0.05 per video as a flat rate regardless of duration. The official BytePlus pricing, when available, is expected to fall in the $0.10-$0.80 per minute range. Be cautious of third-party sites advertising specific per-second rates — most cannot actually deliver the service they are advertising.

Can I use Seedance 2.0 API outside of China?

Yes. When the API launches, official distribution partners like laozhang.ai will provide global access with standard credit card payments — no Chinese phone number, TG account, or Volcengine registration needed. In the meantime, the consumer platform CapCut Dreamina already works internationally with a Google or email account, though it provides browser-based access rather than API integration. You can register at laozhang.ai now to get an API key and start using other video models (Sora 2, Veo 3.1) while waiting for Seedance 2.0.

What is the maximum video length Seedance 2.0 can generate?

A single API call generates videos between 4 and 15 seconds. For longer content, you need to generate multiple clips and stitch them together. The multimodal reference system helps maintain consistency across clips.
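Planning the clips for a longer video is simple arithmetic. This minimal sketch assumes the 4-15 second per-request limit stated above. It uses the fewest clips possible and spreads the length evenly across them. It does not account for any overlap you might want for crossfades when stitching.

```python
def plan_clips(total_seconds: int, max_clip: int = 15, min_clip: int = 4) -> list[int]:
    """Split a target length into clip durations within [min_clip, max_clip] seconds."""
    if total_seconds < min_clip:
        raise ValueError(f"target must be at least {min_clip} seconds")
    n = -(-total_seconds // max_clip)  # ceiling division: fewest clips that fit
    base, extra = divmod(total_seconds, n)
    # The first `extra` clips get one extra second so the durations sum exactly.
    return [base + 1] * extra + [base] * (n - extra)
```

For example, a 31-second spot becomes three clips of 11, 10, and 10 seconds rather than 15, 15, and a 1-second fragment that the model could not generate.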

Is Seedance 2.0 better than Sora 2?

It depends on your priorities and use case. Seedance 2.0 is significantly cheaper ($0.05 vs $0.15 per video), supports more input modalities (4 vs 2), accepts up to 12 reference files simultaneously, and generates native synchronized audio. Sora 2 has superior physics simulation — objects falling, fluids flowing, and collisions look more realistic — and supports longer single generations up to 20 seconds compared to Seedance 2.0's 15-second limit. For most commercial video production workflows where cost efficiency and visual consistency matter more than physics accuracy, Seedance 2.0 offers substantially better value at roughly one-third the price.

Does Seedance 2.0 generate audio automatically?

Yes. Seedance 2.0 is one of the few video generation models that produces synchronized audio — including sound effects, ambient sound, and dialogue in 8+ languages — as part of the same generation pass. You can disable audio generation if not needed.

What resolutions does Seedance 2.0 support?

The model supports resolutions from 480p up to 2K (2048x1080) with aspect ratios including 16:9, 9:16, 4:3, 3:4, 21:9, and 1:1. The exact resolution options vary by provider and pricing tier.
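Checking these limits client-side catches a bad request before it costs an API call. This is a minimal sketch. The duration and aspect-ratio limits come from this FAQ. The resolution labels are hypothetical, since the exact options vary by provider.

```python
# Limits quoted in this FAQ. Resolution labels are hypothetical tier names;
# adjust them to whatever your provider actually accepts.
ASPECT_RATIOS = {"16:9", "9:16", "4:3", "3:4", "21:9", "1:1"}
RESOLUTIONS = ("480p", "720p", "1080p", "2k")
MIN_SECONDS, MAX_SECONDS = 4, 15


def validate_request(duration: int, resolution: str, aspect_ratio: str) -> list[str]:
    """Return a list of problems; an empty list means the request looks valid."""
    problems = []
    if not MIN_SECONDS <= duration <= MAX_SECONDS:
        problems.append(f"duration {duration}s outside {MIN_SECONDS}-{MAX_SECONDS}s")
    if resolution.lower() not in RESOLUTIONS:
        problems.append(f"unsupported resolution {resolution!r}")
    if aspect_ratio not in ASPECT_RATIOS:
        problems.append(f"unsupported aspect ratio {aspect_ratio!r}")
    return problems
```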

How long does video generation take?

Typical generation times range from 30 to 120 seconds depending on the resolution, duration, and current server load. During peak hours, generation can occasionally take 3-4 minutes. The API uses an asynchronous job pattern — you submit a request, receive a job ID, then poll for completion or configure a webhook callback. For production applications, webhook callbacks are strongly recommended over polling to reduce unnecessary API calls and improve response time. Most third-party providers support a callback_url parameter in the initial generation request.
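If your provider supports a callback_url, the receiving side can be as small as the sketch below, which uses Python's standard-library `http.server`. The payload shape is an assumption that mirrors the polling response in the Quick Start. Check your provider's documentation for the real schema and for any signature you should verify before trusting a callback.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Finished jobs keyed by job ID; in production this would be a database or queue.
RESULTS: dict[str, dict] = {}


class CallbackHandler(BaseHTTPRequestHandler):
    """Accepts the POST a provider sends to callback_url when a job finishes.

    The assumed payload ({"id": ..., "status": ..., "output": {...}}) mirrors the
    Quick Start polling response; it is not a documented schema.
    """

    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length))
            RESULTS[payload["id"]] = payload
        except (ValueError, KeyError, TypeError):
            self.send_response(400)  # malformed or unexpected body
            self.end_headers()
            return
        self.send_response(204)  # acknowledge quickly; do heavy work elsewhere
        self.end_headers()

    def log_message(self, format, *args) -> None:
        pass  # silence the default per-request stderr logging


def serve(port: int = 8080) -> None:
    """Run the receiver; point callback_url at http://<your-host>:<port>/."""
    HTTPServer(("0.0.0.0", port), CallbackHandler).serve_forever()
```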

What happens if a generation fails — do I still get charged?

This depends on the provider. Platforms like laozhang.ai follow a failure-free billing model where you only pay for successfully generated videos — if the generation fails due to content policy violations, server errors, or timeout issues, no charge is applied to your account. This is a significant advantage for production workloads where occasional failures are inevitable, as it means your actual cost per usable video stays consistent with the advertised per-video price. Always verify the billing policy with your chosen provider before committing to high-volume usage.