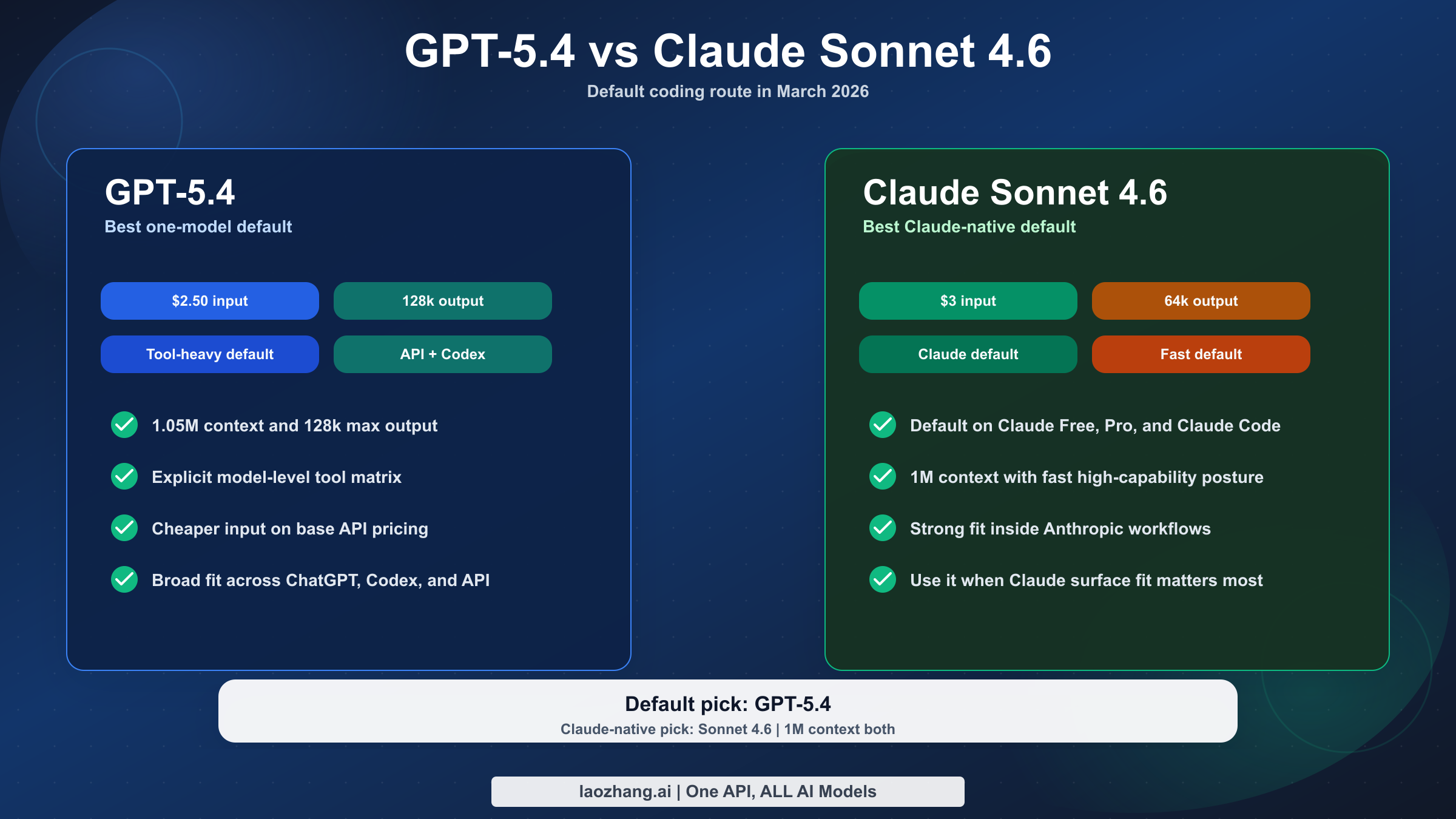

Short answer: for most developers, GPT-5.4 is the better default in March 2026. OpenAI's current launch and API docs make GPT-5.4 the broader frontier model for coding, long-context work, and agentic workflows across ChatGPT, Codex, and the API. It roughly matches Claude Sonnet 4.6 on the headline million-token context class. Anthropic's live docs now need a more precise read: the original February 17 launch page still preserves the beta-era framing for 1M context, while the current models overview lists Sonnet 4.6 with 1M context and 64k max output, and Anthropic's current context windows guide plus its March 13, 2026 "What's new in Claude 4.6" notes say the full 1M token context window is now generally available for Sonnet 4.6 at standard pricing. GPT-5.4 still beats Sonnet 4.6 on max output (128k vs 64k), publishes a much clearer model-level tool matrix, and is currently cheaper on base API input pricing (\$2.50 vs \$3 per million tokens).

That does not make Claude Sonnet 4.6 a bad model. Anthropic launched Sonnet 4.6 on February 17, 2026 as its most capable Sonnet model, made it the default on Claude Free and Pro plus Claude Cowork, and also shipped it across Claude Code, the API, and major cloud platforms. If you already live in the Claude ecosystem, prefer Anthropic's workflow style, or want the strongest Claude default without paying Opus pricing, Sonnet 4.6 still makes sense. But the clean spec-and-pricing comparison is more favorable to GPT-5.4 than many ranking pages suggest.

This guide uses current OpenAI and Anthropic pages checked on March 19, 2026. It also does something many comparison pages skip: it separates directly comparable facts from benchmark claims that are not perfectly apples-to-apples.

TL;DR

If you only want one recommendation, choose GPT-5.4 as your default model for coding and agentic work. Choose Claude Sonnet 4.6 when your workflow is already centered on Claude, Claude Code, or Anthropic's platform features and you care more about ecosystem fit than about winning the spec sheet.

| Category | GPT-5.4 | Claude Sonnet 4.6 | Practical takeaway |

|---|---|---|---|

| Release date | March 5, 2026 | February 17, 2026 | Both are current-generation models, but GPT-5.4 is newer |

| Product role | OpenAI's frontier default for professional, coding, and agentic work | Anthropic's fastest high-capability default | GPT-5.4 is the broader default; Sonnet 4.6 is the Claude-native default |

| Input price | $2.50 / 1M | $3 / 1M | GPT-5.4 is cheaper on base published input pricing |

| Output price | $15 / 1M | $15 / 1M | Tie on base published output pricing |

| Context window | 1,050,000 | 1M | Effectively tied on headline context size; Anthropic's current models overview plus the context windows guide and March 13 release notes support treating Sonnet 4.6 as a full 1M-context model at standard pricing |

| Max output | 128,000 | 64,000 | GPT-5.4 is better for long single-pass outputs |

| App availability | ChatGPT (as GPT-5.4 Thinking), plus API and Codex | Default on Claude Free and Pro plus Claude Cowork; also Claude Code, API, and major clouds | Sonnet 4.6 is more broadly surfaced inside Anthropic's own products |

| Tool posture | Explicit published support for web search, file search, image generation, code interpreter, hosted shell, apply patch, skills, computer use, MCP, tool search | Strong Claude platform tool stack around Sonnet 4.6, including adaptive thinking, compaction, web search, fetch, code execution, memory, and tool search | GPT-5.4 is easier to justify when you want one model with a clearly published tool matrix |

| Best for | One-model default, long outputs, tool-heavy agents, Codex/API alignment | Claude-first workflows, Claude Code, fast Anthropic default, people avoiding Opus pricing | Choose based on surface and workflow, not brand loyalty |

The surprising detail here is pricing. A lot of pages still assume Sonnet must be the cheaper option. On the current official published base prices checked March 19, 2026, that is not true: GPT-5.4 is cheaper on input and tied on output.

What Actually Changed In March 2026

The most important difference is not a benchmark number. It is product positioning.

OpenAI's GPT-5.4 launch page says GPT-5.4 is its most capable and efficient frontier model for professional work. OpenAI also says GPT-5.4 brings together recent advances in reasoning, coding, and agentic workflows in one model, and explicitly states that GPT-5.4 incorporates the coding capabilities of GPT-5.3-Codex. That matters because it tells you how OpenAI wants developers to choose: not "pick a reasoning model for one task and a coding model for another by default," but "use GPT-5.4 as the mainline default unless you have a specialist reason not to."

Anthropic's Claude Sonnet 4.6 launch page tells a different story. Sonnet 4.6 is framed as the best capability-per-speed balance in the Claude line. Anthropic says it is a full upgrade across coding, computer use, long-context reasoning, agent planning, knowledge work, and design. The February 17 launch page introduced its 1M context window as beta, and that historical wording is still visible today. At the same time, Anthropic's current live docs are more permissive: the current models overview lists Sonnet 4.6 with 1M context and 64k max output; the current context windows guide says Claude Sonnet 4.6 and Claude Opus 4.6 now have 1M token context windows generally available; and Anthropic's March 13, 2026 "What's new in Claude 4.6" notes say the full 1M token context window is now available for Sonnet 4.6 at standard pricing. The practical reading is that Anthropic's launch page preserves the beta-era rollout wording, while the current models overview, context guide, and release notes support treating Sonnet 4.6 as a 1M-context, 64k-output model. GPT-5.4 still keeps the better base input price and larger output ceiling. More importantly for actual adoption, Anthropic made Sonnet 4.6 the default on free and Pro Claude plans plus Claude Cowork, while also making it available in Claude Code, the API, and major cloud platforms. That gives Sonnet 4.6 a real advantage in product reach, even if the raw API spec sheet is still less favorable than many people expect.

That is why this comparison is more subtle than "which lab is ahead this week." GPT-5.4 is the stronger default route if you want one model across API, Codex, and tool-heavy work. Sonnet 4.6 is the stronger Claude-default route if your real choice is happening inside Claude.ai or Claude Code and you do not want to step up to Opus 4.6.

This also explains why the SERP is messy. Many ranking pages broaden the query into "GPT-5 vs Claude" or even "GPT-5.4 vs Claude Opus 4.6." That still satisfies broad comparison intent, but it fails the more useful question: what should I actually set as my default model right now?

If you want the OpenAI-side context for how GPT-5.4 sits above the older specialist lane, our guide to GPT-5.4 vs GPT-5.3-Codex goes deeper on that split.

What You Can Compare Directly And What You Cannot

This is where most model-comparison articles quietly get sloppy.

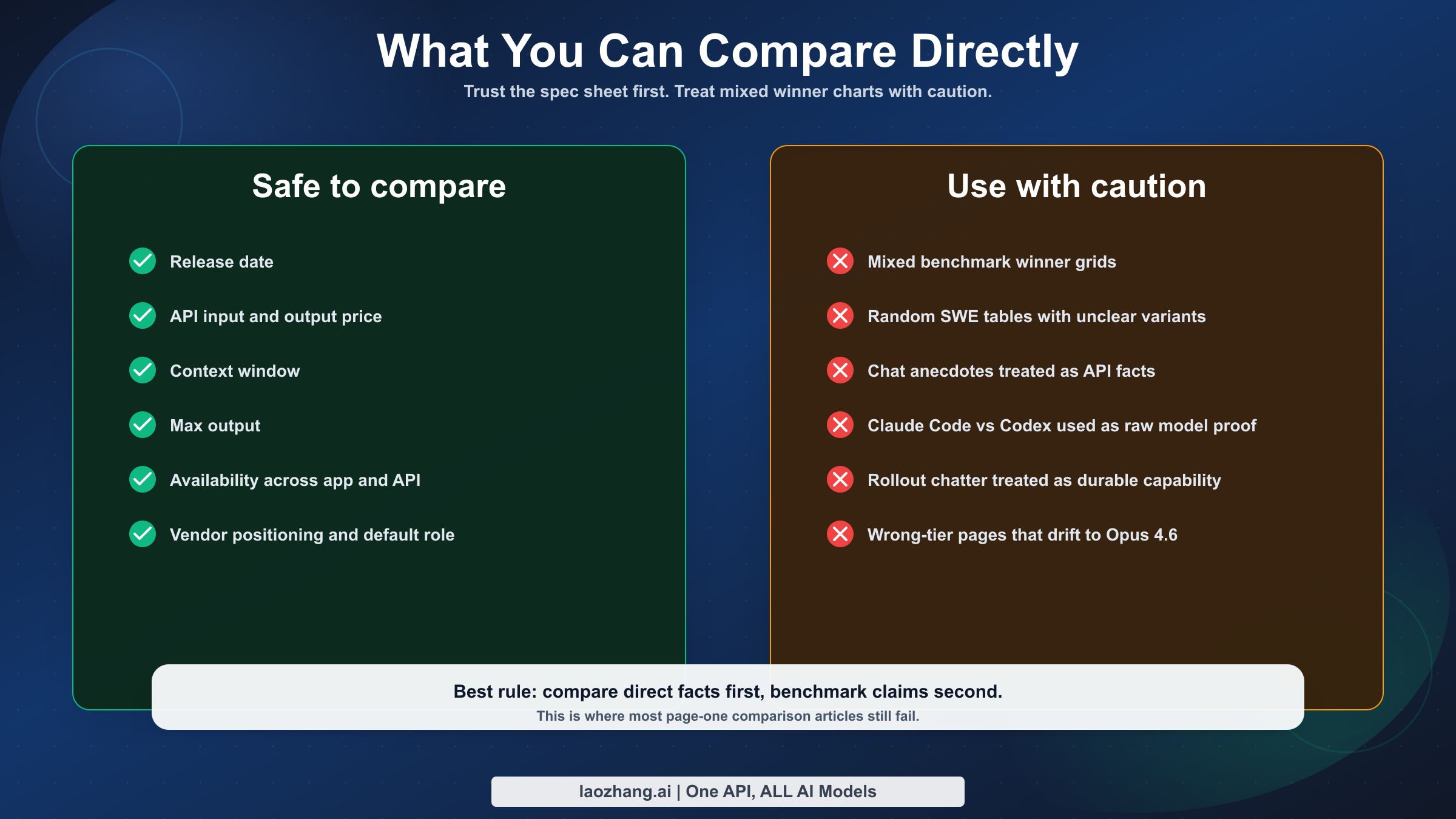

You can compare these things directly because both companies publish them clearly:

| Safe direct comparison | Why it is safe |

|---|---|

| Release date | Both companies publish launch dates on official release pages |

| API input and output price | Both companies publish current base API pricing |

| Context window | Both companies publish current context limits |

| Max output tokens | Both companies publish current output caps |

| App and API availability | Both companies publish current rollout surfaces |

| Product positioning | Both launch pages explicitly explain where each model fits |

You should not treat these as clean apples-to-apples without caveats:

| Risky comparison area | Why it is messy |

|---|---|

| Benchmark winner grids | OpenAI and Anthropic do not publish one shared same-settings GPT-5.4 vs Sonnet 4.6 chart |

| SWE-bench claims from random blogs | Variants, prompts, harnesses, and trial counts differ |

| Terminal and coding anecdotes | Claude Code, Codex, API, and chat products are related but not identical surfaces |

| Community rollout chatter | Useful as friction signals, but weak as durable evidence about model capability |

OpenAI's launch page mostly compares GPT-5.4 against GPT-5.3-Codex and GPT-5.2, not against Sonnet 4.6. Anthropic's release and system card mostly compare Sonnet 4.6 against earlier Sonnet or Opus behavior and explain methodology in Anthropic's own evaluation setup. That means a lot of third-party "winner" charts are filling in gaps with inference, not with a clean vendor-to-vendor benchmark release.

Even so, the official benchmark signals still matter directionally. OpenAI's launch page gives GPT-5.4 a strong coding and tool-use profile, including 57.7% on SWE-Bench Pro (Public), 75.1% on Terminal-Bench 2.0, 82.7% on BrowseComp, and 54.6% on Toolathlon in OpenAI's published setup. Anthropic's release page takes a different approach: instead of a clean cross-vendor table, it emphasizes that early Claude Code users preferred Sonnet 4.6 over Sonnet 4.5 roughly 70% of the time, and even preferred it to Opus 4.5 59% of the time. That is useful evidence that Sonnet 4.6 is a serious coding model, but it is not the same thing as a clean head-to-head benchmark against GPT-5.4.

Anthropic's own wording also matters. On the Sonnet 4.6 launch page, Anthropic still says Opus 4.6 remains the strongest option for tasks demanding the deepest reasoning, such as codebase refactoring and multi-agent coordination. That tells you how Anthropic itself sees the ladder: Sonnet 4.6 is the fast, capable default, but not the absolute ceiling inside the Claude family. OpenAI, by contrast, is explicitly telling developers to use GPT-5.4 as the mainline frontier default. That positioning difference is one reason the final verdict here is not especially close.

That does not mean benchmarks are useless. It means they should be interpreted as supporting context, not as license to publish a fake precision table. The more reliable way to guide a buyer here is:

- compare published spec and pricing facts directly

- compare product positioning directly

- compare workflow fit honestly

- use benchmark claims only where the evaluation setup is transparent enough to trust

That is also why a page like this can beat the current result average. The current first page is full of pages that want the confidence of a hard verdict without earning it.

Pricing, Context Window, Output, And Tools

For most developers, this section matters more than any leaderboard screenshot.

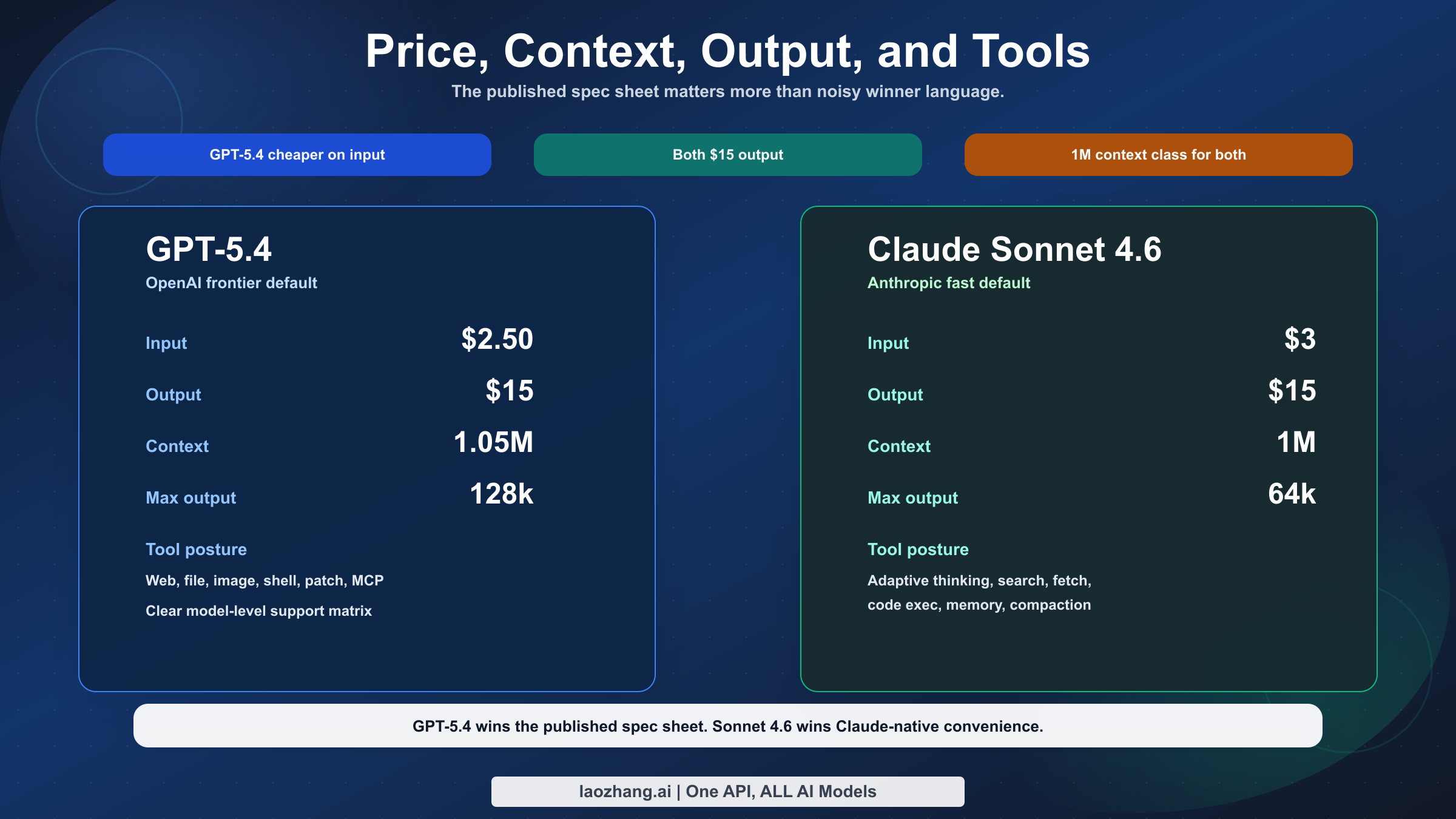

The first important fact is pricing. According to the current OpenAI GPT-5.4 model page, GPT-5.4 costs $2.50 input, $0.25 cached input, and $15 output per million tokens. According to Anthropic's current models overview, Claude Sonnet 4.6 costs $3 input and $15 output per million tokens. So on base published API pricing, GPT-5.4 is cheaper on input and equal on output.

That API view is only part of the buying decision, because many users are not paying per token first. They are choosing by product surface first. On the OpenAI side, GPT-5.4 launched in ChatGPT (as GPT-5.4 Thinking), the API, and Codex. On the Claude side, Anthropic says Sonnet 4.6 is already the default on Free and Pro, plus Claude Cowork, while also being available in Claude Code, the API, and major clouds. That means Sonnet 4.6 can still be the more convenient choice for a lot of non-API users even when it is not the cheaper API model.

That does not tell the whole story, because OpenAI also publishes an important caveat: prompts above 272K input tokens on GPT-5.4 are charged at 2x input and 1.5x output for the full session. In other words, GPT-5.4's huge context window is real, but the far end of that context window becomes materially more expensive. If your workflow constantly lives in the extreme long-context range, you should not treat the headline price as the only cost signal.

The second important fact is context and output. Both models now sit at roughly the same headline context scale: GPT-5.4 has 1,050,000 tokens of context, and Sonnet 4.6 is listed at 1M in Anthropic's current models overview. Anthropic's historical rollout still matters because the launch page introduced 1M as beta, and that wording remains on the release page. But the current live docs are more expansive: Anthropic's context windows guide says Sonnet 4.6 now has a generally available 1M-token window, and Anthropic's March 13, 2026 "What's new in Claude 4.6" notes say the full 1M token context window is available for Sonnet 4.6 at standard pricing. So the safest current official read is not "the beta wording vanished," but "Anthropic's launch page preserves the rollout history while the current models overview, context guide, and release notes support treating Sonnet 4.6 as a 1M-context, 64k-output model." The more meaningful difference is max output: GPT-5.4 publishes 128k max output, while Sonnet 4.6 publishes 64k. If you routinely ask for long single-pass outputs, complex rewrites, or wide code transformations, GPT-5.4 has more headroom.

The third important fact is tool posture. OpenAI's current model page gives a very explicit tool matrix for GPT-5.4: web search, file search, image generation, code interpreter, hosted shell, apply patch, skills, computer use, MCP, and tool search are all listed as supported. Anthropic's Sonnet 4.6 story is a little different. The release page and current docs describe a strong Claude platform stack around Sonnet 4.6, including adaptive thinking, extended thinking, context compaction in beta, web search, fetch, code execution, memory, tool search, and programmatic tool calling. That is real capability. But it is documented more as a platform workflow than as a single clean model-spec matrix.

In practice, that means GPT-5.4 is easier to justify as the one default model for tool-heavy agentic work. Sonnet 4.6 is easier to justify when you already prefer the Claude platform's working style and want the best high-speed default inside that ecosystem.

There is also a straightforward speed signal in the vendor docs. Anthropic labels Sonnet 4.6 as the best balance of speed and intelligence and marks its comparative latency as Fast in the models overview. OpenAI lists GPT-5.4 speed as Medium on the API model page. That does not prove Sonnet 4.6 is universally faster in every real workload, but it does support the idea that Sonnet 4.6 is meant to feel like the faster strong default, while GPT-5.4 is meant to be the broader heavy-duty default.

So the practical split is this:

- if you are paying through an API and routing tasks automatically, GPT-5.4 is easier to defend

- if you are choosing the default experience inside Anthropic's own products, Sonnet 4.6 is easier to adopt

- if you need the broadest one-model tool story, GPT-5.4 has the cleaner published case

- if you want the strongest non-Opus Claude default, Sonnet 4.6 is the obvious answer

Which Model Should You Use For Each Workflow

This is the part that should actually change your decision.

| Workflow | Better pick | Why |

|---|---|---|

| One default API model for coding plus tools | GPT-5.4 | Broader published tool support, cheaper input, same output price, higher max output |

| Long-context repo work with large final outputs | GPT-5.4 | Same class of context, but more output headroom |

| Tool-heavy agentic jobs that combine search, shell, patching, and MCP | GPT-5.4 | OpenAI publishes the clearer model-level tool matrix |

| Claude.ai or Claude Code as your main surface | Claude Sonnet 4.6 | It is the native Anthropic default and the mainline high-capability choice in that ecosystem |

| Free-tier or Pro-tier Claude usage | Claude Sonnet 4.6 | Anthropic made it the default on Free and Pro plans |

| Anthropic-centered enterprise stack | Claude Sonnet 4.6 | Better fit if your surrounding tooling, governance, and habits already assume Claude |

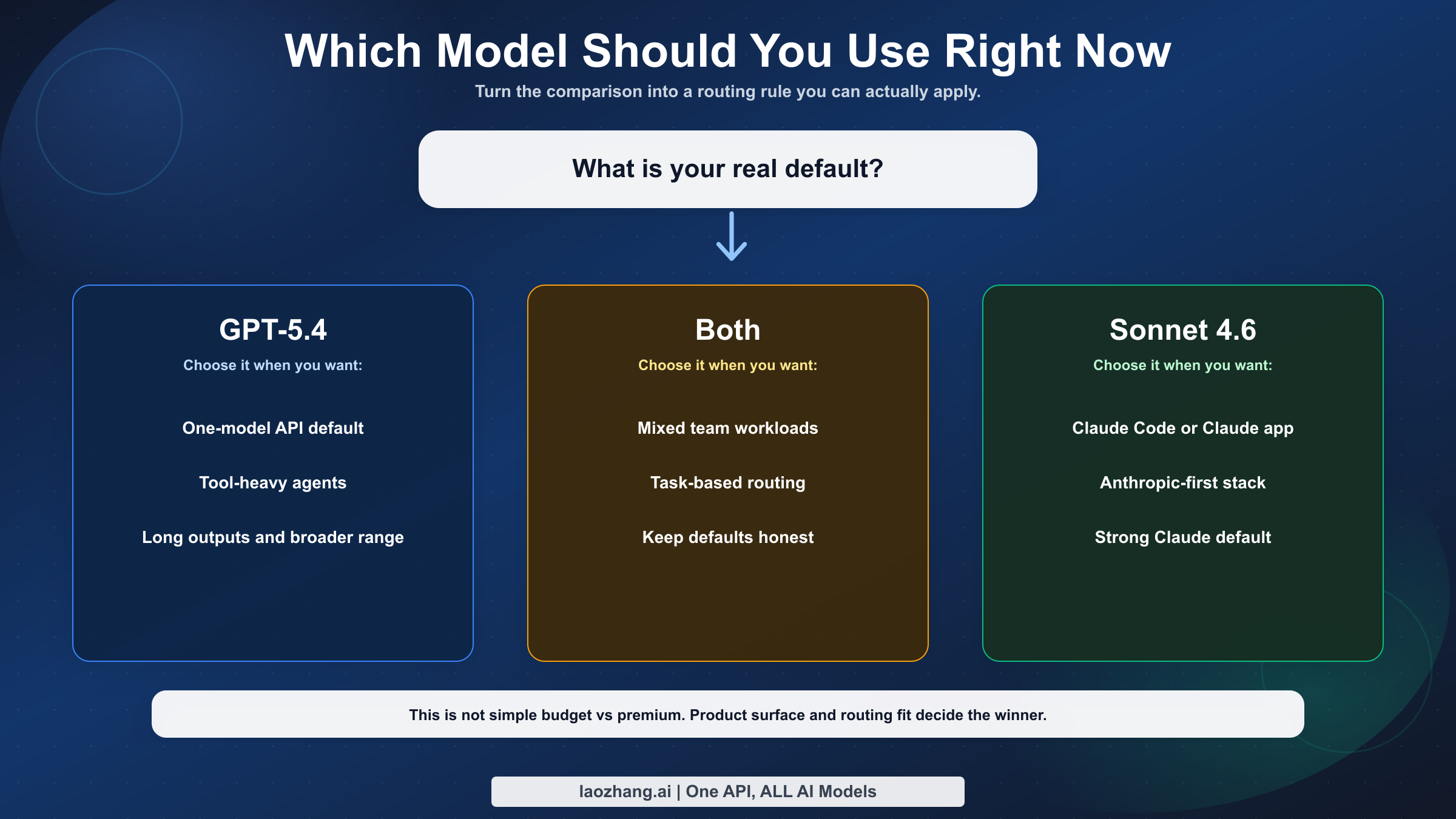

| Mixed team that can route by task | Both | Use GPT-5.4 as the broad default and Sonnet 4.6 as the Claude-native secondary lane |

For a solo developer or a small team choosing one API default, GPT-5.4 is the better answer more often than not. It is newer, cheaper on input, equal on output, better on max output, and easier to defend when tasks drift from "write code" into "use tools, search docs, patch files, and work across a long chain of steps."

For a Claude-first team, the answer changes. If your real question is not "which raw model wins?" but "which model should we use inside Claude Code, Claude.ai, and the Anthropic stack we already run?" then Sonnet 4.6 becomes much easier to justify. It is the default there for a reason. Anthropic is clearly trying to make Sonnet 4.6 the model you reach for first, reserving Opus 4.6 for the deepest and most expensive tasks.

For teams routing requests automatically, the smartest answer may be both. Default to GPT-5.4 when the job looks tool-heavy, long-context, or likely to benefit from larger outputs. Keep Sonnet 4.6 as a secondary route for Claude-native usage, fast strong responses, and Anthropic-centered workflows. If you already use a gateway such as laozhang.ai to keep multiple providers behind one OpenAI-compatible endpoint, this kind of split is straightforward to operationalize without forcing the rest of the stack to care which vendor sits underneath.

A simple routing rule works well in practice:

- send repo-wide planning, tool-using tasks, browser-backed research, and long output generation to GPT-5.4

- send Claude Code sessions, Anthropic-native review loops, and teams already standardized on Claude workflows to Sonnet 4.6

- reserve Opus 4.6 or GPT-5.4 Pro only for the narrower slice of work where the extra reasoning ceiling clearly pays for itself

That is not fence-sitting. It is just a more realistic answer than pretending one model dominates every surface equally.

If your decision is really about developer products rather than raw model IDs, our Claude Code vs Codex guide is useful because it shows how much product surface can change the lived experience even when the underlying model comparison looks similar on paper.

Where Each Model Wins

The wrong conclusion from this article would be "Sonnet 4.6 is irrelevant." It is not.

Claude Sonnet 4.6 is the smarter pick when your workflow is already anchored in Claude Code or Claude.ai, when you want Anthropic's current platform features and defaults, when Free or Pro plan availability matters, or when you specifically want the strongest Claude default without moving up to Opus 4.6 pricing. It also remains attractive for teams that have already standardized on Anthropic's API patterns and do not want to split their agent stack across providers unless the gain is clearly worth it.

GPT-5.4 is worth paying for when you want a single default across ChatGPT, Codex, and the API, when your agents need a broad tool surface, when you value larger single-pass outputs, or when you simply want the cleaner spec-and-pricing story. In this specific comparison, GPT-5.4 is unusual because the stronger default model is also not obviously the more expensive one on base API pricing.

The cleaner way to phrase the verdict is this:

- If you want the best single default model, choose GPT-5.4.

- If you want the best Claude-native default, choose Claude Sonnet 4.6.

- If you are a serious team routing by task, keep both.

That answer is less dramatic than a typical "X destroys Y" headline, but it is much more usable.

FAQ

Is GPT-5.4 better than Claude Sonnet 4.6 for coding?

Usually yes, if what you want is one default model for coding plus broader agentic work. GPT-5.4's current published profile is stronger overall: cheaper input, the same output price, equal-class context, more max output, and a clearer tool matrix. Sonnet 4.6 still makes sense in Claude-first workflows, but it is harder to justify as the universal default.

Is Claude Sonnet 4.6 cheaper than GPT-5.4?

No on the current official base API prices checked on March 19, 2026. GPT-5.4 is listed at $2.50 / 1M input and $15 / 1M output. Claude Sonnet 4.6 is listed at $3 / 1M input and $15 / 1M output. A lot of ranking pages still imply Sonnet is the cheaper option, but the current official published prices do not support that.

Can Claude Sonnet 4.6 replace GPT-5.4?

Yes in some workflows, especially if you already live in Claude Code or Claude.ai and do not need OpenAI's broader model-level tool list. No if you want one model that is easier to justify across API routing, Codex, long single-pass outputs, and tool-heavy agentic tasks.

Should teams use both models?

Often yes. GPT-5.4 makes sense as the main default. Sonnet 4.6 makes sense as the Anthropic-native lane. Using both is especially rational for teams that already evaluate providers per workload rather than trying to force every task through one vendor.