The battle between Claude Code and OpenAI Codex has become the defining rivalry in AI-assisted development for 2026. Both tools promise to transform how developers write, debug, and ship code from the terminal, but they approach the problem from fundamentally different directions. Claude Code emphasizes a developer-in-the-loop local workflow with deep codebase understanding and multi-agent orchestration, while Codex prioritizes cloud-based autonomous execution with generous usage limits and broader model flexibility. After examining benchmarks, pricing, architecture, and real developer feedback, the answer to which tool is better turns out to be more nuanced than most comparison articles suggest — and for many developers, the optimal strategy involves using both.

TL;DR

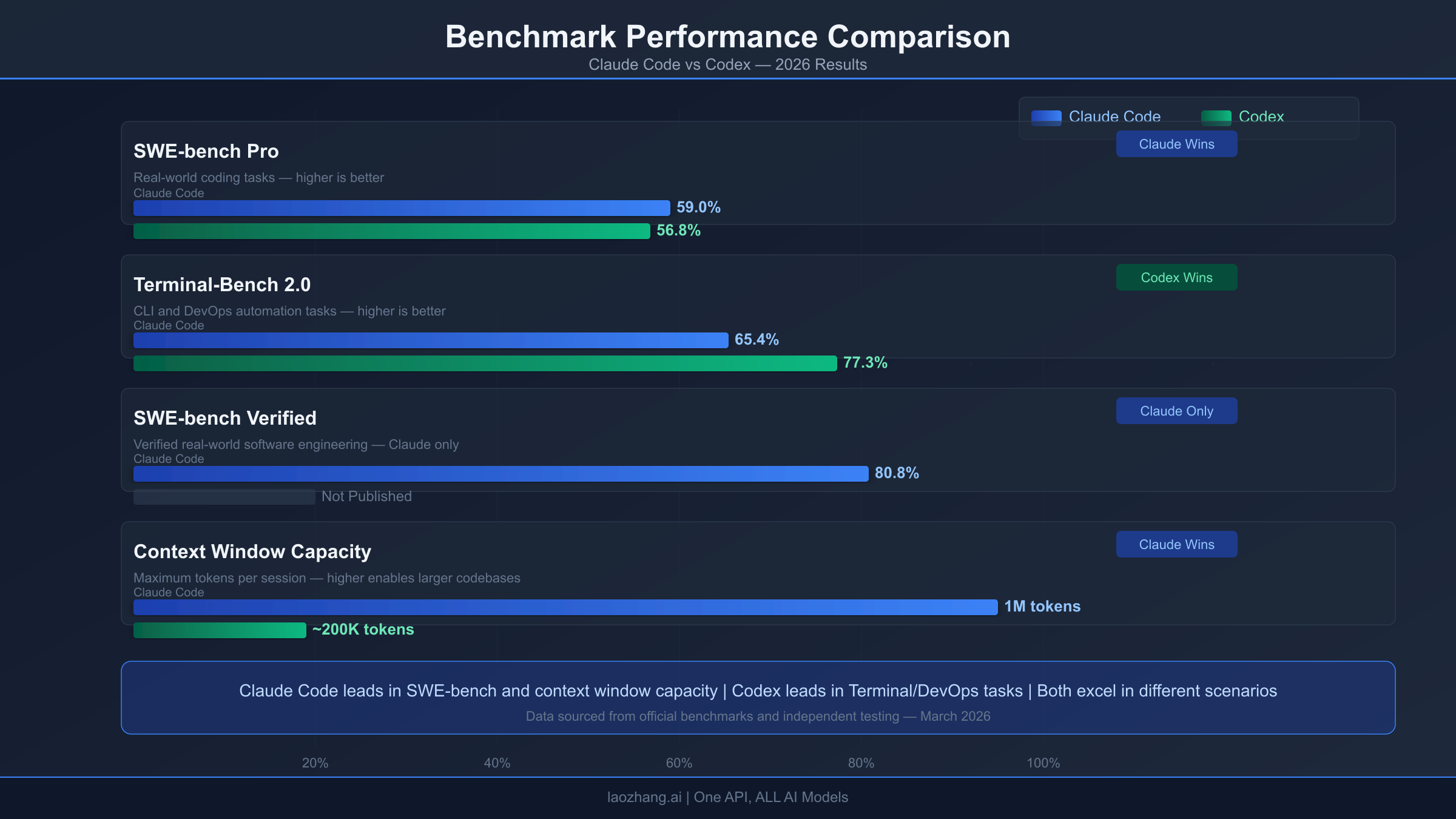

Claude Code wins on code quality, complex reasoning (80.8% SWE-bench Verified), and multi-agent orchestration for large codebases with its 1-million-token context window. Codex wins on terminal-native tasks (77.3% on Terminal-Bench 2.0), token efficiency (3-4x fewer tokens per task), and usage limits at $20/month, and it offers an $8/month entry tier. Both cost $20/month at the standard level, but Codex provides substantially more sessions per dollar. The smartest developers in 2026 are using Claude Code for complex multi-file refactoring and architectural decisions, and Codex for rapid prototyping, script generation, and terminal workflows, spending roughly $40/month total for the best of both worlds.

How Claude Code and Codex Actually Work (Architecture Deep Dive)

Understanding why these tools perform differently requires looking under the hood at their fundamental architecture. The technical decisions each company made about execution environments, safety models, and context handling explain nearly every difference in capability and limitation.

Claude Code operates as a local-first application that runs directly in your terminal and interacts with your file system in real time. When you give Claude Code a task, it reads your actual project files, understands the directory structure, and makes changes directly to your codebase. The tool uses Anthropic's Claude models — primarily Opus 4.6 for complex reasoning and Sonnet 4.6 for faster operations — with a beta 1-million-token context window that can hold enormous codebases in memory simultaneously. Claude Code's configuration lives in CLAUDE.md files, which support layered settings, policy enforcement, and 17 programmable hook events that let developers customize behavior at a granular level. The recently introduced Agent Teams feature allows spawning multiple Claude Code instances that coordinate through shared task lists, dependency tracking, and direct agent messaging — each working in its own git worktree to prevent conflicts.

Codex takes a fundamentally different approach by executing tasks in cloud-based sandboxes. When you submit a task to Codex through the ChatGPT interface or the open-source Rust CLI, each task gets its own isolated cloud environment with independent execution threads. This architectural choice means Codex can run multiple tasks truly in parallel without the risk of one task interfering with another, and it means your local machine's resources are not consumed during execution. Codex reads AGENTS.md — an emerging open standard also adopted by several other tools — for its configuration. The context window is 400,000 tokens, which is substantial but significantly smaller than Claude Code's maximum. Codex primarily uses the GPT-5.3-Codex model optimized specifically for coding tasks, with the ability to switch between GPT-5.4 and other models using the /model command.

The safety architectures represent perhaps the starkest philosophical difference between these two tools, and this difference cascades into how they handle everything from file system access to network requests during code execution. Codex enforces safety at the operating system kernel level with relatively coarse-grained controls — the sandbox prevents any action that could escape the isolated environment, including network access and file system modifications outside the designated workspace. This approach is inherently secure by isolation, meaning even a badly crafted prompt cannot cause Codex to access files or resources it should not touch. Claude Code enforces safety at the application layer through fine-grained programmable hooks, giving developers 17 distinct events where they can inject custom logic, approval workflows, or restrictions. These hooks cover events like pre-tool-use, post-tool-use, notification, and various command execution events, allowing teams to build sophisticated guardrails without losing the flexibility of local file system access.

In practice, this means Codex is simpler to set up securely but less customizable, while Claude Code requires more initial configuration but offers much more flexibility for teams with specific security requirements. A startup with two developers will likely prefer Codex's zero-configuration security model, while an enterprise with compliance requirements around code access patterns and audit trails will benefit from Claude Code's hook-based approach that can enforce custom policies at each step of the agent's workflow. Understanding this trade-off is fundamental to choosing the right tool for your team's security posture.
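To make the application-layer model more concrete, here is a minimal sketch of the kind of check a pre-tool-use hook could run. It assumes the hook is a script that receives a JSON description of the pending tool call and signals rejection through its exit status. The `tool_input` field name, the blocked-path list, and the exit code `2` are illustrative assumptions rather than details taken from this comparison, so verify them against the current hooks documentation before relying on them.

```python
import json
import sys
from typing import Optional

# Hypothetical policy: substrings the agent should never read, write, or run.
BLOCKED_FRAGMENTS = (".env", "secrets/", "id_rsa")


def violation(event: dict) -> Optional[str]:
    """Return a reason if the pending tool call breaks policy, else None.

    The "tool_input" key is an assumed payload field, not a confirmed contract.
    """
    tool_input = event.get("tool_input", {})
    # Inspect every string argument (file paths, shell commands, and so on).
    for value in tool_input.values():
        if isinstance(value, str):
            for fragment in BLOCKED_FRAGMENTS:
                if fragment in value:
                    return f"blocked: tool input references {fragment!r}"
    return None


def run_hook(payload: str) -> int:
    """Parse the JSON payload and return the exit status the hook should use."""
    reason = violation(json.loads(payload))
    if reason is None:
        return 0  # allow the tool call
    print(reason, file=sys.stderr)
    return 2  # assumed convention: this status rejects the tool call
```

A script wired up as a hook would end with `sys.exit(run_hook(sys.stdin.read()))`. The point of the sketch is the design choice: the policy lives in ordinary code that a team can review, test, and version alongside the project, which is what distinguishes this approach from sandbox-level isolation.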

The Benchmark Showdown: What the Numbers Actually Tell Us

Benchmarks are the most frequently cited and most frequently misunderstood aspect of the Claude Code versus Codex comparison. The raw numbers paint a picture, but understanding what each benchmark actually measures is essential for translating scores into real-world expectations.

On SWE-bench Pro, which tests the ability to resolve real GitHub issues from popular open-source projects, Claude Code scores 59.0% compared to Codex at 56.8% (source: morphllm.com, verified March 2026). This approximately two-percentage-point gap is meaningful but not dramatic — it suggests Claude Code has a slight edge in understanding complex, multi-file bug fixes that require deep reasoning about code relationships across an entire project. The SWE-bench Verified variant, which uses a curated subset of higher-quality test cases, shows Claude Code at an impressive 80.8%, though direct comparison is complicated because Codex uses a different variant of this benchmark. If you want a deeper dive into how Claude's Opus 4.6 model compares to GPT-5.3 across all benchmarks, our comprehensive model comparison covers the complete picture.

Terminal-Bench 2.0 tells a very different story. This benchmark specifically measures performance on terminal-native tasks — shell scripting, DevOps automation, system configuration, and command-line tool creation. Here, Codex leads convincingly at 77.3% compared to Claude Code's 65.4%. The nearly twelve-point gap is substantial and reflects Codex's optimization for the specific kinds of tasks that terminal-heavy developers encounter daily. If your workflow revolves around writing bash scripts, configuring CI/CD pipelines, managing containers, or building CLI tools, Codex has a measurable and meaningful advantage.

The benchmark numbers alone, however, miss a critical dimension: token efficiency. Real-world testing by Morph shows that Claude Code consistently uses roughly 3-4x more tokens than Codex to complete identical tasks. A Figma plugin project consumed 6.2 million tokens with Claude Code versus just 1.5 million with Codex, a roughly 4.1x difference. A scheduler application showed 235,000 tokens versus 73,000 (3.2x), and an API integration required 650,000 versus 180,000 tokens (3.6x). This token disparity has profound implications for actual cost that pure benchmark scores completely obscure. Claude Code may produce slightly higher quality output, but it does so at a significantly higher token cost per task, which directly translates into either faster limit exhaustion on subscription plans or higher API bills for BYOK users.
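The multipliers follow directly from the reported token counts. The short sketch below recomputes them; the token figures are the ones quoted above, and the variable and function names are only illustrative.

```python
# Token counts quoted in the text: (Claude Code, Codex).
projects = {
    "Figma plugin": (6_200_000, 1_500_000),
    "Scheduler app": (235_000, 73_000),
    "API integration": (650_000, 180_000),
}


def token_ratio(claude_tokens: int, codex_tokens: int) -> float:
    """How many times more tokens Claude Code used than Codex."""
    return claude_tokens / codex_tokens


ratios = {name: token_ratio(*counts) for name, counts in projects.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x")
# Figma plugin: 4.1x
# Scheduler app: 3.2x
# API integration: 3.6x
```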

Understanding why Claude Code uses more tokens reveals something important about its approach. Claude Code generates more complete, well-documented outputs with extensive inline comments, comprehensive error handling, and careful attention to matching existing code patterns. Codex generates shorter, more functional implementations with less explanation — code that works but requires more human review to fully understand. Neither approach is inherently superior; the right choice depends on whether you value thoroughness and documentation (Claude Code) or speed and efficiency (Codex). For solo developers working on personal projects, Codex's leaner output is often preferable. For teams maintaining production codebases where every commit needs clear documentation, Claude Code's verbosity becomes a genuine advantage.

Beyond benchmarks, adoption data shows how developers are actually voting with their usage. Claude Code currently drives approximately 135,000 GitHub commits daily, representing roughly 4% of all public commits. The Claude Code VS Code extension has accumulated 5.2 million installs with a 4.0/5 rating. Meanwhile, the Codex CLI has garnered 62,365 GitHub stars with 365 active contributors and an average of 1.8 releases per day, signaling extremely rapid development velocity. Both tools have achieved massive real-world adoption, but Claude Code's commit share suggests deeper integration into production workflows, while Codex's star count and contributor base indicate a more vibrant open-source community.

Pricing Deep Dive: The True Cost of Each Tool

Surface-level pricing comparison between Claude Code and Codex appears straightforward — both offer a $20/month tier — but the actual economics diverge significantly once you factor in usage limits, token consumption, and the full range of subscription options.

Codex offers three individual subscription tiers through ChatGPT. The Go plan at $8 per month is the entry point, providing basic Codex access with limited sessions — ideal for developers who use AI assistance occasionally rather than daily. The Plus plan at $20 per month is the standard tier, offering approximately 160 messages every three hours with GPT-5.2, which most individual developers find sufficient for productive daily use. The Pro plan at $200 per month provides a 6x boost in usage limits and is designed for developers who use Codex as their primary coding workflow throughout the workday (source: openai.com, verified March 2026).

Claude Code's subscription tiers start at the Pro plan for $20 per month ($17/month with annual billing at $200 up front), which includes Claude Code access with approximately 45 messages per 5-hour window. The Max plans offer 5x usage at $100 per month or 20x usage at $200 per month (source: claude.com/pricing, verified March 2026). Notably, Claude Code is not available on the free tier — you need at minimum a Pro subscription or an API key, which is a meaningful barrier for developers exploring the tool. For those looking to try Claude Code without commitment, our guide to the Claude Code free tier and workarounds covers every legitimate method including the 30-day Pro trial.

The token economics make the pricing comparison even more interesting. Because Claude Code uses 3-4x more tokens per task, a $20 Claude Pro subscription provides effectively fewer productive sessions than a $20 ChatGPT Plus subscription. Multiple developers on Reddit have described hitting Claude Code limits within hours of intensive use, while Codex Plus users report rarely encountering limits during normal workdays. This disparity has led to what one Reddit user described as the consensus: "Claude Code is higher quality but unusable. Codex is slightly lower quality but actually usable."
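Because the two $20 plans quote their limits over different windows (about 45 messages per 5 hours versus about 160 per 3 hours), it helps to normalize them to a common unit. The sketch below does only that. It treats the approximate limits quoted in this section as exact and ignores differences in message size, so read the result as a rough indication rather than a measurement.

```python
def messages_per_hour(messages: int, window_hours: float) -> float:
    """Normalize a rolling-window message limit to messages per hour."""
    return messages / window_hours


claude_pro = messages_per_hour(45, 5)     # Claude Pro: ~45 messages / 5 hours
chatgpt_plus = messages_per_hour(160, 3)  # ChatGPT Plus: ~160 messages / 3 hours
ratio = chatgpt_plus / claude_pro

print(f"Claude Pro:   {claude_pro:.1f} messages/hour")    # 9.0
print(f"ChatGPT Plus: {chatgpt_plus:.1f} messages/hour")  # 53.3
print(f"Plus allows about {ratio:.1f}x more messages per hour")  # 5.9x
```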

For enterprise and team plans, the comparison reveals additional options. Claude Team costs $20-25 per seat per month for standard seats or $100-125 for premium seats (5x usage), while ChatGPT Team and Enterprise plans offer comparable per-seat pricing with Codex included. The enterprise tier pricing varies based on contract terms, but both companies offer volume discounts for larger deployments. If you need a thorough breakdown of Claude's complete API pricing structure, our API pricing guide covers every tier and model option in detail.

For API-level users who bring their own keys, the math shifts considerably. Claude's Sonnet 4.6 costs $3/$15 per million tokens (input/output), while Codex's codex-mini-latest costs $1.50/$6 per million tokens with a 75% prompt caching discount. That is 2x the input price and 2.5x the output price; combined with Claude's 3-4x higher token consumption, a BYOK Claude Code user might spend roughly 6-10x more per equivalent task than a BYOK Codex user, depending on the input/output mix and before any caching discounts. This calculation matters enormously for teams and heavy individual users. For developers who want to access Claude's models at lower cost, API aggregation platforms like laozhang.ai can provide some savings by consolidating access to multiple providers through a single endpoint.
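The BYOK comparison can be worked through for a single task. The sketch below uses the per-token prices quoted above and the 3-4x token multiplier. The baseline task size (150,000 input and 30,000 output tokens on Codex) is a hypothetical figure chosen for illustration, and prompt caching is ignored, so the exact multiple will shift with the real input/output mix.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """USD per million tokens."""
    input_per_mtok: float
    output_per_mtok: float


SONNET = Price(input_per_mtok=3.00, output_per_mtok=15.00)
CODEX_MINI = Price(input_per_mtok=1.50, output_per_mtok=6.00)


def task_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one task, with no caching discount applied."""
    return (input_tokens * price.input_per_mtok
            + output_tokens * price.output_per_mtok) / 1_000_000


def cost_ratio(token_multiplier: int,
               base_input: int = 150_000,   # hypothetical Codex task size
               base_output: int = 30_000) -> float:
    """Claude Code cost divided by Codex cost for the same task."""
    codex = task_cost(CODEX_MINI, base_input, base_output)
    claude = task_cost(SONNET,
                       base_input * token_multiplier,
                       base_output * token_multiplier)
    return claude / codex


for multiplier in (3, 4):
    print(f"{multiplier}x tokens -> {cost_ratio(multiplier):.1f}x cost")
# 3x tokens -> 6.7x cost
# 4x tokens -> 8.9x cost
```

An output-heavy task pushes the multiple higher, because the output price gap (2.5x) is larger than the input price gap (2x).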

| Feature | Claude Code | OpenAI Codex |
|---|---|---|
| Cheapest plan | $20/mo (Pro) | $8/mo (Go) |
| Standard plan | $20/mo (Pro) | $20/mo (Plus) |
| Premium plan | $100-200/mo (Max) | $200/mo (Pro) |
| Messages at $20 | ~45/5hr | ~160/3hr |
| API input cost | $3/MTok (Sonnet) | $1.50/MTok (codex-mini) |
| API output cost | $15/MTok (Sonnet) | $6/MTok (codex-mini) |
| Tokens per task | 3-4x higher | Baseline |

Developer Experience: Setup, Config, and Daily Workflow

The daily experience of using Claude Code versus Codex reveals differences that benchmark scores and pricing tables cannot capture. These practical distinctions often matter more for developer satisfaction than raw performance metrics.

Setting up Claude Code involves installing the CLI through npm, authenticating with your Anthropic account, and optionally creating CLAUDE.md configuration files in your project roots. The CLAUDE.md system is remarkably powerful — it supports layered configurations (project, user, global), policy enforcement rules, and integration with the 17 programmable hook events for customizing behavior. This flexibility comes at the cost of complexity: getting Claude Code configured optimally for a specific project can take significant experimentation, particularly when tuning how it handles large codebases, manages context windows, and coordinates agent teams. The Claude Code installation guide walks through the complete setup process including configuration best practices.

Codex CLI installation is similarly straightforward — it is a Rust binary that you download or install through a package manager. Authentication uses your OpenAI/ChatGPT credentials. Configuration happens through AGENTS.md files, which follow an emerging open standard that several other tools also support. This interoperability means an AGENTS.md file you write for Codex also works with other tools in the ecosystem, reducing vendor lock-in. The Codex CLI is noticeably lighter and faster to start up than Claude Code, which developers working in rapid iteration cycles appreciate.

The execution model creates the most significant daily workflow difference. Claude Code runs locally and operates on your actual files in real time — you see changes happening as Claude writes them, can interrupt mid-task, and maintain complete control over what gets modified. Codex operates in cloud sandboxes, which means tasks run asynchronously and you receive completed results. This cloud model enables Codex to handle multiple tasks in genuine parallel isolation (not just multi-threading), but it means you cannot watch or intervene while work is in progress. For developers who prefer a pair-programming feel, Claude Code's interactive approach is more natural. For developers who prefer delegating tasks and reviewing results, Codex's async model is more productive.

Context handling represents another critical workflow difference. Claude Code's 1-million-token context window (in beta) can hold massive codebases entirely in memory, which is transformative for understanding and refactoring large projects with deep interdependencies. Codex's 400,000-token context is substantial for most individual tasks but cannot hold an entire large codebase simultaneously. In practice, this means Claude Code excels at tasks that require understanding relationships across many files, while Codex handles individual file or small module tasks with comparable quality and greater speed.
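A quick way to see which side of this divide a project falls on is to estimate its token count. The sketch below walks a source tree and applies a rough four-characters-per-token heuristic. That heuristic, the extension list, and the skipped directories are all assumptions for illustration; real tokenizers vary by model and language, so treat the result as an order-of-magnitude estimate only.

```python
import os
from typing import Dict

CHARS_PER_TOKEN = 4  # rough heuristic (assumption), not a real tokenizer
CONTEXT_WINDOWS = {
    "Claude Code (1M, beta)": 1_000_000,
    "Codex (400K)": 400_000,
}
SOURCE_EXTENSIONS = {".py", ".ts", ".js", ".go", ".rs", ".java"}
SKIPPED_DIRS = {".git", "node_modules"}


def estimate_tokens(root: str) -> int:
    """Estimate the token count of all source files under root."""
    total_chars = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune directories in place so os.walk does not descend into them.
        dirnames[:] = [d for d in dirnames if d not in SKIPPED_DIRS]
        for name in filenames:
            if os.path.splitext(name)[1] in SOURCE_EXTENSIONS:
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total_chars += len(handle.read())
    return total_chars // CHARS_PER_TOKEN


def fits(tokens: int) -> Dict[str, bool]:
    """Report which context windows could hold the given number of tokens."""
    return {name: tokens <= limit for name, limit in CONTEXT_WINDOWS.items()}
```

For example, `fits(estimate_tokens("."))` run from a project root reports whether the whole tree could plausibly fit in each window. In practice the usable budget is smaller, since the conversation and tool output share the same window.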

Model selection flexibility is another area where the tools diverge. Codex lets you switch between GPT-5.4, GPT-5.3-Codex, and other available models using the /model command, giving developers the ability to choose different performance/cost tradeoffs for different tasks within the same session. Claude Code defaults to using Anthropic's models through the official subscription, though it supports custom model providers for developers who want to route requests through alternative services like OpenRouter or local models via Ollama. This means Claude Code can technically access non-Anthropic models, but the experience is optimized for and best with Claude's own model family. For developers who want to understand more about Claude Code's rate limits and how to work within them, our dedicated guide covers strategies for maximizing productive usage.

The broader ecosystem integration also differs meaningfully. Codex benefits from being part of the OpenAI/ChatGPT ecosystem — it shares authentication, billing, and context with ChatGPT, meaning conversations started in the web interface can be continued in the CLI and vice versa. Claude Code is part of Anthropic's Claude ecosystem, integrating with Claude web, desktop, and mobile interfaces, as well as newer features like Cowork, Research, and Skills. For developers already invested in one ecosystem, the integration benefits of staying within that ecosystem are significant and often underestimated when comparing tools in isolation.

When Claude Code Wins: Specific Scenarios

Claude Code demonstrates clear superiority in several well-defined scenarios where its architectural strengths directly translate into better outcomes for developers.

Complex multi-file refactoring is where Claude Code's advantages compound most dramatically. When a task requires understanding how changes to one file ripple through dozens of others — renaming a core interface, restructuring a module hierarchy, or migrating from one framework pattern to another — Claude Code's 1-million-token context window and superior SWE-bench scores combine to produce significantly more reliable results. Codex can handle these tasks, but it frequently misses edge cases in files outside its 400K context window and generates more fixes that require manual correction.

Multi-agent orchestration for large projects is a capability that Codex simply does not match in its current form. Claude Code's Agent Teams feature lets you spawn multiple coordinated agents (one handling tests, another refactoring implementations, a third updating documentation) that work on the same codebase with shared task lists and dependency tracking, each in its own git worktree. This capability turns tasks that would take a single developer days into hours, particularly for comprehensive codebase-wide changes. Anthropic's own case study showed Claude Code building a complete C compiler through agent teams, a project that would have been impractical with a single-agent approach.

Consistent, high-quality code output matters for teams with strict code review standards. Claude Code consistently generates more complete, well-documented implementations that prioritize readability and match existing project patterns. For organizations where code quality standards are non-negotiable and every pull request undergoes thorough review, Claude Code's tendency to produce immediately review-ready code reduces the back-and-forth cycle significantly. Duolingo's engineering team noted that Claude Code's PR reviews caught bugs their human reviewers would have missed, and several teams at major companies have reported similar experiences where Claude Code's thoroughness surfaced subtle issues like backward compatibility breaks and edge cases that faster, less thorough tools consistently overlooked.

Deep codebase understanding and navigation is where Claude Code's larger context window becomes transformative rather than merely incremental. When working with a monorepo or a large application with hundreds of interdependent files, Claude Code can maintain awareness of the entire dependency graph, type system, and API surface simultaneously. This allows it to make changes that are globally consistent — updating every call site when a function signature changes, ensuring type safety across module boundaries, and identifying cascading effects that would be invisible to a tool with less context. Developers working on codebases exceeding 100,000 lines of code consistently report that Claude Code's understanding depth is unmatched by any other AI coding tool, including Codex.

When Codex Wins: Specific Scenarios

Codex demonstrates equally clear advantages in scenarios that align with its architectural strengths, and for many developers these scenarios represent the majority of their daily work.

Terminal-native workflows represent Codex's strongest domain, as reflected in its 77.3% Terminal-Bench score versus Claude Code's 65.4%. Writing shell scripts, configuring server environments, building CLI tools, managing Docker containers, and automating CI/CD pipelines all benefit from Codex's optimization for terminal operations. Developers whose primary work involves DevOps, infrastructure, or system administration will find Codex measurably more capable and reliable for their daily tasks.

Rapid prototyping and greenfield projects benefit from Codex's combination of speed and generous usage limits. When you are iterating quickly on a new idea — building a proof of concept, scaffolding a new application, or exploring different implementation approaches — Codex's lower token consumption and higher message limits mean you can iterate 3-4x more frequently within the same subscription. The cloud sandbox model also means each prototype attempt runs in a clean environment, eliminating the "works on my machine" problem during early development.

Budget-conscious development is where Codex's economic advantages are most pronounced. The $8/month Go tier has no Claude Code equivalent, making Codex the only option for developers who want AI coding assistance at the absolute lowest cost. Even at the $20/month level, Codex's significantly higher message limits and lower token consumption per task mean more productive work per dollar. For freelancers, students, and developers in regions where $20/month is a significant expense, Codex delivers substantially more value per subscription dollar. The open-source Codex CLI with 62,365 GitHub stars and 365 active contributors also represents a thriving ecosystem that extends the tool's capabilities through community-built extensions and integrations.

Team standardization on OpenAI's ecosystem is a practical advantage for organizations already invested in ChatGPT Enterprise or Team plans. Codex integrates seamlessly with existing OpenAI subscriptions, shares authentication and billing, and requires no additional vendor relationship management. For enterprises where procurement complexity is a real concern, adding Codex to an existing OpenAI contract is far simpler than onboarding a new vendor for Claude Code.

Speed and responsiveness in interactive workflows give Codex an edge in sessions where developers are thinking fast and iterating rapidly. The Rust-based Codex CLI starts up faster, and Codex's models generally return results more quickly for routine coding tasks. This speed advantage compounds across a full day of development — saving even 5 seconds per interaction across hundreds of daily interactions translates into meaningful time savings. For developers who treat their AI coding assistant like a conversation partner during active development, this responsiveness makes Codex feel more fluid and less interruptive than Claude Code, which occasionally pauses for extended thinking on tasks that Codex handles instantly. The Codex ecosystem's 1.8 releases per day average also means the tool is improving at a pace that few other software products can match, with new capabilities and bug fixes arriving almost continuously.

The Hybrid Strategy: Why the Best Developers Use Both

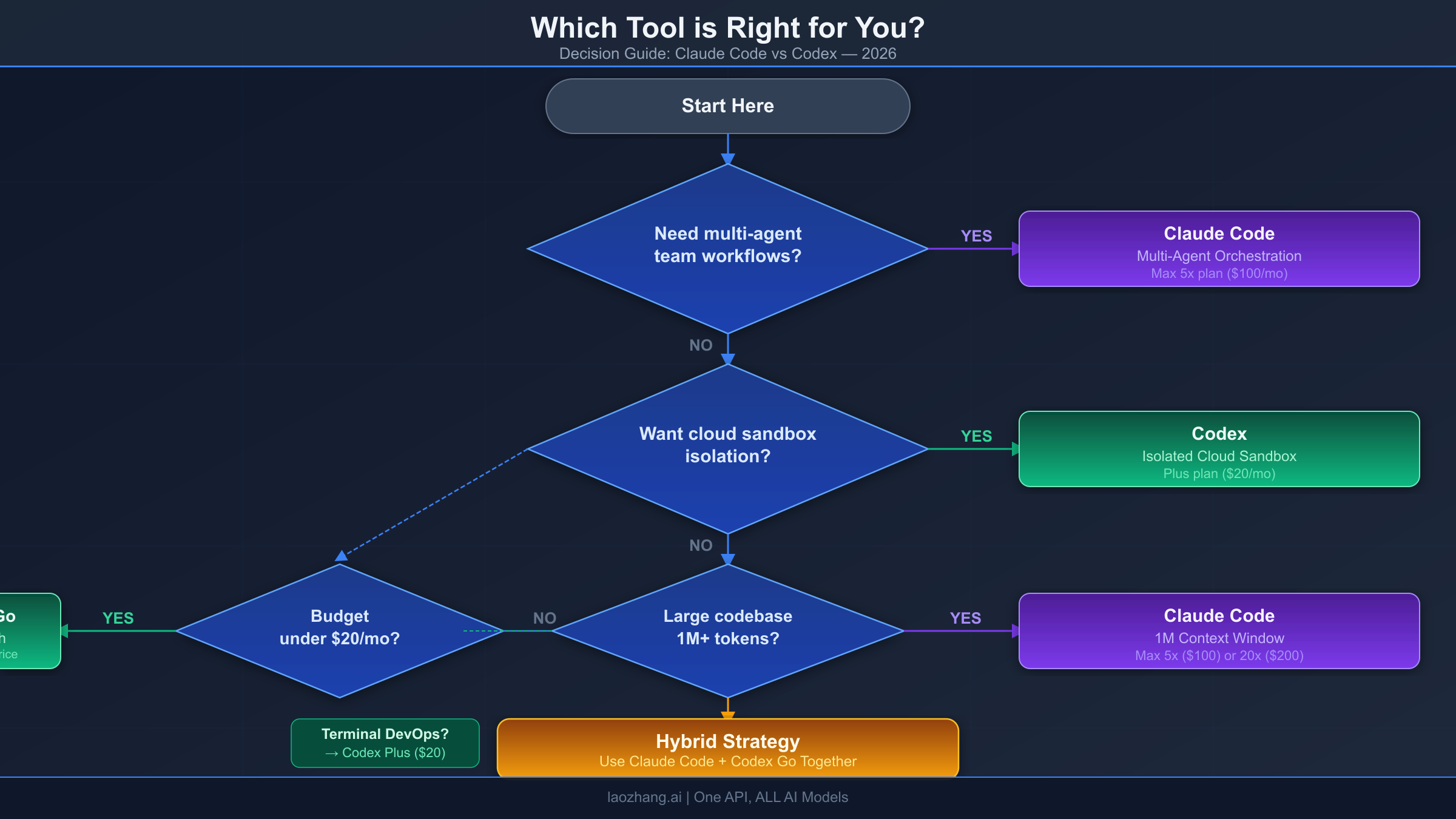

The most productive developers in 2026 are not choosing between Claude Code and Codex — they are using both tools strategically, selecting whichever is better suited for each specific task. This hybrid approach maximizes quality while optimizing cost and acknowledging that each tool has genuine strengths the other cannot match.

The practical hybrid workflow looks like this: use Codex for rapid prototyping in isolated cloud sandboxes, generating boilerplate, writing scripts, and handling terminal-native tasks where its speed and generous limits shine. Then switch to Claude Code for complex multi-file refactoring, architectural decisions requiring deep codebase understanding, coordinated agent team workflows, and final code quality polishing where its superior reasoning and larger context window deliver measurably better results.

At $20 per month for ChatGPT Plus and $20 per month for Claude Pro, the combined $40/month investment gives you the best AI coding assistance available in 2026 across all task types. Developers who have adopted this approach report significantly higher productivity than those locked into a single tool, because they stop trying to force one tool to handle tasks where the other excels. The key insight from the Reddit developer community — surveyed across 500+ responses on r/ClaudeAI and r/ChatGPT — is that tool loyalty is counterproductive when both options have genuine, measurable advantages in different domains.

For teams and enterprises, the hybrid approach requires slightly more configuration management (maintaining both CLAUDE.md and AGENTS.md files) but delivers flexibility that single-vendor solutions cannot match. Several engineering teams have reported establishing conventions where Codex handles routine tickets and bug fixes automatically while Claude Code handles architectural reviews and complex feature implementations that benefit from multi-agent coordination. This division of labor maximizes the strengths of each tool while minimizing the impact of their respective weaknesses.

The cost-benefit case for the hybrid approach is straightforward. A developer who subscribes to both Claude Pro ($20/month) and ChatGPT Plus ($20/month) spends $40/month total, one fifth of the $200/month that either Claude Max 20x or ChatGPT Pro costs alone. The argument is that this developer, using each tool for the tasks it handles best, can complete more work at higher quality than one relying on a single premium plan, because specialization beats generalization. Using Codex for, say, 70% of daily tasks (where its speed and efficiency dominate) and Claude Code for the remaining 30% (where its reasoning depth is essential) should produce better aggregate outcomes than forcing either tool to handle 100% of tasks. This insight, that the optimal AI coding setup in 2026 may cost $40/month across two tools rather than $200/month on one, is perhaps the most practical recommendation this comparison can offer.

Frequently Asked Questions

Which is better for beginners, Claude Code or Codex?

Codex is generally more accessible for beginners due to its lower $8/month entry point, simpler configuration (AGENTS.md is less complex than CLAUDE.md), and more generous usage limits that allow more experimentation. Claude Code's setup requires more initial configuration and its tighter usage limits can be frustrating while learning. That said, both tools are well-documented and have active communities.

Can I use both Claude Code and Codex on the same project?

Yes, and many developers do. Both tools can read and modify the same codebase since they operate on standard files and directories. You can maintain both CLAUDE.md and AGENTS.md configuration files in the same repository. The main consideration is avoiding having both tools modify the same files simultaneously, which could create conflicts.

Does Claude Code or Codex produce better code quality?

Claude Code generally produces more complete, well-documented code that matches existing project patterns more closely. Codex produces shorter, working implementations with less explanation but uses 3-4x fewer tokens. For production code that needs to pass rigorous code review, Claude Code has an edge. For rapid prototyping and iteration, Codex's speed advantage matters more than marginal quality differences.

Is the $20/month worth it for either tool?

For professional developers who code daily, absolutely. Both tools routinely save hours per week in debugging, boilerplate generation, and code understanding tasks. The question is which $20 subscription provides more value for your specific workflow — Codex if you primarily do terminal work and rapid iteration, Claude Code if you primarily do complex refactoring and need deep codebase understanding. At $40/month for both, the combined value exceeds what either delivers alone.

Will Codex replace Claude Code or vice versa?

Unlikely in the near term. Both companies are investing heavily in their respective approaches, and the architectural differences (local-first vs cloud sandbox, application-layer vs kernel-layer safety) reflect fundamentally different philosophies about how AI coding agents should work. Competition between the two is driving rapid improvement in both tools, benefiting developers regardless of which they prefer. The market is large enough to support multiple excellent options, and the emergence of open-source alternatives like Aider, Cline, and OpenCode further ensures no single tool will dominate completely.

How do Claude Code and Codex handle security and data privacy?

The approaches are fundamentally different. Claude Code runs locally and operates on your files on your own machine, with code sent to Anthropic's servers only as needed for model inference. Pro users can opt out of having their data used for training. Codex runs tasks in cloud sandboxes, meaning your code is uploaded to OpenAI's infrastructure for execution. Both companies offer enterprise plans with stronger privacy guarantees, but developers working on proprietary or sensitive codebases should carefully evaluate each tool's data handling policies before adoption. Claude Code's local-first approach provides a privacy advantage in that execution and file access stay on your machine, though any code the model reads is still transmitted for inference.

What about alternatives to both Claude Code and Codex?

The AI coding agent market has expanded significantly. Gemini CLI offers 1,000 free requests per day with a 1-million-token context window, making it the most generous free option available. Aider is a mature open-source tool that supports any LLM provider through BYOK and integrates deeply with Git. Cline is a VS Code extension with over 5 million installs that offers Claude Code-like capabilities within your editor. OpenCode specifically targets developers who want a Claude Code alternative with support for 75+ model providers. Amazon Q Developer provides free AWS-aware coding assistance. For most developers, however, the Claude Code plus Codex combination remains the highest-quality option — the alternatives are better suited as supplements or as free options for developers who cannot justify the subscription costs. If you are curious about how Claude Code compares with open-source alternatives like OpenClaw, that comparison provides additional context on the open-source landscape.