GPT-5.4 nano is usually the better cheap OpenAI API model for new high-volume workloads. Keep GPT-5 mini only if you already run a stable prompt stack on it, specifically need its higher Tier 1 throughput ceiling, or have migration risk that matters more than the upgrade.

The practical decision is simple. If you are launching a new low-cost route for classification, extraction, ranking, or tool-using helper agents, start with GPT-5.4 nano because it is cheaper, much fresher, and better matched to current workflows. GPT-5 mini still fits as a legacy holdover for stable text-heavy traffic, not as the default cheap lane for a 2026 build. Its main surviving advantage is operational continuity, not a stronger default product position.

TL;DR

| Model | Best for | Main reason to choose it | Main reason not to choose it |
|---|---|---|---|
| GPT-5.4 nano | New low-cost classification, extraction, ranking, and simple subagent work | Cheaper than GPT-5 mini, far newer cutoff, broader tool support, and better current official coding/tool evals | Lower Tier 1 TPM and weaker than GPT-5 mini on a few multimodal or computer-use-style evals |
| GPT-5 mini | Legacy text-heavy traffic with proven prompt behavior and Tier 1 throughput pressure | Higher Tier 1 TPM and an already-known production profile | Older cutoff, higher cost than nano, narrower tool surface, and no longer the official default direction for new small-model routing |

If you want one decision rule, use this one: choose GPT-5.4 nano for new cheap API work unless you have a measured reason to keep GPT-5 mini.

Why This Comparison Is More Lopsided Than The Names Suggest

This comparison looks simple, but the naming makes it easy to frame it the wrong way.

Many readers see "GPT-5 mini" and assume it must sit above "GPT-5.4 nano" because "mini" sounds like a larger or stronger tier than "nano." In the current OpenAI lineup, that intuition no longer works. The latest GPT-5.4 guide says that for smaller, faster variants you should start with gpt-5.4-mini or gpt-5.4-nano. The current GPT-5 mini model page goes even further: for most new low-latency, high-volume workloads, OpenAI recommends starting with GPT-5.4 mini instead.

That matters because it changes how you should interpret GPT-5 mini. It is still live, still priced, and still usable. But it is no longer the forward-looking cheap default in OpenAI's own routing story. It is the older small-model branch that some teams may keep for legacy reasons.

GPT-5.4 nano, meanwhile, is not just a bargain-bin fallback. OpenAI explicitly recommends it for classification, data extraction, ranking, and simpler coding subagents. Those are not edge cases. They are exactly the kinds of jobs many teams assign to a cheap lane in a modern API stack.

So this is not really a fight between two current low-cost defaults. It is a comparison between a newer cheap model that OpenAI actively positions for fresh high-volume workloads and an older mini model that still survives for narrower operational reasons.

Pricing, Freshness, Tools, and Rate Limits Side by Side

Price is the first thing most teams check, and here the numbers already lean toward GPT-5.4 nano.

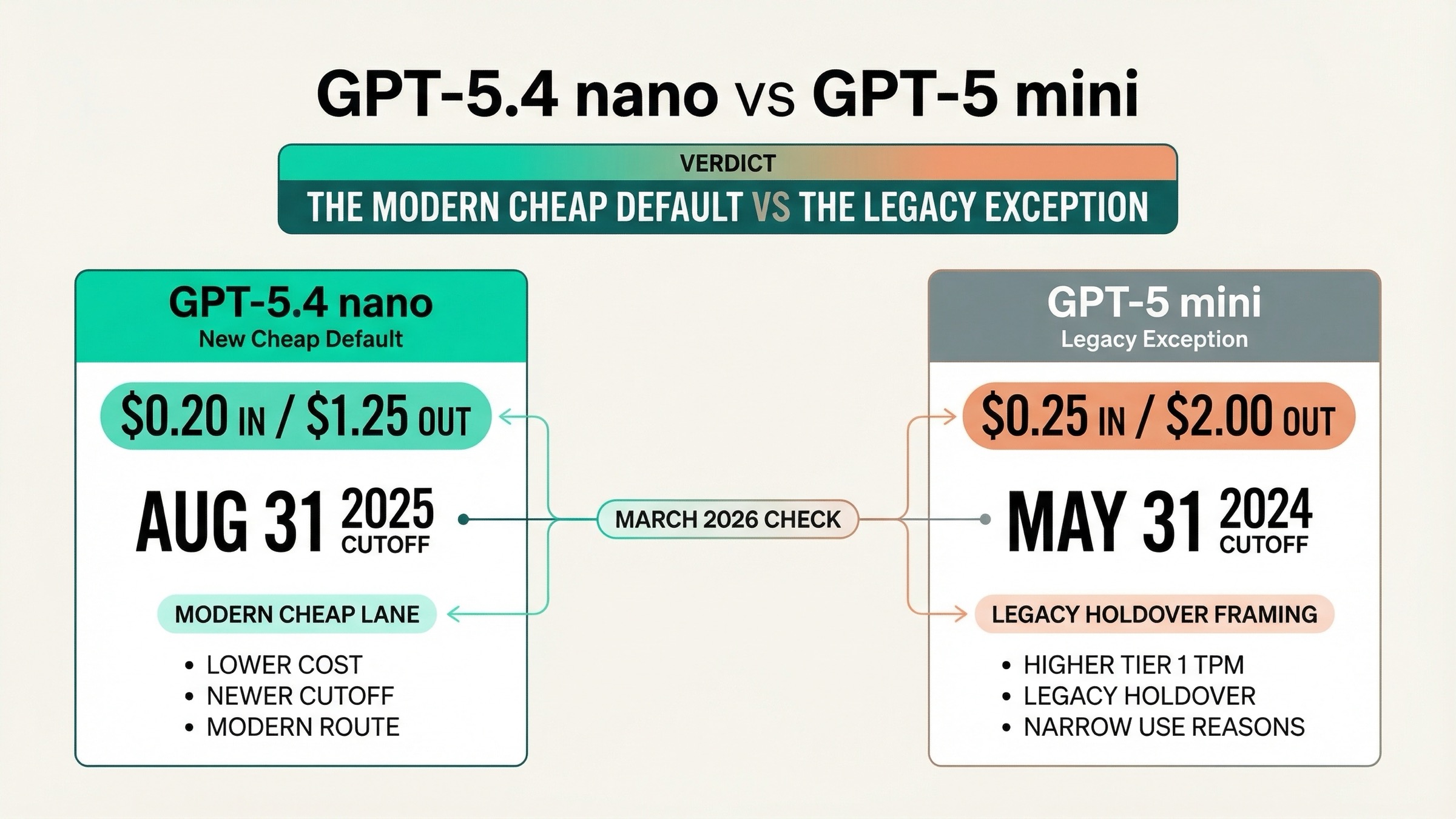

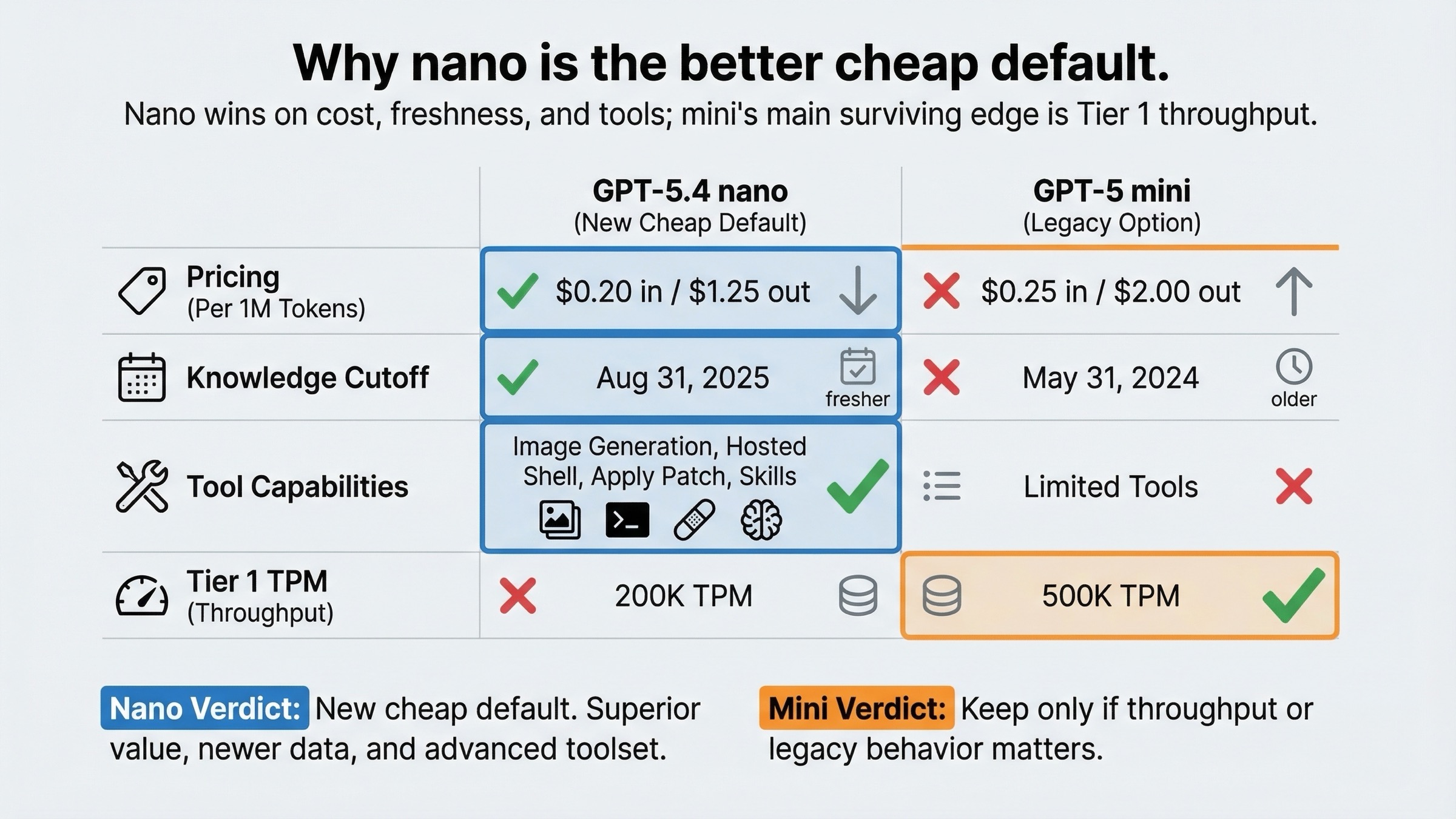

According to the current model pages checked on March 21, 2026, GPT-5.4 nano costs $0.20 per 1M input tokens, $0.02 cached input, and $1.25 per 1M output tokens. GPT-5 mini costs $0.25 per 1M input tokens, $0.025 cached input, and $2.00 per 1M output tokens. So GPT-5 mini is not just older. It is also more expensive than GPT-5.4 nano on every token price that most teams care about.
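To make the price gap concrete, here is a minimal sketch that turns the per-token prices quoted above into a monthly estimate. The workload size and the cache-hit fraction are hypothetical; substitute your own numbers.

```python
# Prices in USD per 1M tokens, copied from the figures quoted above
# (model pages as checked on March 21, 2026).
PRICES = {
    "gpt-5.4-nano": {"input": 0.20, "cached_input": 0.02, "output": 1.25},
    "gpt-5-mini": {"input": 0.25, "cached_input": 0.025, "output": 2.00},
}


def monthly_cost(model, input_tokens, output_tokens, cached_fraction=0.0):
    """Estimate spend in USD for a given token volume."""
    price = PRICES[model]
    cached = input_tokens * cached_fraction
    uncached = input_tokens - cached
    total = (
        uncached * price["input"]
        + cached * price["cached_input"]
        + output_tokens * price["output"]
    )
    return total / 1_000_000


# Hypothetical workload: 2B input tokens and 200M output tokens per month,
# with half of the input served from cache.
for name in PRICES:
    print(name, round(monthly_cost(name, 2_000_000_000, 200_000_000, 0.5), 2))
```

Under those assumed volumes the estimate comes to roughly $470 for GPT-5.4 nano and $675 for GPT-5 mini, with most of the difference coming from the output price.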

The second big difference is freshness. GPT-5.4 nano lists an Aug 31, 2025 knowledge cutoff. GPT-5 mini lists May 31, 2024. That is not a small gap. If your cheap lane touches recent APIs, newer libraries, or 2025-era product changes, GPT-5.4 nano starts with a meaningfully better baseline even before prompting strategy enters the picture.

The third difference is tools. GPT-5.4 nano currently supports web search, file search, image generation, code interpreter, hosted shell, apply patch, skills, and MCP. GPT-5 mini supports web search, file search, code interpreter, and MCP, but does not support image generation, hosted shell, apply patch, skills, computer use, or tool search on its current model page. For plain text tasks, you may not care. For any low-cost worker that still needs useful tools, that gap changes the architecture.
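One way to apply the tool comparison above is to check a planned worker's requirements against each model's listed tools. This is only a sketch: the tool names are plain labels taken from the paragraph above, not API identifiers, and the `required` set is a hypothetical example.

```python
# Tool lists as described in the paragraph above (labels, not API identifiers).
NANO_TOOLS = {
    "web search", "file search", "image generation", "code interpreter",
    "hosted shell", "apply patch", "skills", "MCP",
}
MINI_TOOLS = {"web search", "file search", "code interpreter", "MCP"}


def missing_tools(required, supported):
    """Return the required tools that a model does not list, sorted by name."""
    return sorted(set(required) - set(supported))


# Hypothetical worker that edits files and runs commands.
required = {"file search", "apply patch", "hosted shell"}
print(missing_tools(required, NANO_TOOLS))  # []
print(missing_tools(required, MINI_TOOLS))  # ['apply patch', 'hosted shell']
```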

The one obvious operational advantage for GPT-5 mini is throughput on lower paid tiers. On the current compare-models page, Tier 1 TPM is 500,000 for GPT-5 mini versus 200,000 for GPT-5.4 nano. That does not automatically make GPT-5 mini the better buy, but it is the clearest reason some teams may keep it for stable, throughput-sensitive traffic.
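As a rough illustration of what that TPM gap means, the sketch below converts the Tier 1 limits into a request ceiling. It assumes a hypothetical average of 1,500 tokens counted per request and ignores any separate requests-per-minute limit, so treat the result as an upper bound.

```python
# Tier 1 tokens-per-minute limits quoted above.
TIER1_TPM = {"gpt-5.4-nano": 200_000, "gpt-5-mini": 500_000}


def max_requests_per_minute(model, tokens_per_request):
    """Upper bound on requests per minute if TPM is the only constraint."""
    return TIER1_TPM[model] // tokens_per_request


# Hypothetical average request size of 1,500 tokens.
for name in TIER1_TPM:
    print(name, max_requests_per_minute(name, 1_500))
```

With that assumed request size, the ceiling is 133 requests per minute on GPT-5.4 nano and 333 on GPT-5 mini.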

| Spec | GPT-5.4 nano | GPT-5 mini |
|---|---|---|
| Input price | $0.20 / 1M tokens | $0.25 / 1M tokens |
| Cached input | $0.02 / 1M tokens | $0.025 / 1M tokens |
| Output price | $1.25 / 1M tokens | $2.00 / 1M tokens |
| Context window | 400,000 | 400,000 |
| Max output | 128,000 | 128,000 |
| Knowledge cutoff | Aug 31, 2025 | May 31, 2024 |
| Snapshot shown on model page | gpt-5.4-nano-2026-03-17 | gpt-5-mini-2025-08-07 |
| Image generation tool | Yes | No |
| Hosted shell | Yes | No |
| Apply patch | Yes | No |
| Skills | Yes | No |
| Computer use | No | No |
| Tool search | No | No |
| Tier 1 TPM | 200,000 | 500,000 |
| Tier 5 TPM | 180,000,000 | 180,000,000 |

That table is why the current search results for this comparison feel incomplete. If you only look at the model names, GPT-5 mini sounds like the safer or stronger cheap choice. If you actually compare cost, cutoff, and tools, GPT-5.4 nano looks like the better default for most new simple workloads.

What The March 17, 2026 Official Benchmarks Actually Mean

The launch post is where this comparison becomes more interesting.

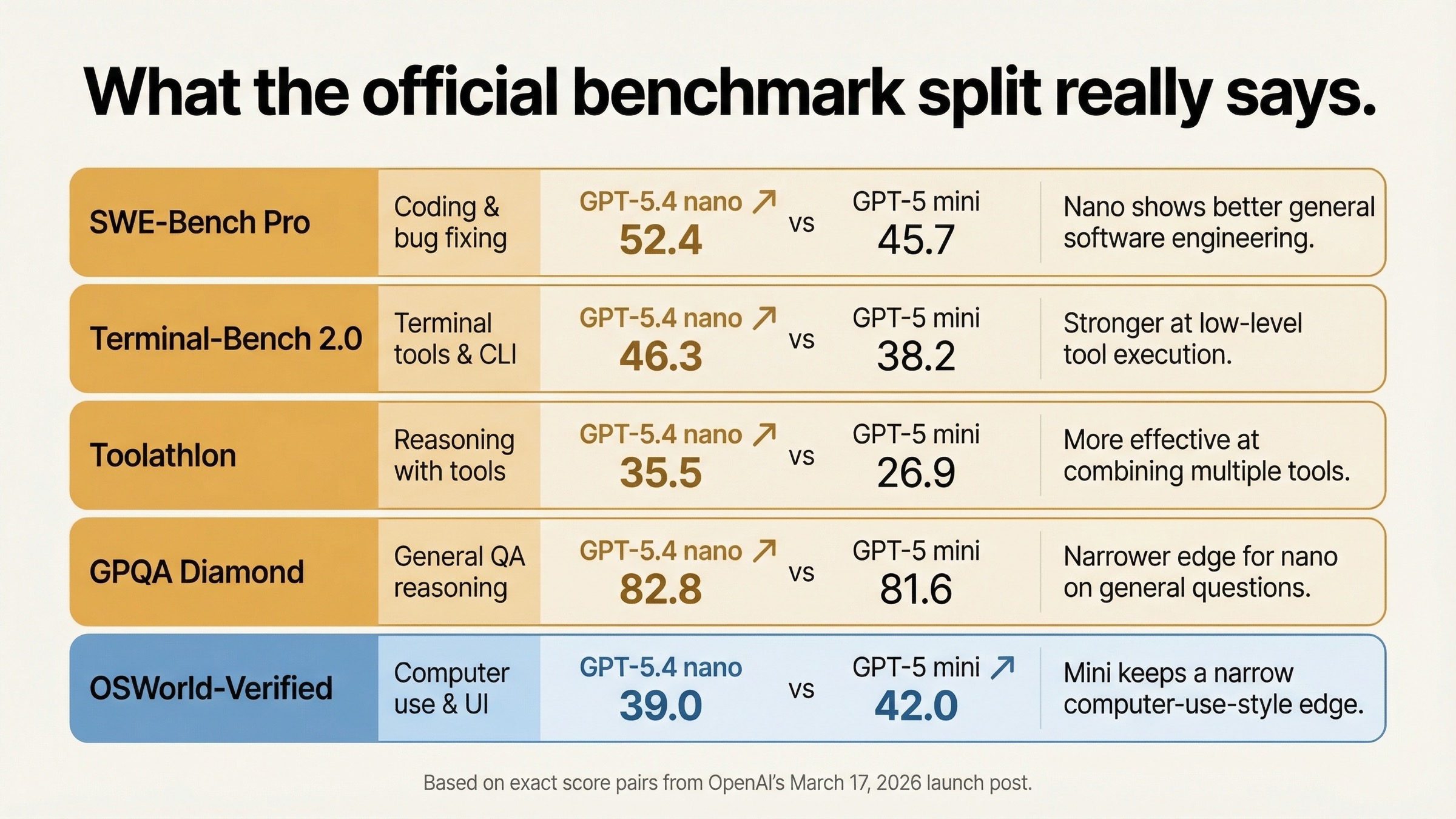

OpenAI's March 17, 2026 release table does not show GPT-5.4 nano as merely "cheaper but weaker." In several places, it shows GPT-5.4 nano outperforming GPT-5 mini on the exact kinds of work teams often assign to low-cost worker models: coding, tool use, and long-context retrieval.

| Benchmark from OpenAI's March 17, 2026 post | GPT-5.4 nano | GPT-5 mini | Why it matters |
|---|---|---|---|
| SWE-Bench Pro (Public) | 52.4% | 45.7% | Nano is stronger on real coding issue resolution |
| Terminal-Bench 2.0 | 46.3% | 38.2% | Nano is stronger on terminal-style tool workflows |
| Toolathlon | 35.5% | 26.9% | Nano is stronger on tool-use reliability |
| GPQA Diamond | 82.8% | 81.6% | Nano still edges mini on general high-end reasoning |
| OpenAI MRCR v2 128K-256K | 33.1% | 19.4% | Nano is better once prompts become genuinely large |
| OSWorld-Verified | 39.0% | 42.0% | GPT-5 mini keeps a small edge on this computer-use-style eval |

Three things matter here.

First, the broad direction is clear. GPT-5.4 nano is ahead of GPT-5 mini on more of the high-value rows than many buyers would expect. That means it is not only a cheaper replacement candidate. It is often a capability upgrade at the cheap end as well.

Second, GPT-5 mini still has a few real wins. It scores better on OSWorld-Verified, better on MMMU-Pro with Python, and better on Graphwalks parents in the same launch post. So the honest reading is not "nano wins everything." The honest reading is that GPT-5.4 nano wins more of the rows most cheap coding and tool workers actually care about, while GPT-5 mini still has narrower pockets where it performs better.

Third, you should read the table as a current product comparison, not as a clean architecture lab test. OpenAI notes that the highest reasoning_effort available for GPT-5 mini in that table is high, while the GPT-5.4 models are shown at xhigh. That does not make the comparison useless. It means the table reflects the best currently exposed settings for each product surface, which is exactly how many real buyers evaluate the models anyway.

The biggest practical takeaway is simple: if your cheap lane touches coding, tool use, or retrieval-heavy prompts, GPT-5.4 nano is not merely "good enough for the price." It is often the better model, full stop.
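For readers who want the gaps as numbers, this short sketch computes the leader and the point difference for each row of the benchmark table above. The scores are copied from that table; nothing else is assumed.

```python
# (nano, mini) scores in percent, copied from the benchmark table above.
SCORES = {
    "SWE-Bench Pro (Public)": (52.4, 45.7),
    "Terminal-Bench 2.0": (46.3, 38.2),
    "Toolathlon": (35.5, 26.9),
    "GPQA Diamond": (82.8, 81.6),
    "OpenAI MRCR v2 128K-256K": (33.1, 19.4),
    "OSWorld-Verified": (39.0, 42.0),
}


def summarize(scores):
    """Return (benchmark, leader, gap in points) for each row."""
    rows = []
    for bench, (nano, mini) in scores.items():
        leader = "GPT-5.4 nano" if nano > mini else "GPT-5 mini"
        rows.append((bench, leader, round(abs(nano - mini), 1)))
    return rows


for bench, leader, gap in summarize(SCORES):
    print(f"{bench}: {leader} by {gap} points")
```

On these six rows, GPT-5.4 nano leads five and GPT-5 mini leads one (OSWorld-Verified, by 3.0 points).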

When GPT-5.4 Nano Is The Better Choice

GPT-5.4 nano is the better choice when the cheap lane is being designed today rather than defended from the past.

The clearest case is structured high-volume work. OpenAI recommends GPT-5.4 nano for classification, data extraction, ranking, and simpler subagents. Those are exactly the tasks where teams want low cost, low latency, and still enough reasoning to avoid brittle prompt stacks. Because nano is cheaper than GPT-5 mini and also newer, there is little reason to start a fresh extraction or ranking service on the older model by default.
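A classification worker of that kind can be quite small. The sketch below assumes the official `openai` Python SDK and its Responses API (`client.responses.create` and `response.output_text`); the prompt wording, the `classify` helper, and the labels are hypothetical, and the client is passed in so the function can be tested with a stub.

```python
def classify(client, text, labels, model="gpt-5.4-nano"):
    """Ask the model for exactly one label; return None if it answers off-list.

    `client` is assumed to expose the Responses API of the `openai` SDK.
    """
    prompt = (
        "Classify the text into exactly one of these labels: "
        + ", ".join(labels)
        + ".\nReply with the label only.\n\nText: "
        + text
    )
    response = client.responses.create(model=model, input=prompt)
    answer = response.output_text.strip().lower()
    # Map the normalized answer back to the caller's original label spelling.
    by_lower = {label.lower(): label for label in labels}
    return by_lower.get(answer)
```

With the real SDK, usage would look something like `classify(OpenAI(), "I was charged twice", ["billing", "bug"])` after `from openai import OpenAI`. Returning `None` for off-list answers keeps malformed outputs visible instead of silently miscounting them.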

The second case is lightweight coding support. GPT-5.4 nano is not marketed as the premium coding small model. That is GPT-5.4 mini's job. But the official launch data still shows nano ahead of GPT-5 mini on SWE-Bench Pro, Terminal-Bench, and Toolathlon. If the cheap lane in your system handles support edits, triage, narrow code transforms, or tool-heavy helper tasks, nano is the more defensible place to begin.

The third case is modern tool-enabled workers. Hosted shell, apply patch, skills, and image generation support matter if you are building useful low-cost workers instead of plain text endpoints. GPT-5 mini's thinner tool surface makes it feel older not only in name but in workflow fit.

The fourth case is new product architecture. If your plan is to pair one premium lane with one cheap lane, the current OpenAI routing logic points toward the GPT-5.4 family, not toward reviving GPT-5 mini as the default budget branch. If you later realize your cheap lane needs stronger reasoning or computer use, your next step is usually the GPT-5.4 mini vs GPT-5 mini comparison, not deeper commitment to GPT-5 mini.

Use GPT-5.4 nano if most of the following are true:

- You are building a new cheap lane for classification, extraction, ranking, or helper-agent work.

- You want the lowest token cost among the two models in this comparison.

- You care about newer world knowledge and a more current product branch.

- You want more tool flexibility than GPT-5 mini currently offers.

- You are comfortable trading away GPT-5 mini's higher Tier 1 TPM.

That last bullet matters. Nano is the better default, but it is not the better answer for every traffic pattern.

When GPT-5 Mini Still Makes Sense

GPT-5 mini still makes sense when you have a specific operational reason to keep it, not when you are choosing blindly from names.

The strongest case is a stable legacy deployment. If GPT-5 mini is already in production, the prompts are tuned, the behavior is measured, and the business is happy, you do not need to replace it just because a newer cheap model exists. Migration has a cost. If the cheap lane is deeply wired into your product, you should benchmark before swapping.

The second case is Tier 1 throughput pressure. GPT-5 mini's 500,000 TPM ceiling at Tier 1 is materially higher than GPT-5.4 nano's 200,000 TPM. If you are operating at that lower paid tier and pushing a lot of small requests, that difference can be operationally important. It is the cleanest current reason to keep GPT-5 mini around.

The third case is a narrow fit to its remaining benchmark pockets. GPT-5 mini still does a little better than GPT-5.4 nano on some multimodal and computer-use-style evals in the March 17 table. That does not automatically override nano's tool and freshness advantages. But if your measured workload resembles those tasks more than it resembles cheap coding or extraction, you should test instead of assuming.

There is also a softer reason to keep GPT-5 mini temporarily: production confidence. Teams often value the model they already know. That is a real engineering preference, not just inertia. But it only stays defensible if the difference is measured. If you have not compared your actual workload on GPT-5.4 nano, you are defending a guess.

Keep GPT-5 mini only if most of these are true:

- You already run it in production and changing the cheap lane has real migration cost.

- Tier 1 throughput matters enough that 500,000 TPM versus 200,000 TPM changes your routing math.

- Your workload is mostly plain text rather than tool-enabled helper work.

- Bench tests on your real prompts do not show enough quality or cost advantage from moving to GPT-5.4 nano.

That is a much narrower list than "cost-sensitive low-latency work" in general. And that narrowing is the whole point of the article.
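The "bench tests on your real prompts" item can start as something very simple. This is a minimal sketch of a side-by-side check, not a full evaluation framework: `compare_models` and the two runner callables are hypothetical names, and the stand-in runners exist only so the example executes offline.

```python
def compare_models(cases, run_nano, run_mini):
    """Score two candidate models on the same labeled prompts.

    cases: list of (prompt, expected_answer) pairs from your own traffic.
    run_nano / run_mini: callables that take a prompt and return an answer.
    """
    result = {"nano_correct": 0, "mini_correct": 0, "disagreements": []}
    for prompt, expected in cases:
        nano_answer = run_nano(prompt)
        mini_answer = run_mini(prompt)
        result["nano_correct"] += int(nano_answer == expected)
        result["mini_correct"] += int(mini_answer == expected)
        if nano_answer != mini_answer:
            result["disagreements"].append((prompt, nano_answer, mini_answer))
    return result


# Stand-in runners so the sketch executes offline; replace with real API calls.
cases = [("refund request", "billing"), ("app crashes on login", "bug")]
fake_nano = lambda prompt: "billing" if "refund" in prompt else "bug"
fake_mini = lambda prompt: "billing"
print(compare_models(cases, fake_nano, fake_mini))
```

Reviewing the disagreements by hand is often more informative than the raw counts, because it shows which kinds of prompts would change behavior after a migration.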

API vs ChatGPT: Do Not Mix This Up

This comparison invites confusion between product surfaces: people search for model names, then land on pages about ChatGPT visibility instead of API routing.

The current OpenAI Help Center article about GPT-5.3 and GPT-5.4 in ChatGPT describes the visible picker as GPT-5.3 Instant, GPT-5.4 Thinking, and GPT-5.4 Pro. That is a different question from which API model you should call. ChatGPT fallback behavior, usage limits, and visible labels are not the same as API routing advice.

That distinction matters here because GPT-5.4 nano is API-only in the March 17 launch post, while GPT-5 mini is documented on an API model page and is not a current ChatGPT picker option. If you are deciding how to build or migrate an API workflow, the model pages, launch post, and latest-model guide matter more than whatever a consumer-facing ChatGPT article happens to emphasize.

If you are just getting started on the API side, set up your environment first with our OpenAI API key guide. If you are deciding how to keep one premium lane and one cheap lane at the same time, read GPT-5.4 vs GPT-5 mini after this page. That second comparison answers the "do I keep a flagship lane too?" question more directly.

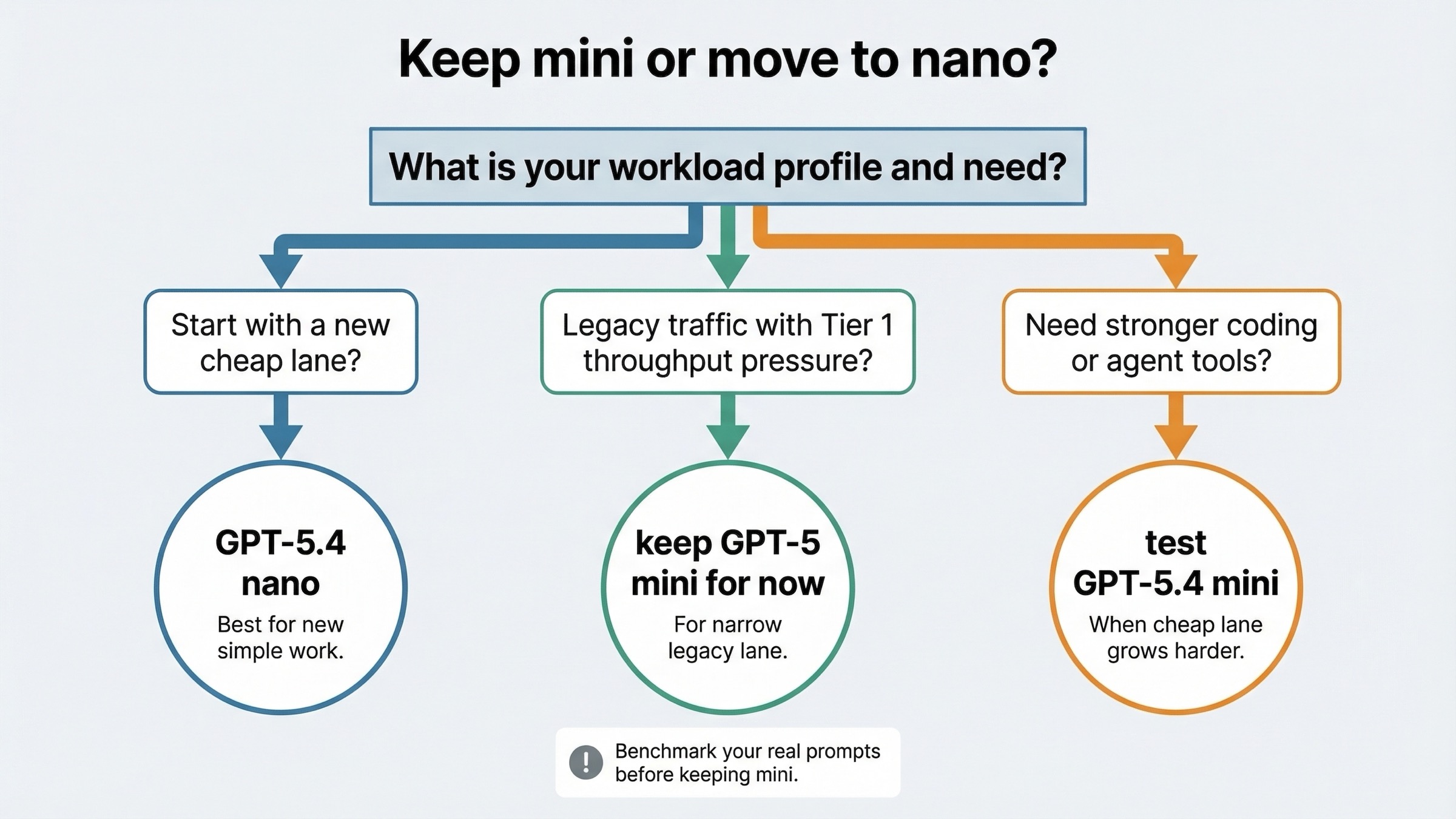

My migration rule is simple:

- For any new cheap workload, test GPT-5.4 nano first.

- Keep GPT-5 mini only if your real prompts show a throughput or stability reason to keep it.

- If the cheap lane needs stronger coding or agent capability, move the evaluation to GPT-5.4 mini instead of assuming GPT-5 mini is the next-best branch.
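The migration rule above can be written down as a small routing helper. This is only a sketch of the rule as stated: the function name and the two boolean flags are hypothetical, and the return value is the model to evaluate first, not a guarantee of fit.

```python
def pick_cheap_model(needs_stronger_agent_capability, measured_reason_to_keep_mini):
    """Return the model ID to evaluate first for a cheap lane."""
    if needs_stronger_agent_capability:
        # Move the evaluation up to GPT-5.4 mini rather than back to GPT-5 mini.
        return "gpt-5.4-mini"
    if measured_reason_to_keep_mini:
        # Only applies when real prompts show a throughput or stability reason.
        return "gpt-5-mini"
    return "gpt-5.4-nano"


print(pick_cheap_model(False, False))  # gpt-5.4-nano
print(pick_cheap_model(False, True))   # gpt-5-mini
print(pick_cheap_model(True, True))    # gpt-5.4-mini
```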

FAQ

Is GPT-5 mini deprecated?

No. As of March 21, 2026, GPT-5 mini still has a live model page, live pricing, and live rate limits. The practical issue is not deprecation. It is that GPT-5 mini no longer looks like the default cheap direction for new OpenAI API work.

Does GPT-5.4 nano replace GPT-5 mini for every workload?

No. GPT-5 mini still makes sense for some legacy traffic, especially if you already trust its production behavior or care a lot about its higher Tier 1 TPM ceiling. But for new cheap workloads, GPT-5.4 nano is usually the better starting point.

What is the strongest reason to keep GPT-5 mini?

The strongest current reason is operational rather than conceptual: higher Tier 1 throughput plus a known prompt stack you may not want to disturb yet. That is a better reason than simply assuming mini should be stronger because of the name.

When should I compare GPT-5.4 nano to GPT-5.4 mini instead?

When your cheap lane is starting to do more coding, more tool use, or more agent-style work than a simple helper model should handle. In that case, GPT-5.4 mini vs GPT-5 mini is usually the more important follow-up comparison.

The shortest honest conclusion is this: GPT-5.4 nano is usually the right cheap default for new OpenAI API work in 2026, and GPT-5 mini is now the exception case you keep only when a narrow legacy reason is real.