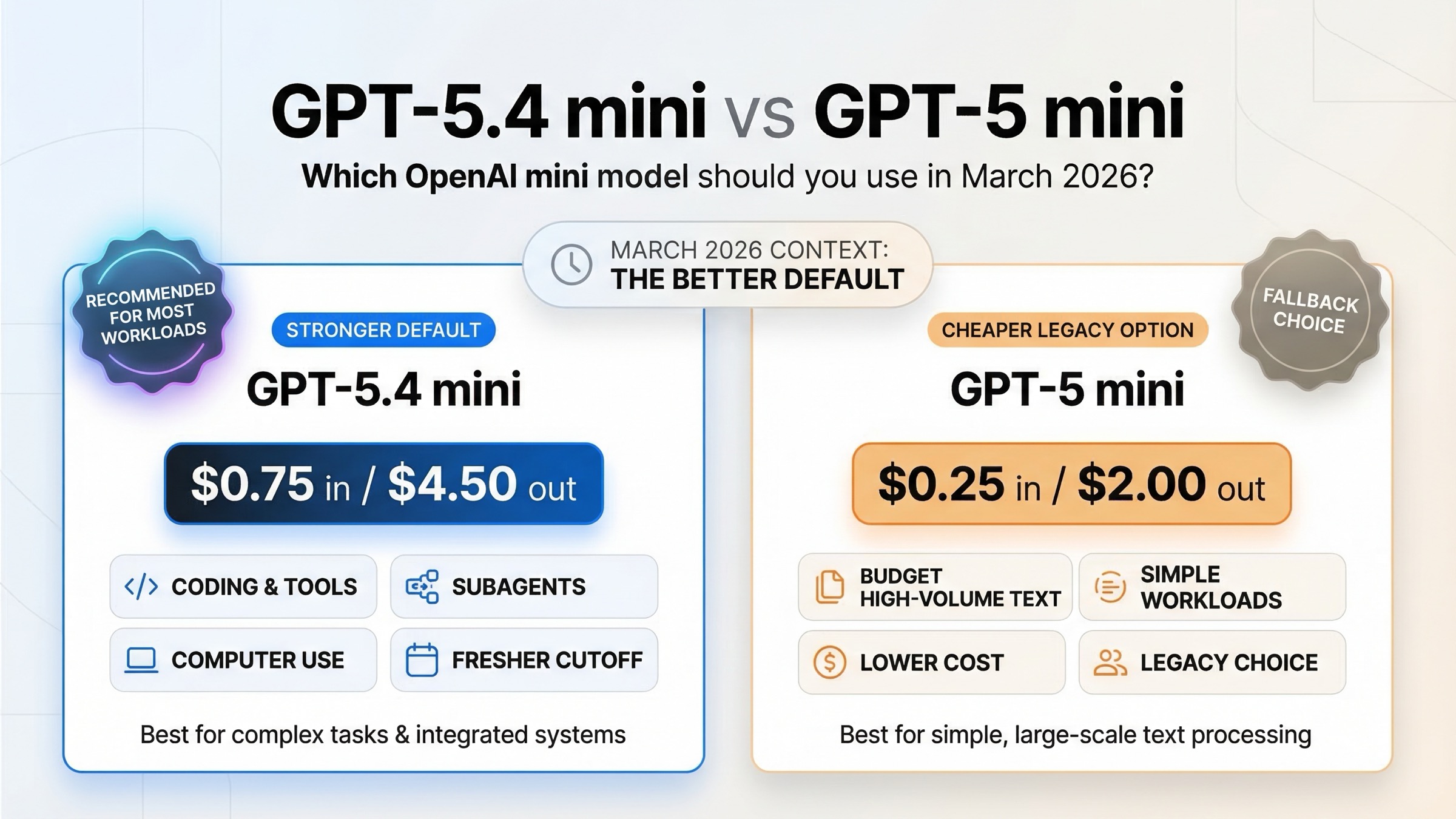

As of March 20, 2026, GPT-5.4 mini is the model most teams should choose for new low-latency OpenAI API workflows. It is more expensive than GPT-5 mini, but OpenAI's March 17, 2026 launch post says GPT-5.4 mini improves over GPT-5 mini across coding, reasoning, multimodal understanding, and tool use while running more than 2x faster. The current GPT-5 mini model page also says that for most new low-latency, high-volume workloads, OpenAI recommends starting with GPT-5.4 mini instead.

That does not mean GPT-5 mini is useless. If you already run a large volume of simpler text-heavy requests and your top priority is cost containment, GPT-5 mini can still be rational. The real question is whether your workflow benefits enough from GPT-5.4 mini's broader tool stack, newer cutoff, and stronger coding and computer-use performance to justify the higher bill.

TL;DR

If you want the short answer, here it is: choose GPT-5.4 mini for most new builds, and choose GPT-5 mini only when the workload is stable, tool-light, and strongly cost-sensitive.

| Model | Best for | Main reason to choose it | Main reason not to choose it |
|---|---|---|---|
| GPT-5.4 mini | New coding assistants, agent tools, Codex-style subagents, screenshot-heavy flows | Better benchmarks, newer cutoff, broader tool support, and OpenAI's current recommendation | Costs more: $0.75 in / $4.50 out per 1M tokens |
| GPT-5 mini | Existing high-volume text pipelines and budget-sensitive legacy usage | Lower price: $0.25 in / $2.00 out per 1M tokens | Older model, weaker tool stack, and weaker results on the March 2026 official comparison |

The simplest decision rule is this:

- If you are starting a new OpenAI API product in 2026, default to GPT-5.4 mini.

- If your system depends on computer use, hosted shell, apply patch, skills, or tool-search-style agent loops, GPT-5.4 mini is the safer pick.

- If your workload is mostly plain text classification, routing, or cheap high-volume generation, the price gap may matter more than the capability gap.

- If you are choosing based on ChatGPT model names, stop and separate that from API model choice. ChatGPT availability and API recommendations are not the same surface.

What Actually Changed From GPT-5 mini to GPT-5.4 mini

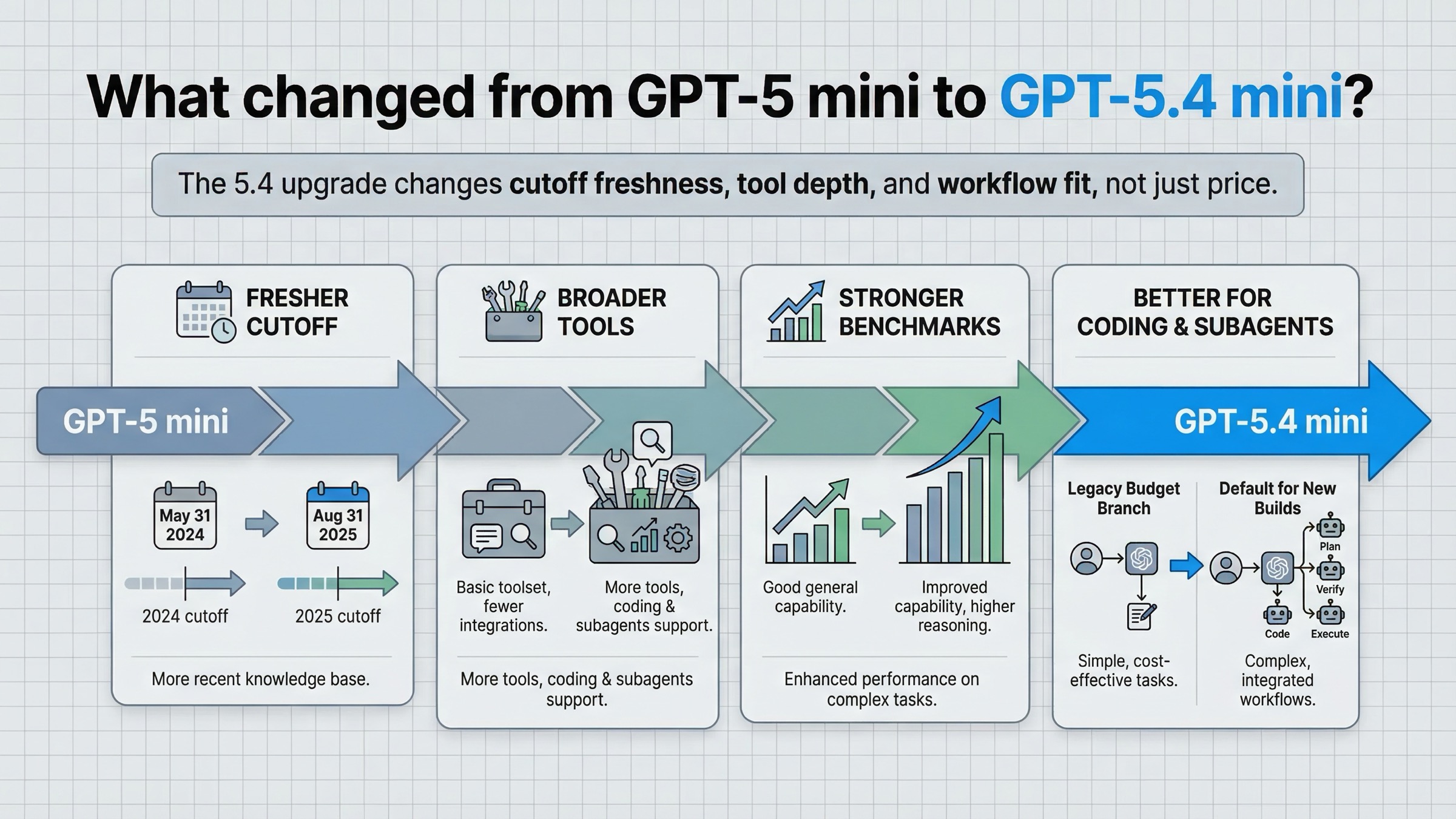

The easiest way to misread this comparison is to assume GPT-5.4 mini is just a minor point release. It is not. OpenAI positioned it as the small-model upgrade for coding, computer use, and subagents, not as a cosmetic refresh of GPT-5 mini.

In the official March 17, 2026 announcement for GPT-5.4 mini and nano, OpenAI makes three points that matter more than the name change.

First, GPT-5.4 mini is explicitly framed as the strongest mini model yet for coding, computer use, and subagents. That is stronger positioning than "cheaper GPT-5." It tells you the product goal changed from general small-model reasoning to a more agent-ready small model.

Second, OpenAI says GPT-5.4 mini improves over GPT-5 mini across coding, reasoning, multimodal understanding, and tool use while running more than 2x faster. For buyers, that is the center of gravity of the whole comparison. You are not paying more just for freshness. You are paying for a newer small model that is supposed to land better on real coding and tool-driven work.

Third, the official model cards show a bigger workflow gap than many readers expect. GPT-5.4 mini keeps the same 400K context window and 128K max output limit as GPT-5 mini, so the headline difference is not context. The difference is what the model can do with that context.

Here is the quick before-and-after in practical terms:

| Area | GPT-5 mini | GPT-5.4 mini | Why it matters |
|---|---|---|---|
| Positioning | Cheap small GPT-5 reasoning model | Strongest mini model for coding, computer use, and subagents | New builds should treat 5.4 mini as the active small-model line |
| Knowledge cutoff | May 31, 2024 | Aug 31, 2025 | GPT-5.4 mini is substantially fresher |
| Tool stack | Web search, file search, code interpreter, MCP | Web search, file search, image generation, code interpreter, hosted shell, apply patch, skills, computer use, MCP, tool search | The upgrade is much bigger for agent workflows than for plain chat |
| Official recommendation | Legacy but still available | Current default small-model recommendation | OpenAI now points new low-latency workloads here |

That last line is why this article can be direct. When the legacy model's own page tells developers to start with the newer mini for most new low-latency, high-volume workloads, the burden of proof shifts. GPT-5 mini is no longer the default recommendation. It is the exception case.

Pricing, Context, and Tool Support Side by Side

For many teams, the decision starts with price and then gets filtered through capability. That is the right instinct, but the raw price delta can be misleading if you do not compare it to the tool and workflow delta.

According to the current GPT-5.4 mini model page, GPT-5.4 mini costs $0.75 per 1M input tokens and $4.50 per 1M output tokens. According to the current GPT-5 mini model page, GPT-5 mini costs $0.25 per 1M input tokens and $2.00 per 1M output tokens.

So yes, GPT-5.4 mini is about 3x the input price and 2.25x the output price. That is not a rounding error. But price is only the beginning of the comparison.
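To see what those multipliers mean for a real bill, here is a minimal sketch that prices one month of traffic on both models. The per-token prices are the ones quoted above; the workload volume is a hypothetical placeholder, the model keys are just labels for this example, and the calculation ignores cached-input discounts.

```python
# Prices in USD per 1M tokens, copied from the figures quoted above.
PRICES = {
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
    "gpt-5-mini": {"input": 0.25, "output": 2.00},
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a month's traffic at list price (no caching)."""
    price = PRICES[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


# Hypothetical workload: 2B input tokens and 300M output tokens per month.
workload = {"input_tokens": 2_000_000_000, "output_tokens": 300_000_000}

new_cost = monthly_cost("gpt-5.4-mini", **workload)
old_cost = monthly_cost("gpt-5-mini", **workload)
print(f"GPT-5.4 mini: ${new_cost:,.2f}")
print(f"GPT-5 mini:   ${old_cost:,.2f}")
print(f"Blended multiplier: {new_cost / old_cost:.2f}x")
```

Whatever volumes you plug in, the blended multiplier lands between 2.25x (all output) and 3x (all input), so an input-heavy workload feels the price gap more than an output-heavy one.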

| Spec | GPT-5.4 mini | GPT-5 mini |
|---|---|---|
| Input price | $0.75 / 1M tokens | $0.25 / 1M tokens |
| Cached input | $0.08 / 1M tokens | $0.025 / 1M tokens |
| Output price | $4.50 / 1M tokens | $2.00 / 1M tokens |
| Context window | 400K | 400K |
| Max output | 128K | 128K |
| Knowledge cutoff | Aug 31, 2025 | May 31, 2024 |
| Snapshot shown on model page | gpt-5.4-mini-2026-03-17 | gpt-5-mini-2025-08-07 |

On context length alone, the models look similar. That is why many quick comparison pages stop too early. If you only compare price and context, GPT-5 mini looks like the bargain. But once you compare what the models support in tool-heavy environments, the story changes.

| Capability | GPT-5.4 mini | GPT-5 mini |
|---|---|---|
| Web search | Yes | Yes |
| File search | Yes | Yes |
| Image generation tool | Yes | No |
| Code interpreter | Yes | Yes |
| Hosted shell | Yes | No |
| Apply patch | Yes | No |
| Skills | Yes | No |
| Computer use | Yes | No |
| MCP | Yes | Yes |
| Tool search | Yes | No |
| Distillation | Yes | No |

This is the real dividing line. If your system is basically a smart completion engine with a few simple tools, GPT-5 mini can still be enough. If your system looks anything like a modern coding agent, UI agent, or delegated subagent pipeline, GPT-5.4 mini is on a different branch of the product tree.
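One way to make that dividing line concrete is to write down the tools your product needs and check them against the capability table. The sketch below does that with plain sets. The tool keys are labels invented for this example, not official API identifiers, and the Distillation row is left out because it is not a tool your requests call.

```python
# Tool support per model, transcribed from the capability table above.
# The string keys are illustrative labels, not official API tool names.
SHARED = {"web_search", "file_search", "code_interpreter", "mcp"}
TOOLS = {
    "gpt-5-mini": set(SHARED),
    "gpt-5.4-mini": SHARED | {
        "image_generation", "hosted_shell", "apply_patch",
        "skills", "computer_use", "tool_search",
    },
}


def missing_tools(model: str, required: set[str]) -> set[str]:
    """Return the required tools that the given model does not support."""
    return required - TOOLS[model]


def cheapest_capable(required: set[str]) -> str:
    """Prefer the cheaper GPT-5 mini whenever it covers every required tool."""
    for model in ("gpt-5-mini", "gpt-5.4-mini"):  # ordered cheapest first
        if not missing_tools(model, required):
            return model
    raise ValueError(f"no listed model supports: {sorted(required)}")


print(cheapest_capable({"web_search", "mcp"}))
print(cheapest_capable({"web_search", "apply_patch"}))
```

A check like this only answers the capability question. It says nothing about how well each model uses the tools it does support, which is what the benchmark section is for.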

The other under-discussed difference is freshness. A May 31, 2024 knowledge cutoff versus an Aug 31, 2025 cutoff is a real gap when your users ask about current libraries, newer APIs, documentation drift, or 2025 product changes. Even with web search available, a fresher base model usually means less recovery work in prompts and fewer mistaken assumptions about the current state of a library or API.

Benchmark Deltas That Actually Matter

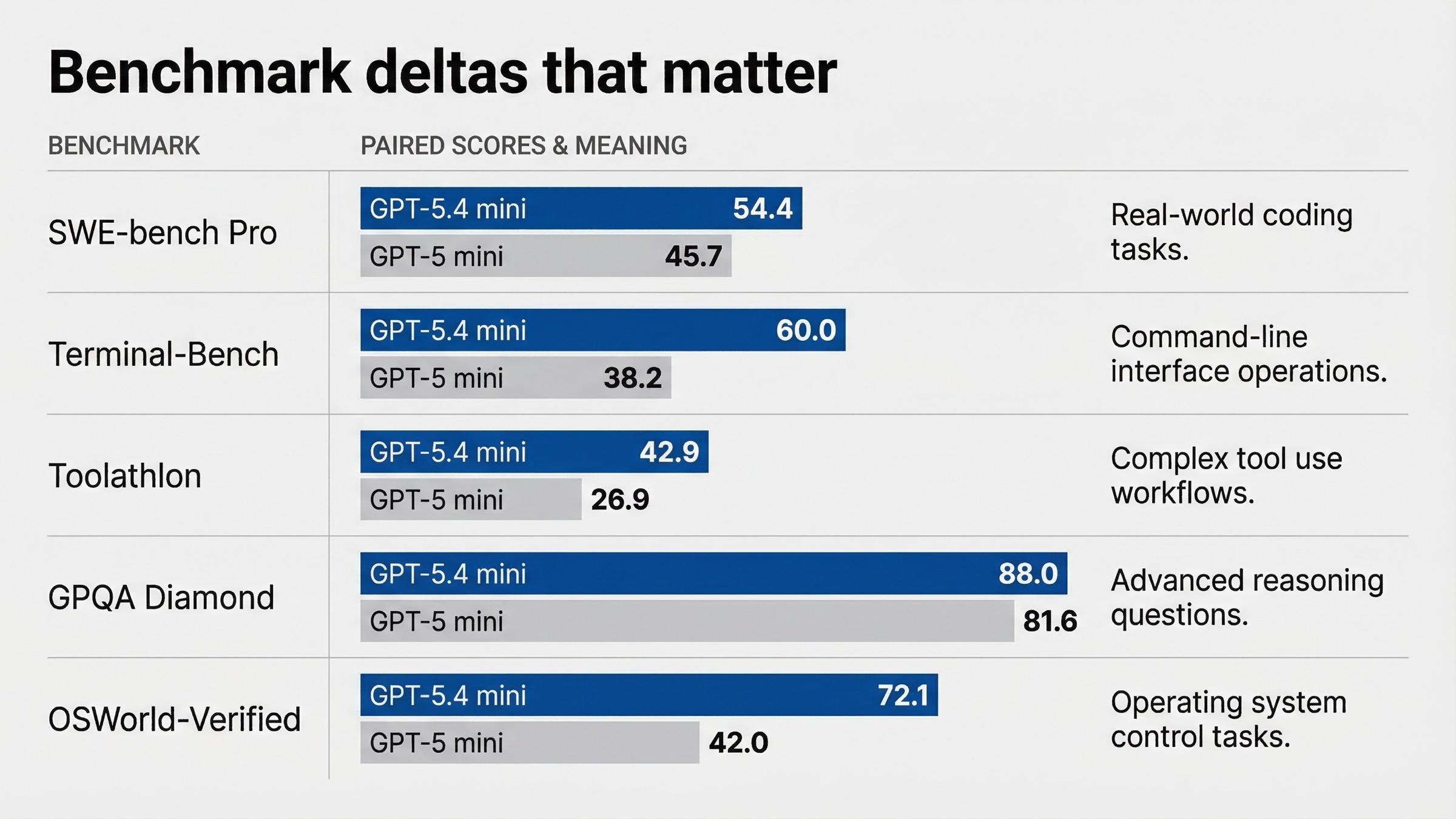

Benchmark tables are useful only if they answer a buying question. The official March 2026 release post is valuable because it compares GPT-5.4 mini directly against GPT-5 mini in the exact places where developers care: coding, tool use, intelligence, computer use, and long context.

| Benchmark from OpenAI's March 17, 2026 post | GPT-5.4 mini | GPT-5 mini | Why you should care |
|---|---|---|---|
| SWE-bench Pro (Public) | 54.4% | 45.7% | Better on real software issue resolution |
| Terminal-Bench 2.0 | 60.0% | 38.2% | Stronger in terminal-style tool execution |
| Toolathlon | 42.9% | 26.9% | Better at tool use reliability |
| GPQA Diamond | 88.0% | 81.6% | Better general high-end reasoning |
| OSWorld-Verified | 72.1% | 42.0% | Much stronger for computer-use workflows |
| OpenAI MRCR v2 128K-256K | 33.6% | 19.4% | Better once the prompt gets genuinely large |

Three takeaways matter more than the raw scores.

The first is that GPT-5.4 mini is not winning by tiny margins. The coding and tool deltas are large enough to change product behavior, especially on Terminal-Bench, Toolathlon, and OSWorld-Verified. If your workflow is a coding assistant, UI operator, or anything close to Codex-style orchestration, this is not a cosmetic improvement.
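If you want to see the size of those margins at a glance, a short script can rank the benchmarks by percentage-point gap. This sketch uses only the scores from the table above and adds no new data.

```python
# (GPT-5.4 mini, GPT-5 mini) scores in percent, from the table above.
SCORES = {
    "SWE-bench Pro (Public)": (54.4, 45.7),
    "Terminal-Bench 2.0": (60.0, 38.2),
    "Toolathlon": (42.9, 26.9),
    "GPQA Diamond": (88.0, 81.6),
    "OSWorld-Verified": (72.1, 42.0),
    "OpenAI MRCR v2 128K-256K": (33.6, 19.4),
}

# Percentage-point gap per benchmark, rounded to one decimal place.
deltas = {name: round(new - old, 1) for name, (new, old) in SCORES.items()}

# Print the benchmarks from largest gap to smallest.
for name, delta in sorted(deltas.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:<26} +{delta:.1f} pts")
```

Ranked this way, the three largest gaps are OSWorld-Verified (30.1 points), Terminal-Bench 2.0 (21.8), and Toolathlon (16.0), while GPQA Diamond is the smallest at 6.4.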

The second is that GPT-5.4 mini is not just a better coding model. It is also better on general high-end reasoning and long-context retrieval in the official comparison. That means the newer model buys you a broader safety margin, not just sharper code edits.

The third is that benchmark wins are only worth paying for if they map to your actual product. A team doing millions of short classification calls does not automatically need a better Terminal-Bench score. A team building agentic code review, test repair, or screenshot interpretation almost certainly does.

One footnote matters when you read the official table closely. OpenAI says the highest reasoning_effort available for GPT-5 mini in that launch comparison is high, while GPT-5.4 mini was evaluated at xhigh. That means the benchmark table is not a perfectly symmetric "same knob, same level" bake-off. It is still useful because it reflects the best currently exposed settings for each model, but careful buyers should treat it as a current product comparison, not a pure architecture lab test.
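If you rerun a comparison yourself, it helps to make that asymmetry explicit in configuration rather than leave it implicit. The sketch below only builds request bodies and sends nothing. It assumes a request shape with a nested `reasoning.effort` field and uses short model aliases as keys; treat both as assumptions to check against the current API reference.

```python
# Highest reasoning effort per model, as described in the launch comparison.
# The model aliases used as keys are assumptions for this example.
MAX_EFFORT = {"gpt-5-mini": "high", "gpt-5.4-mini": "xhigh"}


def build_request(model: str, prompt: str) -> dict:
    """Build a request body that pins a model to its highest listed effort."""
    return {
        "model": model,
        "input": prompt,
        # Assumed field layout; verify against the API reference before use.
        "reasoning": {"effort": MAX_EFFORT[model]},
    }


for model in MAX_EFFORT:
    print(build_request(model, "Summarize this stack trace."))
```

For a same-knob comparison, you could instead pin both models to `high`, the highest level the two have in common.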

This is exactly where most quick comparison pages are weak. They show the numbers, but they do not say which numbers should move your budget decision. The rule of thumb is simple:

- If your workflow depends on tools, coding, or UI interpretation, benchmark gains matter a lot.

- If your workflow is mostly cheap text output at scale, the benchmark gains may not justify the higher price.

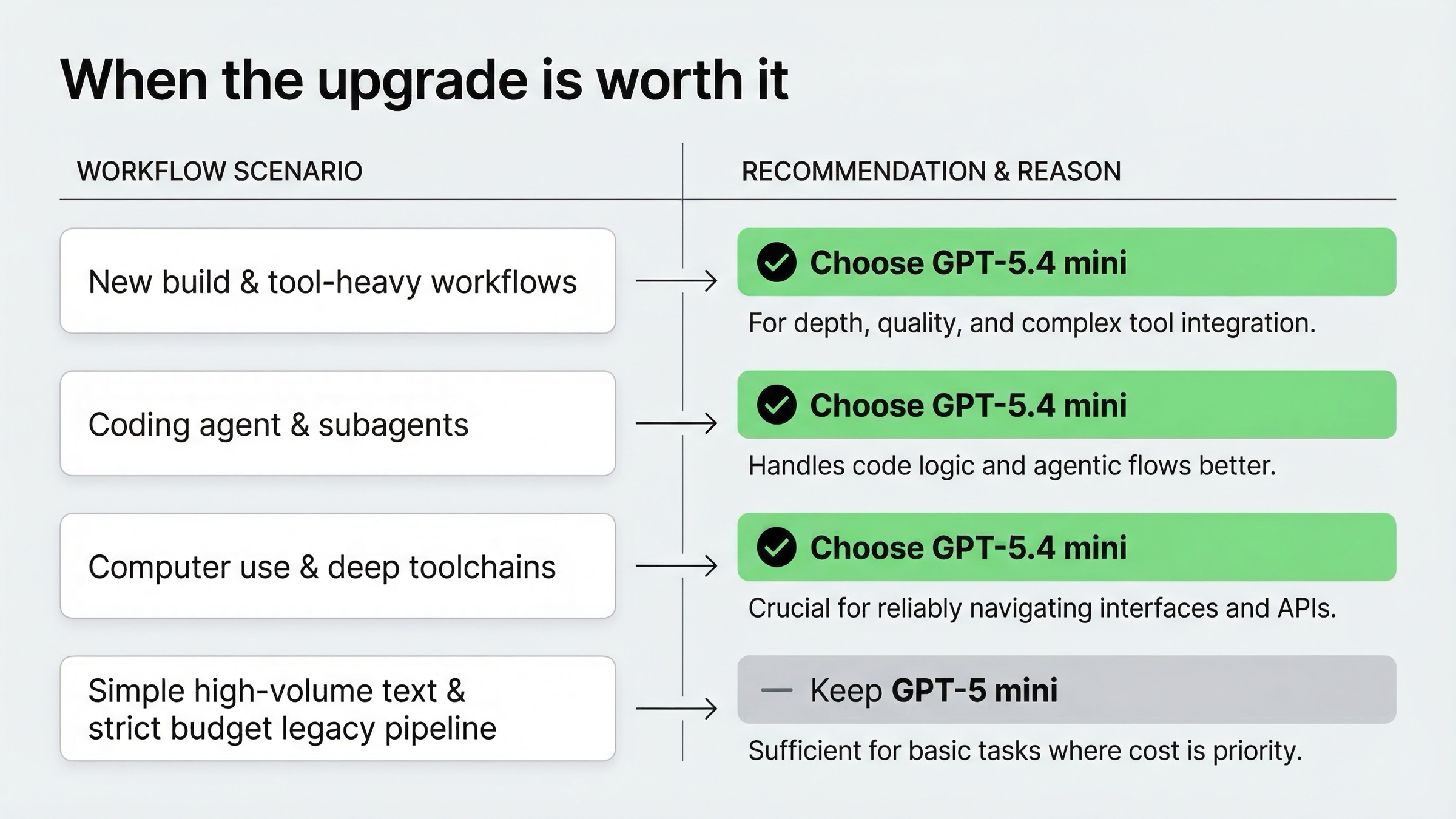

When GPT-5.4 mini Is Worth the Extra Cost

GPT-5.4 mini is worth paying for when your product would otherwise lose time, reliability, or engineering effort compensating for what GPT-5 mini does not handle as well.

The clearest case is coding. OpenAI positioned GPT-5.4 mini around coding assistants and subagents, and the benchmark deltas support that positioning. If your model needs to navigate codebases, recover from tool failures, inspect large files, call multiple tools, or operate inside a coding harness, GPT-5.4 mini is the more defensible default.

The second clear case is agent workflows with real tool depth. Hosted shell, apply patch, skills, computer use, and tool search are not tiny extras. They change what sort of product architecture you can build without special casing another model. If your product roadmap includes delegated subtasks, browser-style workflows, or local-environment style operations, the newer model saves you architectural compromises.

The third case is multimodal workflow density. The March 2026 post emphasizes computer use and screenshot interpretation. If users upload dashboards, bug screenshots, UI states, or dense interface captures, GPT-5.4 mini is the model OpenAI is actively pointing at those use cases.

The fourth case is migration from older "cheap reasoning" picks such as o4-mini-like usage patterns. In the latest GPT-5.4 guide, OpenAI explicitly says gpt-5.4-mini is a great replacement for o4-mini or gpt-4.1-mini. That is a strong signal about where the small-model line is headed.

If your workload looks like any of the following, the extra cost is usually justified:

- A coding assistant that needs reliable tool calling and patch-style operations.

- A UI agent that reads screenshots or performs computer-use actions.

- A subagent worker inside a larger orchestration system.

- A product where newer documentation and 2025-era ecosystem knowledge reduce support burden.

- A team that would otherwise spend time prompt-tuning around legacy GPT-5 mini weaknesses.

When GPT-5 mini Still Makes Sense

GPT-5 mini still makes sense when the workload is simple enough that GPT-5.4 mini's extra capability would be underused.

The strongest case is cost-sensitive legacy traffic. If you already run GPT-5 mini in production, your prompts are stable, your tool usage is shallow, and your failure rates are acceptable, switching everything to GPT-5.4 mini may raise cost faster than it raises user value.

The second case is simple high-volume text work. If the workload is mostly short structured outputs, lightweight generation, or narrow routing tasks, GPT-5 mini can still be the cheaper operating point. In those situations, the real comparison should often be GPT-5 mini versus GPT-5.4 nano, not necessarily GPT-5.4 mini versus GPT-5 mini.

The third case is when your architecture already separates heavy and light paths. For example, you may use a better model only for complex branches and keep a cheaper model for easy branches. In that setup, GPT-5 mini can survive as the cheap branch if benchmark-sensitive or tool-sensitive work is already routed elsewhere.
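A heavy/light split like that can be as small as one routing function. The following is a minimal sketch, not a production router: the `Request` shape, the task labels, and the tool labels are all hypothetical names invented for the example.

```python
from dataclasses import dataclass, field

HEAVY_MODEL = "gpt-5.4-mini"  # complex, tool-heavy branch
LIGHT_MODEL = "gpt-5-mini"    # cheap, plain-text branch

# Hypothetical labels for tools that only the newer model supports.
HEAVY_ONLY_TOOLS = {
    "image_generation", "hosted_shell", "apply_patch",
    "skills", "computer_use", "tool_search",
}

# Hypothetical task labels that should always take the heavy path.
HEAVY_TASKS = {"code_edit", "test_repair"}


@dataclass
class Request:
    task: str
    tools: set[str] = field(default_factory=set)
    has_screenshot: bool = False


def route(request: Request) -> str:
    """Send a request down the heavy path only when it needs that path."""
    if request.tools & HEAVY_ONLY_TOOLS:
        return HEAVY_MODEL
    if request.has_screenshot or request.task in HEAVY_TASKS:
        return HEAVY_MODEL
    return LIGHT_MODEL


print(route(Request(task="classify")))
print(route(Request(task="classify", tools={"apply_patch"})))
```

Keeping the rule in one place also makes it easy to log which branch each request took, which gives you the traffic split you need before deciding whether the cheap branch is still worth maintaining.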

Use GPT-5 mini if most of the following are true:

- Your requests are mostly plain text rather than tool-heavy.

- You do not need hosted shell, apply patch, skills, computer use, or tool search.

- You care more about lowering token spend than improving coding or agent benchmarks.

- You are optimizing an existing system, not designing a new one from scratch.

That said, even in these cases, you should not assume GPT-5 mini is automatically the cheapest good choice forever. OpenAI's current docs point new workloads away from it, which is usually a sign that long-term product attention is now on the 5.4 line.

Migration Notes for Existing GPT-5 mini Workloads

If you already use GPT-5 mini, do not migrate blindly. Test the workflows that actually cost you money or user trust.

Start with these checkpoints:

| Migration question | Why it matters |
|---|---|
| Does the workload benefit from better tool reliability? | GPT-5.4 mini's strongest wins are in coding and tool use, not just prose quality |
| Do you need newer knowledge without over-relying on search? | The cutoff gap is large |
| Do you use agent features that GPT-5 mini lacks? | Hosted shell, apply patch, skills, computer use, and tool search all point toward GPT-5.4 mini |
| Is the workload truly latency-sensitive but shallow? | If yes, cost pressure may still favor GPT-5 mini or even GPT-5.4 nano |

There is also a practical prompt-behavior angle. The latest GPT-5.4 guide notes that older GPT-5 family models behave differently from GPT-5.4 around certain parameters. Separately, developers in the OpenAI Developer Community described real friction with older GPT-5 and GPT-5 mini for deterministic low-latency tasks, especially when they wanted reasoning fully disabled. That matters because many teams do not compare models on a clean benchmark harness; they compare them inside annoying production constraints.

My migration advice is:

- Test GPT-5.4 mini first on the flows where GPT-5 mini already feels weak: coding, tool chaining, structured action extraction, and screenshot-heavy reasoning.

- Keep GPT-5 mini only if your measured gain is small and the spend increase is large.

- If the workload is very simple and price-sensitive, also test whether o4-mini-era use cases or GPT-5.4 nano cover the cheaper branch better.
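The "small gain, large spend increase" test above can be written down so that every flow is judged by the same rule. This is a sketch under stated assumptions: the success rates come from your own evaluation set, and the two thresholds are arbitrary placeholders you should tune, not recommended values.

```python
def migration_verdict(
    old_success: float,
    new_success: float,
    old_cost: float,
    new_cost: float,
    min_gain: float = 0.03,       # placeholder: smallest gain worth paying for
    max_cost_ratio: float = 2.0,  # placeholder: spend increase you call "large"
) -> str:
    """Compare measured quality gain against spend increase for one flow.

    Success rates are fractions in [0, 1] measured on your own eval set;
    costs are USD for the same traffic sample replayed on each model.
    """
    gain = new_success - old_success
    cost_ratio = new_cost / old_cost
    if gain < min_gain and cost_ratio > max_cost_ratio:
        return "keep gpt-5-mini"
    if gain >= min_gain:
        return "migrate to gpt-5.4-mini"
    return "judgment call: small gain, modest cost increase"


# Hypothetical measurements for two flows.
print(migration_verdict(0.90, 0.91, old_cost=100.0, new_cost=260.0))
print(migration_verdict(0.70, 0.85, old_cost=100.0, new_cost=260.0))
```

The rule deliberately migrates on any gain above the threshold regardless of cost, which is a simplification. A stricter version could weigh the gain against the dollar value of each extra successful task.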

If you are new to the API entirely, start by getting a clean setup with our OpenAI API key guide. Then test GPT-5.4 mini first. That matches both the current docs and the practical direction of OpenAI's small-model lineup.

ChatGPT vs API: Do Not Mix These Up

This comparison attracts a lot of confusion across product surfaces because people see "mini" in multiple places and assume all the names map one-to-one.

They do not.

OpenAI's March 17, 2026 GPT-5.4 mini and nano launch post says GPT-5.4 mini is available across API, Codex, and ChatGPT surfaces. But the current Help Center article, checked on March 20, 2026, says GPT-5.3 is now the default ChatGPT line for logged-in users, while paid users can manually pick GPT-5.4 Thinking, and chats fall back to a generic mini version once certain usage limits are reached, until those limits reset. In other words, the ChatGPT model picker no longer maps cleanly onto this API comparison.

If you are deciding for API work, model pages and API guides matter most. If you are deciding for ChatGPT plan behavior, Help Center availability notes matter more. The article you are reading is primarily about API and Codex-style usage, with ChatGPT mentioned only to prevent naming confusion.

FAQ

Is GPT-5 mini deprecated?

No. As of March 20, 2026, GPT-5 mini still has a current model page and current API pricing. But it is clearly the older small-model option, not the one OpenAI is recommending for most new low-latency, high-volume builds.

Does GPT-5.4 mini fully replace GPT-5 mini?

Functionally, it is the better default for most new builds. Operationally, no, because GPT-5 mini still exists and can still be the better choice for narrow cost-sensitive workloads. Think of GPT-5.4 mini as the active recommendation and GPT-5 mini as the older cheaper branch.

Which one should I use for coding agents or Codex-style subagents?

GPT-5.4 mini. That is exactly how OpenAI positions it, and the official March 2026 benchmarks support that choice.

Which one should I use for cheap high-volume text tasks?

If the workload is simple enough and tool-light enough, GPT-5 mini can still be reasonable. But before locking that in, compare it with GPT-5.4 nano as well, because GPT-5 mini is no longer the obvious future-facing cheap option.

Which model is better for deterministic low-latency tasks?

This depends on your prompts and response format. Older GPT-5 mini workflows created friction for some developers who wanted fully non-reasoning behavior, according to OpenAI Developer Community discussions. If the task is truly shallow and deterministic, test carefully rather than assuming the legacy model is automatically better.

Final Recommendation

If you want one line to take back to your team, use this one: GPT-5.4 mini is the right default in 2026 unless you have a specific cost-driven reason to stay on GPT-5 mini.

That recommendation rests on four facts verified on March 20, 2026:

- OpenAI now recommends GPT-5.4 mini for most new low-latency, high-volume workloads.

- GPT-5.4 mini has a much broader tool stack for agent and coding workflows.

- The official March 17, 2026 comparison shows meaningful gains over GPT-5 mini in coding, tool use, computer use, and reasoning.

- GPT-5 mini is cheaper, but it is no longer the default path for new systems.

So the real decision is not whether GPT-5.4 mini is better. It is. The real decision is whether your workload is simple enough that you should ignore that improvement and keep the cheaper older model. For many 2026 API teams, the answer will be no.