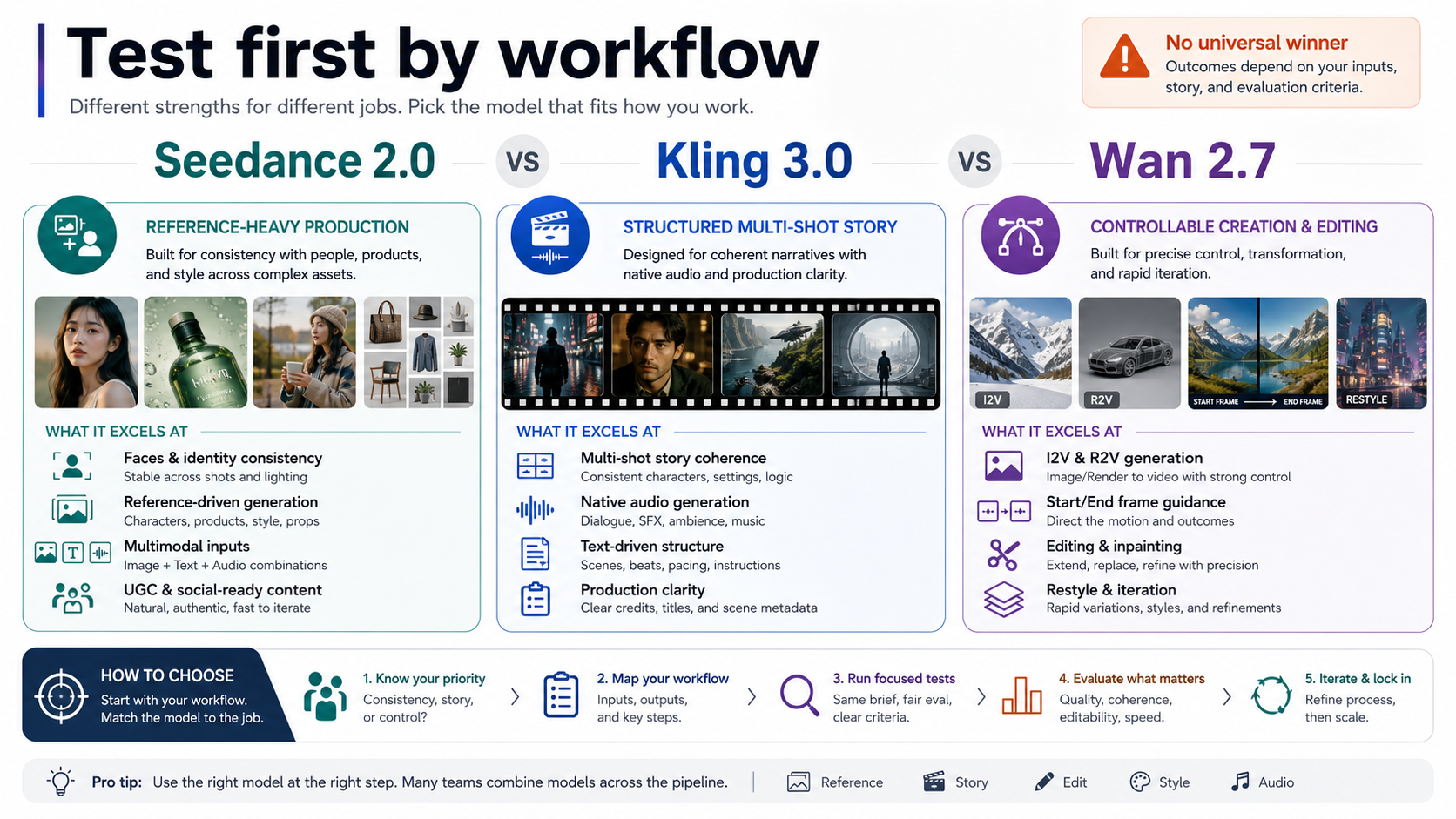

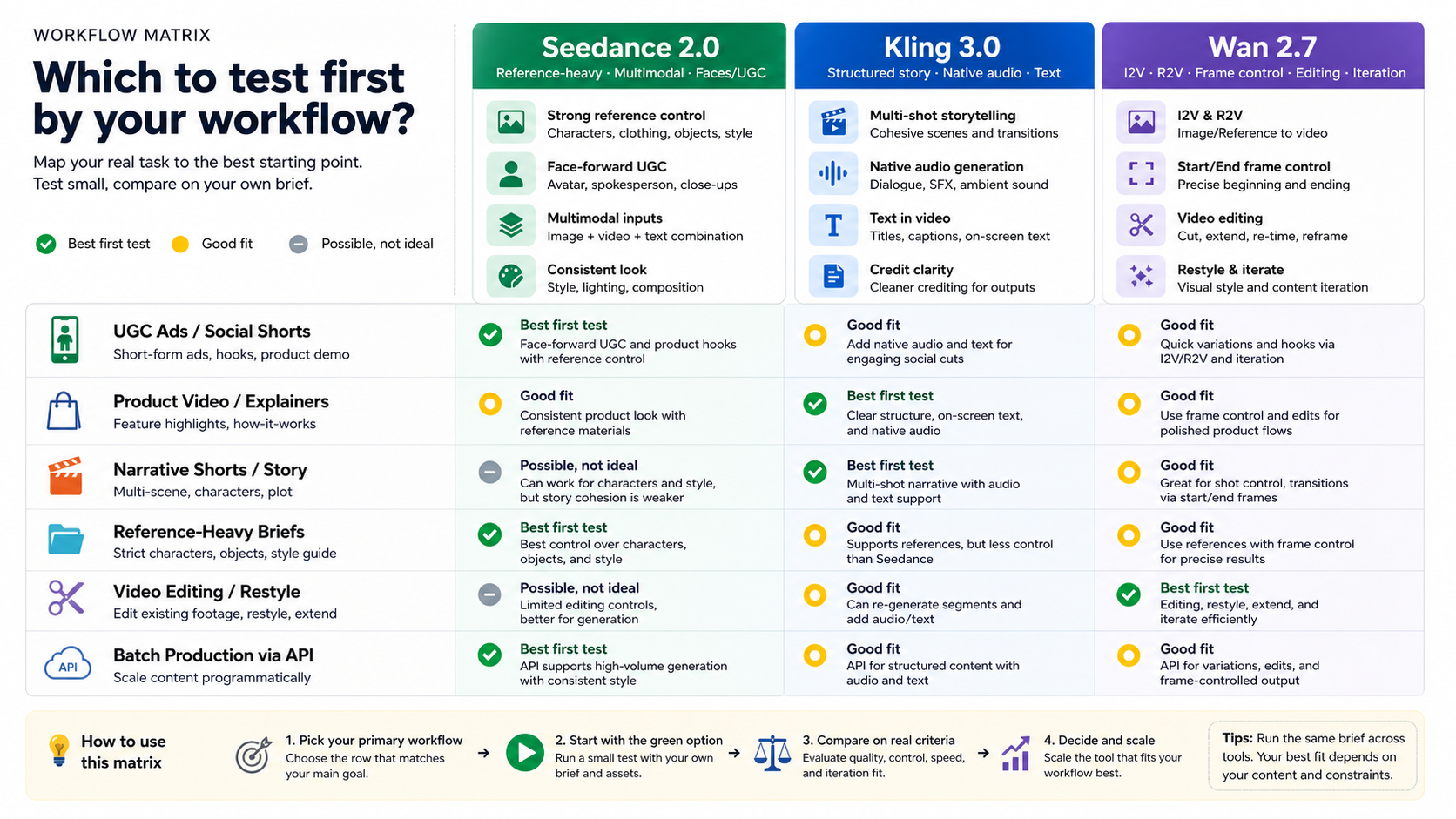

There is no universal winner between Wan 2.7, Kling 3.0, and Seedance 2.0. Use Seedance 2.0 as the first serious test when the brief depends on references, faces, UGC ads, or multimodal production. Use Kling 3.0 first when the deliverable needs structured multi-shot storytelling, native audio, text-in-video, and clearer credit accounting. Use Wan 2.7 first when the job is Alibaba-hosted I2V, R2V, start/end frame control, video editing, restyle, or rapid iteration.

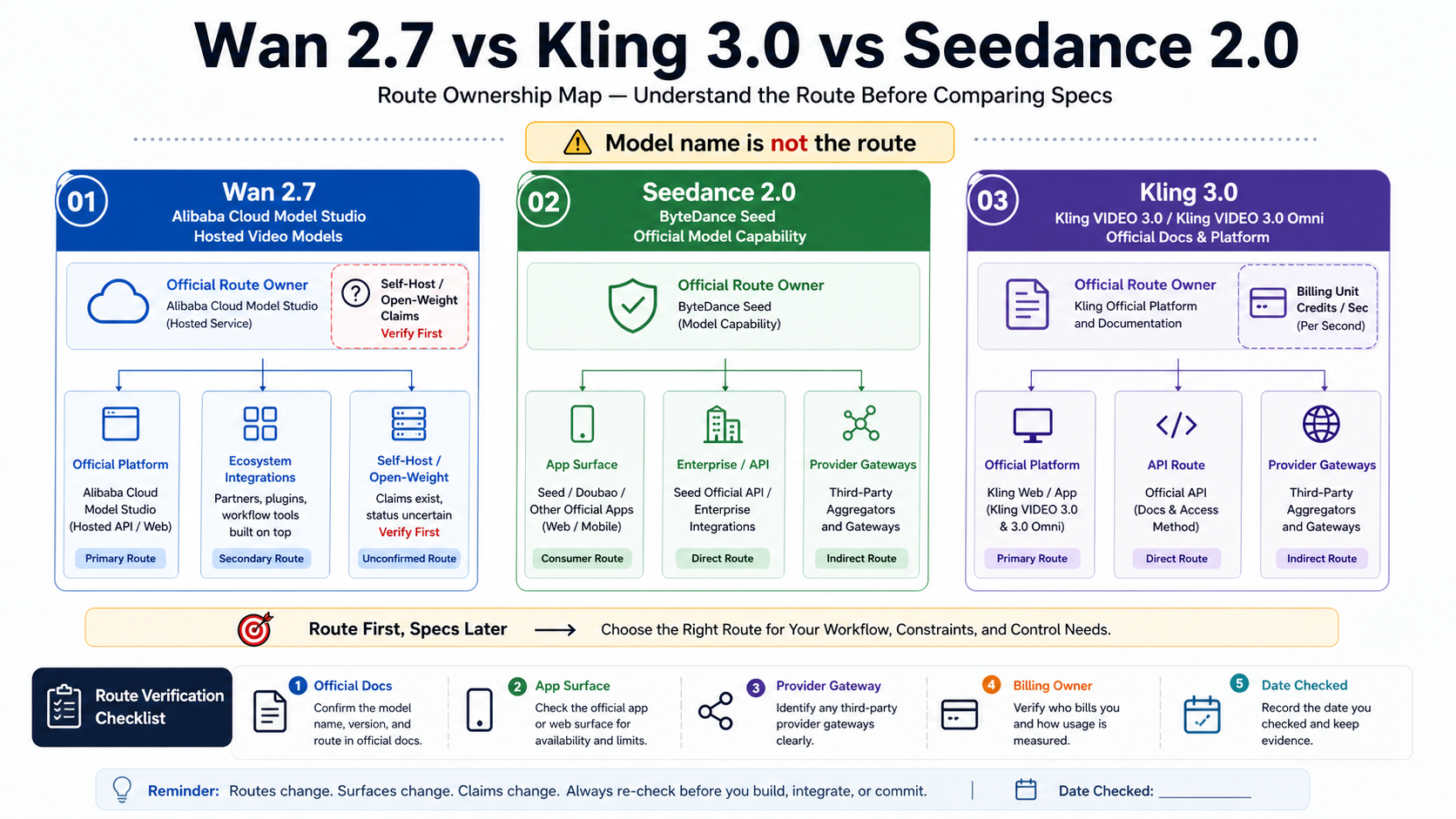

The route matters as much as the model. Wan 2.7 is safest to describe through the hosted video model IDs in Alibaba Cloud Model Studio until first-party proof of released weights is available. Seedance 2.0 capability claims belong to ByteDance Seed, while app, enterprise, and provider access have to be labeled separately. Kling 3.0's official cost language is credits per second; dollar conversions only make sense after the current credit package is checked.

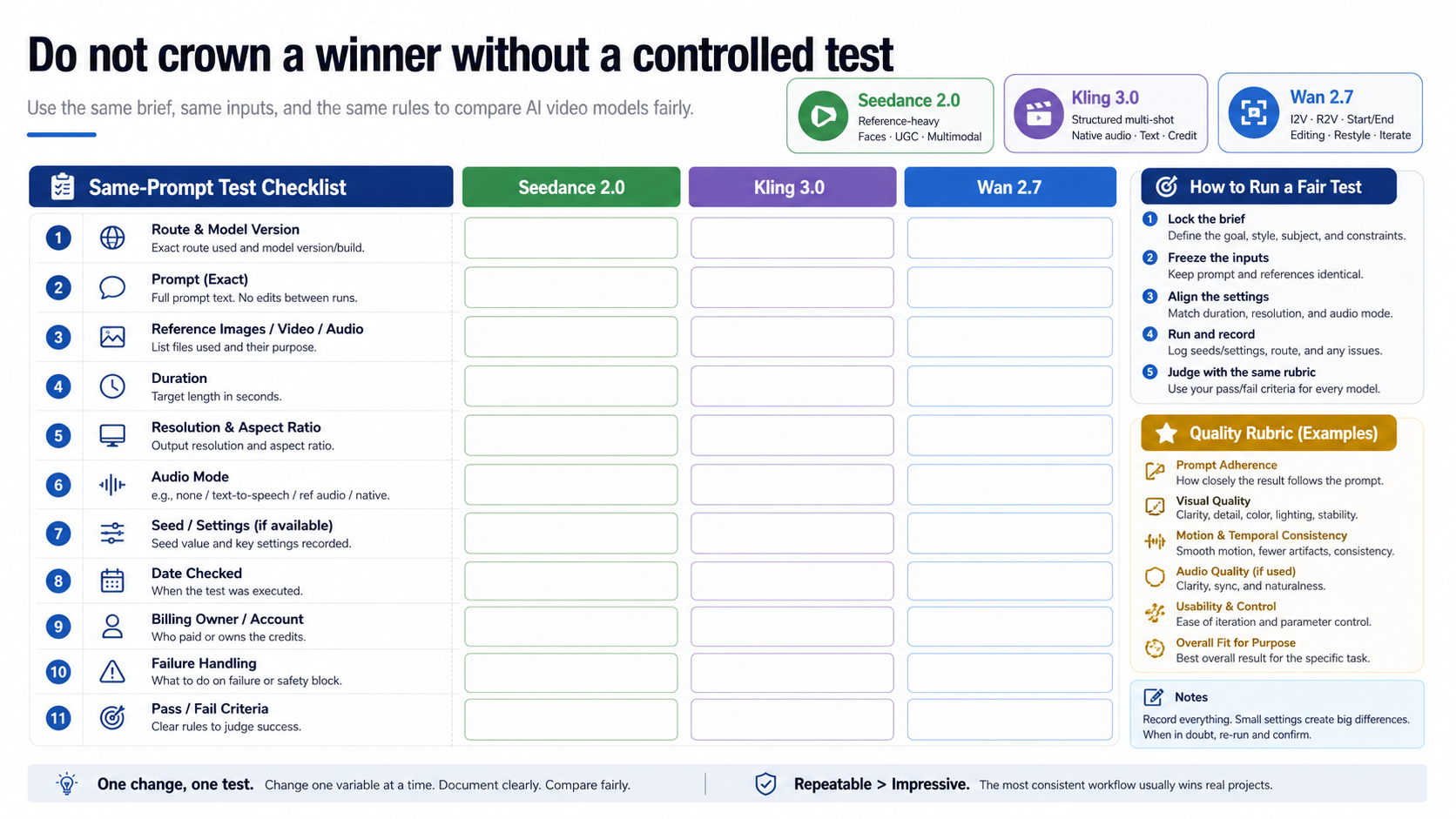

Before production, test the finalists under identical conditions: the same prompt, reference assets, duration, resolution, and audio mode. For each run, record the route, the date you checked it, the billing owner, and the pass/fail criteria. A model that wins one public sample can still be the wrong first choice for your workflow.

Quick Decision: Start With the Workflow

The fastest useful answer is a first-test choice, not a crown. If you are making a face-forward UGC ad, a product clip that must preserve a reference look, or a creative brief with image, audio, and video inputs, Seedance 2.0 should move to the front of the queue. ByteDance describes Seedance 2.0 as a unified multimodal audio-video model with text, image, audio, and video inputs, and its launch material says it can use up to 9 images, 3 videos, and 3 audio clips plus instructions. That makes it the most natural first test when the input material already carries the look, subject, pacing, or sound the output must preserve.
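Before a reference-heavy Seedance test, it can help to check that the asset bundle fits the input counts quoted above. The following is a minimal sketch under assumptions: `ReferenceBundle` and `check_bundle` are hypothetical helpers, not part of any ByteDance SDK, and the limits are copied from the launch material rather than from a specific access route, which may enforce different numbers.

```python
from dataclasses import dataclass, field

# Per-request reference limits quoted from the launch material above.
# Assumption: a given app, enterprise route, or gateway may enforce other limits.
REFERENCE_LIMITS = {"images": 9, "videos": 3, "audio": 3}


@dataclass
class ReferenceBundle:
    """Hypothetical container for the assets attached to one brief."""
    images: list[str] = field(default_factory=list)
    videos: list[str] = field(default_factory=list)
    audio: list[str] = field(default_factory=list)


def check_bundle(bundle: ReferenceBundle) -> list[str]:
    """Return one message per exceeded limit; an empty list means the bundle fits."""
    problems = []
    for kind, limit in REFERENCE_LIMITS.items():
        count = len(getattr(bundle, kind))
        if count > limit:
            problems.append(f"{kind}: {count} exceeds limit of {limit}")
    return problems
```

Running the check before upload keeps a failed test from being blamed on the model when the bundle was simply too large for the route.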

If the work is a sequence rather than a single styled shot, test Kling 3.0 early. The official Kling VIDEO 3.0 guide describes native audio, multi-shot generation, element consistency, multilingual support, native text output, and 3 to 15 second generation. That combination matters for narrative shorts, product explainers, speaker-style clips, subtitles, scene beats, and any deliverable where a clean structure is more valuable than a single beautiful frame.

Wan 2.7 belongs first when the job is control and iteration. Alibaba Cloud's video model docs list wan2.7-t2v, wan2.7-i2v, wan2.7-r2v, and wan2.7-videoedit, with hosted output at 720P or 1080P, 30 fps MP4, 2 to 15 seconds for T2V/I2V, and 2 to 10 seconds for R2V or video editing. That makes Wan 2.7 the practical first stop for I2V, first/last-frame control, reference-to-video work, editing, restyle, and variations where the source frame or source clip is the anchor.
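The hosted duration windows differ by model ID, so a small pre-flight check can catch a request that the docs would not allow. This is a sketch only: `validate_wan27_request` is a hypothetical helper, not an Alibaba Cloud SDK call, and the table simply restates the specs listed above, which should be re-checked against the current docs.

```python
# Hosted specs as listed in the reviewed Alibaba Cloud docs (seconds, inclusive).
# Assumption: these values are a snapshot and may change.
WAN27_DURATION_SECONDS = {
    "wan2.7-t2v": (2, 15),
    "wan2.7-i2v": (2, 15),
    "wan2.7-r2v": (2, 10),
    "wan2.7-videoedit": (2, 10),
}
WAN27_RESOLUTIONS = ("720P", "1080P")


def validate_wan27_request(model_id: str, duration_s: int, resolution: str) -> None:
    """Raise ValueError if the request falls outside the documented hosted specs."""
    if model_id not in WAN27_DURATION_SECONDS:
        raise ValueError(f"unknown Wan 2.7 model ID: {model_id}")
    low, high = WAN27_DURATION_SECONDS[model_id]
    if not low <= duration_s <= high:
        raise ValueError(f"{model_id} supports {low} to {high} s, got {duration_s}")
    if resolution not in WAN27_RESOLUTIONS:
        raise ValueError(f"resolution must be one of {WAN27_RESOLUTIONS}, got {resolution}")
```

For example, `validate_wan27_request("wan2.7-r2v", 12, "720P")` raises, because the R2V window in the reviewed docs stops at 10 seconds.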

Route Ownership Before Specs

A model comparison becomes unsafe when it treats every access route as the same product. A video model can be visible in an official model page, app surface, cloud console, provider gateway, leaderboard, and community sample at the same time. Those surfaces do not own the same promises.

For Wan 2.7, the current safe claim starts with Alibaba Cloud Model Studio. The official video generation and editing docs list the Wan 2.7 model IDs and hosted specs. They do not, by themselves, prove that every open-weight or self-host claim attached to "Wan 2.7" is current, complete, or production-safe. If you care about local deployment, license, weight availability, or GPU cost, verify the exact first-party repository or model card before building around that assumption.

For Seedance 2.0, separate model capability from access. The Seedance 2.0 official launch page and product page support the capability story: multimodal references, audio-video generation, complex motion, control, and multi-shot output. They do not automatically settle which self-serve API, enterprise route, consumer app, or third-party gateway is right for your team. A developer route should name the platform that bills you and handles failures.

For Kling 3.0, the official documentation is unusually useful because it gives credit-per-second billing language. The Kling VIDEO 3.0 guide lists native audio 1080p at 12 credits/s, native audio 720p at 9 credits/s, no-native-audio 1080p at 8 credits/s, no-native-audio 720p at 6 credits/s, and voice control as an extra 2 credits/s. The Kling VIDEO 3.0 Omni guide adds multimodal input, voice-driven characters, storyboarding, and direct audio-visual output. Dollar cost still depends on the current credit purchase route, so credits are the safer official denominator.
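The credit rates above turn into a simple per-clip calculation. The sketch below is illustrative, not an official calculator: `kling_credits` is a hypothetical function, the rates are the ones quoted from the VIDEO 3.0 guide, and it assumes that voice control adds 2 credits/s on top of whichever base rate applies, which should be confirmed against the guide.

```python
# Credits per second from the Kling VIDEO 3.0 guide, keyed by (native_audio, resolution).
KLING_CREDITS_PER_SECOND = {
    (True, "1080p"): 12,
    (True, "720p"): 9,
    (False, "1080p"): 8,
    (False, "720p"): 6,
}
VOICE_CONTROL_EXTRA = 2  # assumption: added to the base rate for any mode


def kling_credits(duration_s: int, resolution: str, native_audio: bool,
                  voice_control: bool = False) -> int:
    """Estimate the credits for one clip in the documented 3 to 15 second range."""
    if not 3 <= duration_s <= 15:
        raise ValueError(f"duration must be 3 to 15 s, got {duration_s}")
    rate = KLING_CREDITS_PER_SECOND[(native_audio, resolution)]
    if voice_control:
        rate += VOICE_CONTROL_EXTRA
    return rate * duration_s
```

Under these rates, a 10-second 1080p clip with native audio comes to 120 credits, and the same clip with voice control comes to 140.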

Workflow Strengths and Weak Spots

Seedance 2.0 should be tested first when the brief already contains reference material. The value is not just "more inputs"; it is the ability to tell the model what must stay stable. Faces, wardrobe, product shape, product color, mood, sound, and shot language can all matter in a UGC ad or brand concept. If your team spends most of its time fighting identity drift or style drift, Seedance deserves an early slot. The weak spot is route clarity: capability and public developer access are not the same contract, so do not let a good demo settle your integration route.

Kling 3.0 should be tested first when the output has to behave like a structured piece of video. Multi-shot support, native audio, text output, and element consistency are practical for short narratives, product explainers, dialogue clips, and multilingual tests. Kling is also easier to budget at the official documentation level because the model guide states credit-per-second rates. The weak spot is that "Kling 3.0" can point to base VIDEO 3.0, Omni, app surfaces, developer routes, and provider wrappers. Choose the exact route before comparing cost or capability.

Wan 2.7 should be tested first when you need precise source-based control. I2V, first/last frame, continuation, R2V references, and instruction-based video editing are not secondary details; they are the reason Wan belongs in this comparison. If your workflow starts with a product render, a storyboard frame, an existing clip, or a style reference that must be transformed rather than invented, Wan can be the most efficient first experiment. The weak spot is open-weight confusion. Treat hosted Model Studio facts as confirmed and self-host claims as separate proof work.

The practical split is simple: Seedance for rich reference production, Kling for structured story and audio, Wan for controlled image/video-to-video and editing. The moment your job spans two of those lanes, test both finalists rather than forcing a single label to do too much.
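That split can be written down as a lookup so a team agrees on the finalists before anyone opens a generation tool. The lane names and the `models_to_test` helper below are hypothetical labels invented for this sketch; only the lane-to-model mapping comes from the split described above.

```python
# Lane names are illustrative labels; the mapping mirrors the split described above.
FIRST_TEST_BY_LANE = {
    "reference_production": "Seedance 2.0",
    "structured_story_audio": "Kling 3.0",
    "source_control_editing": "Wan 2.7",
}


def models_to_test(lanes: set[str]) -> list[str]:
    """Return one finalist per lane the job touches, in a stable order."""
    unknown = set(lanes) - set(FIRST_TEST_BY_LANE)
    if unknown:
        raise ValueError(f"unknown lanes: {sorted(unknown)}")
    return [model for lane, model in FIRST_TEST_BY_LANE.items() if lane in lanes]
```

A job tagged with two lanes returns two finalists, which matches the advice to test both rather than force one label to cover everything.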

Current Facts That Change the Choice

Official facts matter most when they change the decision. Wan 2.7's official model split tells you it is not one generic video button. wan2.7-t2v supports text-to-video, wan2.7-i2v supports image-driven routes, wan2.7-r2v supports reference-to-video routes, and wan2.7-videoedit supports instruction-based editing. The generation window also matters: 2 to 15 seconds for T2V/I2V and 2 to 10 seconds for R2V/video editing in the reviewed Alibaba Cloud docs.

Seedance 2.0's important fact is the multimodal contract. ByteDance's launch material frames it as joint audio-video generation with text, image, audio, and video inputs, not merely as a text-to-video model. That changes how you should test it. A fair Seedance test should include the reference assets that make the model relevant, not only a bare text prompt copied from a simpler T2V comparison.

Kling 3.0's important facts are structure and denominator. Native audio, multi-shot, element consistency, multilingual support, text output, and 3 to 15 second duration are directly useful for production planning. Credits per second are also more actionable than an unsourced "cheap" or "expensive" label. If a provider translates those credits into a dollar price, record whose price it is and when it was checked.

Benchmarks can help, but they cannot replace workflow fit. Artificial Analysis is useful as a dated snapshot of user preference across current video models. It should not be treated as proof that one model wins your UGC, product, story, or editing workflow. Your prompt, references, route, and failure behavior can matter more than a leaderboard row.

Cost and Access Caveats

Cost comparisons are where most AI video pages become misleading. Kling 3.0 has a clear official credit-per-second structure in the model guide, but a credit is not a dollar until the current purchase package is checked. Use credits per second when you want a stable official comparison, then add a dated dollar estimate only if you verify the package route.
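A dated dollar estimate can be kept honest by attaching the package and the check date to the number itself. The sketch below assumes a hypothetical `CreditPackage` record; the seller name, credit count, and price in the usage example are placeholders made up for illustration, not real Kling pricing.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class CreditPackage:
    """A credit purchase option as observed on one route on one day."""
    seller: str        # who sells the credits and owns the bill
    credits: int
    price_usd: float
    checked_on: date


def dollar_estimate(credits_used: int, package: CreditPackage) -> str:
    """Convert credits to dollars and keep the source and date in the result."""
    usd = credits_used * package.price_usd / package.credits
    return f"~${usd:.2f} via {package.seller}, checked {package.checked_on.isoformat()}"


# Placeholder numbers only: replace with the package you actually verified.
example_package = CreditPackage("example-seller", 1000, 10.00, date(2025, 1, 1))
print(dollar_estimate(120, example_package))
```

Because the estimate carries its seller and date, a stale or third-party price is visible in the output instead of being mistaken for an official rate.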

Wan 2.7 has confirmed hosted model IDs and specs in Alibaba Cloud Model Studio. The reviewed source material did not expose a clean Wan 2.7 official pricing row suitable for copying into a simple per-second table. Provider pages may quote their own prices, but that is provider pricing, not necessarily Alibaba Cloud pricing. If budget is the deciding factor, test the exact route you will use and record the owner of the bill.

Seedance 2.0 has strong official capability material, but public self-serve API and pricing claims need route ownership. App access, enterprise access, cloud partner routes, and third-party gateways can all expose different limits, moderation behavior, support, latency, and billing. A model can be excellent and still be the wrong first integration if the route is not stable for your team.

The safest budget rule is to compare only route-owned numbers. Official credits, official cloud billing, provider usage price, and app subscription credits belong in separate columns. If you cannot name the bill owner, you cannot safely compare the cost.
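One way to enforce the separate-columns rule is to tag every cost figure with its column and bill owner, and refuse to compare figures that do not match. The types below are hypothetical and exist only for this sketch; the four column names are taken from the rule above.

```python
from dataclasses import dataclass
from enum import Enum


class CostColumn(Enum):
    OFFICIAL_CREDITS = "official credits"
    OFFICIAL_CLOUD_BILLING = "official cloud billing"
    PROVIDER_USAGE_PRICE = "provider usage price"
    APP_SUBSCRIPTION_CREDITS = "app subscription credits"


@dataclass(frozen=True)
class CostFigure:
    model: str
    column: CostColumn
    bill_owner: str   # who actually charges you; empty string means unknown
    amount: float
    unit: str         # for example "credits/s" or "USD/s"


def comparable(a: CostFigure, b: CostFigure) -> bool:
    """Two figures are comparable only with known owners, the same column, and the same unit."""
    owners_known = bool(a.bill_owner.strip()) and bool(b.bill_owner.strip())
    return owners_known and a.column is b.column and a.unit == b.unit
```

With this check, an official credits-per-second figure and a gateway's dollar price are never placed side by side by accident, and a figure with no named bill owner is excluded outright.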

Run a Fair Same-Prompt Test Before Production

The final winner should come from a controlled test, not a public sample. Start by writing one real brief: subject, scene, camera, motion, style, audio need, duration, aspect ratio, and the intended output use. Then decide which references are part of the job. If the Seedance test uses rich references, the Kling and Wan tests should either receive their closest equivalent or be judged on a category where those references are not required.

Log the exact route and model version. "Seedance 2.0" through a consumer app, enterprise platform, or provider gateway may not behave like the same production system. "Kling 3.0" may mean base VIDEO 3.0, Omni, app mode, or API route. "Wan 2.7" may mean hosted Model Studio, a partner surface, or a claimed self-host workflow. Without the route, your result is not reproducible.
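A run log does not need to be elaborate to make results reproducible. The record below is a minimal sketch with hypothetical field names; it captures the route details named above and serializes each run as one JSON line.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RunRecord:
    """One generation attempt; field names are illustrative, not a standard schema."""
    model: str                        # e.g. "Kling 3.0"
    variant: str                      # e.g. base VIDEO 3.0 vs Omni, or a Wan model ID
    route: str                        # app, hosted cloud, enterprise platform, or gateway
    bill_owner: str
    checked_on: str                   # ISO date the route details were verified
    prompt: str
    reference_assets: tuple[str, ...]
    duration_s: int
    resolution: str
    audio_mode: str


def to_log_line(run: RunRecord) -> str:
    """Serialize a run as a single JSON line with stable key order."""
    return json.dumps(asdict(run), sort_keys=True)
```

Appending one line per attempt to a shared file is usually enough to answer, weeks later, which route and variant produced a given clip.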

Keep the evaluation criteria small enough to use. For most teams, a good rubric is prompt adherence, identity or product consistency, motion stability, audio quality if used, text quality if needed, editability, failure rate, and total route cost. Do not average those into one score too quickly. A model that loses on cinematic polish may still win if it is the only one that preserves the product shape or lets your pipeline edit an existing clip.
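One way to avoid premature averaging is to treat a few criteria as must-pass gates and keep the rest as separate columns. The sketch below assumes a 1 to 5 scale and a threshold of 3; both are arbitrary choices for illustration, and `shortlist` is a hypothetical helper.

```python
# Criteria taken from the rubric above; the 1-5 scale is an assumption.
RUBRIC = (
    "prompt_adherence", "identity_consistency", "motion_stability", "audio_quality",
    "text_quality", "editability", "failure_rate", "route_cost",
)


def shortlist(scores: dict[str, dict[str, int]], must_pass: set[str],
              threshold: int = 3) -> list[str]:
    """Keep models that meet the threshold on every must-pass criterion.

    A missing score counts as a failure; no averaging is performed.
    """
    unknown = set(must_pass) - set(RUBRIC)
    if unknown:
        raise ValueError(f"not in rubric: {sorted(unknown)}")
    return [
        model for model, per_criterion in scores.items()
        if all(per_criterion.get(c, 0) >= threshold for c in must_pass)
    ]
```

If product shape is non-negotiable, putting `identity_consistency` in `must_pass` removes a model that scores well elsewhere but drifts on the product, which an average would have hidden.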

When Another Page Is the Better Fit

If your real question is only Seedance versus Kling, the older Seedance 2.0 vs Kling 3.0 comparison is the narrower page for that decision, though current claims should still be checked before production. If your real question is the older cloud-versus-Wan architecture split, use Kling vs Wan as background rather than as current Wan 2.7 proof. If you are comparing the wider market, the broader MiniMax vs Kling vs Wan vs Veo vs Seedance map is closer to that job.

Keep the scope tight. Wan 2.7, Kling 3.0, and Seedance 2.0 overlap enough to compare, but they are not the same kind of commitment. The useful choice is the first route to test for a specific video job. After that, the winner is the one that survives your own prompt, assets, billing route, and failure handling.

FAQ

Is Kling 3.0 or Seedance 2.0 better?

Neither is better in every workflow. Seedance 2.0 is the stronger first test when references, faces, UGC realism, and multimodal inputs matter most. Kling 3.0 is the stronger first test when structured multi-shot storytelling, native audio, text output, and clearer credit accounting matter most.

What is the difference between Wan 2.7 and Seedance 2.0?

Wan 2.7 is most useful to test for Alibaba-hosted I2V, R2V, start/end frame control, video editing, restyle, and iteration. Seedance 2.0 is most useful to test for reference-heavy multimodal generation and audio-video production. Do not treat Wan open-weight claims or Seedance public API claims as settled unless the exact route owner proves them.

Which one should developers test first?

Developers should test the route, not only the model. Start with Kling 3.0 if official credit-per-second accounting and structured API-style production are the deciding factors. Start with Wan 2.7 if the workflow depends on Model Studio's hosted I2V/R2V/editing modes. Start with Seedance 2.0 if the product must expose reference-heavy or multimodal generation and the access route is already verified.

Can I use a provider gateway to compare all three?

You can use a provider gateway as a testing surface if it clearly names the model mapping, billing owner, failure behavior, storage policy, and support boundary. Do not treat provider availability, price, speed, or reliability as official model facts. A gateway test is useful only when you record the route that produced the result.

Is Wan 2.7 open-weight?

The safe answer is to separate hosted Wan 2.7 from self-host claims. Alibaba Cloud Model Studio docs confirm hosted Wan 2.7 model IDs and specs. Any open-weight, license, model-card, or self-host claim should be verified from the exact first-party weight source before production planning.

Should I test all three models?

Test all three only if the workflow spans references, story structure, and editing control. Most teams should start with two finalists: Seedance plus Kling for ad or story work, Kling plus Wan for storyboard-to-video and edit-heavy work, or Seedance plus Wan when reference control and iteration are both central. Keep one brief, one asset set, and one scoring rubric so the result is usable.