Claude 4 Opus sits at the top of Anthropic's model stack for difficult reasoning, long-horizon coding work, and expert-level analysis. It is not the right default for every request, but it is often the right escalation model when cheaper options stop being reliable. This guide keeps the focus on official integration, realistic use cases, and cost decisions that hold up in production.

Claude 4 Opus is best treated as a premium model for the hardest tasks, not as the default answer to every API workflow.

What Claude 4 Opus Is Good At

Claude 4 Opus is designed for workloads where correctness, reasoning depth, and sustained context handling matter more than raw volume.

Typical strong-fit use cases:

- Complex code generation and refactoring

- Deep technical analysis across long documents or repositories

- Multi-step agent workflows that need consistent reasoning

- High-stakes drafting, review, and structured decision support

For simpler classification, extraction, or templated writing tasks, Sonnet or smaller models are usually the more economical choice.

Core Capabilities Developers Care About

The exact model limits and pricing can evolve, but the practical areas to evaluate remain consistent:

- Large-context reasoning over documents, code, and mixed inputs

- Strong coding performance with useful explanations and revision loops

- Tool-enabled workflows for execution, retrieval, or structured actions

- Better handling of ambiguous or multi-constraint tasks than lighter models

The mistake many teams make is reading flagship-model capability pages and assuming they should send all traffic to Opus. In practice, Opus is best used as a selective premium tier inside a broader routing strategy.

When Opus Is Worth It

Opus is usually worth the extra cost when failure is expensive.

Examples:

- A code review or migration step where bad output creates engineering risk

- Legal, policy, or technical summarization where nuance matters

- Internal expert tools used by senior operators, analysts, or developers

- Agent workflows where weak intermediate reasoning causes cascading errors

It is usually not worth it for:

- Basic chatbot responses

- Simple structured extraction

- Repetitive support content

- High-volume classification

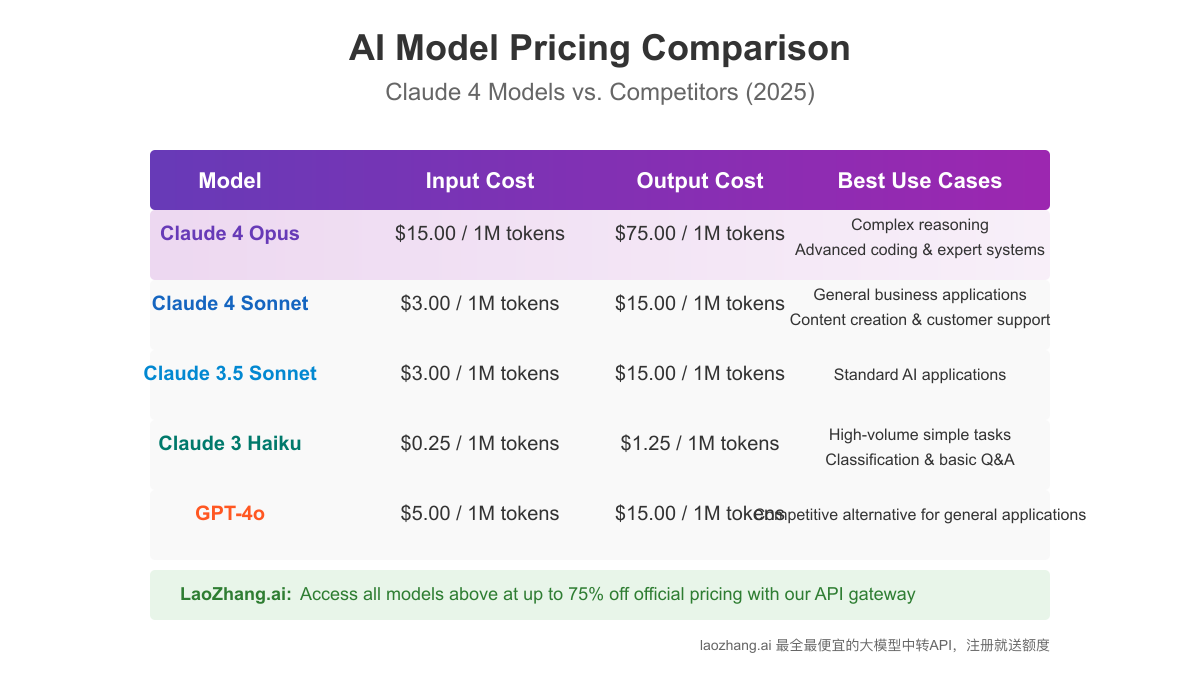

Pricing Model and Real-World Budgeting

Claude 4 Opus belongs in the premium end of the pricing spectrum. That means cost control should be designed into the workload from the beginning.

Useful budgeting questions:

- Which requests truly need expert-level reasoning?

- Which prompts can be handled by Sonnet first?

- How much output length is actually necessary?

- How much repeated context can be cached or removed?

If you cannot answer those questions yet, you are not ready to use Opus at scale.
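
The budgeting questions above can be turned into a rough monthly estimate. The following is a minimal sketch, not a billing tool: `Workload` and `monthly_cost` are hypothetical names, and the per-million-token prices are placeholder parameters rather than Anthropic's actual rates, so substitute the current figures from the official pricing page.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of one request type."""
    requests_per_month: int
    input_tokens: int   # average input tokens per request
    output_tokens: int  # average output tokens per request


def monthly_cost(w: Workload, input_price_per_mtok: float,
                 output_price_per_mtok: float) -> float:
    """Estimate monthly spend in the same currency as the prices."""
    cost_per_request_x_mtok = (
        w.input_tokens * input_price_per_mtok
        + w.output_tokens * output_price_per_mtok
    )
    return cost_per_request_x_mtok * w.requests_per_month / 1_000_000


# Placeholder volumes and prices, for illustration only.
review = Workload(requests_per_month=10_000, input_tokens=4_000, output_tokens=500)
print(monthly_cost(review, input_price_per_mtok=10.0, output_price_per_mtok=50.0))
```

Running the same estimate with shorter outputs or a smaller share of requests makes it easier to see which of the four questions moves the bill most.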

Cost Optimization Strategies That Preserve Quality

Route Only Hard Requests to Opus

One of the most effective patterns is a two-stage system:

- Use a cheaper model for the first pass

- Escalate to Opus only when the task crosses a confidence or difficulty threshold

This keeps quality high without turning every workflow into a flagship-model bill.
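
The two-stage pattern above can be sketched as a small wrapper. This is an illustration under assumptions: `Model`, `answer_with_escalation`, and `is_acceptable` are hypothetical names, and each model is represented as a plain callable so the routing logic can be tested without network calls.

```python
from typing import Callable

# Hypothetical model interface: takes a prompt, returns text.
Model = Callable[[str], str]


def answer_with_escalation(prompt: str, cheap: Model, premium: Model,
                           is_acceptable: Callable[[str], bool]) -> tuple[str, str]:
    """Try the cheaper model first and escalate only if its draft fails the check.

    Returns (tier_used, answer).
    """
    draft = cheap(prompt)
    if is_acceptable(draft):
        return "cheap", draft
    return "premium", premium(prompt)


# Stub models standing in for real API calls.
tier, answer = answer_with_escalation(
    "Explain this stack trace.",
    cheap=lambda p: "",                      # empty draft fails the check
    premium=lambda p: "Detailed analysis.",
    is_acceptable=lambda text: len(text) > 0,
)
print(tier, answer)
```

In a real system the `is_acceptable` check might be a schema validator, a test run, or a confidence score, depending on the task.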

Use Prompt Caching

If your system prompt, style guide, or policy block stays mostly constant, prompt caching can cut repeated input cost materially.

This is especially useful in:

- Document review tools

- Coding assistants with stable instructions

- Enterprise agent systems with long reusable context
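
As a rough illustration of the caching idea, the sketch below builds request arguments in which the stable instruction block is marked with the Messages API's `cache_control` field. The helper name `build_cached_request` is hypothetical, and minimum cacheable lengths and cache lifetimes depend on the model, so check the official prompt caching documentation before relying on it.

```python
def build_cached_request(model: str, stable_instructions: str, user_text: str,
                         max_tokens: int = 800) -> dict:
    """Build keyword arguments for a Messages API call with a cacheable system block."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": [
            {
                "type": "text",
                "text": stable_instructions,
                # Marks this block as a cache breakpoint for later requests.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # Only the per-request content changes between calls.
        "messages": [{"role": "user", "content": user_text}],
    }


request = build_cached_request(
    model="claude-opus-4-20250514",
    stable_instructions="You are a code reviewer. Follow the house style guide.",
    user_text="Review the attached diff.",
)
print(request["system"][0]["cache_control"])
```

The resulting dictionary would be passed as `client.messages.create(**request)`. Keeping the stable text first and the variable text last is what lets repeated requests share the cached prefix.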

Cap Output Aggressively

Many teams overspend on output they do not need. Ask for:

- JSON when structured data is enough

- A short ranked list instead of an essay

- Diffs instead of full rewrites

- A decision plus rationale instead of a long chain of prose
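
One way to apply the "decision plus rationale" format is to ask for a small JSON object and validate it before use. The sketch below is an assumption-laden example: the prompt suffix, the allowed decision values, and the function name `parse_decision` are all hypothetical.

```python
import json

# Hypothetical instruction appended to the user prompt to keep output short.
DECISION_PROMPT_SUFFIX = (
    'Respond with JSON only: {"decision": "approve" or "reject", '
    '"rationale": "<one sentence>"}'
)

ALLOWED_DECISIONS = {"approve", "reject"}


def parse_decision(raw: str) -> dict:
    """Parse the model's reply; raise ValueError if it is not the expected shape."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict) or data.get("decision") not in ALLOWED_DECISIONS:
        raise ValueError("reply is missing a valid 'decision' field")
    return data


print(parse_decision('{"decision": "reject", "rationale": "No rollback step."}'))
```

Pairing a format like this with a low `max_tokens` value bounds both the cost and the amount of text a downstream system has to handle.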

Batch Offline Work

If the task is not latency-sensitive, batch processing is often a better fit than real-time API calls.

Good candidates:

- Dataset annotation

- Report generation

- Evaluation runs

- Backlog summarization
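
For offline work like the candidates above, requests can be prepared up front and submitted together. The sketch below only builds the request list in the shape used by Anthropic's Message Batches API (`custom_id` plus `params`). The helper name, the prompt wording, and the sample documents are hypothetical.

```python
def build_batch_requests(model: str, documents: dict[str, str],
                         max_tokens: int = 400) -> list[dict]:
    """Turn {document_id: text} into one batch entry per document."""
    return [
        {
            # custom_id lets you match each result back to its source document.
            "custom_id": doc_id,
            "params": {
                "model": model,
                "max_tokens": max_tokens,
                "messages": [
                    {
                        "role": "user",
                        "content": f"Summarize this backlog item in three bullet points:\n\n{text}",
                    }
                ],
            },
        }
        for doc_id, text in documents.items()
    ]


batch = build_batch_requests(
    "claude-opus-4-20250514",
    {"ticket-1": "Login fails on mobile.", "ticket-2": "Export times out."},
)
print([entry["custom_id"] for entry in batch])
```

With the official SDK, a list like this would be submitted through `client.messages.batches.create(requests=batch)`, and results are collected later rather than in the request path.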

Official Integration Pattern

The safest implementation baseline is the official Anthropic SDK and official account configuration.

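```python
import anthropic

# Replace the placeholder with your key, or load it from a secret store.
client = anthropic.Anthropic(api_key="YOUR_ANTHROPIC_API_KEY")

message = client.messages.create(
    model="claude-opus-4-20250514",
    max_tokens=800,
    messages=[
        {
            "role": "user",
            "content": "Review this database migration plan and identify the highest-risk failure modes.",
        }
    ],
)

# message.content is a list of content blocks; print the text of the first one.
print(message.content[0].text)
```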

For production systems, build around:

- Official key management

- Retry and timeout handling

- Rate-limit backoff

- Usage logging

- Task-based cost attribution
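
The retry and backoff items in the list above can be sketched with a generic wrapper. This is a minimal example rather than a recommended implementation: `call_with_backoff` and its parameters are hypothetical, and the official SDK also has its own client-level `max_retries` and `timeout` settings that may be enough on their own.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_backoff(fn: Callable[[], T],
                      is_retryable: Callable[[Exception], bool],
                      max_attempts: int = 4,
                      base_delay: float = 0.5,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn, retrying retryable errors with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception as exc:
            last_attempt = attempt == max_attempts - 1
            if last_attempt or not is_retryable(exc):
                raise
            # Delay doubles each attempt; jitter avoids synchronized retries.
            sleep(base_delay * (2 ** attempt) + random.uniform(0, base_delay))
    raise AssertionError("loop always returns or raises")
```

In practice `fn` would wrap the `client.messages.create(...)` call, and `is_retryable` would check for rate-limit or connection errors. For usage logging and cost attribution, the response's `usage` field (input and output token counts) can be recorded alongside a task label of your choosing.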

Tool-Enabled Workflows

Claude 4 Opus becomes more useful when it is attached to controlled tools instead of being asked to solve everything inside a single prompt.

Examples:

- Retrieval from internal documentation

- Safe code execution or validation layers

- Structured file ingestion

- Search over issue trackers or repositories

The key design rule is that tools should reduce hallucination and operator effort, not become an excuse to send every task to the most expensive model.
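
A controlled tool setup might look like the sketch below. The tool definition follows the `name` / `description` / `input_schema` shape used by the Messages API. The `search_docs` stub, the `HANDLERS` allowlist, and `run_tool` are hypothetical names for illustration.

```python
def search_docs(query: str) -> str:
    """Hypothetical retrieval stub; replace with a real search backend."""
    return f"(no results for {query!r} in this stub)"


# Tool definitions sent to the model with the request.
TOOLS = [
    {
        "name": "search_docs",
        "description": "Search internal documentation and return matching passages.",
        "input_schema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    }
]

# Allowlist mapping tool names to the code that is permitted to run.
HANDLERS = {"search_docs": search_docs}


def run_tool(name: str, tool_input: dict) -> str:
    """Dispatch a requested tool call only if the tool is on the allowlist."""
    handler = HANDLERS.get(name)
    if handler is None:
        return f"error: unknown tool {name!r}"
    return handler(**tool_input)


print(run_tool("search_docs", {"query": "rollback procedure"}))
```

The definitions would be passed as `tools=TOOLS` in the request. When the response contains a `tool_use` block, its name and input go through `run_tool`, and the result is returned to the model as a `tool_result` block. Keeping dispatch behind an explicit allowlist is what makes the tools "controlled".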

Where Opus Beats Sonnet

In many teams, the real decision is Opus versus Sonnet.

Opus usually wins when you need:

- Better reasoning under ambiguity

- More consistent handling of large, messy context

- Higher success rates on hard coding or analysis tasks

- Stronger performance on "review and improve" style prompts

Sonnet usually wins when you need:

- Better cost-efficiency

- Faster iteration

- More requests within the same budget

- A default model for broad production usage

That is why the best architecture is often "Sonnet first, Opus second" rather than choosing a single model for everything.

Common Deployment Patterns

Expert Escalation Layer

Most traffic goes to a cheaper model. A smaller percentage is promoted to Opus when:

- Confidence is low

- The task is unusually complex

- The user explicitly requests a deeper answer

- A first-pass result fails validation
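
The four promotion criteria above can be expressed as a predicate that also records why a request was escalated. This is a sketch under assumptions: the `Signals` fields, the score ranges, and the default thresholds are hypothetical and would need tuning against real traffic.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Hypothetical per-request signals gathered after the first pass."""
    confidence: float           # first-pass confidence score in [0, 1]
    complexity: float           # estimated task difficulty in [0, 1]
    user_requested_depth: bool  # user explicitly asked for a deeper answer
    passed_validation: bool     # first-pass output passed automated checks


def escalation_reasons(s: Signals, min_confidence: float = 0.7,
                       max_complexity: float = 0.8) -> list[str]:
    """Return the reasons to promote a request; an empty list means stay put."""
    reasons = []
    if s.confidence < min_confidence:
        reasons.append("low_confidence")
    if s.complexity > max_complexity:
        reasons.append("high_complexity")
    if s.user_requested_depth:
        reasons.append("user_requested")
    if not s.passed_validation:
        reasons.append("failed_validation")
    return reasons


print(escalation_reasons(Signals(0.55, 0.4, False, False)))
```

Logging the returned reasons with each promoted request makes it possible to see which criterion drives most of the Opus spend.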

Human-in-the-Loop Review

Opus is particularly effective when its output is reviewed by analysts, researchers, or senior developers before publication or release.

High-Value Internal Tools

If each successful answer saves real staff time or prevents expensive mistakes, Opus can be cost-effective even at a premium token price.

FAQ

Is Claude 4 Opus the best default API model?

Usually no. It is better treated as a premium model for the hardest tasks.

Should small teams start directly with Opus?

Only if the product depends on expert-level reasoning from day one. Otherwise start with Sonnet and add Opus selectively.

What is the safest way to access the Claude 4 Opus API?

Use official Anthropic access or official cloud-platform integrations that your organization already supports.

How do I reduce Opus cost without changing providers?

Use model routing, prompt caching, output caps, batch processing, and better context selection.

Conclusion

Claude 4 Opus is most valuable when it is used deliberately. Teams that treat it as a precision tool for hard tasks usually get the best results. Teams that treat it as a universal default usually get an unnecessarily large bill. The highest-signal implementation strategy is to keep access official, use Opus selectively, and measure cost against real workflow outcomes.